- OpenAI integrated Codex into the ChatGPT mobile app on May 14, 2026, available in preview on iOS and Android across all plan tiers.

- The mobile feature lets users monitor Codex live environments, review outputs, approve commands, change models, or start new tasks from a phone.

- The release follows OpenAI’s April rollout of background execution for Codex on desktop and, earlier in May, a Chrome extension that lets the agent operate inside live browser sessions.

- Anthropic released a similar feature called Remote Control for Claude Code in February 2026; the parallel pace reflects active competition between the two on agentic coding tools.

What Happened

OpenAI integrated its Codex coding agent into the ChatGPT mobile application on May 14, 2026, allowing developers to monitor and manage their development workflows from a phone, according to TechCrunch’s reporting by Lucas Ropek. The feature is available in preview to all ChatGPT plan tiers on iOS and Android. Codex keeps running on the user’s primary device; the phone serves as a control plane, not an execution environment.

Why It Matters

Codex was first launched as a desktop tool roughly a year ago, and the mobile integration is the third major expansion of its execution surface in two months. April brought a desktop background-execution capability that lets Codex carry out tasks autonomously. Earlier in May, OpenAI introduced a Chrome extension that allows the agent to work in live browser sessions. This week’s mobile launch extends that pattern to the devices developers carry with them.

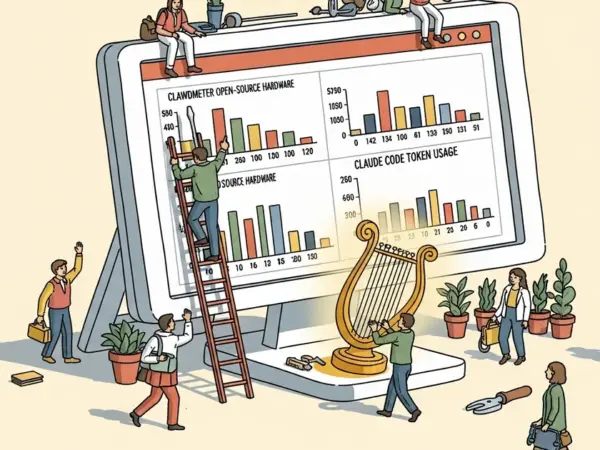

The expansion mirrors a parallel push by Anthropic, which released Remote Control for Claude Code in February 2026 to enable the same off-device monitoring pattern. TechCrunch framed the back-and-forth directly: “The flurry of feature releases from both OpenAI and Anthropic speaks to the tense competition between the two over whose agentic coding tool will become the most widely used. Over the past year, Anthropic’s Claude Code has gained in popularity among businesses and tech professionals alike, although both tools continue to be widely used.”

Technical Details

The mobile integration surfaces Codex live environments running on the user’s primary device, allowing a phone to act as a control plane rather than an execution environment. From the mobile interface, users can work across all of their threads, review agent outputs, approve or reject commands, change the underlying model the agent is using, or initiate new tasks. OpenAI stated in its launch announcement: “This is more than the ability to remotely control a single task or dispatch new tasks to your computer. From your phone, you can work across all of your threads, review outputs, approve commands, change models, or start something new.”

The feature is currently labeled a preview, indicating OpenAI plans further iteration before general availability. The architectural pattern (desktop execution, mobile control) follows a model first popularized by SSH and remote-desktop tools, extended here to stateful agentic execution rather than discrete commands.
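
As a rough illustration of the desktop-execution, mobile-control split, here is a minimal Python sketch. It is not OpenAI’s implementation or API: the `Host` and `Thread` classes, the message fields (`op`, `thread`, `model`), and the model names are all hypothetical, chosen only to show how a remote device can list threads, approve or reject a pending command, change a model, or start a task while all state and execution stay on the host.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Thread:
    """One agent thread; all of its state lives on the host (hypothetical model)."""
    model: str
    pending: Optional[str] = None          # command waiting for a decision
    log: list = field(default_factory=list)


class Host:
    """Execution side: the only place where commands would actually run."""

    def __init__(self):
        self.threads = {}

    def propose(self, thread_id, command):
        """The agent wants to run a command and waits for the controller's decision."""
        self.threads[thread_id].pending = command

    def handle(self, msg):
        """Apply one control message from the remote device and return a status dict."""
        op = msg["op"]
        if op == "list":
            return {tid: t.pending for tid, t in self.threads.items()}
        if op == "start":
            self.threads[msg["thread"]] = Thread(model=msg["model"])
            return {"ok": True}
        thread = self.threads[msg["thread"]]
        if op == "set_model":
            thread.model = msg["model"]
        elif op in ("approve", "reject"):
            if thread.pending is None:
                return {"ok": False, "error": "nothing pending"}
            verdict = "ran" if op == "approve" else "skipped"
            # Stand-in for real execution: this sketch only records the decision.
            thread.log.append(f"{verdict}: {thread.pending}")
            thread.pending = None
        else:
            return {"ok": False, "error": f"unknown op {op!r}"}
        return {"ok": True}


# The "phone" only sends small messages; it never executes anything itself.
host = Host()
host.handle({"op": "start", "thread": "t1", "model": "model-a"})
host.propose("t1", "pytest -q")
print(host.handle({"op": "list"}))                       # {'t1': 'pytest -q'}
host.handle({"op": "approve", "thread": "t1"})
host.handle({"op": "set_model", "thread": "t1", "model": "model-b"})
print(host.threads["t1"].log, host.threads["t1"].model)  # ['ran: pytest -q'] model-b
```

In this sketch the controller holds no thread state of its own, which is what distinguishes a control plane from a second execution environment: if the phone disconnects, the host’s threads and their pending approvals are unaffected.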

Who’s Affected

Existing Codex users on Plus, Pro, Team, and Enterprise plans gain the mobile control surface immediately. GitHub Copilot, Anthropic Claude Code, Cursor, Windsurf, and Cognition Labs’ Devin are the named or implicit competitors whose mobile and remote stories now have a sharper benchmark. Mobile-first developers in markets where laptops are less common, particularly in parts of Asia and Latin America, gain a more usable on-ramp to agent-assisted coding. Sea Limited, which OpenAI featured in a customer interview the same week and which OpenAI said has 87 percent weekly active Codex use across its engineering org, is the type of geographically distributed engineering organization most likely to benefit from mobile workflow monitoring.

What’s Next

The release pattern through 2026 (desktop background execution in April, browser extension earlier in May, mobile integration this week) suggests OpenAI is building toward an “execute anywhere, control anywhere” architecture that detaches the agent from any single host. Anthropic’s response to the mobile-control feature has not been publicly detailed beyond the existing Remote Control product. The next product expansion will likely target either a deeper IDE integration or a multi-agent coordination layer that lets users orchestrate parallel Codex agents from a single mobile thread.