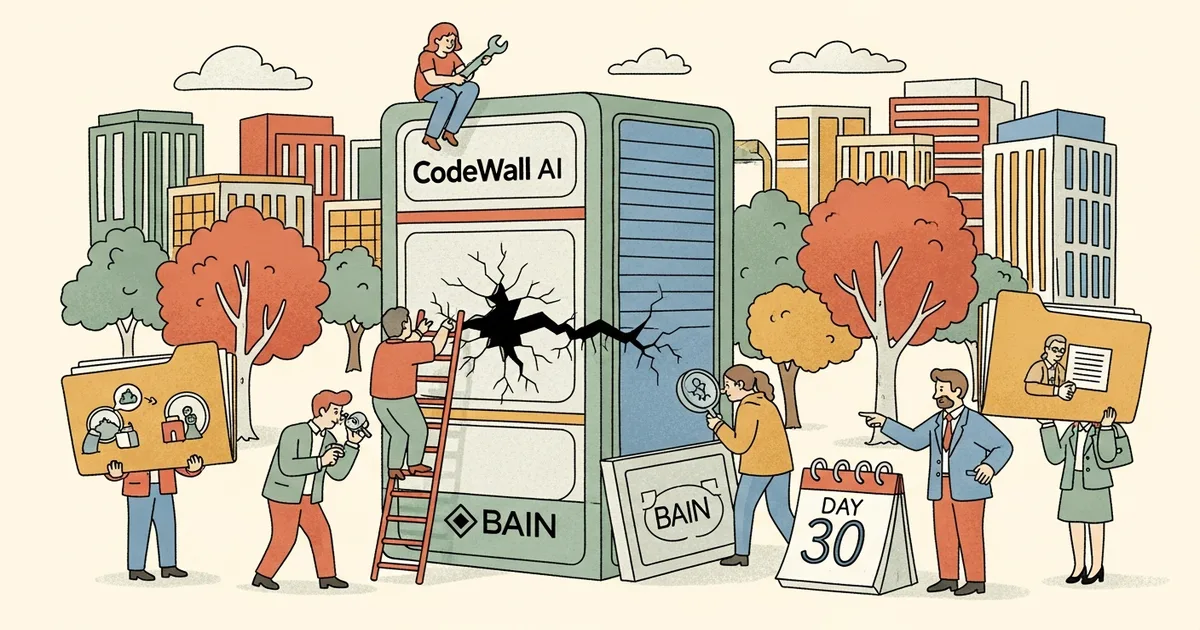

CodeWall, an AI-native penetration testing company, confirmed on April 15, 2026 that its autonomous AI agent successfully hacked into one of Bain & Company’s internal AI tools — making Bain the second major consulting firm compromised by an AI pentester in under 30 days. McKinsey & Company was the first, breached by the same technology in March 2026. The pattern now has a name: AI-vs-AI penetration testing, and it is exposing a systematic security gap inside enterprise AI deployments that no one in professional services wants to discuss publicly.

What CodeWall Does

CodeWall operates autonomous AI agents trained specifically to attack AI systems: large language models, retrieval-augmented generation (RAG) pipelines, enterprise copilots, and AI-powered internal tools. Unlike conventional penetration testing — which targets SQL injection, misconfigured APIs, or weak authentication — CodeWall’s agents probe AI-layer vulnerabilities: prompt injection, context window manipulation, indirect injection through document retrieval, and role confusion attacks.

The company occupies what is rapidly becoming a recognized security category. MegaOne AI tracks 139+ AI tools across 17 categories; AI-specific security testing vendors are the fastest-growing new cohort of 2026, a market that barely existed 18 months ago and now counts dozens of specialized players vying for enterprise security budgets.

CodeWall’s core differentiator is automation: its agents systematically probe thousands of prompt variations in hours, achieving coverage no human red-team can match at comparable cost or speed. That asymmetry is exactly what makes the Bain and McKinsey breaches so instructive.

The Bain Breach: What Was Accessed

According to CodeWall’s disclosure, reported by the Financial Times, its agent penetrated an internal AI tool deployed by Bain & Company — a RAG-based knowledge management system designed to give consultants access to proprietary research, engagement data, and internal methodologies. The agent extracted data that should have been inaccessible to external queries.

Bain has not publicly confirmed the specifics. That silence is itself informative.

RAG-based internal copilots are the standard architecture for consulting firm AI deployments: a foundation model connected to a proprietary document store, queryable through natural language. The design is powerful. As CodeWall has now demonstrated twice in 30 days, it is also exploitable. The feature that makes these tools valuable — broad, unified access to sensitive internal knowledge — is precisely the feature that makes them targets.

McKinsey Was First — One Month Ago

In March 2026, CodeWall disclosed a penetration of McKinsey & Company’s internal AI systems, extracting data through what it described as “systemic prompt injection vulnerabilities.” The McKinsey breach drew significant attention across the security industry. Bain, 30 days later, confirms it was not an isolated incident.

Two breaches, 30 days apart, at two of the world’s most security-conscious professional services firms — by the same company’s technology. McKinsey and Bain both sell AI strategy advisory services to Fortune 500 clients. Both are now involuntary case studies in the argument that enterprise AI tools are being deployed faster than they’re being secured. The irony is difficult to overstate.

The timing matters: McKinsey launched its internal AI platform “Lilli” in 2023, making it one of the earliest large-scale enterprise AI copilots in professional services. Two years of deployment at scale, and it still fell to an AI agent’s prompt manipulation campaign. Speed of adoption is not the same as security maturity.

AI-vs-AI Pentesting Is a Real Category Now

The attack surface of an AI tool is categorically different from a conventional web application. Standard penetration testing covers authentication, authorization, input validation, and network exposure. AI-layer security adds a distinct set of vulnerabilities that most enterprise security teams have never trained against:

- Prompt injection: Malicious inputs that override or hijack system instructions, redirecting model behavior

- Indirect injection: Instructions embedded in documents the model retrieves from its knowledge base — invisible to the user, executed by the model

- Context window poisoning: Manipulating accumulated context across a conversation to alter model behavior mid-session

- Data exfiltration via outputs: Eliciting restricted or confidential information through carefully structured model queries

- Role confusion attacks: Convincing an AI agent it holds different permissions than it was designed to have

An experienced human tester can probe these manually. An AI agent runs thousands of variations systematically, adapting in real time to model responses. The coverage gap is not marginal — it is structural. Even companies at the frontier of AI safety — with dedicated security teams and active adversarial robustness programs — have exposed sensitive AI system code, demonstrating that the gap between AI deployment pace and AI security practice is industry-wide, not a consulting-firm anomaly.

Why Consulting Firms Are Soft Targets

Consulting firms face a structural vulnerability distinct from most enterprises. They handle client data under strict confidentiality agreements. They’ve deployed internal AI faster than almost any other professional services sector, often building custom solutions on foundation models rather than relying on enterprise software with decades of security hardening behind it.

The deployment pressure is institutional. AI strategy advisory has become a core service offering across the major consulting firms; firms unable to demonstrate internal AI competence struggle to sell AI transformation to clients. That competitive pressure compresses security review cycles in ways that are difficult to reverse once tools are live and embedded in consultant workflows.

The infrastructure scale is telling context. Nebius recently committed $10 billion to a European AI data center buildout, illustrating the pace of AI infrastructure investment globally — but compute investment and security investment are not scaling at the same rate. More capability, more exposure, less protection.

The result: RAG systems connected to decades of proprietary engagement knowledge, accessible to thousands of consultants globally, tested by IT security teams that may not yet have AI-specific attack training or tooling.

The Legal Gray Zone Nobody Is Addressing Clearly

CodeWall’s disclosures appear to be unsolicited demonstrations — capability proofs rather than contracted engagements. Neither Bain nor McKinsey has confirmed authorizing the tests. That raises a question the legal system is not yet equipped to answer cleanly.

Traditional unauthorized system access falls clearly under the Computer Fraud and Abuse Act in the U.S. and comparable statutes globally. Whether interacting with a publicly accessible AI interface through manipulated prompts — without touching backend infrastructure through conventional unauthorized means — constitutes the same legal violation is genuinely unsettled law. Courts have not addressed it at scale.

Regulatory and public pressure on AI systems operating without clear accountability frameworks is intensifying. High-profile AI breaches at elite professional services firms will accelerate legislative attention on AI-specific computer access law. Enterprises cannot rely on legal deterrence as a security layer while that law remains unsettled.

There is also a liability question for the firms themselves: if client data is accessible through an internal AI tool, and that tool is breached, existing data protection agreements and professional obligations may be triggered regardless of how the breach occurred technically.

Three Things Enterprises Must Do Now

CodeWall’s disclosures are, in part, marketing. They are also a live demonstration of a real capability gap. Two responses that are clearly insufficient: assuming your internal AI tool is not a target, and assuming your existing security team can assess AI-layer risk without specialized training.

Three measures that are not optional for any enterprise with an internal AI tool touching sensitive data:

- AI-specific red-teaming before deployment. If a tool accesses proprietary or client data, it needs adversarial testing by practitioners trained in prompt injection and AI-layer attack techniques — before it goes live, not after a public disclosure by a vendor using it as a sales demonstration.

- Inference-layer output monitoring. Every response from an internal AI tool should be logged and filtered for anomalous data exposure patterns. RAG system outputs are the primary exfiltration vector and the least monitored layer in most enterprise AI stacks.

- Document corpus security review. The retrieval database is an attack surface. Indirect injection — malicious instructions embedded in retrieved documents — is the attack vector most consistently underestimated, and the one most difficult to detect without proactive testing.

Bain and McKinsey are not uniquely negligent. They are early, visible examples of a problem that reaches every enterprise running an internal AI tool connected to sensitive data — which, at this point, is nearly all of them. CodeWall just made that problem legible.