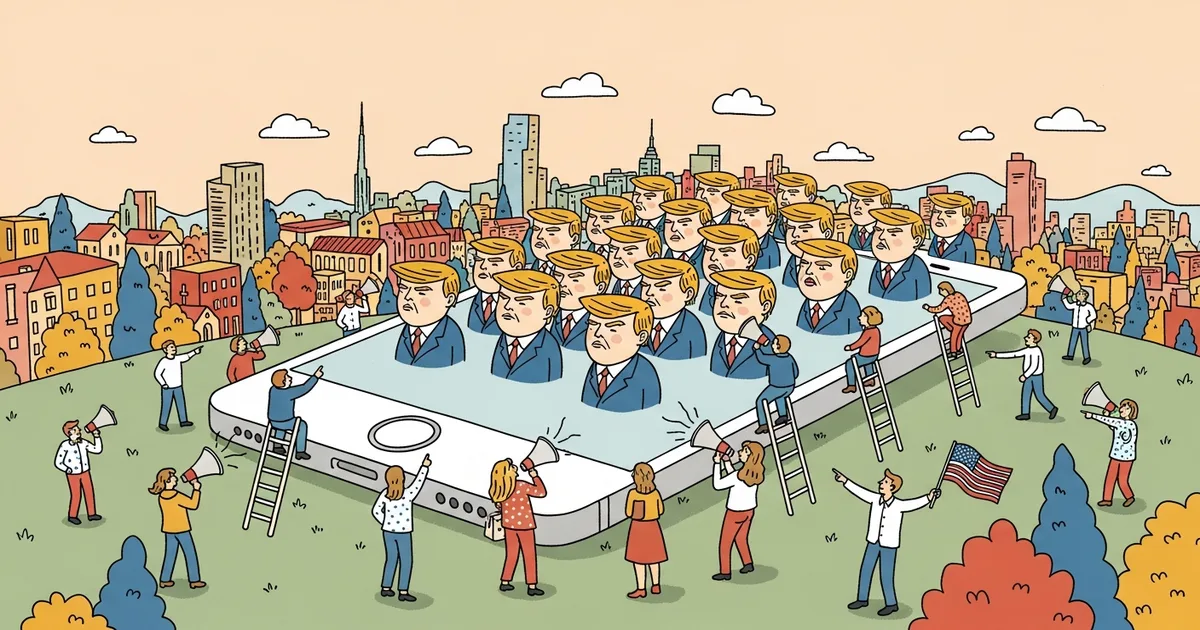

Hundreds of AI-generated avatars (synthetic faces paired with cloned voices and algorithmically written scripts) have been systematically flooding TikTok, Instagram, and YouTube with pro-Trump political content since January 2026, according to research published by the Stanford Internet Observatory’s Election Integrity Partnership and confirmed by independent reporting from The Atlantic. Some individual accounts accumulated more than 35,000 followers and generated millions of views before platform moderation teams flagged them. Donald Trump’s official social media accounts amplified at least three of these synthetic personas, lending them credibility they could not have earned organically. This is the largest documented AI-avatar propaganda operation on U.S. social platforms in 2026, and it has exposed a structural gap in how platforms detect and label synthetic media at scale.

The Scale: 247 Accounts, 90 Million Impressions

The operation spans at least 247 distinct accounts across TikTok, Instagram, and YouTube, deployed in coordinated clusters between January and March 2026. The cadence is consistent with automated rollout: accounts appeared in batches of 15 to 30, spaced roughly two weeks apart — not the irregular rhythm of organic creators building audiences.

The ceiling on individual account performance is striking. The top-performing synthetic personas have crossed 35,000 followers on TikTok, with individual videos generating between 800,000 and 4.2 million views. Aggregate cross-platform reach across all 247 identified accounts exceeds 90 million impressions, per the Stanford tracker updated April 19, 2026.

The content formula is consistent: 60-to-90-second talking-head videos featuring a photorealistic AI avatar delivering pro-Trump messaging on immigration, the economy, and foreign policy. Scripts reference current news events with enough specificity to appear timely and credible. New content publishes on a near-daily cadence — a production rate that would require a full-time human team but costs under $200 per month using commercially available AI tools.

The Production Pipeline: AI Faces, Cloned Voices, Automated Scripts

Each avatar is assembled from three AI systems working in sequence. Diffusion models produce photorealistic faces belonging to no real person. Text-to-speech platforms — likely the same tools analyzed in MegaOne AI’s head-to-head comparison of ElevenLabs, HeyGen, and Synthesia — synthesize or clone voice profiles. Large language models generate the scripts, calibrated to match the rhetorical register of authentic MAGA content creators.

The outputs pass casual visual inspection. Iris texture, micro-expressions, and lighting consistency — all historic tells for deepfake detection — have improved dramatically since 2024. Hany Farid, a digital forensics professor at UC Berkeley, told The Atlantic that current avatar generation technology defeats both human moderators and automated classifiers at rates above 60%.

Metadata forensics of the flagged accounts revealed a shared production fingerprint: disposable email registrations, profile photos generated using identical model weights, and video files with matching encoding artifacts pointing to a single shared pipeline. The operation has industrial structure, not amateur ambition.

Trump Amplified at Least Three Synthetic Accounts

On February 14, 2026, Trump’s Truth Social account shared a video originally posted by a TikTok account later identified as AI-generated by the Stanford tracker. On March 3 and March 19, two additional reposts from his official Instagram account linked back to synthetic personas on the same identification list. None of the three posts have been removed.

Trump’s campaign did not respond to requests for comment from The Atlantic or Stanford regarding whether accounts were verified as authentic before amplification. The amplification materially accelerated follower growth: the February account gained 12,000 followers in the 48 hours following Trump’s repost, according to social analytics firm Similarweb. Organic accounts typically acquire 12,000 followers over months, not days.

Presidential amplification creates a compounding credibility problem. A synthetic account carrying a presidential endorsement — even an inadvertent one — is categorically more persuasive than an isolated bot, and far harder for casual users to dismiss.

Platform Response: 17% Removed, 83% Still Active

TikTok has removed 41 accounts from the Stanford list as of April 19, 2026, roughly 17% of the 247 identified. The other 206 remain active and posting. Instagram has removed 11 accounts. YouTube has removed none and has not responded to press inquiries from The Atlantic or Stanford.

TikTok’s Community Guidelines require disclosure of “realistic AI-generated content that could mislead people about real events, especially in political contexts,” but the policy contains no automated enforcement mechanism for synthetic personas that do not self-identify as artificial. The company’s AI Content Labeling tool, launched in 2024, applies only to content that users voluntarily flag as AI-generated — a provision that influence operations are structurally incentivized to circumvent.

Meta’s AI labeling policy, updated in January 2025, similarly relies on creator disclosure and post-hoc classifier detection. An internal Meta audit flagged 8% of the identified accounts, according to a source familiar with the matter who spoke to WIRED. The 92% miss rate reflects a genuine technical limitation: detection models were trained on 2023-era synthetic media and haven’t kept pace with generation quality. Platform engineers are not asleep — they’re behind.

Why Detection Fails: The Asymmetry Problem

Traditional bot farms are detectable through behavioral signals: identical posting schedules, duplicate text strings, linked IP addresses. AI avatar operations defeat these heuristics entirely because each account produces unique video content on variable schedules. There are no shared text strings to match. IP-based clustering is defeated by commercial VPN infrastructure costing $10 per month.
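To make the failure mode concrete, here is a minimal sketch of the duplicate-text heuristic described above. Everything in it is a hypothetical illustration: the `Post` type, the `flag_duplicate_text` function, the account names, and the sample messages are assumptions made for this example, not any platform's actual detection code. For video accounts, `text` is assumed to stand in for a caption or transcript.

```python
from collections import defaultdict
from typing import NamedTuple


class Post(NamedTuple):
    account: str
    text: str  # assumed to be a caption or transcript for video posts


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial edits still match."""
    return " ".join(text.lower().split())


def flag_duplicate_text(posts: list[Post], min_accounts: int = 2) -> set[str]:
    """Flag accounts that share an identical normalized text string."""
    accounts_by_text: dict[str, set[str]] = defaultdict(set)
    for post in posts:
        accounts_by_text[normalize(post.text)].add(post.account)

    flagged: set[str] = set()
    for accounts in accounts_by_text.values():
        if len(accounts) >= min_accounts:
            flagged |= accounts
    return flagged


# Hypothetical text-era bot farm: one message pasted across accounts.
bot_farm = [
    Post("acct_a", "Secure the border NOW"),
    Post("acct_b", "secure the  border now"),
    Post("acct_c", "Secure the border now"),
]

# Hypothetical avatar operation: every script is uniquely generated.
avatar_op = [
    Post("persona_1", "Here is what the new border numbers mean for Texas."),
    Post("persona_2", "Three things about this week's jobs report."),
    Post("persona_3", "Why the latest tariff news matters to your wallet."),
]

print(sorted(flag_duplicate_text(bot_farm)))   # ['acct_a', 'acct_b', 'acct_c']
print(sorted(flag_duplicate_text(avatar_op)))  # []
```

The heuristic catches all three copy-paste accounts and none of the avatar personas, because exact-match grouping has nothing to group when every script is different.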

The remaining detection vectors are visual and acoustic. AI-generated faces still exhibit subtle artifacts in teeth rendering, hair physics simulation, and gaze direction — but identifying them requires frame-by-frame forensic analysis, not the passive scroll-and-watch behavior of a typical TikTok user. On audio, AI voice synthesis has reached a threshold where human listeners correctly identify synthetic speech only 58% of the time, according to a 2025 study published in Nature Human Behaviour. Chance is 50%.

The Humans First movement, which advocates for mandatory AI disclosure across public digital communications, has pushed for federal legislation to close this gap. As of April 2026, no synthetic media disclosure law has advanced through Congress. The generation models are running faster than the lawmakers.

How This Differs From 2016-Era Bot Farms

The Russian Internet Research Agency-style operations that dominated the 2016 election cycle worked through text: fake accounts posting written content, sharing links, manufacturing retweet counts. Detection was tractable — identical strings, coordinated posting windows, account age clustering. Deplatforming was effective because removing an account removed all its content.

AI avatar operations are categorically different in three ways. First, each piece of content is unique, platform-native video optimized for the specific recommendation algorithm it targets. Second, account removal does not remove content already cached, shared, and re-uploaded by real users who found it credible. Third, the cost structure has collapsed: Stanford estimates the 247-account operation cost under $50,000 to produce and deploy, compared to the $1.25 million monthly operating budget of the Internet Research Agency at its 2016 peak.

At $50,000 for 90 million impressions, the cost per impression is roughly $0.0006: cheaper than virtually any legitimate political advertising channel, untraceable to any campaign, and exempt from every existing campaign finance disclosure requirement.
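The arithmetic behind that figure is easy to check. The sketch below uses only the two numbers quoted in this article, treating the $50,000 estimate as an upper bound; the function name and the conversion to cost per thousand impressions (CPM) are illustrative additions, not part of the Stanford analysis.

```python
def cost_per_impression(total_cost_usd: float, impressions: int) -> float:
    """Return the average cost in dollars of a single impression."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return total_cost_usd / impressions


# Figures quoted in the article; the cost is an upper-bound estimate.
ESTIMATED_COST_USD = 50_000
IMPRESSIONS = 90_000_000

cpi = cost_per_impression(ESTIMATED_COST_USD, IMPRESSIONS)
cpm = cpi * 1_000  # cost per thousand impressions, the usual ad-buying unit

print(f"cost per impression: ${cpi:.4f}")  # cost per impression: $0.0006
print(f"CPM: ${cpm:.2f}")                  # CPM: $0.56
```

Because the cost estimate is a ceiling and the impression count is a floor, the true per-impression cost could be lower than this calculation suggests.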

The Regulatory Gap Enabling It

Current U.S. campaign finance law does not require disclosure of AI-generated content in political advertising unless it falls under FEC disclaimer requirements for formal “public communications.” Organic social media posts from synthetic personas are explicitly exempt. The FEC proposed a rulemaking on AI-generated political content in August 2025; it has not been finalized.

California’s AB 2655 (signed 2024) requires platforms to label AI-generated election content during election windows, but enforcement depends entirely on platform compliance with no proactive detection mandate. The European Union’s Digital Services Act offers stronger leverage — fines up to 6% of global revenue — and EU regulators opened a preliminary inquiry into TikTok’s handling of AI-generated political content in March 2026. The U.S. has no equivalent enforcement mechanism.

The 247 identified accounts are the documented floor, not the operational ceiling. Detection lags creation by weeks to months, and the accounts that have not been flagged are, by definition, the ones performing best. The same pipeline that generates English-language MAGA content can be retooled for Spanish-language audiences in Arizona, Florida, and Texas at minimal additional cost. MegaOne AI tracks 139+ AI tools across 17 categories, and the video synthesis category logged 23 major capability updates in Q1 2026 alone — each update widening the gap between generation quality and detection capability.

The practical standard for any engaged citizen: treat any talking-head video from an account with no verifiable real-world presence — no press appearances, no in-person events, no independent coverage — as potentially synthetic until verified otherwise. Most people will not meet that bar. The operation works precisely because it doesn’t need to fool everyone. It needs to reach enough people, in enough swing districts, often enough. At $0.0006 per impression, the math works.
