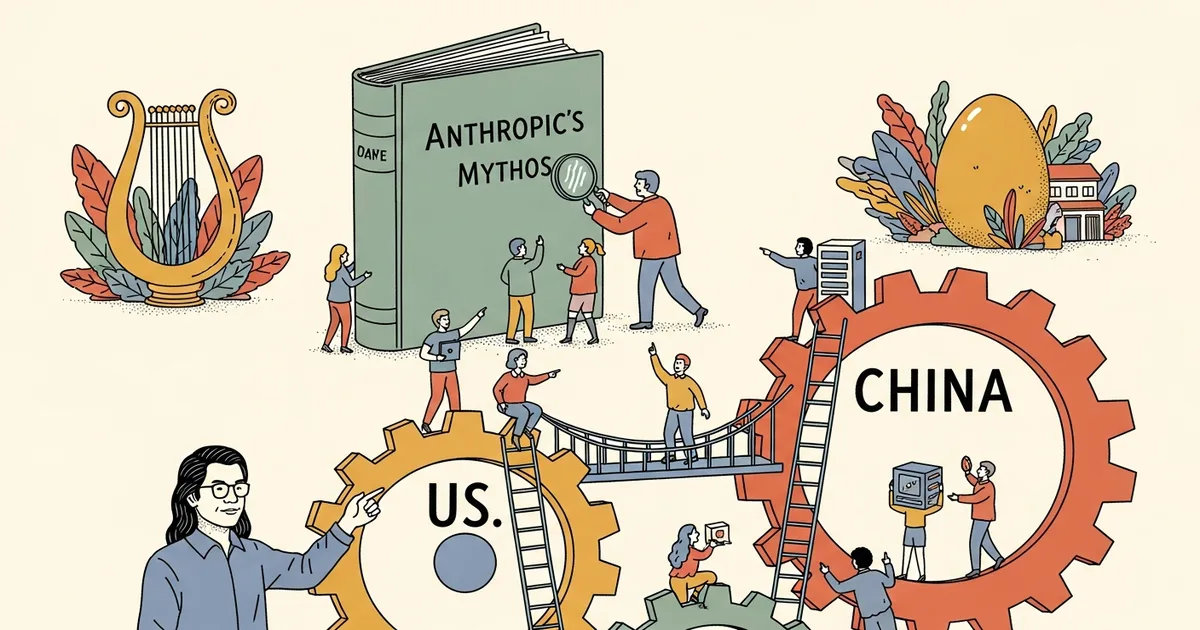

- Nvidia CEO Jensen Huang said Anthropic’s Mythos development demonstrates why the US and China need formal AI safety dialogue.

- Huang argued researchers from both countries should cooperate to establish shared norms for deploying increasingly powerful AI systems.

- The comments come as Nvidia faces ongoing US export restrictions on advanced chips destined for China, which have materially reduced the company’s addressable market there.

- No formal US-China bilateral AI safety framework currently exists, though both governments have participated in separate multilateral AI governance discussions.

What Happened

Nvidia Chief Executive Officer Jensen Huang, speaking publicly on April 15, 2026, said that Anthropic’s Mythos advance illustrates why the United States and China must pursue direct dialogue on artificial intelligence safety, according to a Bloomberg report. Huang said AI researchers in the world’s two largest economies should work together to agree on how to safely use technology that is growing more capable. The remarks mark a notable instance of a major US semiconductor executive framing a specific AI capability milestone as a catalyst for diplomatic engagement.

Why It Matters

Huang’s call for dialogue arrives against a backdrop of deepening technological competition between Washington and Beijing. The US Commerce Department has restricted exports of Nvidia’s most advanced data center chips — including the H100 and the China-specific H20 — to Chinese customers, measures that have directly curtailed Nvidia’s revenue in one of its historically largest markets. At the same time, China has accelerated domestic AI development, with labs such as DeepSeek producing models that have drawn international attention for their performance relative to training cost. No standing bilateral AI safety mechanism between the US and China currently exists, though both nations participated separately in discussions at the 2023 UK AI Safety Summit at Bletchley Park.

Technical Details

Bloomberg’s report describes Anthropic’s Mythos as a breakthrough development, though the full primary article was not accessible for independent technical verification at publication time. Huang’s framing suggests he views Mythos as a capability threshold significant enough to shift the policy calculus around AI governance — a posture consistent with how safety-focused AI labs, including Anthropic itself, have characterized frontier model development. Anthropic, founded in 2021 by former OpenAI researchers including Dario Amodei and Daniela Amodei, has previously published safety-oriented research under its Responsible Scaling Policy, which ties deployment decisions to measured capability evaluations. Whether Mythos crosses any of those internal thresholds was not confirmed in available sourcing.

Who’s Affected

Nvidia has a direct commercial stake in the outcome of US-China technology policy: the company has estimated that US chip export controls have cost it tens of billions of dollars in potential China sales. Huang’s comments are unlikely to be read as politically neutral given that context. For Anthropic, whose Mythos work is cited as the trigger for Huang’s remarks, the attention elevates the company’s research profile in a geopolitically charged setting. AI safety researchers and policymakers in both countries who have argued for technical exchanges on frontier AI risks may find Huang’s statements useful as a signal that industry leadership is willing to support such dialogue, though concrete mechanisms remain absent.

What’s Next

It is not clear whether Huang’s remarks will prompt any formal diplomatic response from Washington or Beijing, or whether they reflect coordinated messaging with Anthropic. The Biden and Trump administrations have both maintained the chip export restrictions Nvidia has publicly criticized, and no senior US official has publicly endorsed a bilateral AI safety forum with China as of this report. Anthropic has not issued a public statement connecting Mythos to geopolitical AI governance discussions, according to sourcing available at the time of publication.