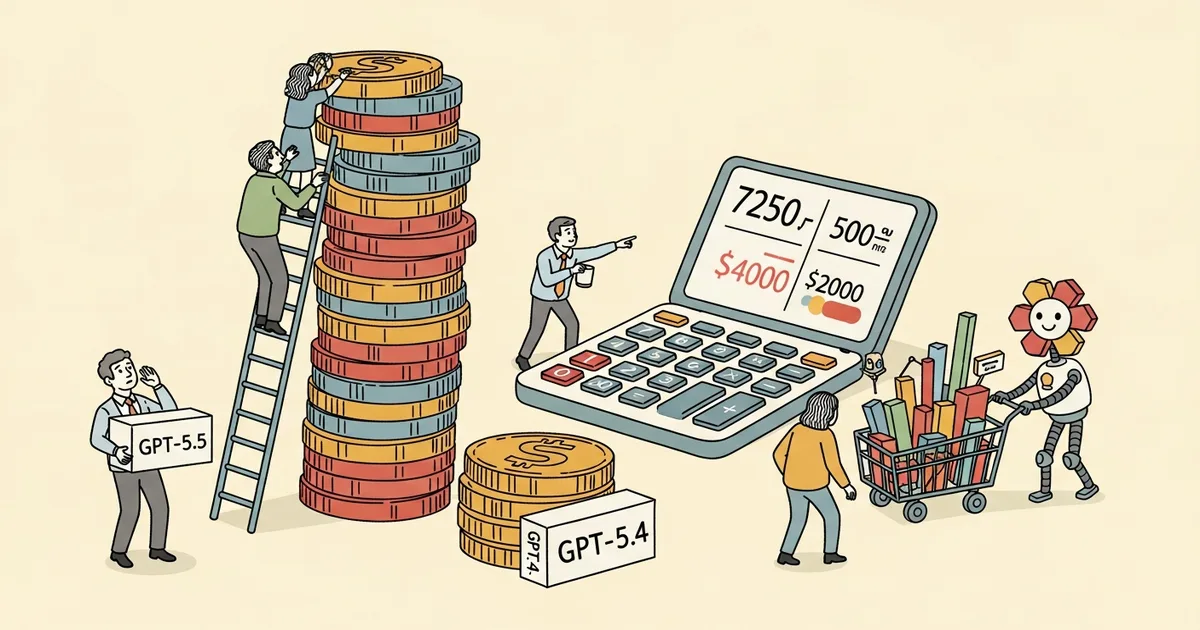

- GPT-5.5’s list price doubled vs GPT-5.4: $5/M input + $30/M output, up from $2.50/$15 — but real-world costs from OpenRouter’s April 2026 usage logs rose 49-92% depending on input length.

- For inputs under 2,000 tokens, response length barely changes, nearly doubling effective costs (+92% per million tokens consumed).

- For inputs over 10K tokens, responses are 19-34% shorter, partially offsetting the list-price doubling; in the 10K-25K range, costs rise only 51%.

- Anthropic’s Claude Opus 4.7 followed a similar pattern: a 30-40% increase in effective cost, driven by higher token consumption rather than direct list-price changes.

What Happened

OpenRouter usage data from April 2026 shows GPT-5.5 real-world costs rose 49 to 92 percent compared to GPT-5.4, depending on input length. The per-token list price doubled — input tokens at $5 per million and output at $30 per million, up from $2.50 and $15 — but the effective cost increase varies because GPT-5.5 produces shorter responses for some input lengths and longer for others.

Why It Matters

Pricing is the most consequential commercial axis for frontier-model adoption. OpenAI’s framing on the GPT-5.5 launch (covered May 5) emphasized factuality improvements and “super app” positioning. The OpenRouter analysis shifts the framing: the headline list-price doubling is real, but real-world cost depends on workload mix. Short-prompt workloads see the worst cost increase (+92%); inputs in the 10K-25K and 50K-128K ranges see the smallest (+51% and +49%), although the 128K+ tier climbs back to +85%. For developers using GPT-5.5 in interactive chat applications (typically short prompts), the effective cost is nearly double. For developers using it in long-document analysis or agentic workflows (long context up to 128K tokens), the cost increase is more manageable.

Technical Details

OpenRouter’s per-input-length cost analysis (average $/M tokens consumed):

- <2K tokens: $4.89 → $9.37 (+92%)

- 2K-10K tokens: $2.25 → $3.81 (+69%)

- 10K-25K tokens: $1.42 → $2.15 (+51%)

- 25K-50K tokens: $1.02 → $1.65 (+62%)

- 50K-128K tokens: $0.74 → $1.10 (+49%)

- 128K+ tokens: $0.71 → $1.31 (+85%)
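As a rough check on the figures above, the short Python sketch below recomputes each bucket's percentage increase and then estimates the increase for a mixed workload. The `chat_heavy` token shares are a made-up illustration, not OpenRouter data, and the function names are placeholders.

```python
# Average cost in $ per million tokens consumed, (GPT-5.4, GPT-5.5),
# copied from the per-input-length list above.
BUCKETS = {
    "<2K": (4.89, 9.37),
    "2K-10K": (2.25, 3.81),
    "10K-25K": (1.42, 2.15),
    "25K-50K": (1.02, 1.65),
    "50K-128K": (0.74, 1.10),
    "128K+": (0.71, 1.31),
}


def pct_increase(old, new):
    """Percentage change from old to new."""
    return (new / old - 1.0) * 100.0


def workload_increase(token_share):
    """Cost increase for a workload, given each bucket's share of total
    tokens consumed (shares are normalized, so they need not sum to 1)."""
    total = sum(token_share.values())
    old = sum(BUCKETS[b][0] * s for b, s in token_share.items()) / total
    new = sum(BUCKETS[b][1] * s for b, s in token_share.items()) / total
    return pct_increase(old, new)


if __name__ == "__main__":
    for name, (old, new) in BUCKETS.items():
        print(f"{name:>9}: {pct_increase(old, new):+.0f}%")

    # Hypothetical mix: 70% of tokens in short prompts, 30% in 2K-10K prompts.
    chat_heavy = {"<2K": 0.7, "2K-10K": 0.3}
    print(f"chat-heavy mix: {workload_increase(chat_heavy):+.0f}%")
```

Because the bucket figures are already expressed per million tokens consumed, weighting by each bucket's share of tokens (rather than share of requests) gives the workload-level cost change.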

The structural explanation: for inputs over 10,000 tokens, GPT-5.5 responses are 19 to 34 percent shorter, easing costs somewhat. In the 2,000-10,000 token range, responses run 52 percent longer. For short inputs under 2,000 tokens, response length barely changes, so the doubled list price flows through directly. The 128K+ tier is the exception among long inputs: its +85% increase is close to the short-prompt figure, reportedly because responses at that context length tend toward verbose synthesis output.
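The mechanism can be illustrated with a minimal Python sketch that uses only the two list prices. The token counts are hypothetical. The model also ignores everything beyond list prices and response length, such as discounts or shifts in prompt mix, so it shows the direction of the effect and does not reproduce OpenRouter's bucket figures.

```python
# List prices in $ per million tokens: (input, output).
LIST_PRICES = {
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.5": (5.00, 30.00),
}


def cost_per_million(model, input_tokens, output_tokens):
    """Blended $ per million tokens consumed for a single request."""
    p_in, p_out = LIST_PRICES[model]
    dollars = (input_tokens * p_in + output_tokens * p_out) / 1_000_000
    return dollars / (input_tokens + output_tokens) * 1_000_000


def increase_pct(input_tokens, old_output_tokens, length_change):
    """Percent change in blended cost moving from GPT-5.4 to GPT-5.5 when the
    response length changes by `length_change` (e.g. -0.30 = 30% shorter)."""
    new_output_tokens = old_output_tokens * (1.0 + length_change)
    old = cost_per_million("gpt-5.4", input_tokens, old_output_tokens)
    new = cost_per_million("gpt-5.5", input_tokens, new_output_tokens)
    return (new / old - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical request shapes, chosen only to illustrate each case.
    print(f"same length:  {increase_pct(1_000, 500, 0.0):+.0f}%")
    print(f"30% shorter:  {increase_pct(15_000, 1_000, -0.30):+.0f}%")
    print(f"52% longer:   {increase_pct(5_000, 1_000, 0.52):+.0f}%")
```

With an unchanged response length, the blended rate exactly doubles. A shorter response shifts the token mix toward cheaper input tokens, so the blended rate rises by less than 100 percent, and a longer response pushes it above 100 percent.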

The Artificial Analysis context: its separate analysis previously found only a 20 percent cost increase for GPT-5.5, but that study ran benchmark suites rather than real-world tasks. The discrepancy between Artificial Analysis (+20%) and OpenRouter (+49% to +92%) illustrates the difference between standardized benchmark inputs and real-world prompt distributions.

The Anthropic parallel: effective costs for Claude Opus 4.7 rose 30 to 40 percent, driven by higher token consumption rather than direct list-price changes. With both OpenAI and Anthropic heading toward IPOs (OpenAI rumored for late 2026, Anthropic on a similar timeline), prices are likely to keep climbing as both companies optimize for revenue ahead of public-market scrutiny.

Who’s Affected

Developers building consumer-chat applications on GPT-5.5 see the worst cost increase (+92% for short prompts): Cursor, ChatGPT-style consumer products, and customer-support agents fall in this category. Developers using GPT-5.5 for long-context analysis (legal review, codebase analysis, research synthesis) see more manageable increases of +49% to +62% for inputs between 10K and 128K tokens. The DeepClaude routing tool (covered May 4), which lets Claude Code run on DeepSeek V4 Pro at $0.87/M output tokens (about 34x below GPT-5.5’s $30/M output list price, and about 17x below GPT-5.4’s $15/M), gains a much sharper economic argument as the GPT-5.5 cost increase compounds. The Chinese open-weight cohort (DeepSeek, Moonshot Kimi, Xiaomi MiMo) gains commercial leverage on price-per-task workflows. OpenRouter itself benefits from increased usage as developers route around the most expensive paths.

What’s Next

Watch for OpenAI to respond either by clarifying the response-length variation or by introducing pricing tiers that smooth the short-prompt penalty. Anthropic’s Opus 4.7 pricing is likely to face similar OpenRouter analysis. The broader trend — frontier-lab IPO trajectories driving sustained pricing increases — implies that price-per-task economics for AI deployment will continue to favor the cheapest tier (GPT-5.4 Nano, Gemini 3.1 Flash-Lite, Claude Haiku) and open-weight options (DeepSeek V4 Flash, Llama-based) over the frontier tier.