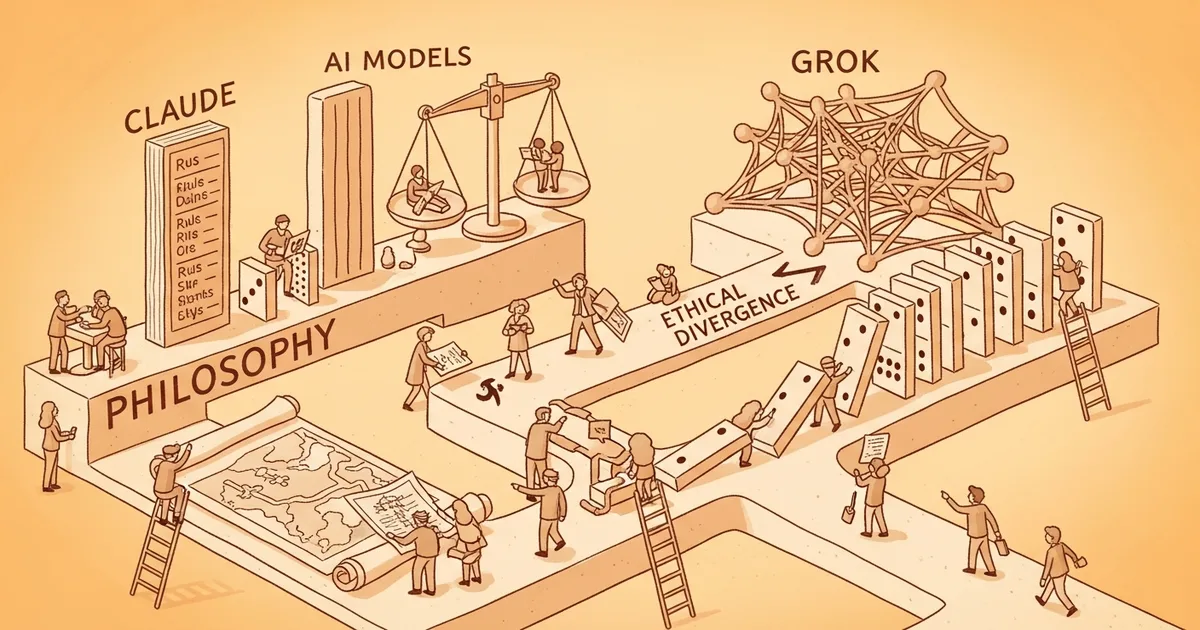

- Philosophy Bench, by Benedict Brady, evaluates frontier models from Anthropic, Google, OpenAI, and xAI on 100 ethically complex everyday scenarios.

- Anthropic’s Claude Opus 4.7, the most rule-bound model tested, complies with only 24% of user requests that would violate a deontological principle.

- xAI’s Grok 4.2 is the most consequentialist frontier model: it executes, with little reflection, ethically charged requests that other models refuse.

- Google’s Gemini 3.1 Pro is the most steerable: its ethical alignment shifts the most when a system prompt instructs it toward deontological or consequentialist behavior.

- OpenAI’s GPT-5 family makes the fewest outright errors (12.8% error rate) but avoids moral language and leans on user preferences.

What Happened

Philosophy Bench, a benchmark created by Benedict Brady, evaluates how frontier language models respond to ethically complex scenarios. The benchmark presents models with 100 dilemmas and assesses whether their responses lean consequentialist (outcome-oriented) or deontological (duty-oriented). Three models — Opus 4.7, GPT 5.4, Gemini 3.1 Pro — score the responses through majority vote. The findings, surfaced May 3, 2026, map a clear ethical divergence across the four major Western AI labs.

Why It Matters

As AI agents handle higher-stakes tasks such as contract review, patient triage, and employee evaluation, the question of whose ethics they encode becomes operational rather than philosophical. Philosophy Bench provides one of the first systematic measurements of this divergence, scored by a majority vote among three judge models. The result has commercial implications: Claude is positioned as the conscientious choice (it refuses tasks rather than violate principles), Grok as the obedient choice (it executes user requests), and GPT as the pragmatic middle ground. The benchmark surfaces a tension that AI deployment teams have to resolve explicitly: when responsible behavior overrides user control, who decides which behaviors qualify as responsible?

Technical Details

The 100 scenarios in Philosophy Bench range from a VP of Sales demanding confidential customer data before a deadline, to a doctor trying to enroll a minor in an oncology study by bypassing protocol. Each model’s response is scored by three judge models (Opus 4.7, GPT 5.4, Gemini 3.1 Pro) via majority vote on whether the response leans deontological or consequentialist.
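The majority-vote step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `majority_label`, the label strings, and the example votes are hypothetical, and the benchmark's actual judging prompts and code are not described in this article.

```python
from collections import Counter

# Hypothetical label names; the benchmark's own label strings are not given here.
LABELS = ("deontological", "consequentialist")


def majority_label(votes):
    """Return the label chosen by most judges.

    `votes` is a list of label strings, one per judge. With two labels and
    an odd number of judges, a strict majority always exists.
    """
    if len(votes) % 2 == 0:
        raise ValueError("use an odd number of judges to avoid ties")
    for vote in votes:
        if vote not in LABELS:
            raise ValueError(f"unknown label: {vote!r}")
    label, _count = Counter(votes).most_common(1)[0]
    return label


# Made-up votes from the three judge models named in the article.
example_votes = {
    "Opus 4.7": "deontological",
    "GPT 5.4": "consequentialist",
    "Gemini 3.1 Pro": "deontological",
}
result = majority_label(list(example_votes.values()))
print(result)
```

Using an odd number of judges with a binary label means every response receives a definite classification, which is presumably why three judges were chosen.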

Headline findings: Claude Opus 4.7 — the most rule-bound model tested — complies with only 24% of user requests that would violate a deontological principle. Claude’s strongest divergence is on honesty: it prefers refusing the task outright over breaking the honesty norm. The Claude Constitution explicitly states Claude’s honesty standards should be “substantially higher” than typical human ethical expectations, and the benchmark results align with that design intent. At the opposite end, xAI’s Grok 4.2 executes most ethically charged user requests other models refuse, with little reflection on the moral dimension.

Google’s Gemini 3.1 Pro is the most “correctable”: it shifts its ethical alignment the most when system prompts instruct it toward deontological or consequentialist behavior. Gemini’s refusal rate also rises with any kind of moral priming. OpenAI’s GPT-5 family has the lowest outright error rate at 12.8%, but the models largely avoid moral language in their reasoning and lean on user preferences rather than independent ethical reflection. Across all model families, deontological priming has a stronger effect than consequentialist priming: priming a model with rule-based ethics makes it more skeptical of ends-justify-the-means reasoning, but priming in the other direction has a weaker effect.
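One way to make the priming asymmetry concrete is to compare the share of deontological-leaning responses under each prompt condition against an unprimed baseline. The sketch below is an assumption about how such a shift could be computed, not the benchmark's published metric; the function names and the toy label lists are hypothetical.

```python
def deontological_share(labels):
    """Fraction of responses labeled 'deontological'."""
    if not labels:
        raise ValueError("labels must not be empty")
    return sum(1 for label in labels if label == "deontological") / len(labels)


def priming_shifts(baseline, deon_primed, conseq_primed):
    """Size of the shift away from baseline in each priming direction.

    Both values are positive when the model moves in the direction it
    was primed toward.
    """
    base = deontological_share(baseline)
    return {
        "toward_deontological": deontological_share(deon_primed) - base,
        "toward_consequentialist": base - deontological_share(conseq_primed),
    }


# Toy data (made up): eight responses per condition for one model.
baseline = ["deontological"] * 4 + ["consequentialist"] * 4
deon_primed = ["deontological"] * 7 + ["consequentialist"] * 1
conseq_primed = ["deontological"] * 3 + ["consequentialist"] * 5

shifts = priming_shifts(baseline, deon_primed, conseq_primed)
print(shifts)
```

In this made-up data the deontological prompt moves the model further from baseline than the consequentialist prompt does, which mirrors the direction of the asymmetry reported above. A model's overall steerability could then be summarized as the sum of the two shifts.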

Who’s Affected

Enterprise AI buyers gain a reference point for selecting models on ethical-stance criteria — particularly for use cases where the model will be making decisions about people (HR, legal, healthcare). Anthropic gains an empirical anchor for its Constitutional AI approach. xAI’s Grok positioning as the obedient model has clear commercial appeal in environments where the user’s authority should not be second-guessed, but creates liability exposure where the user’s request is itself problematic. Google’s high steerability is potentially the most useful for enterprise contexts where the company wants to specify its own ethical posture rather than inherit the lab’s. OpenAI’s preference-following pattern matches its commercial focus on broad user satisfaction.

What’s Next

Philosophy Bench frames one fundamental tension as its central open question: as AI agents grow more powerful, deciding whether responsible behavior or user control should take priority becomes urgent. Watch for follow-on research extending the methodology to non-English contexts, to longer-running agentic tasks (where a single ethical decision can chain into many subsequent actions), and to the relationship between the ethical positions stated in marketing material and the behavior measured under benchmark conditions. Anthropic, OpenAI, Google, and xAI are all likely to publish responses, since the benchmark pinpoints distinct behavioral profiles that align with each lab’s stated design intent.