A comprehensive technical document shared on r/MachineLearning on March 22 provides a detailed framework for designing AI chip software and hardware from the ground up. The author, who previously worked on Google’s Tensor Processing Units (TPUs) and NVIDIA GPU performance characterization, maps the complete design space from workload analysis through architecture selection to compiler and runtime implementation.

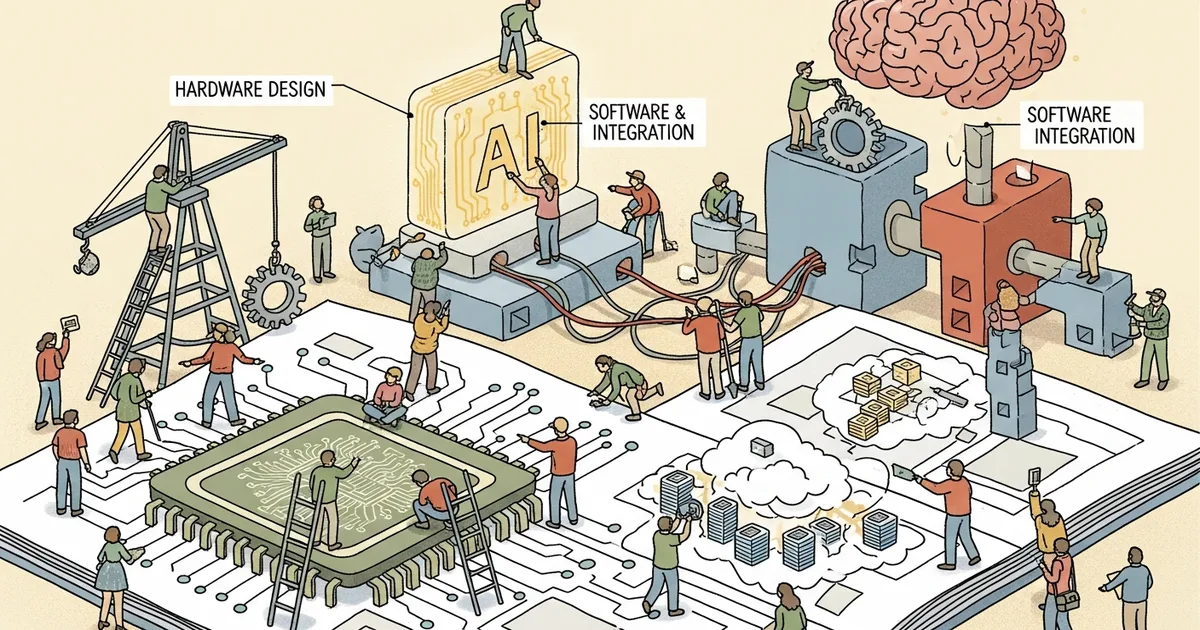

The document addresses a gap in publicly available knowledge about AI chip design. Individual components such as attention mechanisms, quantization schemes, and memory hierarchies are well documented in isolation, but the interactions between hardware architecture decisions and software stack design are rarely discussed in a unified framework. The document connects these layers, showing how choices at the algorithm level constrain hardware design and vice versa.

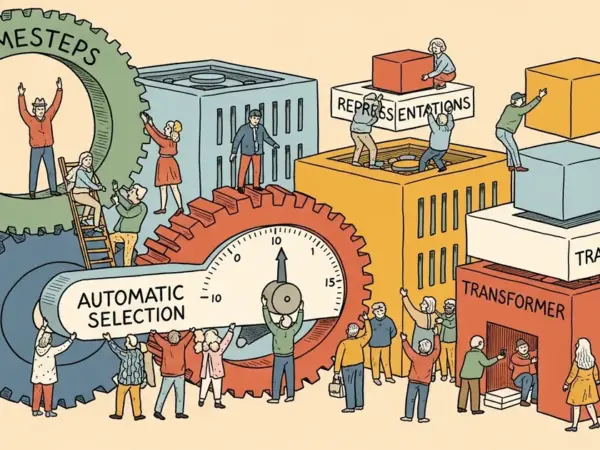

Key technical contributions include analysis of memory bandwidth bottlenecks across different AI workloads, comparison of dataflow architectures for transformer inference versus training, guidelines for mapping operator graphs to custom silicon, and practical considerations for compiler design that targets non-standard architectures. The author draws on experience with both Google’s and NVIDIA’s approaches to illustrate tradeoffs between programmability and efficiency.
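To make the memory bandwidth point concrete, here is a minimal roofline-style sketch. It is not taken from the document. The chip figures (100 TFLOP/s peak, 1 TB/s memory bandwidth) and the names `Chip`, `matmul_intensity`, `attainable_flops`, and `is_memory_bound` are hypothetical, chosen only for illustration. It assumes each matrix is moved to or from memory exactly once, which ignores caching and tiling effects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    """Hypothetical accelerator; the numbers used below are illustrative, not real specs."""
    peak_flops: float      # peak compute throughput, FLOP/s
    mem_bandwidth: float   # off-chip memory bandwidth, bytes/s


def matmul_intensity(m: int, n: int, k: int, bytes_per_elem: int = 2) -> float:
    """Arithmetic intensity (FLOP per byte) of an (m x k) @ (k x n) matmul.

    Assumes both inputs are read once and the output is written once.
    """
    flops = 2 * m * n * k
    bytes_moved = bytes_per_elem * (m * k + k * n + m * n)
    return flops / bytes_moved


def attainable_flops(chip: Chip, intensity: float) -> float:
    """Roofline bound: the lower of the compute roof and the bandwidth roof."""
    return min(chip.peak_flops, chip.mem_bandwidth * intensity)


def is_memory_bound(chip: Chip, intensity: float) -> bool:
    """True when intensity falls below the chip's ridge point."""
    return intensity < chip.peak_flops / chip.mem_bandwidth


if __name__ == "__main__":
    chip = Chip(peak_flops=100e12, mem_bandwidth=1e12)  # assumed values
    shapes = {
        "decode-like (batch 1)": (1, 4096, 4096),
        "training-like (batch 4096)": (4096, 4096, 4096),
    }
    for name, (m, n, k) in shapes.items():
        ai = matmul_intensity(m, n, k)
        bound = "memory" if is_memory_bound(chip, ai) else "compute"
        print(f"{name}: {ai:.1f} FLOP/byte, {bound}-bound, "
              f"{attainable_flops(chip, ai) / 1e12:.1f} TFLOP/s attainable")
```

Under these assumptions, the batch-1 shape sits far below the ridge point and is limited by bandwidth, while the large-batch shape reaches the compute roof. This is one common way to see why inference and training can favor different architectures.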

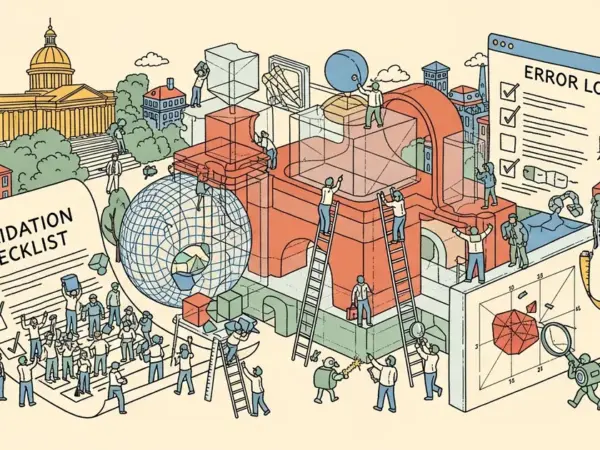

The document arrives as dozens of AI chip startups compete to build alternatives to NVIDIA’s GPUs. Most focus on narrow performance claims (faster attention, cheaper inference, lower power) without addressing the full-stack integration challenges that determine whether a chip succeeds in production. The document provides a checklist of considerations that chip designers often discover late in development, when architectural changes are expensive or impossible.

For the AI hardware industry, the document serves as both a technical reference and a cautionary guide. Building competitive AI silicon requires simultaneous optimization across algorithms, architecture, compilers, and runtime systems, a scope that few teams have the cross-disciplinary expertise to execute. The author’s experience at both Google and NVIDIA lends credibility to recommendations that might otherwise read as theoretical.