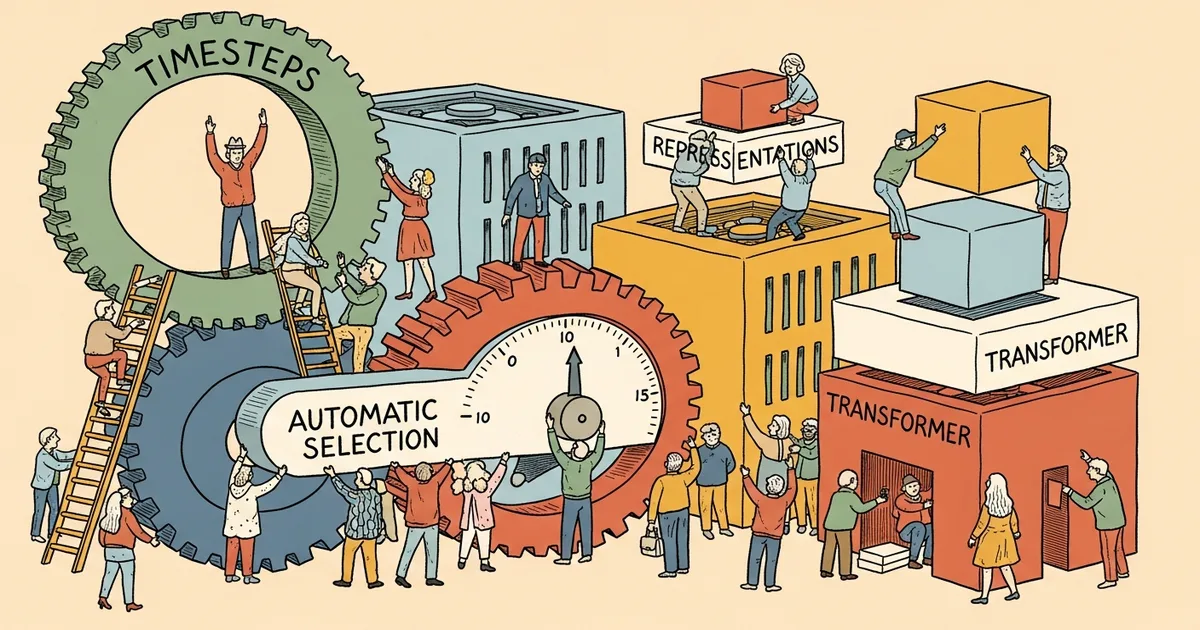

- A-SelecT automatically identifies the most information-rich timestep for Diffusion Transformer (DiT) representation learning in a single run, eliminating expensive exhaustive timestep searches.

- DiT models using A-SelecT surpass all prior diffusion-based methods on classification and segmentation benchmarks.

- The method addresses two constraints holding back DiT discriminative performance: inadequate timestep selection and insufficient exploitation of transformer-specific features.

- Unlike previous approaches, A-SelecT requires no computationally intensive search over timestep-feature combinations.

What Happened

A team of researchers including Changyu Liu, James Chenhao Liang, Wenhao Yang, Yiming Cui, and colleagues has introduced A-SelecT (Automatically Selected Timestep), a method that improves how Diffusion Transformers extract useful representations for tasks like image classification and segmentation.

Diffusion models, originally developed for image generation, have increasingly been repurposed for discriminative tasks where the model needs to understand image content rather than create it. The challenge has been identifying which point in the diffusion process produces the most useful internal representations. A-SelecT solves this by dynamically finding that optimal point without trial and error.

The full research team also includes Jinghao Yang, Tianyang Wang, Qifan Wang, Dongfang Liu, and Cheng Han. The paper was submitted to the Computer Vision and Pattern Recognition category on arXiv.

Why It Matters

Diffusion Transformers (DiTs) have emerged as a promising alternative to U-Net-based diffusion models for both generative and discriminative tasks. However, extracting strong representations from DiTs requires selecting the right timestep — the point in the noise-to-image process where the model’s internal features capture the most meaningful information about the input.

Previous approaches relied on exhaustive searches over timestep-feature combinations, which is computationally expensive and does not scale well. A-SelecT reduces this to a single-run process, making DiT-based representation learning practical for a wider range of applications.

The results are notable: DiTs equipped with A-SelecT outperform all prior diffusion-based approaches on standard classification and segmentation benchmarks. This positions DiTs as a viable foundation model architecture not just for generation but for understanding visual content, expanding the return on investment for organizations training large diffusion models.

Technical Details

The core innovation is a method that “dynamically pinpoints DiT’s most information-rich timestep from the selected transformer feature in a single run.” During sampling, a diffusion model denoises in incremental steps, and each timestep produces different internal representations. Broadly, the high-noise steps early in denoising capture coarse, high-level structure, while the low-noise steps near the end focus on fine details.
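To make the role of the timestep concrete, here is a minimal sketch of the standard DDPM-style forward noising rule, x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps. It is not taken from the A-SelecT paper: the linear beta schedule, the 1000-step horizon, and the helper names `linear_alpha_bar` and `add_noise` are assumptions chosen for illustration. The point is only that the same input looks very different to the network at different timesteps, which is why the features it produces differ too.

```python
import math
import random

def linear_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal fraction alpha_bar_t for a linear beta schedule (assumed)."""
    alpha_bar, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar

def add_noise(x0, t, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps."""
    signal = math.sqrt(alpha_bar[t])
    noise = math.sqrt(1.0 - alpha_bar[t])
    return [signal * v + noise * rng.gauss(0.0, 1.0) for v in x0]

alpha_bar = linear_alpha_bar()
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5, 0.0]  # toy "image" of four pixel values

for t in (0, 250, 500, 999):
    # Signal-to-noise ratio falls steadily as t grows.
    snr = alpha_bar[t] / (1.0 - alpha_bar[t])
    print(t, snr, add_noise(x0, t, alpha_bar, rng))
```

At `t = 0` the input is almost untouched, while at `t = 999` it is nearly pure noise. A feature extractor therefore has to pick a timestep somewhere between those extremes.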

A-SelecT addresses two specific constraints that the authors identified in current DiT-based representation learning. The first is inadequate timestep searching: existing methods either pick a fixed timestep or search exhaustively across all possibilities, and the exhaustive route requires multiple complete training runs to determine which timestep works best. The second is insufficient exploitation of DiT-specific feature representations, meaning previous work did not fully leverage the architectural differences between transformers and U-Nets when extracting discriminative features.
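The cost of the exhaustive baseline is easy to see in a small sketch. The code below is a hypothetical illustration, not the procedure from any specific prior paper: `toy_evaluate` stands in for training and testing a probe on features from one (timestep, layer) pair, its score surface is invented, and the 28-block depth and the 10 candidate timesteps are assumed values.

```python
from itertools import product

def exhaustive_search(timesteps, layers, evaluate):
    """Baseline grid search: one full evaluation per (timestep, layer) pair."""
    best, runs = None, 0
    for t, layer in product(timesteps, layers):
        score = evaluate(t, layer)  # stands in for training and testing a probe
        runs += 1
        if best is None or score > best[0]:
            best = (score, t, layer)
    return best, runs

def toy_evaluate(t, layer):
    # Hypothetical score surface peaking at t=200, layer=14; not a measured result.
    return 1.0 - abs(t - 200) / 1000 - abs(layer - 14) / 28

timesteps = range(0, 1000, 100)  # 10 candidate timesteps (assumed)
layers = range(1, 29)            # 28 transformer blocks (assumed depth)

(best_score, best_t, best_layer), runs = exhaustive_search(
    timesteps, layers, toy_evaluate
)
print(best_t, best_layer, runs)  # prints "200 14 280"
```

Even this coarse grid needs 280 evaluations, each of which would be a separate probe-training run in practice. That multiplicative cost is what a single-run selection avoids.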

The transformer architecture in DiT produces features at every layer and every timestep, creating a large search space. A-SelecT narrows this by jointly selecting the optimal timestep and the optimal transformer layer feature, treating them as a coupled selection problem rather than two independent choices.
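Why coupling matters can be shown with a toy score table. The sketch below does not implement A-SelecT's selection criterion, which the article does not spell out. The `scores` values are made up, and `joint_argmax` and `independent_argmax` are hypothetical helpers. It only demonstrates that choosing the timestep and the layer separately can miss the best pair.

```python
def joint_argmax(scores):
    """Pick the single (timestep, layer) pair with the highest score."""
    return max(scores, key=scores.get)

def independent_argmax(scores):
    """Pick the best timestep and the best layer separately, by summed score."""
    timesteps = sorted({t for t, _ in scores})
    layers = sorted({layer for _, layer in scores})
    best_t = max(timesteps, key=lambda t: sum(scores[(t, layer)] for layer in layers))
    best_layer = max(layers, key=lambda layer: sum(scores[(t, layer)] for t in timesteps))
    return best_t, best_layer

# Made-up scores for two timesteps and two layers; illustration only.
scores = {
    (100, 8): 0.60, (100, 16): 0.62,
    (500, 8): 0.90, (500, 16): 0.30,
}

print(joint_argmax(scores))        # prints "(500, 8)"
print(independent_argmax(scores))  # prints "(100, 8)"
```

Here timestep 100 looks best on aggregate, so the independent choice lands on the pair `(100, 8)` with a score of 0.60, while the joint choice finds `(500, 8)` with 0.90.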

The method was validated across classification and segmentation benchmarks. On both task types, DiT models using A-SelecT achieved state-of-the-art results among diffusion-based approaches, demonstrating improvements in both efficiency and accuracy over prior methods that required multi-run timestep optimization.

Who’s Affected

Computer vision researchers exploring diffusion models for discriminative tasks will find this directly applicable. The method is particularly relevant for teams that have already trained DiT models for generation and want to reuse those weights for understanding tasks at minimal additional cost.

Practitioners working on image classification and semantic segmentation pipelines may benefit from integrating DiT-based feature extractors, now that A-SelecT removes the timestep search bottleneck.

What’s Next

The paper demonstrates results on classification and segmentation, but whether A-SelecT’s timestep selection generalizes to other discriminative tasks such as object detection, depth estimation, or video understanding remains to be validated. The method’s effectiveness may also depend on the specific DiT architecture and pretraining data used.

Additionally, while A-SelecT eliminates the exhaustive search cost, the underlying requirement for a pretrained DiT model means that researchers still need access to generative pretraining infrastructure before they can benefit from this approach.
