- DesignWeaver is a new interface that helps novice designers write better prompts for AI image generation by extracting design dimensions from generated images.

- A 52-participant study found that DesignWeaver users wrote longer prompts with more domain-specific vocabulary and produced more diverse product designs than a control group using standard prompting.

- The system was developed by researchers at UC San Diego and accepted to CHI 2025, the ACM Conference on Human Factors in Computing Systems and a leading venue in human-computer interaction.

- The approach introduces “dimensional scaffolding,” which surfaces attributes like material, texture, and shape as interactive options rather than requiring users to know design terminology.

What Happened

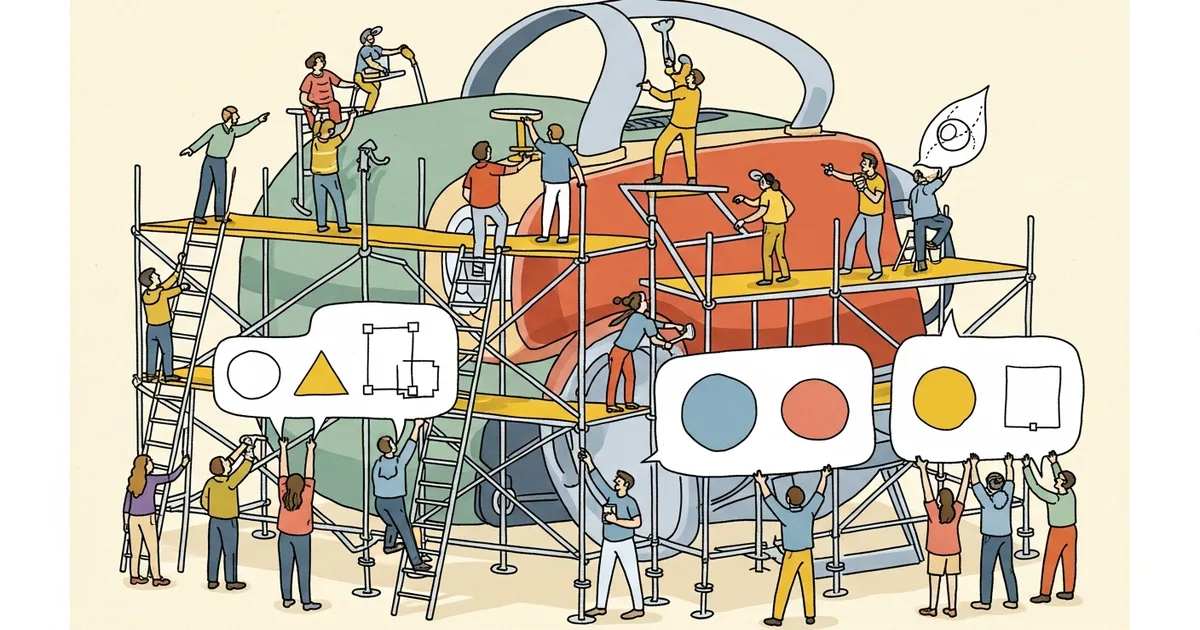

Researchers at the University of California, San Diego have developed DesignWeaver, an interface that helps inexperienced designers use text-to-image AI tools more effectively. The system, described in a paper published on arXiv and accepted to CHI 2025, introduces a technique called “dimensional scaffolding” that bridges the gap between visual exploration and text-based prompt writing.

The paper was authored by Sirui Tao, Ivan Liang, Cindy Peng, Zhiqing Wang, Srishti Palani, and Steven P. Dow. Their work addresses a well-documented problem in AI-assisted design: while generative AI tools like Midjourney and Stable Diffusion allow anyone to create product design concepts quickly, beginners often lack the vocabulary and domain knowledge to write prompts that produce useful, professional-quality results.

Why It Matters

Text-to-image AI tools have made visual prototyping accessible to people without formal design training, but the quality of outputs depends heavily on the quality of prompts. The research team conducted formative interviews with 12 experienced product designers and found that professionals typically rely on “visual references to guide co-design discussions rather than written descriptions.”

This creates a fundamental mismatch. The AI tools require text input, but the people who could benefit most from them — novice designers, students, and non-designers in product teams — do not know the specialized terms needed to produce targeted results. A professional might specify “chamfered polycarbonate housing with a matte brushed-aluminum accent,” while a beginner might type “nice modern case.” DesignWeaver attempts to close that vocabulary gap systematically.

The implications extend beyond product design. Any creative field where AI tools require domain-specific prompting — architecture, fashion, interior design, graphic design — faces the same knowledge barrier between expert and novice users.

Technical Details

DesignWeaver works by generating an initial set of product design images from a basic user prompt, then analyzing those images to extract key design dimensions such as material, shape, color palette, surface texture, and proportion. These dimensions are presented to the user as an interactive palette, allowing them to select and combine attributes without needing to know the correct terminology in advance.

The system creates what the researchers call “dimensional scaffolding,” a structured intermediary layer that sits between the user and the AI model. As users explore and select dimensions from the palette, the system automatically refines the underlying text prompt, incorporating domain-specific terms that the user might not have known to include on their own.
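The select-and-refine loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Dimension` and `Scaffold` classes, the `select` and `compose_prompt` methods, and the prompt format are all hypothetical, and the palette is hard-coded where the real system would extract dimensions from generated images.

```python
from dataclasses import dataclass, field


@dataclass
class Dimension:
    """One design dimension (e.g. material) with its selectable options."""
    name: str
    options: list[str]


@dataclass
class Scaffold:
    """Hypothetical palette state: the base prompt plus the user's selections."""
    base_prompt: str
    palette: list[Dimension]
    selected: dict[str, list[str]] = field(default_factory=dict)

    def select(self, dimension: str, option: str) -> None:
        # Only accept options that the palette actually offers.
        known = {d.name: d.options for d in self.palette}
        if option not in known.get(dimension, []):
            raise ValueError(f"{option!r} is not an option for {dimension!r}")
        chosen = self.selected.setdefault(dimension, [])
        if option not in chosen:
            chosen.append(option)

    def compose_prompt(self) -> str:
        # Append selections in palette order so the prompt is deterministic.
        parts = [self.base_prompt]
        for dim in self.palette:
            chosen = self.selected.get(dim.name)
            if chosen:
                parts.append(f"{dim.name}: {', '.join(chosen)}")
        return "; ".join(parts)


# Assumed palette; a real system would derive it from the generated images.
scaffold = Scaffold(
    base_prompt="nice modern case",
    palette=[
        Dimension("material", ["polycarbonate", "brushed aluminum"]),
        Dimension("shape", ["chamfered", "rounded"]),
    ],
)
scaffold.select("shape", "chamfered")
scaffold.select("material", "polycarbonate")
print(scaffold.compose_prompt())
# prints: nice modern case; material: polycarbonate; shape: chamfered
```

The point of the sketch is that the user only clicks options, while the specialized terms enter the prompt through the palette. How DesignWeaver actually phrases the refined prompt is not specified in this article.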

In a controlled study with 52 participants, DesignWeaver users outperformed a control group using standard prompting methods on several measures. They wrote longer prompts containing more specialized design vocabulary, and they generated more diverse and innovative product design concepts across multiple iterations. The researchers also noted one important limitation: users who worked with DesignWeaver “developed higher expectations than current text-to-image models could fulfill,” suggesting the interface may outpace the capabilities of the underlying generation technology.
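To make "longer prompts with more specialized vocabulary" concrete, here is a rough sketch of how the two properties could be counted for a single prompt. It is an assumption-laden illustration, not the study's measurement procedure: the `prompt_stats` function and the `DESIGN_TERMS` list are hypothetical, and the term list is drawn only from the example prompt quoted earlier in this article.

```python
import re

# Illustrative vocabulary only; not the term list used in the study.
DESIGN_TERMS = {
    "chamfered", "polycarbonate", "matte",
    "brushed-aluminum", "housing", "accent",
}


def prompt_stats(prompt: str) -> dict[str, int]:
    """Count words and (assumed) design-specific terms in a prompt."""
    # Keep hyphenated compounds such as "brushed-aluminum" as one word.
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", prompt.lower())
    return {
        "words": len(words),
        "design_terms": sum(1 for w in words if w in DESIGN_TERMS),
    }


novice = prompt_stats("nice modern case")
expert = prompt_stats(
    "chamfered polycarbonate housing with a matte brushed-aluminum accent"
)
print(novice)  # {'words': 3, 'design_terms': 0}
print(expert)  # {'words': 8, 'design_terms': 6}
```

A fixed word list is the simplest possible proxy. Any real evaluation would need a vetted vocabulary and a way to handle multi-word terms and synonyms.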

Who’s Affected

The research is most relevant to product designers, industrial designers, and design students who use AI tools for early-stage concept exploration. It also has implications for the developers of text-to-image platforms, who could integrate similar scaffolding features to improve their products for non-expert users and reduce the steep learning curve associated with prompt engineering.

Companies that build AI-powered design tools, including Canva, Figma, and Adobe, may find the dimensional scaffolding approach useful for onboarding new users. The technique could also benefit engineering and marketing teams that use AI-generated visuals for rapid prototyping and concept validation but lack formal design training.

What’s Next

The 26-page paper, which includes 22 figures detailing the system architecture and study results, will be presented at CHI 2025. One open question is whether dimensional scaffolding can generalize beyond product design to other creative domains where similar vocabulary gaps exist between experts and novices. The current system is also limited by the capabilities of the underlying text-to-image models, which may not be able to render all the design variations that the scaffolding interface can specify.
