Groq

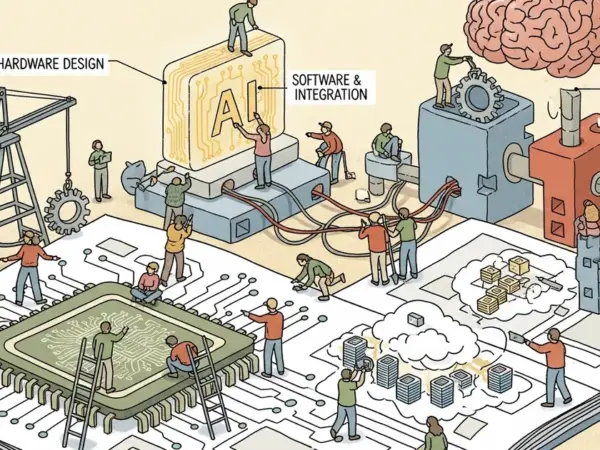

Groq provides ultra-fast AI inference through its specialized Language Processing Units (LPUs), significantly accelerating large language models.

Groq specializes in AI inference, using its custom-built Language Processing Units (LPUs) to run large language models (LLMs) and other AI models quickly and efficiently. Its technology, particularly the new NVIDIA Groq 3 LPU, is designed for latency-sensitive workloads and memory bottlenecks, and is positioned as faster than traditional GPUs for real-time AI applications. GroqCloud provides access to this infrastructure.

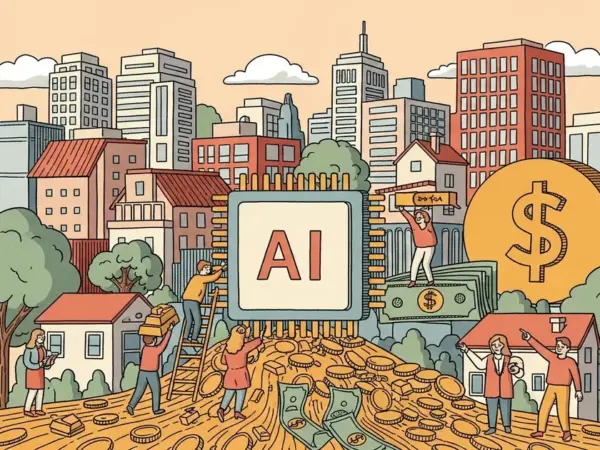

Groq's acquisition by Nvidia for $20B in late 2025 signals that its LPU inference technology is regarded as market-defining and positions it as a leader in AI infrastructure. Integration with Nvidia's GPU systems is expected to improve AI inference efficiency, which makes it valuable for enterprise applications.

Anthropic API

9/10 · Anthropic API provides access to its advanced Claude large language models for complex reasoning, coding,…

OpenAI API

9/10 · The OpenAI API provides access to advanced AI models for integrating capabilities like language understanding,…

Hugging Face

9/10 · Hugging Face is a versatile platform and open-source community that provides tools to streamline machine…

Together AI

8/10 · Together AI is an AI Native Cloud providing high-performance infrastructure and tools for building, training,…

Fireworks AI

8/10 · Fireworks AI is a high-performance inference platform for deploying and fine-tuning generative AI models with…

LangChain

8/10 · LangChain is a developer framework for building AI applications and agents powered by large language…
