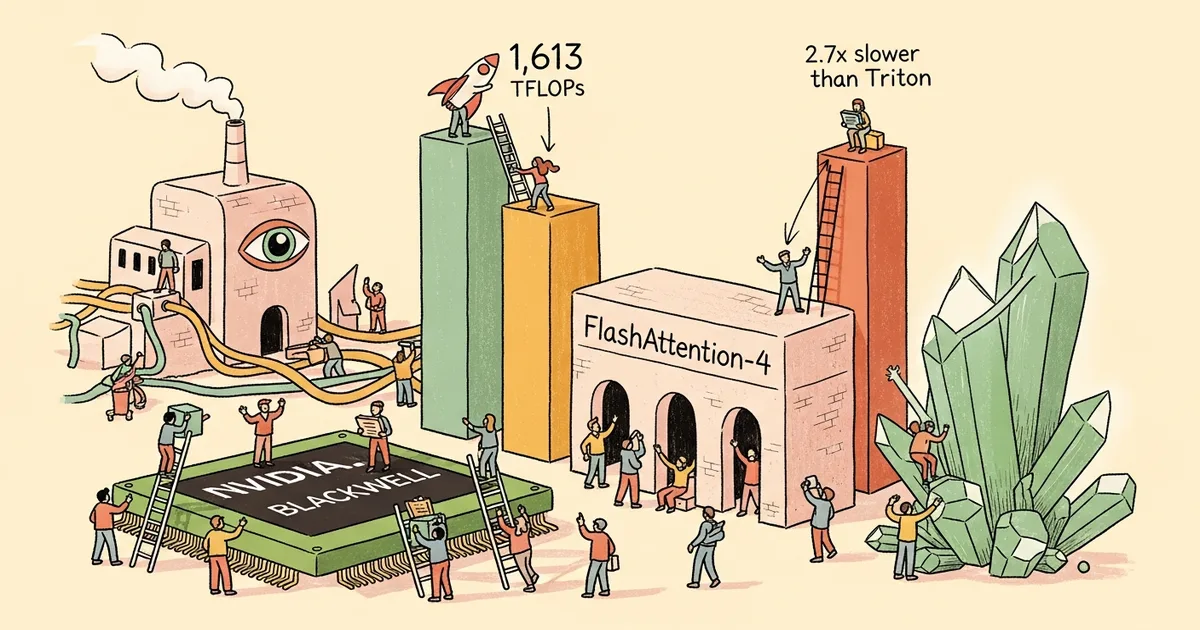

- FlashAttention-4 achieves 1,605 TFLOPs per second on NVIDIA B200 GPUs with BF16 precision, reaching 71 percent hardware utilization and delivering 1.1 to 1.3 times speedup over cuDNN 9.13.

- The implementation is written entirely in CuTe-DSL, a Python-embedded kernel language, which cuts compile times by a factor of 20 to 30 compared with traditional C++ template approaches.

- FlashAttention-4 was developed by Tri Dao and collaborators from Princeton, Together AI, Meta, NVIDIA, and Colfax Research, and was released on March 5, 2026.

- Key innovations include software-emulated exponential functions, ping-pong Q tile scheduling, and a 2-CTA backward pass that reduces atomic operations by 50 percent.

What Happened

On March 5, 2026, Tri Dao and a team of researchers from Princeton University, Together AI, Meta, NVIDIA, and Colfax Research released FlashAttention-4, a redesigned attention kernel optimized for NVIDIA’s Blackwell GPU architecture. The paper, titled “FlashAttention-4: Algorithm and Kernel Pipelining Co-Design for Asymmetric Hardware Scaling,” is available on arXiv (2603.05451) with accompanying open-source code on GitHub.

The authors are Ted Zadouri, Markus Hoehnerbach, Jay Shah, Timmy Liu, Vijay Thakkar, and Tri Dao. The work addresses a fundamental shift in GPU hardware design, in which tensor core throughput has scaled faster than the other functional units.

Why It Matters

Attention computation is a primary bottleneck in transformer-based AI models, particularly at long sequence lengths, and FlashAttention has become the standard optimization used across virtually all large language model training and inference workloads. Every major AI lab, from OpenAI to Google DeepMind to Anthropic, relies on FlashAttention variants in their training infrastructure. Each generation has delivered substantial speedups that translate directly into lower training costs and faster inference.

FlashAttention-4’s 1.1 to 1.3 times speedup over cuDNN 9.13 and 2.1 to 2.7 times speedup over Triton on NVIDIA B200 GPUs means that organizations training large models on Blackwell hardware should see higher attention throughput as soon as they adopt the new kernels. The peak of 1,605 TFLOPs per second, at 71 percent utilization, moves closer to the theoretical hardware limit.
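To put those figures in context, the short sketch below works through the arithmetic. It uses only the numbers quoted above; the helper names are hypothetical, and the time-saved figure applies to the attention kernel alone, not to end-to-end training time.

```python
# Figures quoted in the article.
ACHIEVED_TFLOPS = 1605.0
UTILIZATION = 0.71


def implied_peak_tflops(achieved, utilization):
    """Peak throughput implied by an achieved rate and a utilization fraction."""
    return achieved / utilization


def time_saved_fraction(speedup):
    """Fraction of kernel run time removed by a given speedup factor."""
    return 1.0 - 1.0 / speedup


peak = implied_peak_tflops(ACHIEVED_TFLOPS, UTILIZATION)
print(f"Implied BF16 peak: about {peak:.0f} TFLOPs per second")

# Speedup ranges quoted against cuDNN 9.13 and Triton.
for baseline, speedups in (("cuDNN 9.13", (1.1, 1.3)), ("Triton", (2.1, 2.7))):
    low, high = (time_saved_fraction(s) for s in speedups)
    print(f"vs {baseline}: attention kernel time drops by {low:.0%} to {high:.0%}")
```

A 1.3 times speedup therefore removes a little under a quarter of the attention kernel's run time; the effect on a full training step depends on how much of that step is spent in attention.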

Technical Details

Blackwell GPUs present an asymmetric scaling challenge: fifth-generation tensor cores deliver doubled throughput with larger tile sizes (128x256x16 for BF16/FP16), but shared memory bandwidth (128 bytes per cycle per SM) and exponential functional units (16 operations per cycle per SM) have not scaled proportionally. FlashAttention-4 redesigns the attention algorithm around these constraints.
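A rough per-tile cycle budget shows why the exponential unit becomes a bottleneck. The sketch below is a back-of-the-envelope model, not the paper's analysis: the exponential rate of 16 operations per cycle per SM comes from the text above, while the tensor core rate of 8,192 FLOPs per cycle per SM and the 128x128 tile with head dimension 128 are assumptions chosen for illustration. Shared memory traffic is left out to keep the model small.

```python
# From the article: exponential throughput per SM.
EXP_OPS_PER_CYCLE_PER_SM = 16
# ASSUMPTION (not stated in the article): dense BF16 tensor core rate per SM.
MMA_FLOPS_PER_CYCLE_PER_SM = 8192


def forward_tile_cycles(m, n, d):
    """Idealized cycle counts for one attention tile in the forward pass.

    m: query rows in the tile, n: key/value rows, d: head dimension.
    """
    # Two matrix multiplies per tile: S = Q K^T and O = P V,
    # each costing 2 * m * n * d FLOPs.
    mma_flops = 2 * (2 * m * n * d)
    # Softmax needs one exponential per score in the m x n tile.
    exp_ops = m * n
    return {
        "mma": mma_flops / MMA_FLOPS_PER_CYCLE_PER_SM,
        "exp": exp_ops / EXP_OPS_PER_CYCLE_PER_SM,
    }


cycles = forward_tile_cycles(128, 128, 128)  # hypothetical tile shape
print(cycles)
```

Under these assumptions the exponentials take as many cycles as the matrix multiplies, so any further increase in tensor core throughput would leave the softmax as the limiting step unless the exponential work is overlapped or moved elsewhere.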

The forward pass uses ping-pong Q tile scheduling with a dedicated correction warpgroup to keep tensor cores continuously fed. Software exponential emulation distributes computation across MUFU.EX2 and FMA units using polynomial approximation, working around the limited exponential throughput. The implementation exploits Blackwell’s 256 KB tensor memory (TMEM) per SM and fully asynchronous MMA operations.
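The idea behind software exponential emulation can be illustrated in plain Python. This is a minimal sketch of the general technique (range reduction followed by a polynomial evaluated with multiply-add steps), not the kernel's actual code: the article does not give the polynomial degree or coefficients, so the cubic Taylor coefficients used here are a stand-in, and the function names are hypothetical. A production kernel would more likely use tuned minimax coefficients and would route only part of the work through this path, with the rest going to MUFU.EX2.

```python
import math

LN2 = math.log(2.0)
# ASSUMPTION: Taylor coefficients of 2**f = exp(f * ln 2), used as a stand-in
# for whatever tuned coefficients a real kernel would use.
C1 = LN2
C2 = LN2 ** 2 / 2.0
C3 = LN2 ** 3 / 6.0


def exp2_poly(x):
    """Approximate 2**x with range reduction plus a cubic polynomial."""
    n = math.floor(x + 0.5)   # nearest integer, so f lies in [-0.5, 0.5)
    f = x - n
    # Horner evaluation: each step is one multiply-add, the kind of
    # operation an FMA unit executes.
    p = C3
    p = p * f + C2
    p = p * f + C1
    p = p * f + 1.0
    # Scale by 2**n, which only adjusts the floating-point exponent.
    return math.ldexp(p, n)


def max_relative_error(lo=-20.0, hi=0.0, steps=4001):
    """Worst relative error over a grid; softmax inputs are typically <= 0."""
    worst = 0.0
    for i in range(steps):
        x = lo + (hi - lo) * i / (steps - 1)
        ref = 2.0 ** x
        worst = max(worst, abs(exp2_poly(x) - ref) / ref)
    return worst


print(f"max relative error: {max_relative_error():.2e}")
```

Even this untuned cubic stays below a relative error of 1e-3, which is smaller than the rounding step of BF16 (about 4e-3), so the approximation error would be hidden once results are stored in BF16.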

The backward pass introduces a 2-CTA design that pairs cooperative thread arrays (CTAs) to reduce shared memory traffic and halve the number of atomic operations. A deterministic mode using semaphore-based locking achieves 85 to 90 percent of the nondeterministic mode's performance, addressing reproducibility requirements in research and production.
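Why a deterministic mode needs ordering at all can be shown with a CPU-side analogy. Floating-point addition is not associative, so when several workers add partial gradients into the same location, the result depends on the order in which they arrive. The sketch below uses Python threads and a condition variable to force a fixed order; it illustrates the general idea only and is not the kernel's semaphore scheme. All names are hypothetical.

```python
import threading


def ordered_accumulate(partials, order):
    """Sum partials from one thread each, forcing the write order in `order`."""
    total = [0.0]
    turn = [0]  # position in `order` that is allowed to write next
    cond = threading.Condition()

    def worker(idx):
        with cond:
            # Block until this worker's index is the next one scheduled.
            cond.wait_for(lambda: order[turn[0]] == idx)
            total[0] += partials[idx]
            turn[0] += 1
            cond.notify_all()

    threads = [
        threading.Thread(target=worker, args=(i,)) for i in range(len(partials))
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]


# Values chosen so that the order of addition visibly changes the result.
partials = [1e16, 1.0, -1e16, 1.0]
print(ordered_accumulate(partials, [0, 1, 2, 3]))
print(ordered_accumulate(partials, [0, 2, 1, 3]))
```

The two calls return different sums for the same inputs, which is the behavior an unordered atomic accumulation can exhibit from run to run. Fixing the order removes that variation, at the cost of making workers wait for their turn, which is consistent with the deterministic mode running somewhat slower.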

The entire implementation is written in CuTe-DSL, CUTLASS’s Python-embedded kernel language, which cuts compile times by a factor of 20 to 30 versus C++ templates while maintaining full hardware expressivity.

Who’s Affected

AI research labs and companies training large language models on NVIDIA Blackwell GPUs will see direct benefits when integrating the new kernels into their training pipelines. Cloud providers offering B200 and GB200 instances can deliver better price-performance to customers, which could influence GPU rental pricing on platforms like AWS, Google Cloud, and CoreWeave.

Framework developers maintaining PyTorch, JAX, and other deep learning libraries will need to integrate the new kernels to pass the performance gains through to end users. The shift to CuTe-DSL also lowers the barrier for GPU kernel development, potentially enabling a broader set of researchers to contribute optimizations rather than relying on a small group of CUDA C++ experts.

What’s Next

FlashAttention-4 currently targets Hopper and Blackwell architectures (H100, B200, GB200). Support for other GPU families and future NVIDIA architectures will determine how broadly the optimizations apply. AMD and Intel GPU users do not benefit from these kernel-level optimizations, which are tightly coupled to NVIDIA’s hardware features.

The asymmetric scaling problem the paper identifies is likely to intensify in future hardware generations as tensor core throughput continues to outpace memory bandwidth and special function unit capacity. This suggests that algorithm-hardware co-design will remain essential for attention computation rather than being a one-time engineering effort.
