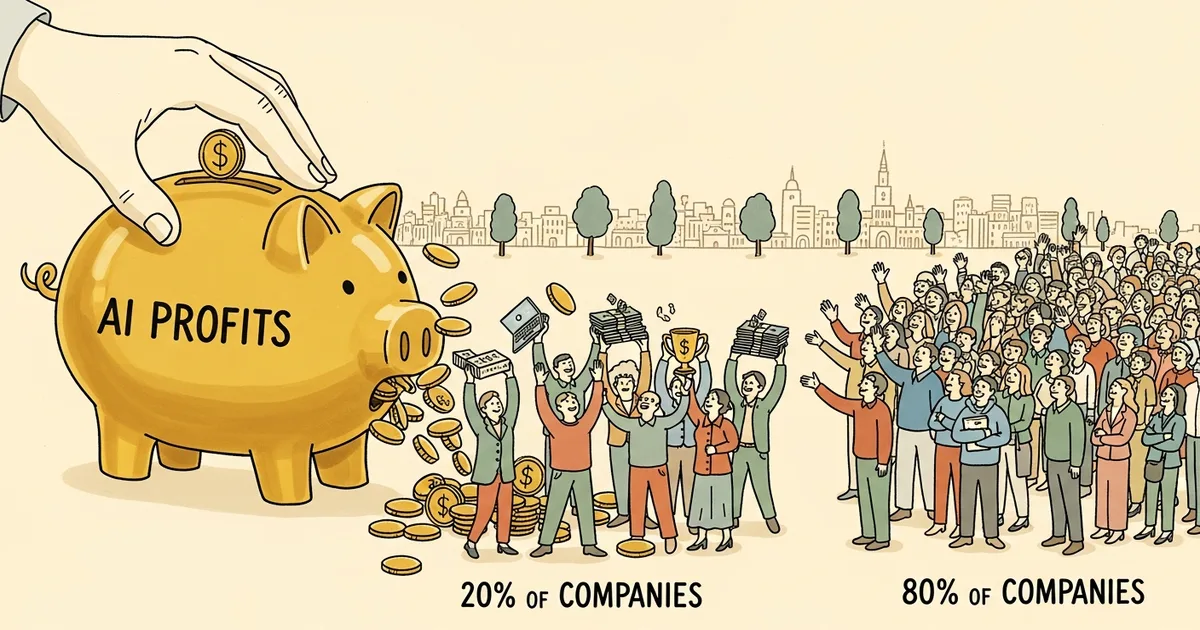

PricewaterhouseCoopers (PwC), in its 2026 AI Performance study released in April 2026, surveyed 1,217 executives across 25 industry sectors and produced a finding that should end the “democratizing AI” narrative for good: 74% of AI’s total economic value is captured by just 20% of companies. The remaining 80% are dividing the other 26%. The divide is structural, not accidental, and it’s accelerating.

How PwC Measured the Winners

The PwC AI Performance study is among the largest executive-level AI adoption surveys conducted to date, drawing from 1,217 C-suite leaders and senior decision-makers across 25 sectors globally. Respondents were segmented into two cohorts: “leaders” — organizations extracting disproportionate economic value from AI deployments — and “the rest,” companies that have adopted AI tools but are not generating returns at scale.

PwC weighted responses by reported revenue impact, cost reduction, and net-new revenue streams attributable to AI. The 74% figure represents the leaders’ aggregate share of total economic value generated across all surveyed organizations — not market capitalization, not AI investment spend, but operational and revenue value actually extracted from deployed systems. That distinction matters. This isn’t measuring who bought the most AI licenses. It’s measuring who turned AI into money.

The most predictive variable in the dataset wasn’t budget, sector, or company size. It was deployment depth — how broadly and centrally AI is integrated into core business operations versus running in isolated experiments.

The 74/20 Split in Detail

Twenty percent of companies are capturing 74% of AI’s economic output. That’s not a bell curve. It’s a winner-take-most distribution that mirrors what happened with cloud infrastructure, mobile platforms, and e-commerce in previous technology cycles.
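To make the concentration concrete, here is a back-of-the-envelope sketch of what the split implies per company. It uses only the two headline figures (74% of value, 20% of companies); the function name is illustrative, and the result is an average across each cohort, not a figure reported by PwC.

```python
def per_company_value_ratio(leader_value_share: float,
                            leader_company_share: float) -> float:
    """Average value per leader company divided by average value per non-leader.

    Both arguments are fractions of the surveyed total (e.g. 0.74 and 0.20).
    """
    rest_value_share = 1.0 - leader_value_share        # 26% of value
    rest_company_share = 1.0 - leader_company_share    # 80% of companies
    leader_density = leader_value_share / leader_company_share
    rest_density = rest_value_share / rest_company_share
    return leader_density / rest_density


ratio = per_company_value_ratio(0.74, 0.20)
print(f"An average leader captures about {ratio:.1f}x the value of an average non-leader")
```

On these numbers the average leader captures roughly 11 times the value of the average non-leader, which is why the split reads as winner-take-most rather than as a gradual spread.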

The leader cohort isn’t just using AI more frequently — the study found leaders are 3.4x more likely than the broader population to be deploying AI for business reinvention: new products, new market entry, new operating models. The majority of adopters are using AI to automate existing workflows. That’s a productivity play. Leaders are running a growth play. The economic difference between those two orientations is not incremental.

Companies in the leader cohort reported AI contributing an average of 12–15% to revenue growth over the 24 months preceding the survey. The laggard cohort reported under 3%. Apply that delta across capital allocation, hiring decisions, product investment, and proprietary data accumulation — and the gap doesn’t close, it compounds quarterly.
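A rough sketch of how that delta compounds, under assumptions that are not in the study: the figures are treated as AI-attributable revenue growth per 24-month period, leaders are set at the 13.5% midpoint of the 12–15% range, the rest at the 3% upper bound, and both rates are held constant. The names below are hypothetical.

```python
def revenue_index(growth_per_period: float, periods: int) -> float:
    """Revenue relative to a starting value of 1.0 after compounding growth."""
    return (1.0 + growth_per_period) ** periods


LEADER_GROWTH = 0.135  # assumption: midpoint of the reported 12-15% range
REST_GROWTH = 0.03     # assumption: upper bound of "under 3%"

for periods in range(1, 4):  # each period is 24 months
    leader = revenue_index(LEADER_GROWTH, periods)
    rest = revenue_index(REST_GROWTH, periods)
    print(f"after {periods * 2} years: leader {leader:.3f}, "
          f"rest {rest:.3f}, gap {leader / rest:.3f}x")
```

Under these assumptions the relative gap widens from about 10% after one period to about 34% after three, before counting any second-order effects from hiring, product investment, or data accumulation.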

What AI Leaders Actually Do Differently

The PwC data surfaces four consistent behaviors that separate the 20% from everyone else.

- Full operational integration, not feature deployment. Leaders have embedded AI into core processes — pricing algorithms, risk modeling, customer acquisition, supply chain management — as the operating layer, not an add-on running in parallel. The rest have pilots. Leaders have infrastructure.

- Board-level AI accountability with P&L ownership. In leader organizations, AI performance is reported as a boardroom KPI tied to revenue outcomes. Among laggards, AI typically sits with IT or a designated “AI team” that has no direct P&L accountability, which means there is no forcing function to scale.

- Data infrastructure built before AI deployment, not during. Leaders spent an average of 2–3 years building clean, accessible, governed data pipelines before scaling AI applications. The rest are attempting to build data infrastructure and AI applications simultaneously — a sequencing error that creates technical debt faster than the AI creates value.

- Reinvention metrics, not productivity metrics. Leaders measure AI success by new revenue generated and new markets entered, not by hours saved or headcount reduction. That measurement choice drives entirely different investment decisions and risk tolerance.

This pattern is visible in how major enterprises are moving. OpenAI’s reported $1 billion enterprise deal with Disney illustrates the scale at which leader-cohort companies are committing to AI as core infrastructure — not a pilot, not an experiment, but a multiyear operational bet that smaller competitors structurally cannot match at the same speed.

The Pilot-Purgatory Problem

The PwC AI Performance study names a specific failure mode afflicting the 80%: pilot purgatory — the state of running perpetual proofs-of-concept that never reach production scale.

The data is specific: 58% of non-leader companies report having 10 or more active AI pilots. The median time an AI pilot has been running without scaling to production is 14 months. These are not companies ignoring AI. They’re companies that have allocated budget, built teams, and run experiments — and are stuck at the threshold between testing and operating.

The causes are structural. Pilot-stage AI typically runs on sandboxed data, disconnected from production systems. It’s evaluated against technical performance metrics (accuracy, uptime, user adoption rates) rather than the revenue and margin outcomes that drive capital allocation decisions. And embedding AI into production means retraining staff, restructuring workflows, and creating AI-accountable roles with genuine authority: a level of organizational commitment that a pilot budget never actually funds.

The cost of staying in pilot mode isn’t neutral. Every quarter spent piloting is a quarter the leader cohort is generating proprietary training data, hardening AI-native workflows, and compounding returns. The Humans First movement — which has framed measured AI adoption as an ethical stance — may inadvertently be providing philosophical scaffolding for organizations that are simply stuck. Hesitation dressed as caution is still hesitation.

Why the Gap Is Self-Reinforcing

The 74/20 split isn’t a snapshot — it’s a trajectory. The structural advantages leaders hold create compounding returns that make the gap harder to close with each passing quarter.

Infrastructure investment is the most visible signal of this dynamic. Nebius’s announced $10 billion AI data center in Finland reflects a broader pattern: AI-forward organizations are locking in compute access at enterprise scale, at multi-year pricing, while the majority of companies are still negotiating their pilot budgets. This creates a capability ceiling for late movers that is structural, not just financial.

MegaOne AI tracks 139+ AI tools across 17 categories, and the adoption pattern is consistent: enterprise-tier platforms with deep integration capabilities are accelerating among leader-cohort companies, while mid-market AI adoption is fragmenting into disconnected point solutions. Fragmentation is a symptom of pilot culture — buying individual tools for individual experiments rather than committing to integrated capabilities tied to strategic outcomes.

The historical parallel holds. Companies that committed to cloud infrastructure between 2010 and 2015 built data assets, operational competencies, and cost structures that entrants in 2018 couldn’t replicate regardless of budget. The PwC AI Performance study suggests AI is following the same adoption curve — compressed into a shorter window, which means the cost of delay is proportionally higher.

The Acquisition Signal the Survey Doesn’t Capture

One proxy for leader behavior sits outside the PwC survey data: M&A velocity. AI-leading companies aren’t just deploying AI capabilities; they’re acquiring them. The consolidation of AI talent and IP at the enterprise level is happening through acquisition, a route that beats internal development on both speed and quality.

The pattern of major AI players moving aggressively on acquisitions reflects the same logic the PwC data surfaces: time-to-integration matters more than build-vs-buy economics at this stage. The 80% still debating internal development versus licensing are losing ground to the 20% who determined that buying and shipping is faster than deciding.

What the 80% Can Actually Do

The path forward from pilot purgatory requires three specific interventions, in sequence.

First: move one core business process — not a pilot — fully onto AI within 90 days. Not an adjacent function. Not a new experiment. A process that’s already tracked, already has defined success metrics, already has executive visibility. The objective is to generate a real P&L signal from AI deployment, not a learning exercise. A learning exercise is a pilot. A P&L signal is evidence for the next investment decision.

Second: establish board-level AI accountability tied to revenue or margin outcomes, with quarterly reporting. Without this, AI investments compete against operational costs in annual planning cycles and lose. Boards approve capital for things they’re accountable for. Until AI appears on that list with genuine metrics, the investment stays underfunded relative to what leaders are committing.

Third: stop evaluating AI pilots against pilot metrics. Technical accuracy rates, user adoption percentages, and error reduction statistics are the wrong frame for an investment decision. The only relevant question is: what is the revenue or margin impact of this capability at production scale? Every pilot evaluation that can’t answer that question should be restructured or shut down.

The 74% concentration isn’t a permanent verdict — but the PwC AI Performance study makes clear it won’t reverse through incremental experimentation. The companies currently in pilot purgatory face a straightforward choice: commit to production AI with the urgency the data demands, or accept a compounding structural disadvantage. The window to make that choice is narrowing every quarter.