Codex, OpenAI’s enterprise AI coding platform, received a major infrastructure overhaul on April 17, 2026, introducing pay-as-you-go Codex-only seats, token-based billing with no rate limits, and a lightweight model powered by dedicated Cerebras silicon. The platform now serves 2 million weekly active users, a 5x increase in three months, which works out to growth of more than 70% month-over-month.

That growth trajectory is not accidental. It reflects a deliberate pricing pivot: removing the per-seat floor that previously made enterprise AI coding adoption a budgeting conversation before a capability conversation.

The OpenAI Codex Enterprise Seat Model, Explained

ChatGPT Business and Enterprise subscribers can now add Codex-only seats at zero fixed monthly cost. Billing is entirely token-based — you pay for what the agents actually consume, not for seats they theoretically occupy.

For engineering teams evaluating AI coding tools, this removes the principal objection: committing to fixed per-seat licensing before knowing actual utilization. A 200-person engineering org that deploys Codex to 40 active users pays for 40 users’ token consumption, not 200 seat reservations.

The model mirrors how cloud infrastructure is sold. Idle capacity costs nothing.
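The difference between the two billing models can be sketched with a few lines of arithmetic. This is a minimal illustration, not OpenAI's billing logic: the per-token rate, the fixed seat price, and the 2 million tokens per user are all hypothetical placeholders, since OpenAI has not published per-token rates for Codex-only seats.

```python
# Hypothetical figures for illustration only. OpenAI has not published
# per-token rates for Codex-only seats, so both constants are assumptions.
ASSUMED_USD_PER_MILLION_TOKENS = 5.00
ASSUMED_FIXED_SEAT_USD = 20.00  # assumed price per seat per month


def fixed_seat_cost(total_seats: int,
                    seat_price: float = ASSUMED_FIXED_SEAT_USD) -> float:
    """Monthly cost when every seat is licensed, whether it is used or not."""
    return total_seats * seat_price


def usage_cost(tokens_per_user: list[int],
               usd_per_million: float = ASSUMED_USD_PER_MILLION_TOKENS) -> float:
    """Monthly cost when only consumed tokens are billed."""
    return sum(tokens_per_user) / 1_000_000 * usd_per_million


# A 200-person org with 40 active users, each assumed to use 2M tokens a month.
# The 160 idle users contribute zero tokens and therefore zero cost.
usage = [2_000_000] * 40 + [0] * 160

print(f"fixed seats:   ${fixed_seat_cost(200):,.2f}")
print(f"pay-as-you-go: ${usage_cost(usage):,.2f}")
```

The comparison flips as soon as per-user consumption is high enough, so the assumed rate and usage figures matter more than the structure of the calculation.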

Token-Based Billing With No Rate Limits

The previous Codex tier imposed rate limits that frustrated enterprise deployments during peak development cycles. The new model eliminates those caps entirely.

Token-based pricing without rate limits means Codex can now operate as a genuine background agent — running overnight, handling large refactoring jobs, or processing entire codebases in a single automated session. For teams using Codex for asynchronous work rather than interactive assistance, this is a fundamental capability shift.

OpenAI has not published specific per-token rates for Codex-only seats as of this writing. Enterprise negotiations will likely determine final pricing for large deployments.

Cerebras Hardware Inside: What GPT-5.3-Codex-Spark Actually Means

The most technically significant element of this update is the hardware. OpenAI’s new GPT-5.3-Codex-Spark lightweight model runs on dedicated Cerebras chips — a notable departure from the NVIDIA GPU infrastructure underpinning most of OpenAI’s production stack.

Cerebras’ wafer-scale engine architecture is built for fast inference on token-heavy workloads, which is exactly the profile of a coding assistant: long token sequences generated at low latency. For a model optimized for speed over raw capability, Cerebras silicon makes engineering sense.

This marks one of the first disclosed Cerebras hardware deployments in a major OpenAI production product. Embedding it specifically in the Codex product line suggests strong performance on code generation workloads — a segment where inference speed matters more than maximum parameter scale. The race to build custom AI silicon infrastructure is accelerating across the industry, and OpenAI’s Cerebras bet is a concrete data point in that arms race.

MCP Expands: Four Changes That Matter for Enterprise

The Model Context Protocol expansion in this update is substantial. OpenAI added four specific capabilities: resource reads, tool-call metadata, custom-server tool search, and file-parameter uploads.

- Resource reads allow Codex to pull live data from MCP servers rather than relying solely on context passed at session start — enabling dynamic, up-to-date repository state rather than stale snapshots.

- Tool-call metadata gives Codex structured information about the tools it’s invoking, improving reliability across multi-tool chained workflows.

- Custom-server tool search lets enterprise teams register proprietary internal tools and have Codex discover and invoke them contextually, without hardcoding tool selection into every prompt.

- File-parameter uploads enable direct file attachment to tool calls — critical for code review workflows, documentation generation, or analysis of large file sets.

Taken together, these four changes move Codex from a model with some tool access to an agent with a proper tool ecosystem.
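To make the first three capabilities more concrete, here is a rough sketch of the kind of JSON-RPC 2.0 messages an MCP client exchanges with a server. The `resources/read` and `tools/call` method names come from the public MCP specification; everything else is an assumption for illustration, including the `repo://` URI, the `run_linter` tool, and the keyword-matching `search_tools` helper, which is only a naive stand-in for whatever tool search Codex actually performs. File-parameter uploads are left out because the source does not describe their wire format.

```python
import json
from itertools import count

_request_ids = count(1)


def jsonrpc_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the framing MCP uses."""
    return {"jsonrpc": "2.0", "id": next(_request_ids),
            "method": method, "params": params}


def read_resource(uri: str) -> dict:
    """Ask an MCP server for the current contents of a resource."""
    return jsonrpc_request("resources/read", {"uri": uri})


def call_tool(name: str, arguments: dict) -> dict:
    """Invoke a named tool on an MCP server."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


def search_tools(tools: list[dict], query: str) -> list[dict]:
    """Naive keyword match over tool names and descriptions (hypothetical)."""
    q = query.lower()
    return [t for t in tools
            if q in t["name"].lower() or q in t.get("description", "").lower()]


# Hypothetical tool descriptors, shaped like entries in a tools/list result.
registered_tools = [
    {"name": "run_linter", "description": "Lint a source file"},
    {"name": "open_ticket", "description": "Create an issue in the tracker"},
]

matches = search_tools(registered_tools, "lint")
print(json.dumps(read_resource("repo://main/README.md"), indent=2))
print(json.dumps(call_tool(matches[0]["name"], {"path": "src/app.py"}), indent=2))
```

The point of the sketch is the division of labor: the agent reads fresh state on demand, discovers a suitable tool from its description, and then calls it, rather than having all three decided up front in the prompt.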

Plugins and Automations: Connecting Codex to Enterprise Systems

OpenAI added Plugins and Automations to Codex specifically to connect it to existing enterprise infrastructure — addressing the consistent objection that AI coding tools work in isolation but don’t integrate with the systems engineering teams actually use.

Automations enable scheduled and event-triggered Codex tasks: a nightly code quality scan, or an agent that fires on every pull request merge, without requiring human initiation of each session. Plugins extend Codex’s reach into third-party systems — ticketing platforms, internal documentation, CI/CD pipelines.

The full catalog of available integrations at launch hasn’t been enumerated publicly. The architectural intent is clear: OpenAI wants Codex to sit inside enterprise workflows, not alongside them as a separate tool that engineers have to switch to manually.

2 Million Weekly Users: What the Growth Rate Signals

The user growth numbers are notable even by AI industry standards. Reaching 2 million weekly active users with 70%+ month-over-month growth suggests Codex has crossed from power-user tool to mainstream developer adoption.

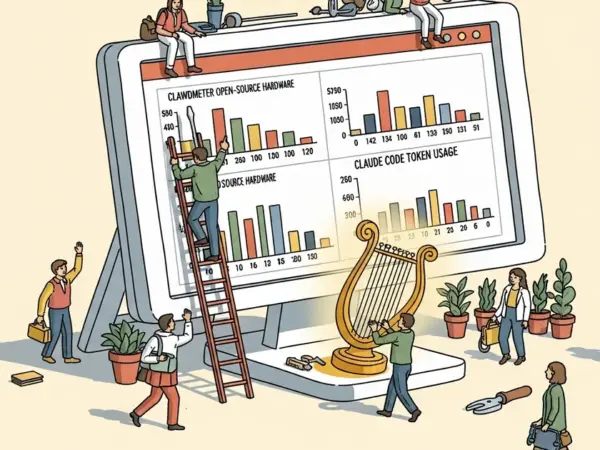

For context: Cursor, one of Codex’s primary competitors, took roughly 18 months from launch to reach 1 million users. Codex appears to be scaling faster — though the comparison isn’t direct, since Codex benefits from distribution through the existing ChatGPT Enterprise install base. MegaOne AI tracks 139+ AI tools across 17 categories, and coding assistant adoption has been one of the most consistently accelerating segments in that dataset over the past year.

The 5x growth in three months maps to the December 2025 through March 2026 window, when OpenAI shipped a series of coding model improvements. The timing suggests that product velocity, not marketing spend alone, is driving adoption.
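The two growth figures are consistent with each other, which a quick compounding check shows. The sketch below assumes a constant monthly rate across the three months, which is a simplification; the 400,000 starting base is only implied by dividing 2 million by 5 and is not a reported number.

```python
def compound_growth(monthly_rate: float, months: int) -> float:
    """Total growth multiple after compounding a constant monthly rate."""
    return (1 + monthly_rate) ** months


def implied_monthly_rate(multiple: float, months: int) -> float:
    """Constant monthly rate needed to reach a given total multiple."""
    return multiple ** (1 / months) - 1


# 70% month-over-month, compounded over three months.
print(round(compound_growth(0.70, 3), 3))      # 4.913

# Monthly rate implied by a 5x increase over three months.
print(round(implied_monthly_rate(5, 3), 3))    # 0.71

# User base three months ago, as implied by the 5x figure.
print(2_000_000 / 5)                           # 400000.0
```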

Codex vs. Cursor vs. Claude Code: The Competitive Landscape

The three-way competition among leading AI coding platforms now maps to distinct segments with different structural advantages.

Cursor has established strong developer mindshare through deep IDE integration, primarily among individual developers and small teams. Its $20/month Pro tier has set a pricing anchor the market navigates around. Codex’s pay-as-you-go model disrupts that comparison directly — teams with variable Codex usage could pay materially less than the $20/month equivalent, while consistently high-volume teams may pay more.

Claude Code, Anthropic’s terminal-first coding agent, competes primarily on depth of reasoning and agentic capability. Recent model upgrades have given Claude Code strong performance on complex multi-step tasks. Codex is now matching this agentic approach while adding enterprise infrastructure advantages — the Plugins and Automations layer in particular gives Codex a native enterprise integration story that Claude Code doesn’t currently offer at the same level.

OpenAI’s distribution advantage is real and structural. Embedding Codex into ChatGPT Enterprise accounts that already exist bypasses the independent sales cycle Cursor and Anthropic must navigate. For enterprise procurement teams, Codex is already in the building.

Three Metrics That Will Determine Whether This Sticks

Whether this update represents a durable competitive shift depends on three data points that will become clear over the next two quarters.

Token consumption per active user. If Codex-only seats generate per-user revenue comparable to Cursor’s $20/month equivalent at comfortable enterprise utilization levels, the pay-as-you-go model is sustainable. If revenue per seat falls significantly below that benchmark, expect a floor price to appear.

MCP ecosystem adoption. The expanded MCP support creates compounding value only if enterprise teams actually build integrations. The custom-server tool search capability is particularly critical — if third-party developers publish Codex-compatible tool servers at scale, the platform value becomes self-reinforcing.

Cerebras reliability at enterprise scale. Running production coding infrastructure on non-NVIDIA hardware is a meaningful operational bet. If GPT-5.3-Codex-Spark maintains availability and latency SLAs at enterprise volumes, it validates the hardware decision and signals that OpenAI is willing to diversify its silicon stack beyond NVIDIA for production workloads. OpenAI’s infrastructure decisions have historically previewed strategic direction well before public announcements confirm it.

Engineering teams evaluating AI coding tools in 2026 should run a 60-day token consumption audit against current Cursor or GitHub Copilot spend before committing to any fixed-seat model. The Codex pay-as-you-go structure is now the most favorable entry point for teams with variable coding workloads — but the enterprise value proposition compounds only at the integrations layer, which requires actual deployment to validate.
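A token consumption audit of this kind can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the per-token rate is hypothetical (OpenAI has not published one), the usage records are made up, and the `(user, tokens)` record shape stands in for whatever export a team's tooling actually provides.

```python
from collections import defaultdict

# Hypothetical rate for illustration; no per-token price has been published.
ASSUMED_USD_PER_MILLION_TOKENS = 5.00


def monthly_cost_per_user(records, window_days=60,
                          usd_per_million=ASSUMED_USD_PER_MILLION_TOKENS):
    """Turn (user, tokens) records from an audit window into a 30-day cost per user."""
    totals = defaultdict(int)
    for user, tokens in records:
        totals[user] += tokens
    scale = 30 / window_days  # normalize the audit window to one month
    return {user: tokens / 1_000_000 * usd_per_million * scale
            for user, tokens in totals.items()}


def audit(records, licensed_seats, seat_price, window_days=60):
    """Compare projected pay-as-you-go spend with current fixed-seat spend."""
    per_user = monthly_cost_per_user(records, window_days)
    return {
        "usage_monthly_usd": sum(per_user.values()),
        "fixed_monthly_usd": licensed_seats * seat_price,
        # Users whose token bill alone would exceed the fixed seat price.
        "users_above_seat_price": sorted(
            user for user, cost in per_user.items() if cost > seat_price),
    }


# Made-up 60-day usage log: one heavy user, one light user, one idle seat.
log = [("alice", 12_000_000), ("alice", 4_000_000), ("bob", 1_000_000)]
print(audit(log, licensed_seats=3, seat_price=20.00))
```

The list of users above the seat price is the number to watch: a team dominated by such users is the high-volume case where usage billing may cost more than fixed seats.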