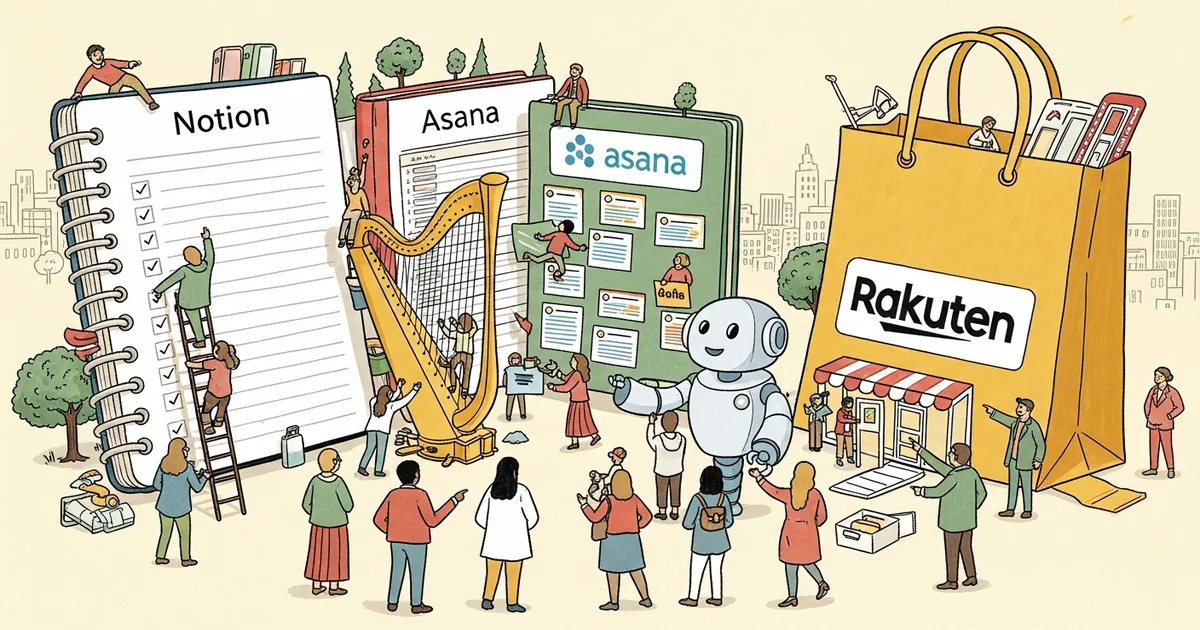

Anthropic, the AI safety company valued at $61.5 billion, launched Claude Managed Agents on April 10, 2026 — a composable API suite designed to take enterprise AI agents from prototype to production in days rather than months. Notion, Asana, Rakuten, Sentry, and Vibecode are already running on the platform, marking one of the most concentrated early-adopter cohorts in recent agentic AI history.

The launch positions Anthropic directly against OpenAI’s Assistants API and Google’s Vertex AI Agent Builder, two platforms that have struggled to convert developer enthusiasm into reliable production deployments. Anthropic claims Claude Managed Agents closes the infrastructure gap that has slowed enterprise adoption: the messy middle between a working demo and a system handling real user load.

What Claude Managed Agents Actually Does

Claude Managed Agents is not a single API endpoint. It is a composable suite — a collection of primitives that developers wire together based on their specific workflow requirements. The core components include an agent harness pre-tuned for Claude’s performance characteristics, production-grade orchestration infrastructure, and a set of managed tools covering memory, file access, and web retrieval.

The “managed” label matters. Anthropic handles the infrastructure layer — scaling, latency optimization, model routing, and uptime — so engineering teams do not have to. For enterprises that have spent quarters building internal scaffolding on top of raw model APIs, this is a meaningful offer: outsource the plumbing, keep control of the logic.

MegaOne AI tracks 139+ AI tools across 17 categories, and the pattern is consistent: most enterprise failures in agentic deployments happen not at the model layer but at orchestration. Claude Managed Agents targets exactly that failure point.

Five Early Adopters, Five Different Use Cases

Anthropic’s decision to launch with five named customers, rather than a vague waitlist, signals genuine production readiness. Each adopter represents a distinct application for Claude Managed Agents:

- Notion — Automated workspace management: content summarization, task routing, and document generation at scale across its 100+ million users.

- Asana — Work intelligence agents that monitor project health, surface blockers, and generate status updates without human prompting.

- Rakuten — Customer service and merchandising agents across Rakuten’s e-commerce and fintech properties, which serve over 1.7 billion registered members globally across 30 countries.

- Sentry — Error triage agents that analyze stack traces, correlate incidents, and draft resolution suggestions directly in developer workflows.

- Vibecode — AI-powered development acceleration using agents to handle code review, refactoring suggestions, and test generation at the repository level.

These are not proof-of-concept deployments. Rakuten’s scale — 1.7 billion registered members across financial services, travel, and e-commerce — indicates Anthropic’s infrastructure claims are being stress-tested at enterprise grade from day one.

The Composable API Architecture, Explained

Traditional agentic frameworks require developers to assemble their own stack: pick a model, add a memory layer, build tool-calling logic, handle retries, manage context windows, implement logging. Claude Managed Agents replaces this assembly process with pre-built, interoperable modules.
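To make the "assemble your own stack" burden concrete, here is a minimal sketch of just one item on that list: retry handling with exponential backoff. Everything in it is illustrative. `TransientToolError`, `call_with_retries`, and `make_flaky_tool` are hypothetical names invented for this example and are not part of any Anthropic API.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientToolError(Exception):
    """Hypothetical error type for a failure worth retrying (e.g. a rate limit)."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientToolError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Delay doubles each time: base_delay * 1, 2, 4, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


def make_flaky_tool(failures_before_success: int) -> Callable[[], str]:
    """Build a stand-in tool that fails a fixed number of times, then succeeds."""
    state = {"calls": 0}

    def tool() -> str:
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise TransientToolError("rate limited")
        return "ok"

    return tool
```

Calling `call_with_retries(make_flaky_tool(2))` succeeds on the third attempt after two backoff pauses. A real deployment would also need jitter, logging, and per-tool policies, which is the kind of scaffolding a managed layer is meant to take off a team's plate.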

The architecture is deliberately modular. Developers can deploy a simple single-agent workflow or compose multi-agent pipelines where specialized agents hand off tasks to one another. Anthropic describes this as an “agent harness” design — Claude operates within a defined harness tuned to optimize for performance and reliability, not raw capability exploration.
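The hand-off pattern described here can be sketched in a few lines of plain Python. This is a conceptual illustration only: it assumes an "agent" is simply a function that takes a task and returns it, and the `Task`, `triage_agent`, `summary_agent`, and `run_pipeline` names are hypothetical. It does not reflect how Anthropic's platform actually exposes agents.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    """Work item passed between agents; notes accumulates each agent's output."""
    text: str
    notes: List[str] = field(default_factory=list)


# An "agent" here is just a function from Task to Task, a hypothetical
# stand-in for a model-backed worker.
Agent = Callable[[Task], Task]


def triage_agent(task: Task) -> Task:
    """Classify the task with a trivial keyword rule."""
    label = "bug" if "error" in task.text.lower() else "question"
    task.notes.append(f"triage: {label}")
    return task


def summary_agent(task: Task) -> Task:
    """Record a short summary (here, just the first 40 characters)."""
    task.notes.append(f"summary: {task.text[:40]}")
    return task


def run_pipeline(agents: List[Agent], task: Task) -> Task:
    """Hand the task from one agent to the next, in order."""
    for agent in agents:
        task = agent(task)
    return task
```

A single-agent workflow is the same call with a one-element list, which is the sense in which the design is composable: the pipeline shape changes, the individual pieces do not.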

This is a direct response to enterprise AI’s most persistent complaint: model APIs are powerful but fragile in production. A single rate-limit spike, a context overflow, or an unexpected tool-call failure can cascade into user-facing errors. The managed infrastructure absorbs those failure modes before they reach the application layer.
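Context overflow is a good example of a failure mode that teams otherwise guard against by hand. The sketch below shows one common approach, dropping the oldest messages until the history fits a budget. It assumes a deliberately crude token estimate (one token per word), and the function names are hypothetical. How the managed platform handles overflow internally is not described in the source.

```python
from typing import List


def estimate_tokens(message: str) -> int:
    """Crude proxy: one token per whitespace-separated word (an assumption)."""
    return len(message.split())


def trim_history(messages: List[str], budget: int) -> List[str]:
    """Keep the most recent messages whose combined estimate fits the budget."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

With a history of `["one two three", "four five", "six"]` and a budget of 3, only the two newest messages survive. Production systems typically use a real tokenizer and summarize dropped turns rather than discarding them outright.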

The Prototype-to-Production Speed Claim

Anthropic’s headline claim is speed: Claude Managed Agents can take a working prototype to a production deployment in days, not months. That is a specific enough claim to scrutinize.

The bottleneck in traditional enterprise agent deployments is not model capability — it is infrastructure readiness. Teams spend weeks configuring autoscaling, setting up monitoring, building fallback logic, and security-reviewing data flows. If Claude Managed Agents genuinely abstracts those concerns, the days-not-months timeline is plausible for teams already familiar with Anthropic’s API surface.

The composable design also reduces integration time. Rather than building custom connectors for every tool, developers access standardized integrations through Anthropic’s managed layer. According to Anthropic’s documentation, the platform handles authentication, rate limiting, and tool-call reliability — three of the top engineering-hours sinks in agentic deployments.

Claude Managed Agents vs. OpenAI Assistants vs. Google Vertex AI

Three major platforms now compete for enterprise agent deployments. The differences are architectural, not cosmetic.

| Platform | Model | Infrastructure | Composability | Enterprise Fit |
|---|---|---|---|---|
| Claude Managed Agents | Claude (Anthropic) | Fully managed | Composable API suite | High: production SLAs, cloud-agnostic |
| OpenAI Assistants API | GPT-4o, o3 | Partially managed | Fixed tool set | Medium: developer-first, reliability gaps noted |
| Google Vertex AI Agents | Gemini | GCP-integrated | Workflow builder + API | High, but with GCP-native lock-in |

OpenAI’s Assistants API launched in late 2023 with significant fanfare, but developer feedback has consistently cited reliability gaps and limited composability. OpenAI’s enterprise strategy in 2025 leaned toward high-profile direct deals rather than platform maturity, leaving the developer infrastructure story underserved.

Google Vertex AI Agents has the advantage of deep GCP integration — ideal for organizations already running workloads on Google’s cloud. Its limitation is the same as any cloud-native product: enterprises not on GCP face significant migration overhead to access the full stack.

Claude Managed Agents enters with cloud-agnostic positioning — accessible via API regardless of underlying infrastructure. For enterprises on AWS or Azure, that removes a significant adoption barrier that Vertex cannot match without a GCP commitment.

What “Managed” Means in Enterprise Procurement Terms

The word “managed” carries specific weight in enterprise contracts. It signals shared operational responsibility: Anthropic commits to uptime, performance, and security standards so the customer’s engineering team does not have to maintain a parallel ops function.

For large organizations, the calculus is straightforward. The cost of maintaining bespoke agentic infrastructure — engineering hours, incident response, compliance review — routinely exceeds the cost of a managed API contract. Reliability requirements are strict, and the failure modes of poorly maintained agent systems are increasingly visible to end users and regulators.

Rakuten’s participation is particularly telling. A company managing 1.7 billion registered members across financial services, travel, and digital content has zero tolerance for infrastructure that breaks under load. The fact that Rakuten went production at launch — rather than running an extended closed pilot — suggests the reliability story withstood internal enterprise scrutiny.

As Anthropic’s earlier agent infrastructure work revealed, the company has been building toward managed agentic deployment for over a year. The 2026 source code incident inadvertently confirmed the depth of their internal agent tooling — Claude Managed Agents is the productized version of that infrastructure.

The Competitive Pressure Behind the April Timing

This launch does not exist in a vacuum. OpenAI, Google, and Amazon are all accelerating enterprise agent offerings in 2026. Anthropic’s April timing is a deliberate stake in the ground: establish production-ready credibility before the market consolidates around two or three dominant platforms.

The five-customer launch cohort spans productivity software, commerce at scale, developer tooling, and coding, and that breadth is intentional. Anthropic is signaling horizontal applicability rather than vertical specialization, which is the positioning required to compete with platform giants that bundle agent infrastructure with existing enterprise relationships.

The broader enterprise pushback against opaque AI systems also plays in Anthropic’s favor. The company’s Constitutional AI foundation and documented safety methodology resonate with procurement teams navigating increasing regulatory scrutiny around autonomous systems in the EU and US. A managed infrastructure contract from a safety-oriented vendor is an easier sell to legal and compliance than a self-managed deployment on a model with less documented behavior.

According to Gartner’s 2025 enterprise AI survey, 67% of large enterprises cited “reliability in production” as the top barrier to agentic AI adoption — not model capability, not cost. Claude Managed Agents is a direct product response to that finding.

The bottom line: Claude Managed Agents is the first enterprise agent platform that pairs a production-grade infrastructure commitment with a credible, named early-adopter cohort. Enterprises evaluating agentic deployments in Q2 2026 should treat it as a primary consideration — not because Anthropic says so, but because Rakuten, Asana, and Notion already made that call with production workloads.
