A nanophotonics PhD student has designed PRISM, a photonic chip architecture that replaces the GPU-based key-value cache scanning bottleneck in large language model inference with optical broadcast computation. The design targets constant-time, O(1) block selection regardless of context length, a fundamental improvement over the O(N) linear scaling of current GPU-based approaches. At one million tokens of context, the photonic approach is projected to be approximately 944 times faster and to use 18,000 times less energy than the equivalent scan on an NVIDIA H100.

The bottleneck PRISM addresses is specific and well-understood. Block-sparse attention methods like Quest and RocketKV reduce the number of key-value blocks fetched from memory during each decode step, but they still require scanning all N block signatures stored in high-bandwidth memory (HBM) to determine which blocks are relevant. On an H100 GPU processing one-million-token contexts, this scan takes approximately 8.5 microseconds per query. In batch serving scenarios where hundreds of queries run simultaneously, this scanning cost becomes the dominant performance constraint.
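To make the scanning cost concrete, here is a minimal sketch of the selection step in plain Python. It is not the actual Quest or RocketKV algorithm: the function name `select_blocks`, the use of one summary vector per block as its "signature", and the dot-product relevance score are all assumptions made for illustration. What it shows is the shape of the work, which is one pass over all N signatures for every query.

```python
import heapq
import random


def select_blocks(query, signatures, k):
    """Return the indices of the k highest-scoring blocks, in ascending order.

    Hypothetical sketch: each signature is one summary vector per KV block,
    and relevance is a plain dot product with the query. Real methods use
    their own signature formats and scoring rules.
    """
    scored = []
    for idx, sig in enumerate(signatures):  # touches all N signatures: O(N)
        score = sum(q * s for q, s in zip(query, sig))
        scored.append((score, idx))
    top = heapq.nlargest(k, scored)  # keep only the k best-scoring blocks
    return sorted(idx for _, idx in top)


# Toy data: 1,000 blocks with 8-dimensional signatures (arbitrary sizes).
random.seed(0)
dim, num_blocks = 8, 1000
signatures = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(num_blocks)]
query = [random.gauss(0, 1) for _ in range(dim)]

selected = select_blocks(query, signatures, k=4)
print("blocks to fetch:", selected)
```

Doubling the context doubles `num_blocks`, and with it the number of signatures the loop has to read. That linear growth is the cost PRISM is designed to remove.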

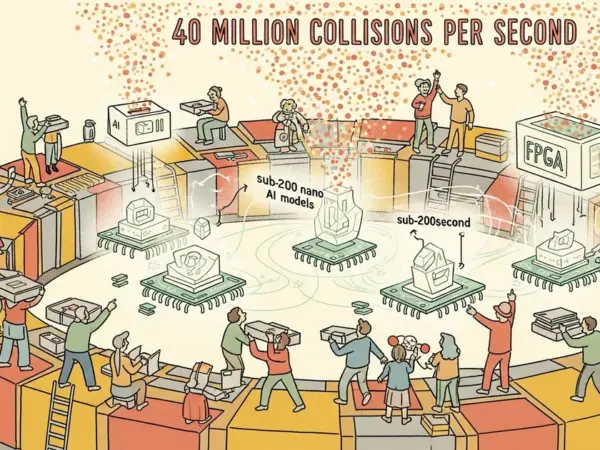

PRISM replaces the electronic scan with an optical broadcast mechanism. Instead of sequentially reading block signatures from memory and comparing them against the current query, the photonic chip broadcasts the query optically to all block signature comparators simultaneously. Each comparator performs its relevance check in parallel using photonic interference, a physical computation whose latency is set by light propagating through the chip rather than by electronic clock cycles. The result is a lookup whose latency stays constant as context length grows.
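The figures quoted in this article can be combined into a rough scaling model. The sketch below derives the photonic latency implied by the 8.5 microsecond GPU scan and the 944x speedup, then extrapolates to other context lengths. The extrapolation rests on two assumptions that the article does not confirm: that GPU scan time scales exactly linearly with token count (ignoring fixed costs such as kernel launch), and that photonic latency stays perfectly constant. The function names are hypothetical.

```python
# Figures taken from the article.
GPU_SCAN_US_AT_1M = 8.5       # H100 scan time at one million tokens, in microseconds
SPEEDUP_AT_1M = 944           # claimed photonic speedup at one million tokens
REFERENCE_TOKENS = 1_000_000

# Implied constant photonic latency in nanoseconds (about 9.0 ns).
photonic_ns = GPU_SCAN_US_AT_1M * 1000 / SPEEDUP_AT_1M


def gpu_scan_us(tokens):
    """Assumption: O(N) scan time scales linearly from the 1M-token figure."""
    return GPU_SCAN_US_AT_1M * tokens / REFERENCE_TOKENS


def implied_speedup(tokens):
    """Speedup if photonic latency is O(1) while the GPU scan is O(N)."""
    return gpu_scan_us(tokens) * 1000 / photonic_ns


print(f"implied photonic latency: {photonic_ns:.2f} ns")
for tokens in (125_000, 1_000_000, 8_000_000):
    print(f"{tokens:>9,} tokens: GPU {gpu_scan_us(tokens):6.2f} us, "
          f"speedup {implied_speedup(tokens):7.0f}x")
```

Under these assumptions the advantage grows in proportion to context length, so the 944x figure is specific to the one-million-token case rather than a fixed property of the design.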

The energy reduction is equally significant. GPU-based memory scanning requires moving data from HBM through the memory hierarchy to compute units, a process that consumes energy proportional to the amount of data moved. Photonic computation avoids this data movement entirely by performing the comparison in the optical domain, where the energy cost is determined by the laser power required for the broadcast rather than by the number of memory accesses. At 18,000 times less energy per operation, the photonic approach could make million-token context windows economically viable for real-time serving workloads.

The design is currently theoretical — PRISM exists as a chip architecture specification rather than a fabricated device. Moving from design to silicon requires photonic foundry fabrication, integration with electronic control systems, and validation that the performance claims hold in physical hardware. However, the architecture addresses a genuine bottleneck that the GPU industry has not solved through incremental improvements, and photonic computing for AI inference has attracted increasing attention from both academic researchers and companies like Lightmatter and Luminous Computing.