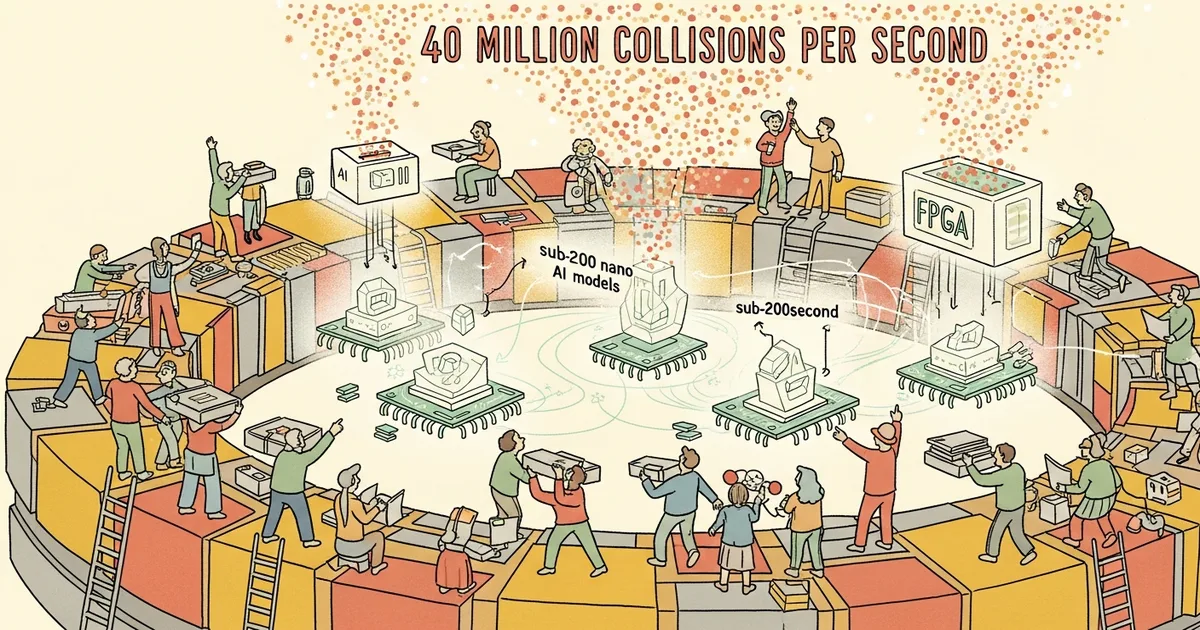

- CERN’s Level-1 Trigger system uses approximately 1,000 FPGAs to filter Large Hadron Collider collision data in under 50 nanoseconds, rejecting over 99.7 percent of events in real time.

- The AXOL1TL anomaly detection algorithm runs directly on FPGA hardware at the detector edge, flagging rare collision events whose signatures fall outside the topologies expected from the Standard Model.

- The open-source hls4ml tool translates machine learning models from PyTorch and TensorFlow into synthesizable C++ code deployable on FPGAs, SoCs, and ASICs.

- The LHC generates approximately 40,000 exabytes of raw sensor data per year, with peak data streams reaching hundreds of terabytes per second.

What Happened

CERN has deployed ultra-compact AI models directly onto field-programmable gate arrays (FPGAs) to filter particle collision data from the Large Hadron Collider in real time. As reported by The Register on March 22, 2026, the approach bypasses conventional CPU- and GPU-based computing for this first filtering stage, embedding machine learning inference in the programmable hardware that sits at the detector edge. Thea Aarrestad, Assistant Professor of Particle Physics at ETH Zurich and a specialist in anomaly detection for optimizing LHC data selection, has led work on these hardware-accelerated inference systems.

The technique addresses one of the most extreme data challenges in physics: the LHC produces roughly 40,000 exabytes of raw sensor data per year, with peak throughput reaching hundreds of terabytes per second. No feasible storage system could hold this volume and no conventional computing system could process it, so the data must be filtered in real time at the hardware level.

Why It Matters

Traditional trigger systems at particle colliders rely on programmable logic with fixed thresholds to decide which collision events to keep. CERN’s approach replaces portions of this logic with trained machine learning models that can identify anomalous physics signatures, potentially catching rare events that fixed-threshold systems would miss. This matters because discoveries in particle physics often hinge on detecting statistically improbable events buried in trillions of collisions.
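The difference between a fixed cut and a learned anomaly score can be illustrated with a toy example. The sketch below is not AXOL1TL: the two event features, both cut values, and the simple distance-from-background score are hypothetical stand-ins chosen only to show how an event with unremarkable energy but an unusual shape can slip past a threshold yet still stand out to an anomaly detector.

```python
"""Toy contrast between a fixed-threshold trigger and an anomaly-score trigger.

Illustrative only: the two features, both cut values, and the distance-based
score are hypothetical stand-ins, not the model AXOL1TL actually runs.
"""
import math
from statistics import mean, pstdev

# Hypothetical per-event features: (total energy in GeV, number of jets).
# This small "background" sample stands in for ordinary Standard Model events.
BACKGROUND = [(50.0, 2), (55.0, 3), (48.0, 2), (60.0, 3), (52.0, 2), (58.0, 3)]


def fixed_threshold_trigger(event, energy_cut=100.0):
    """Keep the event only if its total energy exceeds a hand-set cut."""
    energy, _ = event
    return energy > energy_cut


def fit_background(sample):
    """Return (mean, population std dev) for each feature of the background."""
    return [(mean(col), pstdev(col)) for col in zip(*sample)]


def anomaly_score(event, stats):
    """Euclidean distance from the background mean, in standardized units."""
    return math.sqrt(
        sum(((x - mu) / sigma) ** 2 for x, (mu, sigma) in zip(event, stats))
    )


def anomaly_trigger(event, stats, score_cut=5.0):
    """Keep the event if it looks unlike the background, whatever its energy."""
    return anomaly_score(event, stats) > score_cut


if __name__ == "__main__":
    stats = fit_background(BACKGROUND)
    odd_event = (70.0, 8)       # modest energy, unusually many jets
    typical_event = (54.0, 2)
    print(fixed_threshold_trigger(odd_event))      # False: the energy cut misses it
    print(anomaly_trigger(odd_event, stats))       # True
    print(anomaly_trigger(typical_event, stats))   # False
```

A real trigger model learns what "ordinary" looks like from far richer detector inputs, but the decision has the same shape: compute a score per event and keep the event if the score crosses a cut.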

The work also has implications beyond particle physics. Because hls4ml is open source, any researcher can use it to convert standard machine learning models into hardware-deployable code, opening the door to similar ultra-low-latency inference in fields such as autonomous systems, medical devices, and telecommunications.

Technical Details

CERN’s Level-1 Trigger system processes approximately 10 terabytes per second of data arriving via fiber optic cables from the detector. The system’s roughly 1,000 FPGAs perform digital reconstruction and filtering within a 50-nanosecond decision window. This is orders of magnitude faster than GPU-based inference, which typically operates in the millisecond range.
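The figures above imply a tight per-device budget. The following back-of-envelope sketch uses the article's numbers (10 TB/s, about 1,000 FPGAs, a 50 ns window, millisecond-range GPU latency) plus three assumptions that do not come from the article: load spread evenly across devices, a hypothetical 240 MHz FPGA clock, and the nominal 25 ns LHC bunch spacing.

```python
"""Back-of-envelope budget for the Level-1 Trigger figures quoted above.

Article figures: ~10 TB/s input, ~1,000 FPGAs, 50 ns decision window,
~1 ms GPU latency. Assumptions: load is spread evenly across FPGAs, the
fabric clock is a hypothetical 240 MHz, and bunches cross every 25 ns.
"""

TOTAL_RATE_TB_PER_S = 10.0
NUM_FPGAS = 1_000
DECISION_WINDOW_NS = 50.0
GPU_LATENCY_NS = 1_000_000.0   # 1 ms, the "millisecond range" in the text
CLOCK_MHZ = 240.0              # assumption, not from the article
BUNCH_SPACING_NS = 25.0        # assumption: nominal LHC bunch spacing


def per_fpga_rate_gb_per_s(total_tb_per_s, n_fpgas):
    """Average input bandwidth per FPGA, in GB/s."""
    return total_tb_per_s * 1_000.0 / n_fpgas


def cycles_in_window(window_ns, clock_mhz):
    """Whole clock cycles that fit in the window (ns * MHz / 1000 = cycles)."""
    return int(window_ns * clock_mhz // 1_000)


def bunch_crossings_in_window(window_ns, spacing_ns):
    """Bunch crossings that elapse during one decision window."""
    return int(window_ns // spacing_ns)


def speedup_vs(other_latency_ns, window_ns):
    """How many times longer another system's latency is than the window."""
    return other_latency_ns / window_ns


if __name__ == "__main__":
    print(per_fpga_rate_gb_per_s(TOTAL_RATE_TB_PER_S, NUM_FPGAS))           # 10.0
    print(cycles_in_window(DECISION_WINDOW_NS, CLOCK_MHZ))                 # 12
    print(bunch_crossings_in_window(DECISION_WINDOW_NS, BUNCH_SPACING_NS))  # 2
    print(speedup_vs(GPU_LATENCY_NS, DECISION_WINDOW_NS))                  # 20000.0
```

Under these assumptions each decision gets only about a dozen clock cycles. That is why such designs are typically fully pipelined: each stage completes in one cycle and a new input can enter every cycle, so throughput is set by the clock rate rather than by the full decision latency.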

The AXOL1TL algorithm runs directly on these FPGAs, analyzing detector signals and flagging collision events whose signatures fall outside expected Standard Model topologies as scientifically promising. The algorithm rejects over 99.7 percent of incoming events. After Level-1 filtering, a High Level Trigger system comprising 25,600 CPUs and 400 GPUs reduces the stream further, cutting the roughly 100,000 events per second that pass Level-1 down to about 1,000 interesting collisions per second.
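The two-stage reduction can be checked with simple rate arithmetic. This sketch assumes collisions arrive at the nominal 40 MHz LHC bunch-crossing rate, which the article does not state; the 100 kHz and 1 kHz stage outputs come from the text.

```python
"""Rate arithmetic for the two-stage trigger described above.

Assumption (not stated in the article): collisions arrive at the nominal LHC
bunch-crossing rate of 40 MHz. The ~100 kHz Level-1 output and ~1 kHz High
Level Trigger output follow the figures quoted in the text.
"""

BUNCH_CROSSING_HZ = 40e6   # assumption
L1_OUTPUT_HZ = 100e3       # events/s handed to the High Level Trigger
HLT_OUTPUT_HZ = 1e3        # events/s kept for offline analysis


def rejection_fraction(rate_in_hz, rate_out_hz):
    """Fraction of events a stage discards."""
    return 1.0 - rate_out_hz / rate_in_hz


if __name__ == "__main__":
    l1 = rejection_fraction(BUNCH_CROSSING_HZ, L1_OUTPUT_HZ)
    hlt = rejection_fraction(L1_OUTPUT_HZ, HLT_OUTPUT_HZ)
    print(f"Level-1 rejects {l1:.2%}")   # Level-1 rejects 99.75%
    print(f"HLT rejects {hlt:.2%}")      # HLT rejects 99.00%
    kept_one_in = BUNCH_CROSSING_HZ / HLT_OUTPUT_HZ
    print(f"Overall, about 1 in {kept_one_in:,.0f} collisions is kept")  # 1 in 40,000
```

The 99.75 percent Level-1 figure under this assumption is consistent with the "over 99.7 percent" quoted above.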

To fit machine learning models onto resource-constrained FPGAs, the team applied quantization, pruning, parallelization, and knowledge distillation. The hls4ml transpiler converts models written in PyTorch or TensorFlow into synthesizable C++ that can be deployed on FPGAs, systems-on-chip, or custom ASICs. Notably, tree-based models outperformed deep learning alternatives in this application. The processing architecture also departs from the sequential von Neumann model: it follows a dataflow design in which each computation fires as soon as its input data become available.
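Two of the compression steps named above, pruning and quantization, can be sketched on a single small dense layer. The helpers below are hypothetical and are not hls4ml's API; `quantize` mimics a signed fixed-point number in the spirit of the HLS type `ap_fixed<W, I>` (W total bits, I integer bits including the sign), but always rounds to nearest and saturates, whereas real HLS types let the designer choose those modes. Knowledge distillation and parallelization are not shown.

```python
"""Sketch of two FPGA compression steps: magnitude pruning and fixed-point
quantization, applied to one small dense layer.

Hypothetical helpers, not hls4ml's API. `quantize` mimics a signed fixed-point
type with `total_bits` bits, `int_bits` of which (sign included) sit before
the binary point. It rounds to nearest and saturates on overflow.
"""


def quantize(x, total_bits=8, int_bits=3):
    scale = 2 ** (total_bits - int_bits)              # one LSB is worth 1/scale
    lo, hi = -(2 ** (total_bits - 1)), 2 ** (total_bits - 1) - 1
    q = max(lo, min(hi, round(x * scale)))            # integer code, saturated
    return q / scale


def prune_by_magnitude(weights, sparsity):
    """Zero out (at least) the smallest `sparsity` fraction of weights."""
    magnitudes = sorted(abs(w) for row in weights for w in row)
    k = int(len(magnitudes) * sparsity)
    if k == 0:
        return [row[:] for row in weights]
    threshold = magnitudes[k - 1]                     # ties may prune a few extra
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in weights]


def dense(weights, x):
    """y = W x, skipping zero weights."""
    return [sum(w * xi for w, xi in zip(row, x) if w != 0.0) for row in weights]


def count_multipliers(weights):
    """Nonzero weights = multipliers a fully unrolled design must build."""
    return sum(1 for row in weights for w in row if w != 0.0)


if __name__ == "__main__":
    W = [[0.82, -0.03, 0.41], [-0.27, 0.05, -1.13]]
    pruned = prune_by_magnitude(W, sparsity=0.5)
    Wq = [[quantize(w) for w in row] for row in pruned]
    print(Wq)                          # [[0.8125, 0.0, 0.40625], [0.0, 0.0, -1.125]]
    print(count_multipliers(W), "->", count_multipliers(Wq))   # 6 -> 3
    print(dense(Wq, [1.0, 2.0, 0.5]))  # [1.015625, -0.5625]
```

In a fully unrolled hardware layer every nonzero weight costs a multiplier, and synthesis can drop multipliers for constant-zero weights, so pruning half the weights roughly halves that cost. Quantizing to 8-bit fixed point then shrinks each remaining multiplier compared with floating-point arithmetic.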

Who’s Affected

Particle physicists across CERN’s Worldwide LHC Computing Grid, a network of 170 sites in 42 countries with a combined 1.4 million processor cores, stand to benefit from improved trigger-level filtering. Better real-time anomaly detection increases the chance of capturing rare physics events that could point to phenomena beyond the Standard Model.

The broader machine learning and embedded systems communities gain access to hls4ml as an open-source tool for deploying models at nanosecond-scale latency. Hardware engineers working on edge AI applications in aerospace, automotive, and medical imaging face similar constraints and can apply the same quantization and synthesis techniques.

What’s Next

CERN’s High Luminosity LHC upgrade, planned for the coming years, will increase the data rate from the current 4 terabytes per second to a projected 63 terabytes per second. This roughly 16-fold increase will demand even more aggressive on-chip filtering and may require moving from FPGAs to custom ASICs for additional performance gains. The scalability of hls4ml and the AXOL1TL approach at these higher data rates remains an open engineering challenge that the team is actively researching.
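The scale of the jump is easy to quantify. The sketch below reproduces the growth factor from the article's 4 and 63 terabyte-per-second figures and, purely for illustration, assumes the device count stayed at roughly 1,000 with load spread evenly; neither assumption is a stated plan.

```python
"""Scaling arithmetic for the High Luminosity LHC figures quoted above.

Assumption for illustration: the device count stays at the article's roughly
1,000 FPGAs and load is spread evenly. Neither is a stated plan.
"""

CURRENT_TB_PER_S = 4.0
HL_LHC_TB_PER_S = 63.0
NUM_DEVICES = 1_000   # hypothetical: unchanged device count


def growth_factor(current, future):
    """Ratio of future to current data rate."""
    return future / current


def per_device_gb_per_s(total_tb_per_s, n_devices):
    """Average bandwidth each device would have to absorb, in GB/s."""
    return total_tb_per_s * 1_000.0 / n_devices


if __name__ == "__main__":
    print(growth_factor(CURRENT_TB_PER_S, HL_LHC_TB_PER_S))     # 15.75
    print(per_device_gb_per_s(CURRENT_TB_PER_S, NUM_DEVICES))   # 4.0
    print(per_device_gb_per_s(HL_LHC_TB_PER_S, NUM_DEVICES))    # 63.0
```

If the hardware footprint could not grow, each device would need to absorb close to 16 times more data within the same decision window, which is the kind of pressure that motivates considering custom ASICs.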
