- Google DeepMind introduced an experimental Gemini-powered AI pointer that captures both visual and semantic context around the cursor.

- Two demos are live in Google AI Studio: image editing and map place-finding, both operable by pointing and speaking.

- A deeper integration, Magic Pointer, is rolling out inside Chrome; a further integration is planned for Googlebook, Google’s new Gemini-powered laptop line.

- The system targets the workflow friction of switching to a separate AI chat interface and re-describing what’s on screen.

What Happened

Google DeepMind introduced an experimental AI-enabled mouse pointer powered by Gemini that understands not just where the cursor is but what it is pointing at and why that matters, MarkTechPost reported on Wednesday. Two demos are live in Google AI Studio today: image editing and map place-finding, both operable by pointing and speaking. A deeper integration called Magic Pointer is rolling out inside Chrome, and a further integration is planned for Googlebook, Google’s new Gemini-powered laptop line, which was announced the same week. The research and demos are detailed on the DeepMind blog.

Why It Matters

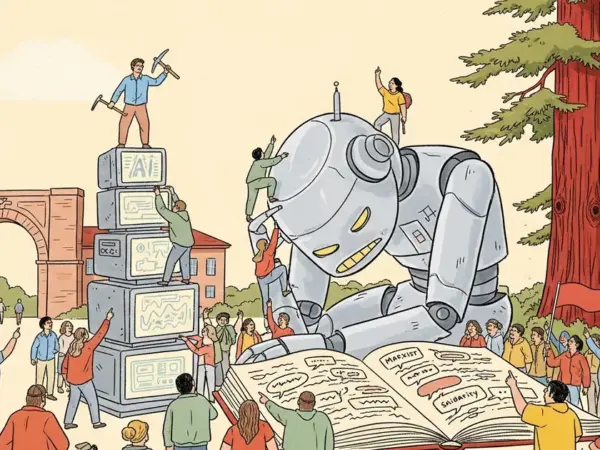

The pointer concept addresses a well-known friction in current AI workflows. Typical AI assistants live in their own window or chat interface, so users have to leave their current context, describe what they were doing, query the assistant, and paste the result back. The pointer instead surfaces AI awareness in place, with the cursor as the interaction anchor. Architecturally, this moves AI interfaces from a text-in, text-out model to a context-aware system that knows what the user is currently looking at.

This is, in effect, DeepMind’s attempt to make AI a part of the operating-system interaction layer rather than a separate application. Adobe’s recent work on context-aware AI inside Creative Cloud, Apple’s Apple Intelligence framework, and Microsoft’s Recall (controversial when first announced in 2024) all represent adjacent attempts at the same problem.

Technical Details

The system is in an experimental stage. The two Google AI Studio demos let users point at elements on screen and speak commands, with Gemini interpreting both the visual content around the pointer and the spoken query together. Magic Pointer extends this into Chrome — the user’s browser becomes a surface where the pointer can extract context from any web page being viewed. The Googlebook integration suggests deeper OS-level hooks, though Google has not yet detailed the implementation.
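Google has not published how the demos combine pointer position and speech, so the following is only a minimal sketch of the general idea: bundle a screen region around the cursor with the transcribed query so both can be interpreted together. Every name here (`PointerContext`, `crop_box_around`, `build_context`), the 200-pixel radius, and the default screen size are illustrative assumptions, not part of any Google API.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class PointerContext:
    """Hypothetical bundle a pointer-aware assistant might receive."""
    x: int                                # cursor x in screen pixels
    y: int                                # cursor y in screen pixels
    crop_box: Tuple[int, int, int, int]   # (left, top, right, bottom) around the cursor
    spoken_query: str                     # transcript of the user's spoken command


def crop_box_around(x, y, screen_w, screen_h, radius=200):
    """Box centred on the cursor, clamped so it never leaves the screen."""
    left = max(0, x - radius)
    top = max(0, y - radius)
    right = min(screen_w, x + radius)
    bottom = min(screen_h, y + radius)
    return (left, top, right, bottom)


def build_context(x, y, spoken_query, screen_w=1920, screen_h=1080):
    """Pair the visual region near the pointer with the spoken query."""
    box = crop_box_around(x, y, screen_w, screen_h)
    return PointerContext(x, y, box, spoken_query.strip())
```

In a real system the crop box would be used to cut a screenshot region that is sent to the model alongside the query; that step is omitted here because it depends on platform capture APIs the article does not describe.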

Per the DeepMind blog post, the experimental principles include cursor-awareness as a continuous signal rather than a discrete event, multimodal interpretation of visual context plus spoken query, and execution of next steps inside the surface where the user is already working. The author of MarkTechPost’s coverage, Michal Sutter, framed the gap directly: current LLM interfaces are text-in, text-out, and have no awareness of the screen state around them.
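To make the "continuous signal rather than a discrete event" idea concrete, here is a hedged sketch that keeps a short rolling window of cursor samples and derives a focus point from them, instead of reacting to a single click. The `CursorTrail` class, the 1.5-second window, and the averaging rule are assumptions chosen for illustration; the DeepMind post does not describe its implementation.

```python
from collections import deque


class CursorTrail:
    """Keeps recent cursor samples so context reflects motion, not one click."""

    def __init__(self, window_seconds=1.5):
        self.window_seconds = window_seconds
        self._samples = deque()  # each entry is (timestamp, x, y)

    def add(self, t, x, y):
        """Record a sample and drop any older than the window."""
        self._samples.append((t, x, y))
        while self._samples and t - self._samples[0][0] > self.window_seconds:
            self._samples.popleft()

    def focus_point(self):
        """Mean position over the window, or None if nothing was recorded."""
        if not self._samples:
            return None
        n = len(self._samples)
        mean_x = sum(s[1] for s in self._samples) / n
        mean_y = sum(s[2] for s in self._samples) / n
        return (mean_x, mean_y)
```

A caller would feed `add` from the platform's pointer-move stream and read `focus_point` when a spoken query arrives, so the region of interest reflects where the pointer has been hovering rather than one instantaneous position.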

Who’s Affected

End users of Chrome, Google AI Studio, and Googlebook gain the most immediate access. Apple, Microsoft, and Adobe, all of which are building context-aware AI into their own products, face renewed competitive pressure to ship comparable in-place AI interfaces. Developers building AI-native applications gain a research reference and, potentially, an API that exposes the cursor-context primitives. For accessibility, the effect could be positive or negative depending on how the pointer interacts with screen readers and other assistive technologies.

What’s Next

The pointer remains experimental, and DeepMind has not committed to a general-availability date or an API timeline. The Google AI Studio demos are live today, Magic Pointer is rolling out in Chrome, and the Googlebook integration is planned but not yet detailed. Expect updates at Google I/O and at upcoming Pixel and Googlebook product events. Apple’s WWDC, scheduled for June, is a likely competitive response window.