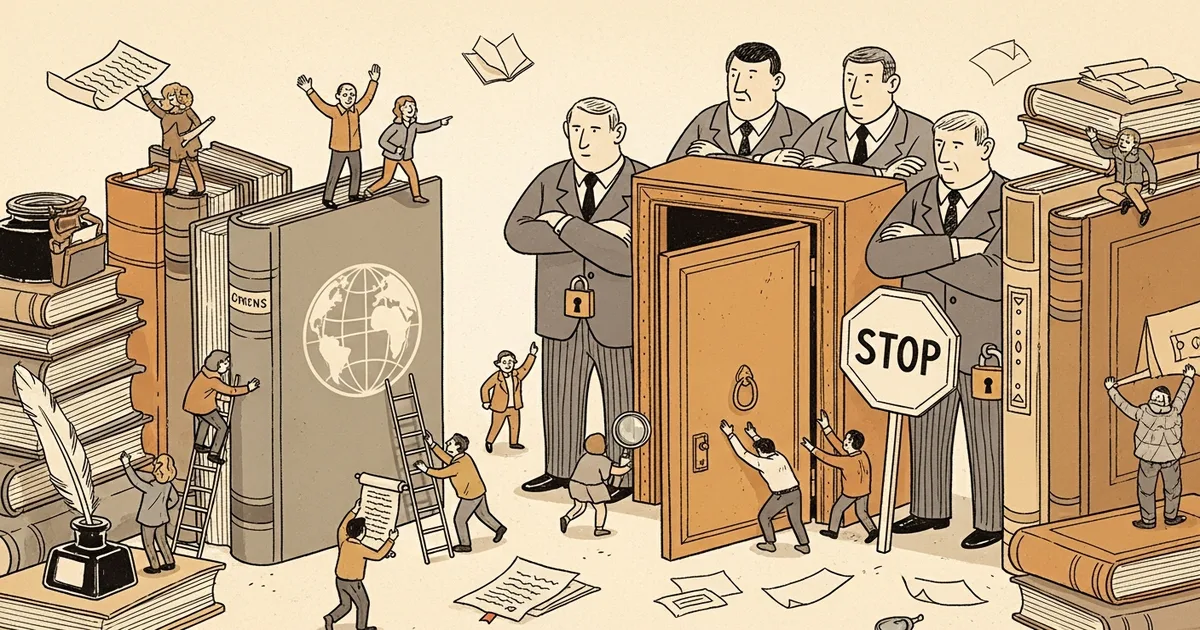

The Electronic Frontier Foundation published a formal warning on March 16, 2026, arguing that major news publishers blocking the Internet Archive’s web crawlers will not curb AI data harvesting but will eliminate a decades-long public record of how news was originally published. The post, written by EFF staff writer Joe Mullin, names The New York Times as the primary actor and notes The Guardian appears to be following suit.

- The New York Times is blocking Internet Archive crawlers using technical measures that go beyond standard robots.txt rules.

- The Wayback Machine holds more than one trillion archived web pages and has preserved news sites since the mid-1990s.

- Publishers cite AI training concerns as justification, but the Internet Archive is a nonprofit with no commercial AI operations.

- The EFF argues that archiving constitutes fair use, citing court precedents including the Google Books digitization case.

What Happened

In a March 16, 2026 post for EFF’s Deeplinks blog, Joe Mullin detailed how The New York Times has begun blocking the Internet Archive from crawling its website. The technical measures the Times is using go beyond robots.txt, the conventional web protocol for directing automated crawlers, and instead apply more aggressive blocking that prevents the Archive’s preservation infrastructure from capturing the site. The Guardian also appears to have begun similar blocking, according to Mullin’s account.

The Internet Archive has been online since the mid-1990s and operates the Wayback Machine, which now stores more than one trillion archived web pages. Journalists, researchers, legal professionals, and courts use it routinely to retrieve earlier versions of web content, including news articles that have since been edited, quietly corrected, or removed entirely.

Why It Matters

The Wayback Machine has functioned for nearly three decades as the primary public record of how the web looked at specific points in time. For news content specifically, archived pages often represent the only independent documentation of how a story appeared before post-publication changes were made.

“Those archived pages are often the only reliable record of how stories were originally published,” Mullin wrote. “In many cases, articles get edited, changed, or removed — sometimes openly, sometimes not. The Internet Archive often becomes the only source for seeing those changes.”

The move by publishers follows an escalation in disputes over AI training data. The New York Times filed suit against OpenAI and Microsoft in 2023, alleging that training large language models on copyrighted news content constitutes infringement. Several other publishers filed similar claims. Those lawsuits remain active as of early 2026.

Technical Details

The method the Times is using to block the Archive goes beyond the robots.txt standard that has governed web crawling since the 1990s. A robots.txt file lets a website operator state which automated agents may access which paths, but compliance is voluntary: the file signals the operator’s wishes and does not itself enforce them. According to Mullin, the Times is using measures that actively block the Archive’s crawlers rather than simply signaling non-permission under the conventional protocol.
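How a cooperating crawler interprets robots.txt can be sketched with Python's standard-library parser. The file contents, the `archive.org_bot` user-agent string, and the example.com URL below are illustrative assumptions, not the Times's actual configuration, and the sketch does not model the stronger blocking Mullin describes, whose mechanics are not detailed here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration only; not any publisher's real file.
ROBOTS_TXT = """\
User-agent: archive.org_bot
Disallow: /

User-agent: *
Allow: /
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file as an iterable of lines, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    article = "https://example.com/2026/03/16/some-article"
    print(may_fetch(ROBOTS_TXT, "archive.org_bot", article))
    print(may_fetch(ROBOTS_TXT, "SomeOtherBot", article))
```

The check runs entirely on the crawler's side: the server publishes the file, and a well-behaved crawler chooses to honor it. Blocking that goes beyond robots.txt has to be enforced by the server instead.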

The Wayback Machine’s current scale, more than one trillion pages archived, reflects its role as a broad-spectrum web archive. The Archive crawls public web content broadly and makes it available to the public without putting it to commercial use.

The EFF cites established fair use precedent as the legal basis for the Archive’s activities. Mullin references the outcome of the Google Books litigation, in which courts found that digitizing books to create searchable indexes constituted fair use, a precedent the EFF argues applies to nonprofit archiving of public web content. On the separate question of AI training, Mullin wrote, “There’s a strong case that such training is fair use,” while distinguishing commercial AI operations from archival preservation.

Who’s Affected

The most direct impact falls on journalists and researchers who rely on archived news pages as evidentiary records — particularly in cases where publishers have altered or removed content after publication. Courts have used Wayback Machine captures as exhibits in legal proceedings where original publication dates or editorial decisions were disputed.

The Internet Archive itself operates as a 501(c)(3) nonprofit. It does not develop or sell AI systems and does not license its archive to commercial AI developers as a matter of course. As Mullin noted, the organization did not initiate the AI copyright dispute and has no commercial stake in it.

Developers who use the Wayback Machine’s API for research, digital humanities projects, and media analysis tools will also lose access to Times and Guardian content if the blocking persists, and to more if it spreads to other publishers.
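For a sense of what such tools depend on, here is a minimal sketch of a lookup against the Wayback Machine's public availability endpoint. The helper names and the sample response are illustrative assumptions; the sample mirrors the documented response shape but is not a real capture record, and the sketch builds the request URL without sending it.

```python
import json
from typing import Optional
from urllib.parse import urlencode

# Public availability endpoint of the Wayback Machine.
AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_query(url: str, timestamp: Optional[str] = None) -> str:
    """Build an availability query; timestamp is an optional YYYYMMDD[hhmmss] string."""
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp
    return f"{AVAILABILITY_ENDPOINT}?{urlencode(params)}"


def closest_snapshot(response_text: str) -> Optional[str]:
    """Return the closest snapshot URL from a response body, or None if none is available."""
    data = json.loads(response_text)
    closest = data.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest.get("url")
    return None


# Hypothetical response body, shaped like the endpoint's JSON output.
SAMPLE_RESPONSE = json.dumps({
    "archived_snapshots": {
        "closest": {
            "available": True,
            "url": "http://web.archive.org/web/20060101000000/http://example.com/",
            "timestamp": "20060101000000",
            "status": "200",
        }
    }
})

if __name__ == "__main__":
    print(build_query("example.com", "20060101"))
    print(closest_snapshot(SAMPLE_RESPONSE))
```

When a page has never been captured, the `archived_snapshots` object comes back empty and `closest_snapshot` returns `None`, which is the result such tools would see for pages the Archive was unable to crawl.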

What’s Next

The EFF has not indicated plans to file litigation over the archival blocking. Mullin’s argument is that publishers are misdirecting their response: the correct target for AI training grievances is AI companies, not nonprofit libraries. “Organizations like the Internet Archive are not building commercial AI systems. They are preserving a record of our history,” he wrote.

The underlying publisher lawsuits against AI companies — including the Times v. OpenAI case — are still working through the courts. Their outcomes will shape how AI training data rights are defined in U.S. law, but those rulings will not directly address whether publishers can block nonprofit archivists.

The EFF warns that if the trend toward archival blocking spreads, the public record of web-based news from the 2020s onward will become increasingly sparse. “Turning off that preservation in an effort to control AI access,” Mullin wrote, “could essentially torch decades of historical documentation over a fight that libraries like the Archive didn’t start, and didn’t ask for.”