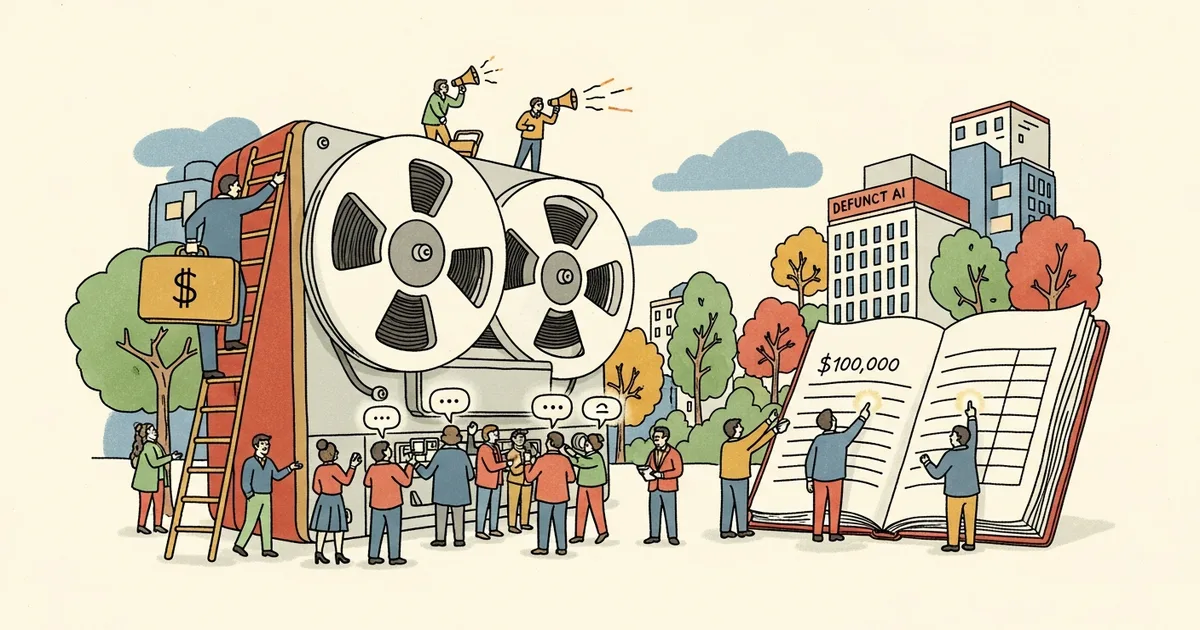

When a startup dies, the servers don’t go dark overnight. Gizmodo reported on April 17, 2026, that defunct AI startups are selling their former employees’ private conversations — Slack messages, emails, and internal documents — for up to $100,000 per dataset, to AI training companies and corporate intelligence firms seeking high-quality human-generated data.

The employees whose messages are being sold typically have no idea the transaction is occurring. Under current U.S. federal law, there is nothing illegal about it.

How Startup Employee Conversations Become Sellable Assets

When a startup shuts down, its intellectual property — including data assets — passes to creditors, acquirers, or the founders. Communications on company-owned systems are classified as corporate property under virtually every standard employment agreement. Gizmodo reported that brokers specializing in AI training data have built a dedicated pipeline targeting defunct companies, contacting administrators and bankruptcy trustees before infrastructure is decommissioned.

The transaction is logistically simple: the buyer receives a bulk export of the company’s communications — Slack workspaces, email archives, internal wikis, code repositories, document management systems — in exchange for a lump payment. Pricing ranges from a few thousand dollars for small teams to $100,000 or more for companies with large headcounts or specialized expertise in technically valuable domains: machine learning, drug discovery, quantitative finance.

What Data Gets Packaged — and Its Market Value

These datasets are extensive. According to the Gizmodo investigation, buyers receive direct messages between employees, group channel conversations, email threads, code review comments, strategy documents, and HR communications. The most valuable datasets come from technical teams where discussions contain domain-specific reasoning, debugging sessions, and expert-level problem-solving — exactly the high-signal human reasoning that AI labs pay premiums to capture.

The data is not anonymized in most cases. Names, job titles, email addresses, and organizational context are preserved because they add training signal for professional communication and domain expertise. An engineer’s private Slack message explaining why a model architecture failed is worth considerably more with their credentials attached than as an anonymous exchange — and buyers know it.

Who’s Buying AI Training Data From Defunct Startups

The primary buyers are AI companies seeking human-generated professional dialogue that contains no synthetic text. This isn’t scraped web content; it’s expert-level conversation in its natural professional context, one of the scarcest categories in AI training data. MegaOne AI tracks 139+ AI tools across 17 categories, and the shortage of high-quality training data is a known constraint across nearly every foundation model provider.

Corporate intelligence firms represent a second buyer category. These firms resell parsed employee data to competitors, investors conducting due diligence, or hedge funds modeling talent flows. A startup’s internal Slack archive can reveal product roadmaps, key personnel departures, competitive analysis, and morale signals invisible in any public filing.

The transaction fits into a broader, increasingly aggressive data acquisition market. OpenAI has pursued content licensing deals worth billions, in part because the supply of high-quality human-generated text is finite and increasingly litigated. Defunct startup data sidesteps those disputes entirely — there is no ongoing party with standing to object.

The Legal Vacuum That Makes This Completely Legal

No U.S. federal law explicitly prohibits a company from selling employee communications data to a third party after shutdown. The Computer Fraud and Abuse Act governs unauthorized access, not authorized sales. HIPAA and FERPA protect health and education records, respectively. The Electronic Communications Privacy Act targets interception and unauthorized access, not commercial transactions in data a company already holds. None of these statutes reaches this practice.

State frameworks offer marginal coverage. California’s CCPA gives residents some rights over personal data, but its employee data protections are limited and contested in court. The EU’s GDPR would likely prohibit this practice for European employees; U.S. workers have no federal equivalent. The American Privacy Rights Act stalled in both 2024 and 2025, leaving the regulatory gap fully intact.

Employment agreements provide the structural foundation for these sales. Virtually every standard employment agreement or accompanying company policy contains language confirming that communications on company-owned systems are company property. Most employees sign these clauses without negotiation and never revisit them. Combined with the absence of comprehensive federal data protection law, this means that selling a former employee’s private messages is treated, legally, much like selling the office furniture.

Why Training Data Hunger Makes This Market Grow

The demand side stems directly from how foundation models are built. Large language models require vast quantities of human-generated text to develop coherent reasoning and domain expertise. The quality of web-scraped data is deteriorating as AI-generated content fills more of the internet. That feedback loop makes authentic, expert professional dialogue increasingly scarce and increasingly valuable.

Recent episodes have spotlighted AI companies’ aggressive data acquisition practices. A source code exposure incident at Anthropic briefly revealed details of AI agent architecture and how companies think about proprietary data. The data and talent acquisition dynamics documented in recent AI company deals reflect the same underlying scarcity.

Defunct startup data is attractive precisely because it sits in a legal gray zone: authentic, expert-level, and acquired without licensing complexity. A 50,000-message Slack export from a team of machine learning engineers represents training signal that would otherwise require expensive human annotation contracts or direct licensing agreements with individuals who have every incentive to say no. The growing backlash against AI data harvesting from human creators has made above-board acquisition harder; bankruptcy sales require no consent at all.

What Startup Employees Can Actually Do Now

The practical reality: most former employees cannot prevent the sale of past conversations once data is held by a defunct company. Legal recourse is minimal. But concrete steps reduce exposure going forward.

For current startup employees: treat company-owned infrastructure as public. Avoid using company Slack, email, or document systems for any communication you wouldn’t want published. Use personal devices and accounts for personal matters; the separation has legal and practical value.

For former employees of shutting-down companies: file a written request for deletion of personal data under applicable state law. California residents have the strongest leverage under CCPA. The request may not succeed, but it creates a paper trail and may complicate a sale to a buyer with concerns about downstream liability.

For anyone negotiating employment agreements: ask specifically about data retention and sale policies for employee communications. Most startups lack explicit policies. Surfacing the question may prompt one. It won’t be in the standard boilerplate — you have to request it.

The economics point in one direction. As AI training data becomes scarcer and more valuable — and as startups continue failing at their current rate — the supply of liquidatable employee data will grow. The only protection available right now is behavioral, and it starts before the next message is sent.