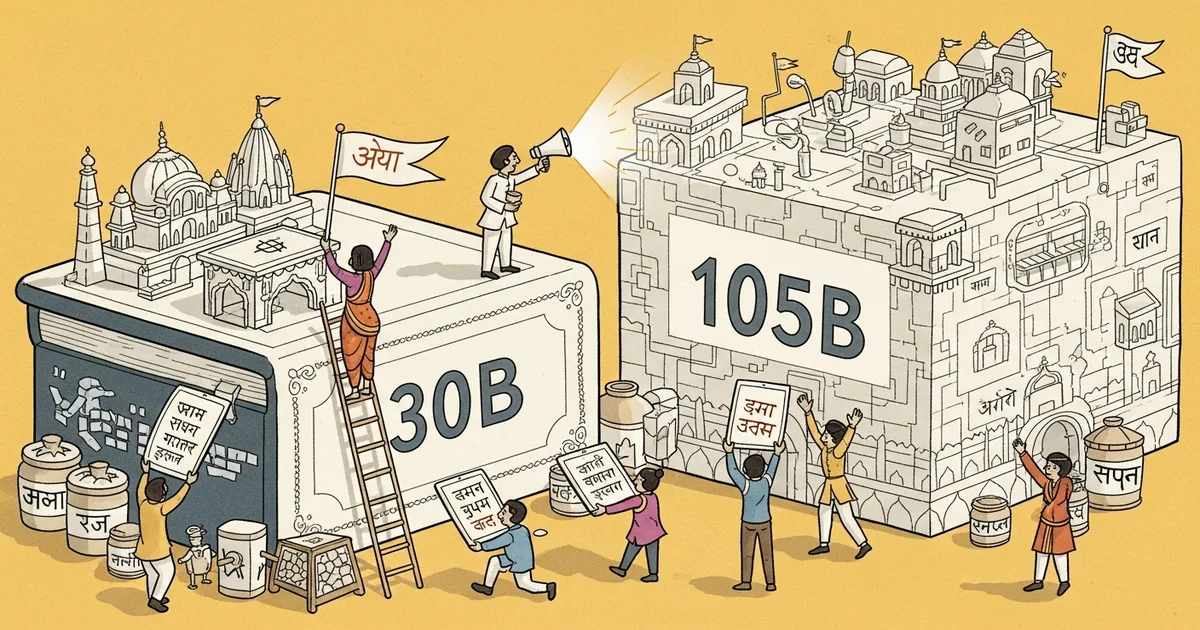

Indian AI startup Sarvam unveiled two large language models, a 30-billion and a 105-billion parameter variant, at the India AI Impact Summit in New Delhi. Both are trained specifically to serve India’s 22 officially recognized languages with native fluency rather than through translation layers. Author and speaker attribution details from the summit were not available at the time of publication.

- Sarvam’s 30B and 105B models are trained from scratch on large-scale Indian language corpora spanning all 22 official languages, including Hindi, Tamil, Telugu, Bengali, Marathi, and Gujarati.

- The 105B model is among the largest foundation models built specifically for Indian languages, targeting complex reasoning, document analysis, and multi-turn conversation.

- The 30B variant is designed for deployment on more accessible infrastructure, making it viable for government agencies and startups with constrained compute budgets.

- India’s national AI mission has deployed 38,000 GPUs to support sovereign AI development, with 20,000 additional units planned.

What Happened

Sarvam released two new foundation models at the India AI Impact Summit in New Delhi — a 30-billion parameter variant targeting cost-accessible deployment and a 105-billion parameter variant designed for high-complexity tasks, both covering India’s 22 official languages. The models were trained on Indian language data from inception rather than adapted from English-centric architectures, a structural choice the company says produces qualitatively different results in regional language handling. The original reporting is available via Google News.

Why It Matters

The dominant multilingual models from OpenAI, Anthropic, and Google are trained primarily on English data, with Indian languages incorporated as secondary additions rather than first-class training targets. The practical consequence is degraded performance in idiomatic phrasing, domain-specific vocabulary, and cultural context — a gap that becomes operationally significant in sectors like healthcare, agriculture, and government services where precise language directly affects outcomes.

India’s public digital infrastructure is being built around the expectation that AI can deliver services reliably in regional languages. The iGOT Karmayogi civil servant training platform and the AIKosha national AI resource hub both depend on models that function natively in Hindi, Tamil, Telugu, and other regional languages — a requirement that English-first imports have not consistently satisfied.

Technical Details

Both models were trained on corpora spanning India’s 22 officially recognized languages, including Hindi, Tamil, Telugu, Bengali, Marathi, Gujarati, and 16 others. Sarvam’s approach differs from fine-tuning an English foundation model on regional data: the company integrates multilingual capability into the base training process from the outset, which it says preserves idiomatic expressions and domain-specific terminology that translation-layer approaches distort or discard.

The 105-billion parameter model is described as handling complex reasoning, extended document analysis, and multi-turn dialogue in regional languages, with performance Sarvam characterizes as approaching English-language frontier models at comparable scale. The 30-billion parameter model is scoped for deployment environments with limited GPU access: it is meant to be small enough to run without dedicated AI hardware clusters, making it viable for agencies and smaller enterprises that cannot sustain large inference costs.
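To make the gap between the two sizes concrete, the rough arithmetic below estimates how much memory the model weights alone would occupy at a few common numeric precisions. This is a back-of-the-envelope sketch, not a figure from Sarvam: the company has not disclosed the serving precision or architecture, the bytes-per-parameter values are standard storage sizes assumed here for illustration, and the estimate ignores activation memory, the key-value cache, and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumptions (not from the announcement): standard storage sizes per
# precision; weights only, no KV cache, activations, or runtime overhead.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit quantized weights
    "int4": 0.5,  # 4-bit quantized weights
}

# Parameter counts as stated in the article.
MODEL_PARAMS = {
    "30B": 30e9,
    "105B": 105e9,
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def footprint_table() -> dict:
    """Map each model name to its estimated weight memory per precision."""
    return {
        name: {p: weight_memory_gb(n, p) for p in BYTES_PER_PARAM}
        for name, n in MODEL_PARAMS.items()
    }


if __name__ == "__main__":
    for name, row in footprint_table().items():
        cells = ", ".join(f"{p}: {gb:.1f} GB" for p, gb in row.items())
        print(f"{name} -> {cells}")
```

Under these assumptions, the 105B weights take 3.5 times the storage of the 30B weights at any given precision, which is the kind of difference that separates a small multi-GPU server from a dedicated cluster. Actual serving requirements depend on details Sarvam has not published.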

No independently verified benchmark results — such as scores on IndicGLUE or AI4Bharat evaluation suites — were published alongside the announcement, which limits external verification of the performance claims at this stage.

Who’s Affected

Indian government agencies building AI-assisted public services are the most direct users: platforms like iGOT Karmayogi and AIKosha require regional language fluency at scale, and domestic foundation models reduce dependency on foreign API providers. Enterprises in healthcare, financial services, and agriculture, sectors where regional language accuracy directly affects outcomes for users, gain access to models trained for their operational context rather than adapted from English-first architectures.

Sarvam operates alongside BharatGen and Gnani as part of India’s sovereign AI ecosystem. Together, these companies address a market of more than one billion people who communicate primarily in languages other than English — a population that English-first AI has historically underserved at the application layer.

What’s Next

India’s national AI mission has already deployed 38,000 GPUs to support foundation model development, with 20,000 additional units in the pipeline — a level of infrastructure investment that signals sustained government intent to build domestic AI capacity rather than rely on imported models. As of publication, Sarvam had not disclosed API access details, licensing terms, or a general availability timeline for the two new models.

The key open question is independent evaluation. Benchmark results on standard Indic evaluation suites, such as IndicGLUE or other AI4Bharat benchmarks, would allow researchers and enterprises to verify whether the models’ measured performance matches the claims made at the summit. Until those results are published, the 105B model’s characterization as frontier-comparable in Indian languages remains an assertion rather than a measured finding.
