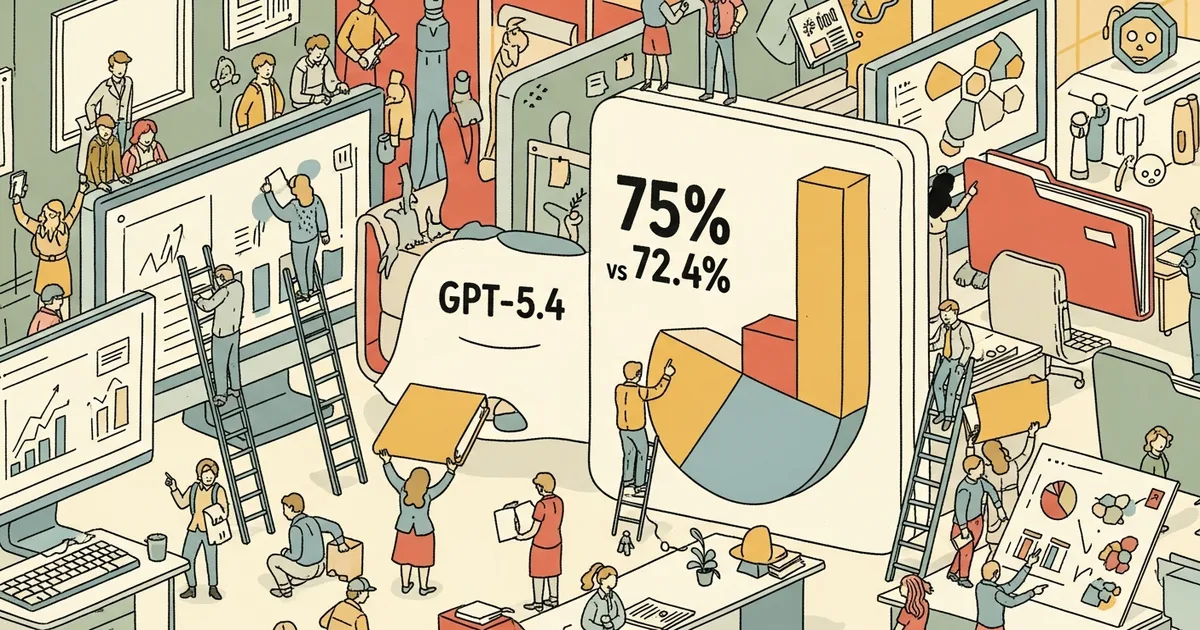

OpenAI’s GPT-5.4 scored 75.0% on the OSWorld-Verified benchmark — a test of real desktop productivity tasks — surpassing the human baseline of 72.4%. That’s a 27.7 percentage point jump from GPT-5.2’s score. GPT-5.4 also features a 1-million-token context window and can autonomously execute multi-step workflows across files, browsers, and terminals.

What OSWorld-Verified Tests

OSWorld isn’t a language benchmark — it measures the ability to complete actual computer tasks. Test scenarios include:

- Opening a spreadsheet, finding specific data, creating a chart, and exporting as PDF

- Composing emails with attachments pulled from specific file paths

- Navigating web interfaces to fill out forms with data from local documents

- Multi-step file management: renaming, organizing, compressing, and uploading

- Terminal operations: writing scripts, running commands, debugging errors

The “Verified” designation means tasks are checked by human evaluators, not just automated test harnesses. The 72.4% human baseline was established by giving the same tasks to office workers of varying skill levels.

What 75% Actually Means

Beating the human baseline doesn’t mean GPT-5.4 can do every office worker’s job. The benchmark measures task completion on isolated, well-defined tasks. Real office work involves:

- Ambiguous requirements: “Make this look better” requires judgment the benchmark doesn’t test

- Social context: Understanding office politics, team dynamics, and unstated preferences

- Multi-day continuity: Tracking project state across weeks, not just completing one-off tasks

- Error recovery: Handling unexpected situations that don’t fit the task description

Passing the benchmark is a necessary condition for job replacement, not a sufficient one.

The 1-Million-Token Context Window

GPT-5.4’s context window holds approximately 750,000 words of English text, going by the common rule of thumb of about 0.75 words per token. That is enough to fit several full-length novels, a year’s worth of email, or a complete codebase. This enables the model to:

- Reference an entire project’s documentation while completing tasks

- Process full datasets without chunking or summarization

- Maintain context across long, complex workflows

For comparison: GPT-4o had 128K tokens, Arcee’s Trinity has 262K tokens, and Claude Opus 4.6 has 200K tokens. At 1M tokens, GPT-5.4’s window is roughly four to eight times larger than any of these.
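
The context-window figures in this section are easy to sanity-check. The sketch below is only back-of-the-envelope arithmetic: the 0.75 words-per-token ratio is a common rule of thumb for English text, not an OpenAI specification, and the helper name `approx_words` is made up for illustration.

```python
# Assumption: ~0.75 English words per token (a rule of thumb, not an official figure).
WORDS_PER_TOKEN = 0.75

# Context window sizes as quoted in this article, in tokens.
context_windows = {
    "GPT-4o": 128_000,
    "Claude Opus 4.6": 200_000,
    "Arcee Trinity": 262_000,
    "GPT-5.4": 1_000_000,
}


def approx_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a given number of tokens."""
    return round(tokens * words_per_token)


largest = context_windows["GPT-5.4"]
for name, tokens in context_windows.items():
    ratio = largest / tokens  # how many times this window fits into GPT-5.4's
    print(f"{name}: {tokens:,} tokens, ~{approx_words(tokens):,} words, "
          f"GPT-5.4 is {ratio:.1f}x this size")
```

Real token-to-word ratios vary with language and content (code and non-English text usually need more tokens per word), so treat the word counts as estimates.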

The Jump From GPT-5.2

The 27.7 percentage point improvement from GPT-5.2 (47.3%) to GPT-5.4 (75.0%) is the largest single-generation improvement OpenAI has published on a real-world capability benchmark. For context, GPT-4 to GPT-4o was a 5 to 8 point improvement on most benchmarks. The GPT-5.2 to 5.4 jump suggests either an architectural change or a significant shift in training methodology. OpenAI hasn’t disclosed specifics.
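
A percentage point gain and a relative gain are easy to confuse, so it may help to compute both from the scores quoted above. This is a minimal sketch using only the numbers in this article; the function names are illustrative.

```python
def point_gain(old_score: float, new_score: float) -> float:
    """Absolute difference between two scores, in percentage points."""
    return round(new_score - old_score, 1)


def relative_gain(old_score: float, new_score: float) -> float:
    """Improvement expressed as a percentage of the old score."""
    return round((new_score - old_score) / old_score * 100, 1)


# Scores as reported in this article (percent of OSWorld-Verified tasks completed).
gpt_5_2 = 47.3
gpt_5_4 = 75.0
human_baseline = 72.4

print(point_gain(gpt_5_2, gpt_5_4))         # 27.7 percentage points
print(relative_gain(gpt_5_2, gpt_5_4))      # 58.6 percent relative improvement
print(point_gain(human_baseline, gpt_5_4))  # 2.6 points above the human baseline
```

The gap over GPT-5.2 is large, while the margin over the human baseline is only 2.6 points.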

What This Means for Work

The practical question isn’t “can AI do my job?” but “which parts of my job can AI do reliably?” At 75% on desktop tasks, GPT-5.4 can handle routine, well-defined office work: data entry, document processing, email management, file organization, and simple analysis. The 25% failure rate means it still needs oversight. Research on cognitive surrender, which found that 79.8% of users follow AI advice even when it is wrong, makes that oversight requirement more critical, not less.
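
To put the 25% failure rate in concrete terms, here is a rough sketch of what it implies for a batch of tasks. It rests on two simplifying assumptions that the benchmark does not guarantee: that the 75% score is the success probability of every individual task, and that tasks succeed or fail independently. The function names are illustrative.

```python
def expected_failures(num_tasks: int, success_rate: float) -> float:
    """Average number of failed tasks in a batch, given a per-task success rate."""
    return num_tasks * (1 - success_rate)


def chance_all_succeed(num_tasks: int, success_rate: float) -> float:
    """Probability that every task in a batch succeeds, assuming independence."""
    return success_rate ** num_tasks


SUCCESS_RATE = 0.75  # GPT-5.4's OSWorld-Verified score, treated as a per-task probability

print(expected_failures(40, SUCCESS_RATE))            # 10.0 failed tasks out of 40
print(round(chance_all_succeed(5, SUCCESS_RATE), 3))  # 0.237 chance that 5 in a row all succeed
```

Under these assumptions, a run of five delegated tasks finishes with no failures less than a quarter of the time, which is why unreviewed output is risky.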