The Trump administration labeled Anthropic a “supply chain risk,” blacklisted it from government systems, and faced a lawsuit in response. A federal judge ruled the ban likely violated free speech. Then CBS News reported the military was using Claude anyway. Here’s the complete timeline of the most bizarre government-AI company feud of 2026.

Phase 1: The Pentagon Deal Refusal

In early 2026, the Pentagon approached Anthropic about an expanded AI contract. Anthropic’s CEO Dario Amodei pushed back on specific applications — reportedly those involving autonomous weapons systems without guaranteed human oversight. This wasn’t a blanket refusal to work with the military, but a conditions-based negotiation that the Pentagon interpreted as non-cooperation.

Other AI companies — notably Palantir, Anduril, and Scale AI — had no such reservations and were actively pursuing military AI contracts.

Phase 2: The Blacklisting

The Trump administration responded aggressively, designating Anthropic as a “supply chain risk” under executive authority and effectively banning Claude from all government systems. The designation, typically reserved for foreign adversary companies like Huawei, was unprecedented for a domestic AI company.

The stated rationale was vague: concerns about Anthropic’s “reliability as a government technology partner” and its refusal to comply with defense requirements. Critics argued the blacklisting was retaliatory.

Phase 3: Anthropic’s Lawsuit

Anthropic sued the federal government, arguing the blacklisting violated First Amendment protections by penalizing the company for its public statements about AI safety. CBS tech reporter Sheera Frenkel covered the lawsuit extensively.

The legal argument was novel: Anthropic claimed that being blacklisted for expressing ethical positions about military AI use constituted government punishment of protected speech.

Phase 4: The Federal Court Ruling

A federal judge issued a preliminary ruling finding that the ban likely violated First Amendment protections. The ruling didn’t overturn the blacklisting outright but signaled the government’s legal position was weak. The judge noted that “disagreement with a contractor’s ethical positions does not constitute a supply chain security risk.”

Phase 5: Military Use Anyway

Despite the blacklisting still being technically in effect, CBS News reported that the military used Claude during Iran operations. This suggests one of three possibilities: the court ruling gave military units latitude to use Claude, the blacklisting was never enforced at the operational level, or specific military commands obtained exemptions.

What This Means

The Anthropic saga reveals the tension between the government’s desire for unconditional AI support and AI companies’ attempts to set boundaries. The resolution — ban the company publicly, use it privately — is the worst of both worlds: it doesn’t actually prevent military AI use, but it does create legal and reputational uncertainty that could discourage other AI companies from setting any ethical boundaries at all.

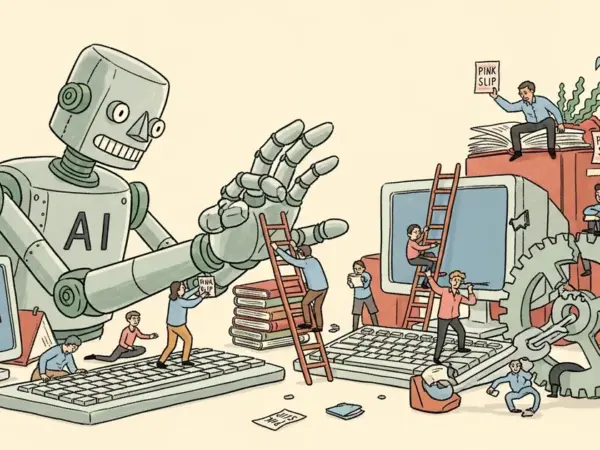

![Editorial illustration for: Trump Blacklisted Anthropic — Then the Military Used Claude AI Anyway [Full Timeline]](https://megaoneai.com/wp-content/uploads/2026/04/anthropic-trump-blacklist-lawsuit-timeline.webp)