Key Takeaways

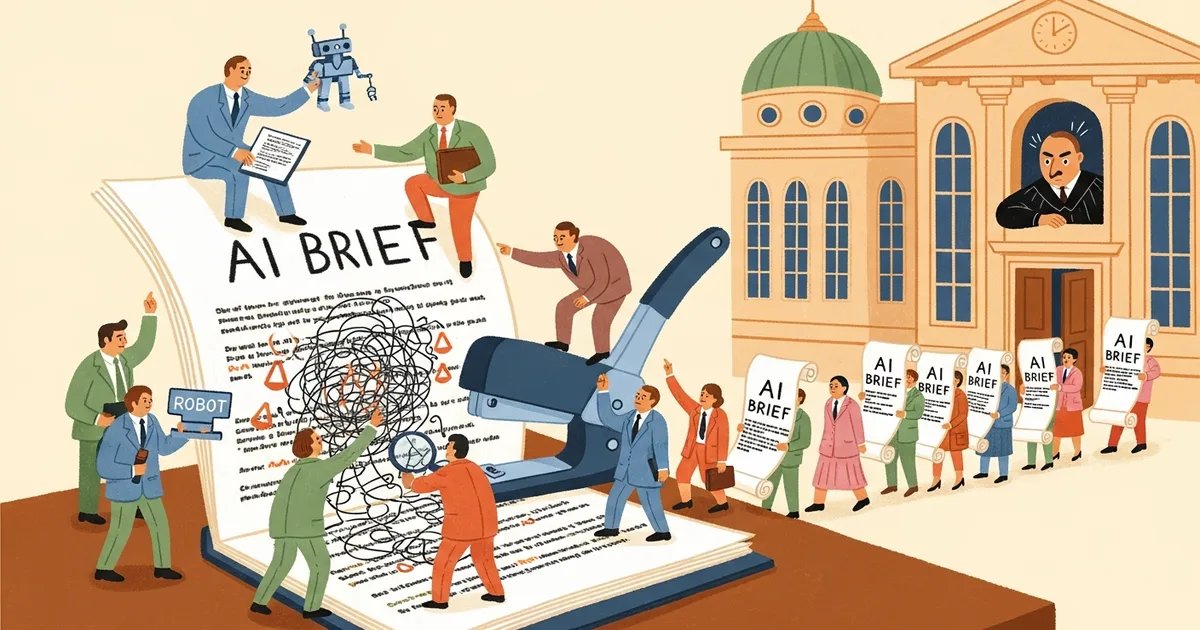

- Court sanctions against lawyers who submit AI-generated briefs containing fabricated case citations continue to increase in 2026, according to NPR reporting.

- Despite the sanctions, lawyers are adopting AI tools at an accelerating pace, and Harvey AI has reached an $11 billion valuation.

- The University of Washington Law School is developing optional AI ethics training for legal professionals, though no bar association has mandated it.

- The gap between AI tool capability and professional accountability frameworks is widening, not narrowing.

What Happened

NPR reported on April 3, 2026, that court sanctions over AI-generated fake legal briefs are still rising more than three years after the first high-profile case. Judges across federal and state courts have issued monetary penalties, public reprimands, and referrals to bar disciplinary committees for attorneys who submitted filings containing fabricated case citations generated by AI chatbots.

At the same time, the legal industry’s adoption of AI tools has not slowed. Harvey AI, one of the most prominent legal AI platforms, recently closed a funding round that valued the company at $11 billion. Law firms of every size are integrating AI into research, drafting, and document review workflows.

Why It Matters

The legal profession is caught in a contradiction. The technology that is generating sanctions is also generating large efficiency gains. Since the initial Mata v. Avianca case in June 2023, in which New York attorney Steven Schwartz submitted a brief containing six fabricated case citations generated by ChatGPT, dozens of similar incidents have been documented. The pattern has not changed: a lawyer uses a general-purpose AI chatbot for legal research, the chatbot produces plausible-sounding but nonexistent case law, and the lawyer files it without verification.

What has changed is the scale of adoption. A 2026 survey by the American Bar Association found that 64% of attorneys now use AI tools in some capacity, up from 35% in early 2025. The financial incentives are substantial: firms report 30-40% reductions in research time for routine matters.

Technical Details

The core problem is well understood. Large language models generate text from statistical patterns in their training data, not by looking up entries in verified legal databases. When asked to find cases supporting a legal argument, a general-purpose chatbot such as ChatGPT will sometimes generate citations that follow the correct formatting conventions but reference cases that do not exist. The model produces a plausible case name, a realistic reporter citation, and even a convincing summary of the holding.
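To make the formatting point concrete, here is a minimal sketch of why a format check cannot catch a fabricated citation. It assumes a deliberately simplified regular expression for the "volume reporter page" shape of a US reporter citation. The pattern and the `looks_like_citation` helper are hypothetical illustrations, and both example strings are invented placeholders, not real cases.

```python
import re

# Simplified, hypothetical pattern for "<volume> <reporter> <page>", e.g. "123 F.3d 456".
# Real citation formats are far richer; this covers only a few federal reporters.
CITATION_RE = re.compile(
    r"\b(\d{1,4})\s+(U\.S\.|F\.(?:2d|3d|4th))\s+(\d{1,5})\b"
)


def looks_like_citation(text: str) -> bool:
    """Return True if the text contains something shaped like a reporter citation."""
    return CITATION_RE.search(text) is not None


# Both strings are invented placeholders, not real cases.
well_formed_but_invented = "Doe v. Example Airlines, 123 F.3d 456 (2d Cir. 2019)"
malformed = "Doe v. Example Airlines, F.3d (2019)"

print(looks_like_citation(well_formed_but_invented))  # True
print(looks_like_citation(malformed))  # False
```

The invented citation passes because the check looks only at shape. Nothing in the string itself reveals whether the case exists, which is why verification has to consult an external source.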

Specialized legal AI platforms such as Harvey AI and CoCounsel (built by Casetext, now part of Thomson Reuters) address this by grounding their outputs in verified legal databases like Westlaw and LexisNexis. Harvey AI co-founder Winston Weinberg told NPR that their system “never generates case citations from the language model itself — every citation is retrieved from a verified corpus and cross-referenced before presentation.” Most sanctions cases originate on the general-purpose side of this divide, not with purpose-built legal tools.
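The idea of checking every citation against a verified corpus can be sketched as follows. This is a minimal illustration under stated assumptions, not Harvey AI's or any other vendor's implementation: `VERIFIED_CORPUS` is a hypothetical in-memory stand-in for a licensed legal database, every citation in it is an invented placeholder, and `extract_citations` relies on a simplified pattern that covers only a few reporters.

```python
import re

# Hypothetical stand-in for a verified legal database, keyed by reporter citation.
# All entries are invented placeholders, not real cases.
VERIFIED_CORPUS = {
    "100 F.3d 200": "Alpha Corp. v. Beta LLC",
    "50 U.S. 75": "Gamma v. Delta",
}

# Simplified pattern for "<volume> <reporter> <page>"; real formats vary widely.
CITATION_RE = re.compile(r"\b\d{1,4}\s+(?:U\.S\.|F\.(?:2d|3d|4th))\s+\d{1,5}\b")


def extract_citations(draft: str) -> list[str]:
    """Return every citation-shaped string in the draft, whitespace-normalized."""
    return [" ".join(m.group(0).split()) for m in CITATION_RE.finditer(draft)]


def unverified_citations(draft: str) -> list[str]:
    """Return citations in the draft that are absent from the verified corpus."""
    return [c for c in extract_citations(draft) if c not in VERIFIED_CORPUS]


draft = (
    "See Alpha Corp. v. Beta LLC, 100 F.3d 200 (2d Cir. 1996); "
    "accord Doe v. Example Airlines, 123 F.3d 456 (2d Cir. 2019)."
)

flagged = unverified_citations(draft)
print(flagged)  # ['123 F.3d 456']
```

A lookup like this only confirms that a citation exists in the corpus. A fuller workflow would presumably also compare the case name and have a person confirm that the cited opinion actually supports the proposition it is cited for.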

The University of Washington Law School, under the direction of Professor Ryan Calo, is developing a voluntary AI ethics training curriculum. The program covers verification protocols, disclosure requirements, and the technical limitations of language models. However, no state bar association has yet made AI training mandatory for practicing attorneys.

Who’s Affected

Solo practitioners and small firms face the highest risk. Large law firms typically have the resources to license specialized legal AI platforms and implement internal verification workflows. Smaller practices are more likely to rely on consumer-grade AI tools without adequate safeguards. Clients of these firms bear the downstream consequences: cases can be dismissed, sanctions can include fee refunds, and malpractice exposure increases.

Judges are also affected. Courts must now dedicate time and resources to verifying the authenticity of cited authorities, a task that was previously unnecessary. Several federal districts have implemented standing orders requiring attorneys to certify that no AI-generated content was used without human verification, but enforcement remains inconsistent.

What’s Next

The American Bar Association’s Standing Committee on Ethics and Professional Responsibility is expected to issue formal guidance on AI use in legal practice by mid-2026. Several state bars, including California and New York, are considering making AI competency a mandatory part of continuing legal education. Until binding rules exist, the current dynamic is likely to persist: sanctions will continue to accumulate while adoption accelerates, driven by competitive pressure and the significant productivity gains that properly supervised AI tools deliver.