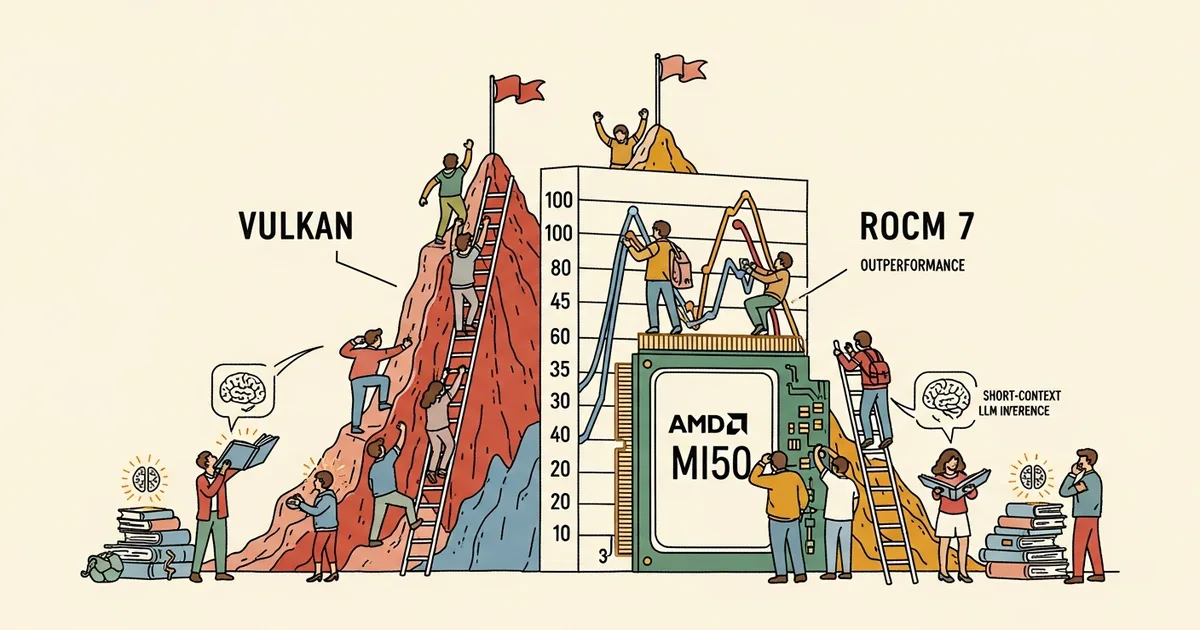

- Community benchmarks show the Vulkan backend outperforming AMD’s ROCm backend for llama.cpp inference on short-context dense models, generating tokens 30-45% faster in some configurations.

- ROCm maintains advantages for long-context workloads above 16,000 tokens and mixture-of-experts models, where its HIP kernels handle sparse computation patterns more efficiently.

- The AMD MI50 (32GB VRAM) has become popular in the local AI community due to its availability on the used market at prices well below current-generation cards.

- The results highlight fragmentation in AMD’s AI software stack, with no single backend optimal for all workloads.

What Happened

Benchmarks posted to the LocalLLaMA community and the ROCm GitHub repository compared AMD’s ROCm and Vulkan backends for llama.cpp and found that Vulkan delivers faster inference for short-context dense models on AMD GPUs, including the Radeon Instinct MI50. The tests compared recent ROCm builds with Vulkan 1.4.x across multiple quantized models, including Qwen 3.5 variants and other popular open-weight models.

On an AMD Radeon RX 6900 XT, Vulkan achieved 83.19 tokens per second on Qwen3-16B (IQ2_M quantization) compared to ROCm’s 57.45 tokens per second. For Qwen3 at Q8_0 quantization, Vulkan delivered 193.74 tokens per second versus ROCm’s 133.23 tokens per second. Both results represent a roughly 45% performance advantage for Vulkan.
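The percentage can be checked directly from the reported throughput figures. The following is a minimal sketch in Python; the function name `speedup_percent` is illustrative and not part of llama.cpp or any benchmark tooling.

```python
def speedup_percent(fast_tps: float, slow_tps: float) -> float:
    """Return how much faster fast_tps is than slow_tps, in percent."""
    if slow_tps <= 0:
        raise ValueError("slow_tps must be positive")
    return (fast_tps / slow_tps - 1.0) * 100.0


# Throughput figures (tokens per second) quoted above for the RX 6900 XT.
iq2_m = speedup_percent(83.19, 57.45)    # Vulkan vs ROCm, IQ2_M
q8_0 = speedup_percent(193.74, 133.23)   # Vulkan vs ROCm, Q8_0

print(f"IQ2_M: Vulkan is {iq2_m:.1f}% faster than ROCm")
print(f"Q8_0:  Vulkan is {q8_0:.1f}% faster than ROCm")
```

Both pairs come out at roughly 45%, the upper end of the 30-45% range reported across configurations.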

Why It Matters

For developers running large language models locally on AMD hardware, the choice of inference backend directly affects whether a model is practically usable. A 30-45% difference in token generation speed can determine whether an LLM response feels interactive or sluggish, particularly for use cases like code completion and real-time chat where latency matters more than throughput. The difference between 57 and 83 tokens per second on a 16-billion-parameter model, as the Qwen3-16B benchmarks showed, can be the difference between a noticeable delay and a response that feels immediate.
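To put those throughput numbers in terms of waiting time, the sketch below converts tokens per second into per-token latency and total generation time. The reply length of 300 tokens is a hypothetical value chosen for illustration, and the calculation covers token generation only, ignoring prompt processing.

```python
def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent generating one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate, in seconds."""
    return num_tokens / tokens_per_second


REPLY_TOKENS = 300  # hypothetical reply length, not from the benchmarks

# Qwen3-16B (IQ2_M) throughput figures reported for the RX 6900 XT.
for backend, tps in (("ROCm", 57.45), ("Vulkan", 83.19)):
    print(
        f"{backend:6s} {ms_per_token(tps):5.1f} ms/token, "
        f"{generation_seconds(REPLY_TOKENS, tps):4.1f} s for {REPLY_TOKENS} tokens"
    )
```

Under these assumptions the gap is about 1.6 seconds per 300-token reply, and it scales linearly with reply length.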

The benchmarks also demonstrate that even previous-generation AMD data center GPUs like the MI50 can run substantial language models when paired with optimized inference software. The MI50’s 32GB VRAM capacity and availability on the used market at prices well below current-generation cards have made it popular among local AI enthusiasts, expanding the accessibility of local LLM deployment beyond Nvidia’s ecosystem.

Technical Details

For dense models with context windows under 16,000 tokens, Vulkan consistently produced faster prompt processing and token generation than ROCm. The margin was significant enough to make Vulkan the preferred backend for interactive use cases including chatbots, code completion, and real-time assistants. The advantage disappeared for contexts above 16,000 tokens, where ROCm’s better-optimized memory management and kernel implementations took the lead.

On mixture-of-experts models like Qwen 3.5 122B, which has 122 billion total parameters but activates only a subset of them per forward pass, ROCm outperformed Vulkan regardless of context length. MoE architectures require efficient expert routing and sparse computation patterns that ROCm’s HIP kernels handle more effectively than Vulkan’s general-purpose compute pipeline. Users with dual MI50 configurations reported that automatic tensor splitting worked well, with both cards maintaining approximately 90% utilization.

Who’s Affected

Local LLM users running AMD GPUs face a practical decision about which backend to use. The benchmarks suggest using Vulkan for quick interactive queries on dense models and switching to ROCm for long-document processing or MoE model inference. This split-backend approach adds complexity but delivers better performance across different workloads. Vulkan also benefits from significantly easier setup compared to ROCm, which requires specific driver versions and kernel configurations that vary by Linux distribution.
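The split-backend rule of thumb can be written down as a small decision function. This is a sketch of the guidance suggested by the benchmarks, not an official recommendation or a llama.cpp API: the `Workload` type, the `pick_backend` name, and the returned labels are all illustrative, and the benchmarks do not say which backend wins at exactly 16,000 tokens, so the boundary handling here is an assumption.

```python
from dataclasses import dataclass

# Context length above which ROCm took the lead in the community benchmarks.
LONG_CONTEXT_TOKENS = 16_000


@dataclass(frozen=True)
class Workload:
    context_tokens: int  # expected prompt plus generated tokens
    is_moe: bool         # True for mixture-of-experts models


def pick_backend(workload: Workload) -> str:
    """Return 'rocm' or 'vulkan' following the benchmark-derived rule of thumb."""
    if workload.context_tokens < 0:
        raise ValueError("context_tokens must be non-negative")
    # ROCm led on MoE models regardless of context length.
    if workload.is_moe:
        return "rocm"
    # ROCm led on dense models once the context exceeded 16,000 tokens.
    if workload.context_tokens > LONG_CONTEXT_TOKENS:
        return "rocm"
    # Dense model with a short context: Vulkan was faster.
    return "vulkan"


print(pick_backend(Workload(context_tokens=2_000, is_moe=False)))   # short chat
print(pick_backend(Workload(context_tokens=40_000, is_moe=False)))  # long document
print(pick_backend(Workload(context_tokens=2_000, is_moe=True)))    # MoE model
```

How the returned label maps to an actual llama.cpp build or binary is left out, since that depends on how each backend was installed on a given system.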

AMD itself faces questions about its fragmented AI software stack. ROCm and Vulkan reflect different design philosophies: ROCm targets data center workloads with deep hardware integration, while Vulkan provides cross-platform compatibility with lower setup complexity. Nvidia’s CUDA does not face this fragmentation, offering a single backend that works consistently across consumer and data center GPUs. This usability advantage remains one of the primary reasons Nvidia dominates the local AI inference market despite AMD offering competitive hardware at lower price points.

What’s Next

The performance gap between Vulkan and ROCm on short-context workloads has been filed as an issue on AMD’s ROCm GitHub repository. Whether AMD addresses this through ROCm kernel optimizations or whether the community continues to rely on Vulkan as a faster alternative for interactive use remains to be seen. For now, llama.cpp users on AMD hardware must choose their backend based on their primary workload rather than defaulting to AMD’s officially recommended compute stack. The lack of a single optimal backend remains AMD’s most significant software-level disadvantage compared to Nvidia’s unified CUDA ecosystem for local AI inference.