- Google DeepMind released Gemini Robotics-ER 1.6, an updated embodied-reasoning model that acts as a high-level planning and orchestration layer for robotic systems.

- The model outperforms both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash on object pointing, counting, and task-completion recognition, according to Google DeepMind.

- A new instrument-reading capability, developed in collaboration with Boston Dynamics, enables robots to interpret pressure gauges and sight glasses using a multi-step image processing and code execution pipeline.

- Gemini Robotics-ER 1.6 is available through the Gemini API and Google AI Studio, with a Colab notebook for developers.

What Happened

Google DeepMind released Gemini Robotics-ER 1.6, an upgraded version of its embodied reasoning model designed to serve as a cognitive planning layer for robotic systems. The model coordinates high-level task reasoning and can invoke external tools, including Google Search and vision-language-action models, to execute physical tasks. The release was reported by The Decoder in April 2026.

Why It Matters

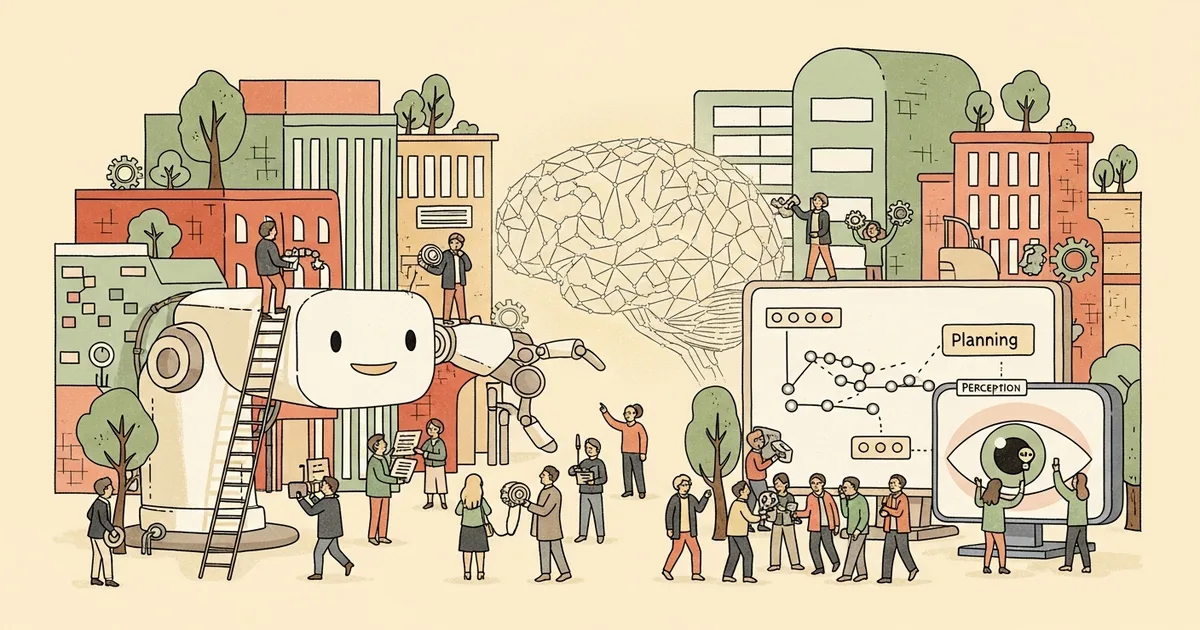

Embodied reasoning models occupy the planning tier of modern robotic stacks, translating high-level instructions into sequences of physical actions while delegating low-level motor control to separate specialized systems. The 1.6 release extends Google DeepMind’s prior work on Gemini Robotics-ER 1.5 with claimed benchmark gains and a new perception capability targeting industrial inspection environments. Google DeepMind has positioned Gemini Robotics-ER as an orchestration model rather than a full end-to-end robot controller, differentiating it architecturally from systems like Physical Intelligence’s π0.

Technical Details

Google DeepMind states that Gemini Robotics-ER 1.6 outperforms both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash across three benchmarks: object pointing, object counting, and recognition of successful task execution. The instrument-reading capability, developed in partnership with Boston Dynamics, uses a multi-step pipeline. The model first applies agentic image processing to zoom into the gauge or sight-glass display, then uses pointing functions and code execution to calculate proportional distances along the scale, and finally draws on world knowledge to interpret the resulting reading. Google DeepMind has not published an independent benchmark paper accompanying this release; the performance claims are based on the company’s own reporting.
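The "proportional scale distance" step can be illustrated with a short sketch. This is not Google DeepMind's implementation, which has not been published. It assumes a linear scale and assumes that earlier steps have already produced pixel coordinates for the relevant points. The function names (`angle_deg`, `read_dial`, `read_sight_glass`) and all coordinates are hypothetical.

```python
import math


def angle_deg(center, point):
    """Angle of `point` around `center`, in degrees, measured clockwise
    from straight up. Uses image coordinates, where y grows downward."""
    dx = point[0] - center[0]
    dy = point[1] - center[1]
    # atan2(dx, -dy) is 0 at "up" and increases clockwise on screen.
    return math.degrees(math.atan2(dx, -dy)) % 360.0


def read_dial(center, needle_tip, min_tick, max_tick, min_value, max_value):
    """Estimate a dial reading by linear interpolation of the needle angle
    between the minimum and maximum tick marks (assumes a linear,
    clockwise-increasing scale)."""
    start = angle_deg(center, min_tick)
    sweep = (angle_deg(center, max_tick) - start) % 360.0
    if sweep == 0:
        raise ValueError("min and max ticks must lie at different angles")
    needle = (angle_deg(center, needle_tip) - start) % 360.0
    # A fraction above 1 means the needle lies outside the marked scale.
    fraction = needle / sweep
    return min_value + fraction * (max_value - min_value)


def read_sight_glass(level_y, empty_y, full_y, min_value=0.0, max_value=100.0):
    """Estimate a sight-glass level from the pixel row of the liquid surface,
    interpolating linearly between the empty and full marks."""
    if full_y == empty_y:
        raise ValueError("empty and full marks must be on different rows")
    fraction = (level_y - empty_y) / (full_y - empty_y)
    return min_value + fraction * (max_value - min_value)


# Hypothetical points: a 0-10 gauge whose scale runs from lower left
# to lower right, with the needle pointing straight up (mid-scale).
dial_reading = read_dial(
    center=(100, 100),
    needle_tip=(100, 50),
    min_tick=(50, 150),
    max_tick=(150, 150),
    min_value=0.0,
    max_value=10.0,
)

# Hypothetical sight glass: empty mark at row 400, full mark at row 100.
glass_reading = read_sight_glass(level_y=250, empty_y=400, full_y=100)
```

With these made-up inputs the needle sits halfway through the sweep, so the dial reading is about 5 on the 0 to 10 scale, and the sight glass is half full. Gauges with nonlinear scales would need a different mapping, which is presumably where the model's world knowledge comes in.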

Who’s Affected

Boston Dynamics’ Spot robot is reportedly using the instrument-reading feature for active system inspections, which would make this one of the earliest commercial deployments of the capability. Robotics developers can access Gemini Robotics-ER 1.6 through the Gemini API and Google AI Studio; a Colab notebook has been published to lower the integration barrier for third-party teams.

What’s Next

Google DeepMind has not announced a roadmap for subsequent Gemini Robotics-ER versions. Availability through standard developer API channels suggests, but does not confirm, that the company is collecting real-world deployment feedback before its next iteration.