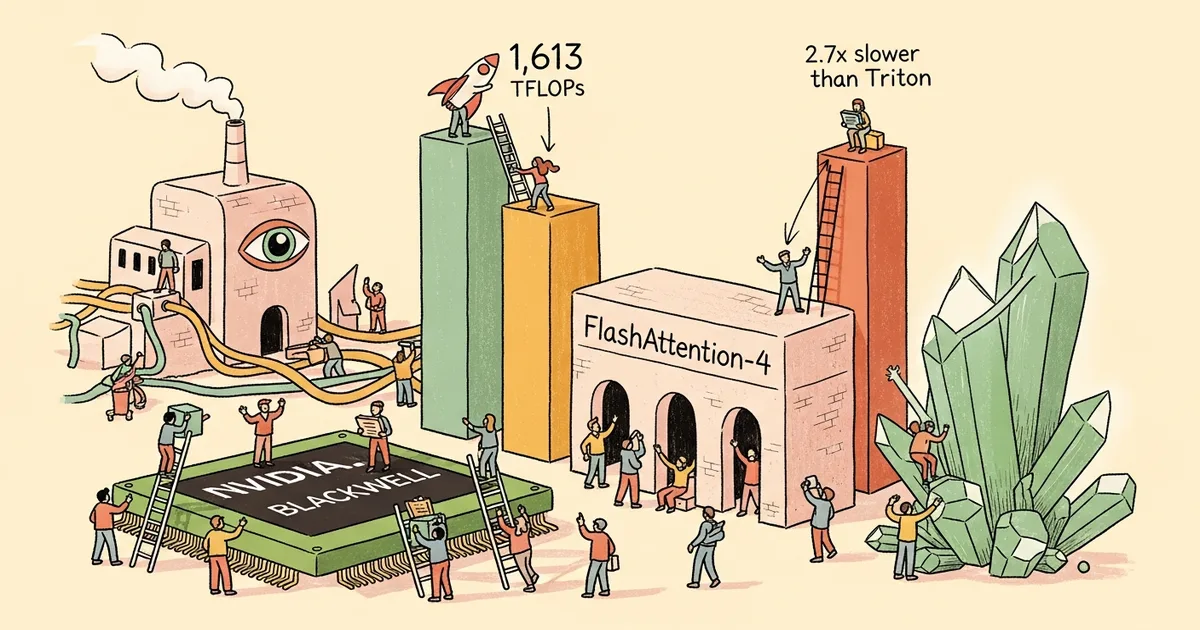

FlashAttention-4, a new attention kernel co-designed for NVIDIA’s Blackwell GPU architecture, achieves 1,613 TFLOPs/s with BF16 precision on B200 GPUs, about 71 percent of the hardware’s theoretical peak throughput. The implementation, detailed in a technical writeup, runs 2.1 to 2.7 times faster than equivalent Triton kernels and is written entirely in Python using CuTe-DSL, NVIDIA’s Python-embedded kernel language from the CUTLASS project, rather than hand-optimized CUDA C++.
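The utilization figure can be sanity-checked with simple arithmetic. The sketch below back-computes the peak throughput implied by the two numbers quoted above. The function name is illustrative, and the implied peak is derived from the article’s figures, not taken from an NVIDIA datasheet.

```python
def implied_peak_tflops(achieved_tflops: float, utilization: float) -> float:
    """Return the peak throughput implied by an achieved rate and a utilization fraction."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return achieved_tflops / utilization


# Figures quoted in the article: 1,613 TFLOPs/s at roughly 71 percent utilization.
ACHIEVED_TFLOPS = 1613.0
UTILIZATION = 0.71

peak = implied_peak_tflops(ACHIEVED_TFLOPS, UTILIZATION)
print(f"Implied BF16 peak: about {peak:.0f} TFLOPs/s")
```

Because the 71 percent figure is rounded, the implied peak is only approximate.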

The performance improvement comes from architectural co-design rather than algorithmic novelty. FlashAttention-4 exploits features of Blackwell’s hardware including the TMA (Tensor Memory Accelerator) for asynchronous data movement, the fifth-generation tensor core matrix multiply-accumulate instructions (tcgen05, which replace Hopper’s WGMMA warp-group instructions), and the memory hierarchy’s ability to overlap computation with data transfer. These hardware-specific optimizations are why the kernel targets Blackwell exclusively: it would not achieve the same speedup on Ampere or Hopper GPUs.

The decision to implement in Python rather than CUDA represents a significant shift in GPU kernel development. Traditional high-performance GPU kernels are written in CUDA C++ with inline PTX assembly, requiring specialized expertise that few developers possess. FlashAttention-4’s Python implementation through CuTe-DSL lowers the barrier to writing performant GPU code, though the abstraction relies heavily on NVIDIA’s compiler infrastructure to generate efficient machine code.

For the AI training ecosystem, FlashAttention-4 means that Blackwell GPUs can process attention computations, the computational bottleneck in transformer models, in significantly less time and with less energy than previous generations. At 71 percent hardware utilization, the kernel leaves relatively little performance on the table, suggesting that Blackwell’s architecture was designed with attention workloads as a primary target.
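To put that throughput in wall-clock terms, here is a rough best-case estimate. It uses the standard count of 4 · N² · d floating-point operations per head for a non-causal forward pass (two matrix multiplies, QKᵀ and PV, at 2 · N² · d each) and ignores softmax cost and memory effects. The batch size, head count, sequence length, and head dimension are hypothetical values chosen for illustration, not taken from the writeup.

```python
def attention_forward_flops(batch: int, heads: int, seq_len: int, head_dim: int) -> int:
    """FLOPs for a non-causal attention forward pass, counting only the two matmuls."""
    # Q @ K^T and P @ V each cost 2 * seq_len^2 * head_dim FLOPs per head.
    return 4 * batch * heads * seq_len * seq_len * head_dim


def seconds_at(flops: int, tflops_per_s: float) -> float:
    """Time to execute `flops` operations at a sustained rate given in TFLOPs/s."""
    return flops / (tflops_per_s * 1e12)


# Hypothetical workload: one sequence of 8,192 tokens, 32 heads of dimension 128.
flops = attention_forward_flops(batch=1, heads=32, seq_len=8192, head_dim=128)
ms = seconds_at(flops, 1613.0) * 1e3  # 1,613 TFLOPs/s is the article's quoted figure
print(f"{flops:.3e} FLOPs, about {ms:.2f} ms at 1,613 TFLOPs/s")
```

Real kernels rarely sustain their best reported rate across all shapes, so treat the result as a lower bound on runtime for this hypothetical workload.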

The kernel builds on the FlashAttention lineage developed by Tri Dao at Princeton. The original FlashAttention and FlashAttention-2 reduced attention’s memory footprint from quadratic to linear in sequence length; FlashAttention-3 optimized for Hopper GPUs. FlashAttention-4’s contribution is demonstrating that hardware-specific co-design can extract substantially more performance than portable implementations, at the cost of being locked to a single GPU architecture.
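The quadratic-to-linear memory reduction rests on computing softmax incrementally, so the full row of attention scores never has to be stored at once. The following is a minimal pure-Python sketch of that online-softmax idea for a single query vector. It illustrates the general technique only and is not the FlashAttention-4 kernel; the function names, block size, and sample data are all made up for the example.

```python
import math


def naive_attention(q, keys, values):
    """Reference version: materializes every score for the query at once."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dim)]


def streaming_attention(q, keys, values, block_size=2):
    """Same result, but only block_size scores are held in memory at a time."""
    scale = 1.0 / math.sqrt(len(q))
    dim = len(values[0])
    running_max = -math.inf  # largest score seen so far
    running_sum = 0.0        # softmax denominator, relative to running_max
    acc = [0.0] * dim        # unnormalized output, relative to running_max
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial results to the new maximum (0.0 on the first block).
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            for j in range(dim):
                acc[j] += w * v[j]
        running_max = new_max
    return [a / running_sum for a in acc]


# Made-up sample data: one 2-dimensional query against five keys and values.
q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [-1.0, 2.0], [2.0, -1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]

reference = naive_attention(q, keys, values)
streamed = streaming_attention(q, keys, values, block_size=2)
print(max(abs(a - b) for a, b in zip(reference, streamed)))
```

The two functions should agree to within floating-point rounding. Production kernels apply the same rescaling to tiles of queries and keys held in on-chip memory, which is where the hardware-specific work comes in.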