Tufts University researchers unveiled a neuro-symbolic AI architecture in April 2026 that cuts energy consumption by up to a factor of 100 compared with standard large language model-based approaches, while also improving task accuracy in robotic systems. The work targets vision-language-action (VLA) models, the current frontier of robotics AI, and will be presented at the International Conference on Robotics and Automation (ICRA) in Vienna in May 2026.

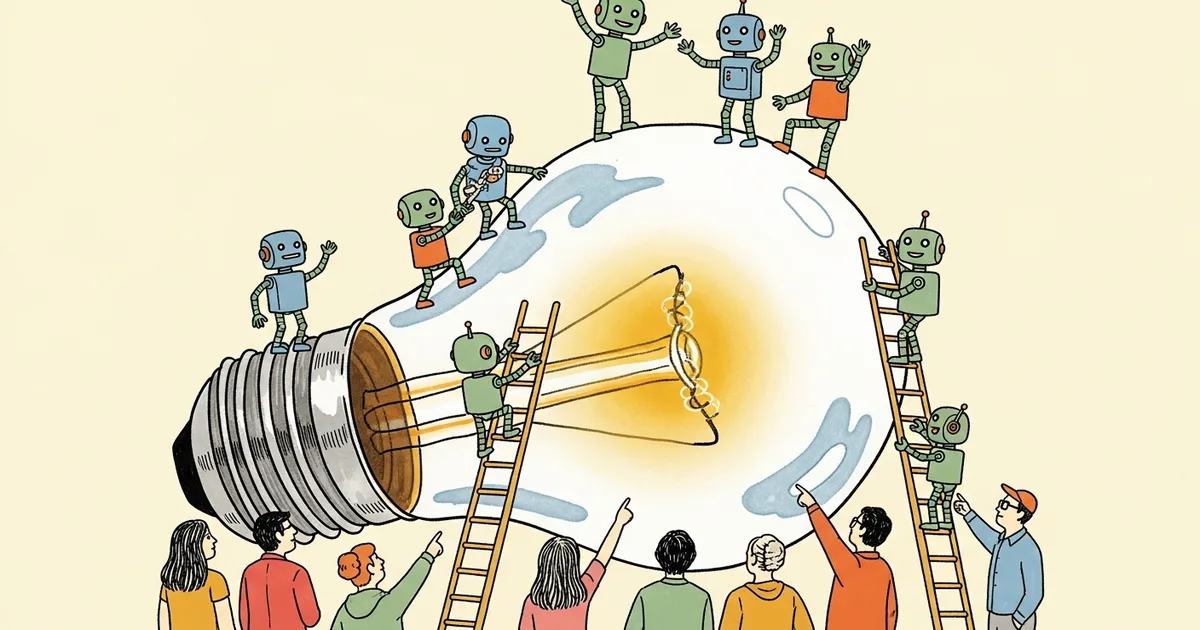

The system works by combining traditional neural networks — the statistical pattern-matching engines that power ChatGPT and its competitors — with symbolic reasoning systems that mirror how humans decompose complex problems into logical steps. The neural component handles perception; the symbolic component handles decision-making. The result is a hybrid architecture that cuts the continuous energy draw of transformer-based inference without sacrificing the perceptual flexibility that makes modern AI useful in physical environments.

What VLA Models Are and Why They Consume So Much Power

Vision-language-action models pose one of the hardest computational problems in current robotics. Unlike a chatbot that outputs text tokens, a VLA model must simultaneously interpret live camera feeds, parse natural language commands, and translate both into precise physical motor instructions. It must do so in real time, continuously, on hardware that may be mounted to a robot arm operating in an unstructured environment.

Running a full transformer-based VLA on embedded hardware is prohibitively power-hungry for most battery-powered platforms. These models typically require GPU clusters or high-end edge accelerators to achieve acceptable latency. Deploying fleets of such robots at industrial scale creates infrastructure demands comparable to building dedicated AI data centers worth billions of dollars; Nebius’s planned $10 billion facility in Finland is a real-world index of how severe that demand has become.

The Tufts team attacks this problem at the architectural level, not through hardware optimization, model compression, or quantization.

The Neuro-Symbolic Architecture: How It Works

The core insight is that not every part of a robot’s decision loop requires neural-scale computation. Neural networks excel at perception — recognizing objects, parsing language, interpreting spatial relationships from raw sensor data. Symbolic reasoning systems excel at logic — structured rules like “if object A is at position X and gripper is at position Y, execute grasp sequence Z.” The Tufts architecture builds a pipeline that separates these concerns cleanly.

- Perception layer: A neural network processes visual and language inputs, converting raw sensor data into symbolic representations that the reasoning layer can act on

- Symbolic reasoner: A logic engine applies domain-specific rules to those representations, deriving action plans through deterministic inference rather than probabilistic sampling

- Action executor: Physical motor commands are generated from the symbolic plan — not continuously from neural outputs, which eliminates the need for constant high-power inference
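The three stages above can be sketched as a toy pipeline. This is a minimal illustration, not the Tufts implementation: the `Fact` tuples, the `perceive` stub, the rule inside `plan`, and the command strings are all hypothetical names chosen for the example, and the perception step is faked with a dictionary lookup where a real system would run a neural network.

```python
from typing import NamedTuple


class Fact(NamedTuple):
    """A symbolic statement such as at(cup, shelf)."""
    predicate: str
    subject: str
    value: str


def perceive(frame: dict, command: str) -> set[Fact]:
    """Stand-in for the neural perception layer (hypothetical).

    A real system would run vision and language models here; this stub
    just reads pre-labelled positions out of a dict.
    """
    facts = {Fact("at", name, pos) for name, pos in frame.items()}
    # Naive language grounding: the last word of the command is the target.
    facts.add(Fact("goal", "grasp", command.split()[-1]))
    return facts


def plan(facts: set[Fact]) -> list[str]:
    """Symbolic reasoner: deterministic rules over facts, no sampling."""
    positions = {f.subject: f.value for f in facts if f.predicate == "at"}
    targets = [f.value for f in facts if f.predicate == "goal"]
    steps: list[str] = []
    for target in targets:
        if target not in positions:
            continue  # no rule fires without a perceived position
        # Rule: move only if the gripper is not already at the object.
        if positions.get("gripper") != positions[target]:
            steps.append(f"move_to {positions[target]}")
        steps.append(f"grasp {target}")
    return steps


def execute(steps: list[str]) -> list[str]:
    """Action executor stub: a real one would emit motor commands."""
    return [f"EXEC {step}" for step in steps]


frame = {"cup": "shelf", "gripper": "home"}
commands = execute(plan(perceive(frame, "pick up the cup")))
```

In this sketch the only place a neural network would run is `perceive`; `plan` and `execute` are plain deterministic code, which is the separation of concerns the article describes.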

The energy savings follow directly from this structure. The energy-intensive neural components activate only when new perceptual input requires processing. The symbolic reasoner handles moment-to-moment decision-making at a fraction of the compute cost — deterministic logic rules consume negligible power compared to transformer attention heads.
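To see how intermittent neural inference translates into an energy ratio, here is a back-of-the-envelope duty-cycle model. The wattages and the 0.8% duty cycle are illustrative assumptions, not figures from the Tufts work, and `average_power` is a hypothetical helper.

```python
def average_power(neural_w: float, symbolic_w: float, duty_cycle: float) -> float:
    """Mean compute power when the neural stage runs only part of the time.

    duty_cycle is the fraction of wall-clock time the neural network is
    active (0.0 to 1.0); the symbolic reasoner is assumed always on.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * neural_w + symbolic_w


# Assumed numbers for illustration only.
always_on = average_power(neural_w=500.0, symbolic_w=0.0, duty_cycle=1.0)
hybrid = average_power(neural_w=500.0, symbolic_w=1.0, duty_cycle=0.008)
reduction = always_on / hybrid
```

Under these assumptions the neural stage has to sit idle roughly 99% of the time to reach a 100x ratio, so in a model like this the saving depends heavily on how often the scene changes enough to require fresh perception.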

The 100x Energy Reduction: What the Numbers Mean

A 100x efficiency claim requires calibration. The Tufts researchers compare their system against baseline VLA architectures running equivalent manipulation tasks under controlled benchmark conditions. To make the figure concrete: if a standard transformer-based VLA system consumes 500 watts during a pick-and-place task, a 100x reduction implies 5 watts, roughly the power budget of a small single-board computer rather than a GPU.

At 5 watts, the deployment scenarios change entirely. Long-duration field robots become viable on standard battery packs. Powered prosthetics and assistive devices — which have hard constraints on weight and battery size — become candidates for VLA-class intelligence. Household robots that currently require dock-charging every 30 minutes of active operation could sustain hours of continuous task execution.
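The runtime effect can be checked with simple arithmetic. The sketch below is an illustration under assumed numbers: the 100 Wh battery and the 50 W actuator draw are hypothetical, and only the 500 W and 5 W compute figures come from the example above.

```python
def runtime_hours(battery_wh: float, compute_w: float, actuator_w: float = 0.0) -> float:
    """Hours of operation for a given battery and steady power draw."""
    total_w = compute_w + actuator_w
    if total_w <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total_w


BATTERY_WH = 100.0  # assumed battery capacity
ACTUATOR_W = 50.0   # assumed average motor draw

# Compute in isolation: 100 Wh / 5 W versus 100 Wh / 500 W.
compute_only_gain = runtime_hours(BATTERY_WH, 5.0) / runtime_hours(BATTERY_WH, 500.0)

# Whole robot: the motors draw the same power in both cases.
baseline = runtime_hours(BATTERY_WH, 500.0, ACTUATOR_W)
hybrid = runtime_hours(BATTERY_WH, 5.0, ACTUATOR_W)
whole_robot_gain = hybrid / baseline
```

With these assumed numbers the compute-only gain is 100x, while the whole-robot runtime gain is 10x because the motors still draw power. The benefit is largest on platforms where inference dominates the power budget.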

Accuracy improvements accompany the efficiency gains, which is the less intuitive result. Symbolic reasoning systems are, by design, more consistent than neural networks on tasks with well-defined logical structure. A neural network occasionally misclassifies spatial relationships or misinterprets object categories under lighting changes; a symbolic system operating on verified symbolic representations from the perception layer makes fewer errors on the logical reasoning steps. The two failure modes are different, and the hybrid architecture exploits that difference.

Why This Approach Has Taken This Long to Arrive

Neuro-symbolic AI has existed as a research agenda since the 1980s, when symbolic AI was the dominant paradigm and neural networks were a fringe academic pursuit. The tension between the two approaches defined the field for decades. Symbolic systems were interpretable and logically consistent but brittle — they failed completely on any input outside their hand-coded rule sets. Neural networks were flexible and generalizable but opaque, energy-intensive, and data-hungry.

The commercial AI wave since 2020 has run almost entirely on neural approaches, leaving symbolic systems as an academic backwater. But that neural dominance carries compounding costs: the energy required to run frontier models has become a genuine infrastructure constraint. AI workloads now register as a measurable percentage of national electricity consumption in multiple countries, and the International Energy Agency projected in 2024 that electricity consumption from data centers, AI, and cryptocurrency could double by 2026. The proliferation of AI across every application category, from weather apps to enterprise search, has accelerated that trajectory.

What changed that makes the Tufts approach viable now is the quality of the neural perception layer. Modern vision transformers and language models handle perception tasks with accuracy that 1980s symbolic AI researchers simply could not achieve. That makes the handoff from neural to symbolic clean and reliable in a way it never was before — the symbolic layer receives high-quality input, so its deterministic rules produce high-quality output.

Robotics Is the Right Domain to Prove This Works

The choice to focus on robotics is strategically sound, not accidental. Robotics is where the two biggest constraints on current AI deployment — real-time performance requirements and strict power budgets — converge hardest. A cloud-hosted LLM can consume a megawatt and nobody objects as long as latency stays under a second. A fully autonomous exploration system operating in unstructured terrain has no data center to offload to and no utility grid to plug into.

Robotics also provides benchmarks that are genuinely measurable. Task completion rates, cycle times, error rates under distribution shift, and energy consumption per completed task are all quantifiable. That makes robotic manipulation tasks ideal for validating efficiency claims that might otherwise be dismissed as benchmark-specific artifacts.
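As a rough illustration of how such metrics might be tallied, the sketch below computes completion rate, mean cycle time, and energy per completed task from a list of trial records. The `Trial` record format and the sample values are hypothetical and do not come from the Tufts benchmarks.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One benchmark run (hypothetical record format)."""
    succeeded: bool
    energy_joules: float
    cycle_time_s: float


def summarize(trials: list[Trial]) -> dict[str, float]:
    """Completion rate, mean cycle time, and energy per completed task."""
    if not trials:
        raise ValueError("need at least one trial")
    successes = sum(t.succeeded for t in trials)
    total_energy = sum(t.energy_joules for t in trials)
    return {
        "completion_rate": successes / len(trials),
        "mean_cycle_time_s": sum(t.cycle_time_s for t in trials) / len(trials),
        # Failed attempts still cost energy, so divide by successes only.
        "energy_per_completed_task_j": (
            total_energy / successes if successes else float("inf")
        ),
    }


# Made-up sample data for illustration.
trials = [
    Trial(True, 40.0, 8.0),
    Trial(True, 50.0, 10.0),
    Trial(False, 30.0, 12.0),
    Trial(True, 40.0, 10.0),
]
report = summarize(trials)
```

Dividing total energy by successful runs only is a design choice: it penalizes a system that saves power by failing more often, which keeps the efficiency and accuracy claims tied together.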

VLA models in particular — the category the Tufts work specifically addresses — represent the maximum-difficulty version of the embedded AI problem. Systems that must jointly process vision, language, and action in real time under power constraints are the stress test that matters commercially. Solving that at 100x lower energy cost is not a narrow laboratory finding.

What the ICRA Presentation in Vienna Will Reveal

The International Conference on Robotics and Automation is a premier peer-reviewed venue for robotics research. Presenting the Tufts work there in May 2026 subjects it to scrutiny from the researchers most likely to identify methodological gaps, benchmark limitations, or replication issues: adversarial domain experts rather than favorable reviewers.

Three questions will dominate the ICRA hallway conversations. First: how does accuracy hold on out-of-distribution tasks, where the neural perception layer encounters objects, environments, or language instructions unlike those in training data? The symbolic layer can only reason correctly if the neural layer provides accurate symbolic representations — distribution shift corrupts that input. Second: how does the system handle tasks that require reasoning beyond the predefined symbolic rule set? Third: what is the actual engineering cost of building and maintaining the symbolic layer when deploying to a new domain or task category?

None of these are fatal objections. They are engineering constraints that determine the scope of applicability. The answers will shape whether this architecture becomes a deployable platform or a proof-of-concept that informs future hybrid designs.

Commercial Implications for the Robotics Market

Boston Dynamics, Figure AI, and 1X Technologies are all racing to deploy humanoid and semi-humanoid robots at commercial scale in 2026. Energy efficiency is a direct determinant of unit economics, operational cost, and deployment flexibility. A robot platform whose onboard compute runs 100x longer on the same battery, or can be fed by a power system 100x smaller and lighter, is a different product category, not an incremental improvement to an existing one. How much of that gain reaches whole-robot runtime depends on how much of the power budget goes to actuators rather than inference.

Several research groups and major labs including DeepMind have published neuro-symbolic integration work in recent years. The Tufts contribution distinguishes itself through the specific application to VLA models and the task-level energy reduction benchmarks, which are the numbers commercial engineering teams and investors will examine under replication conditions. General claims about hybrid AI efficiency have circulated for years; claims tied to specific task benchmarks on a specific model class are harder to dismiss.

MegaOne AI tracks 139+ AI tools across 17 categories. Neuro-symbolic robotics architectures represent a category that has been dormant in commercial AI tracking but is re-entering relevance rapidly as energy constraints tighten and the robotics market moves from demonstration toward production deployment.

The Architecture Signal Worth Taking Seriously

The prevailing approach to AI capability — scale a transformer, scale the hardware to run it, externalize the energy cost to grid infrastructure — is facing its first principled architectural challenge from the physical AI domain. That challenge is not philosophical. It is grounded in watts, battery chemistry, and the economics of operating a robot at scale without a data center nearby.

If the Tufts neuro-symbolic VLA benchmarks hold up under scrutiny at ICRA and in independent replication, the implication extends beyond robotics: hybrid architectures that allocate neural compute to perception and symbolic compute to reasoning may represent a more sustainable design pattern for any AI system operating under real resource constraints. The robotics domain just happens to make those constraints impossible to ignore. Watch the Vienna conference in May and the replication attempts that follow; independent replication data will be more informative than the original paper.
