- Alibaba released three closed-source AI models between March 30 and April 2, 2026: the multimodal Qwen3.5-Omni, the image-generation model Wan2.7-Image-Pro, and the agentic coding model Qwen3.6-Plus.

- Qwen3.6-Plus scores 61.6 on Terminal-Bench 2.0, putting it ahead of Claude Opus 4.5 (59.3) on that agentic coding benchmark, while trailing on SWE-bench Verified (78.8 vs. 80.9).

- Qwen3.6-Plus is priced as low as 2 yuan ($0.29) per million input tokens on Alibaba Cloud, and was available free during a preview period on OpenRouter through April 3, 2026.

- OpenAI closed a $122 billion funding round on March 31, 2026, at an $852 billion valuation — the same week Alibaba shipped three separate frontier-tier models.

What Happened

Alibaba’s Qwen team released Qwen3.6-Plus on April 2, 2026, its third proprietary AI model in four days. The release followed Qwen3.5-Omni on March 30 and Wan2.7-Image-Pro on April 1. All three models are closed-source, meaning developers cannot download, inspect, or adapt the weights. That is a deliberate departure from Alibaba’s earlier open-source strategy.

Bloomberg reported that the cluster of releases reflects Alibaba’s intent to monetize its flagship AI services rather than build developer goodwill through open weights. The company has stated a target of $100 billion in AI revenue over the next five years.

Why It Matters

The timing places Alibaba’s three-model sprint directly alongside OpenAI’s $122 billion funding close on March 31, 2026 — a round anchored by $50 billion from Amazon, $30 billion from Nvidia, and $30 billion from SoftBank at an $852 billion valuation. OpenAI described the capital as fuel for compute infrastructure, research, and product scaling.

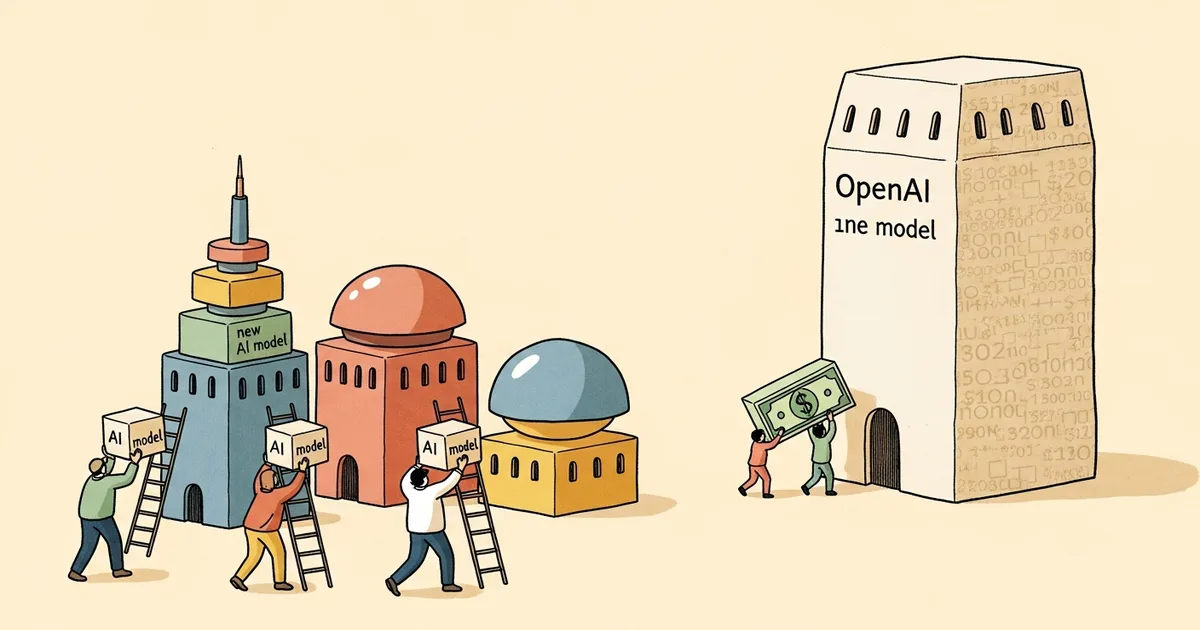

The juxtaposition illustrates two diverging resource models. Western frontier labs are raising capital at scale and shipping models on longer cycles, while Alibaba released a multimodal model, a text-to-image model, and a coding-focused language model in roughly 72 hours, each targeting a distinct capability tier and enterprise use case.

The Qwen series now competes with recent flagship releases from Anthropic and OpenAI on published benchmarks, not just in narrow tasks but across coding, document recognition, and multimodal reasoning. That shift matters for any enterprise currently weighing vendor lock-in.

Technical Details

Qwen3.6-Plus carries a 1-million-token context window and up to 65,536 output tokens. According to Alibaba’s official technical blog, the model is designed to “autonomously plan, test, and iterate on code to deliver production-ready solutions by managing the full execution loop from objective breakdown to final refinement.” On Terminal-Bench 2.0, a benchmark measuring agentic terminal task completion, Qwen3.6-Plus scores 61.6 against 59.3 for Claude Opus 4.5. On SWE-bench Verified it scores 78.8 against Claude Opus 4.5’s 80.9, and on SWE-bench Pro 56.6 against 57.1, indicating that Claude retains a narrow lead on repository-level software engineering tasks.
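The two limits interact: a request has to leave room for its output inside the context window. The sketch below checks a planned request against the reported figures. It assumes that “1 million tokens” means exactly 1,000,000 and that the window is shared by prompt and output; neither detail is confirmed in the material cited here, and the function names are hypothetical.

```python
# Hypothetical budget check for Qwen3.6-Plus requests.
# Assumptions (not confirmed by the Alibaba materials cited in this article):
#   - "1 million tokens" means exactly 1,000,000
#   - prompt tokens and output tokens share the same context window
CONTEXT_WINDOW = 1_000_000   # reported context window, assumed exact
MAX_OUTPUT_TOKENS = 65_536   # reported maximum output tokens


def fits_budget(prompt_tokens: int, max_output: int) -> bool:
    """True if the request respects both the output cap and the shared window."""
    if prompt_tokens < 0 or max_output < 0:
        raise ValueError("token counts must be non-negative")
    if max_output > MAX_OUTPUT_TOKENS:
        return False
    return prompt_tokens + max_output <= CONTEXT_WINDOW


def max_prompt_for(max_output: int) -> int:
    """Largest prompt that still leaves room for max_output tokens of output."""
    if not 0 <= max_output <= MAX_OUTPUT_TOKENS:
        raise ValueError("max_output must be between 0 and the output cap")
    return CONTEXT_WINDOW - max_output


# A near-full prompt still fits when the output budget stays within the cap.
print(fits_budget(900_000, 65_536))   # 965,536 tokens in total
print(max_prompt_for(65_536))
```

Under these assumptions, reserving the full 65,536-token output budget leaves room for a prompt of at most 934,464 tokens.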

On document and visual benchmarks, Qwen3.6-Plus scores 91.2 on OmniDocBench v1.5 (document recognition) versus 87.7 for Claude Opus 4.5, and 85.4 on RealWorldQA (image reasoning) versus 77.0. The model integrates with third-party coding environments including OpenClaw, Claude Code, Cline, Kilo Code, and OpenCode.
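Putting the five head-to-head scores side by side makes the pattern easier to see: Qwen3.6-Plus leads on three benchmarks and trails on both SWE-bench variants, with the coding margins all within a few points. The figures are copied from Alibaba’s launch materials as reported above and have not been independently verified; the script only does the subtraction.

```python
# Head-to-head scores as reported in Alibaba's launch materials
# (not independently verified). Tuple order: (Qwen3.6-Plus, Claude Opus 4.5).
SCORES = {
    "Terminal-Bench 2.0": (61.6, 59.3),
    "SWE-bench Verified": (78.8, 80.9),
    "SWE-bench Pro": (56.6, 57.1),
    "OmniDocBench v1.5": (91.2, 87.7),
    "RealWorldQA": (85.4, 77.0),
}


def margins(scores: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Qwen score minus Claude score per benchmark, rounded to one decimal."""
    return {name: round(qwen - claude, 1) for name, (qwen, claude) in scores.items()}


for name, delta in margins(SCORES).items():
    if delta > 0:
        leader = "Qwen3.6-Plus ahead"
    elif delta < 0:
        leader = "Claude Opus 4.5 ahead"
    else:
        leader = "tie"
    print(f"{name:<20} {delta:+5.1f}  {leader}")
```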

Qwen3.5-Omni, the first of the three releases, processes text, images, audio, and video within a single model using a Thinker-Talker architecture and a Hybrid-Attention Mixture of Experts. It supports a 256,000-token context window, handles up to 10 hours of audio or approximately 400 seconds of 720p video, and recognizes speech in 113 languages; at launch, Alibaba claimed state-of-the-art results on 215 benchmarks. Wan2.7-Image-Pro, released on April 1, generates images at up to 4K (4096×4096) resolution, includes a built-in reasoning mode, and renders text in 12 languages.

Who’s Affected

Enterprise buyers evaluating agentic coding tools are the most directly affected segment. Qwen3.6-Plus is accessible via Alibaba Cloud’s Model Studio, the Wukong enterprise AI platform, and the Qwen App — and its API input pricing starts at 2 yuan ($0.29) per million tokens on Alibaba Cloud’s Bailian platform. That compares to significantly higher per-token rates for Claude Opus 4.6 and GPT-5.4, making the cost differential a concrete factor for high-volume workloads.
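For a rough sense of scale, the reported starting rate can be turned into a monthly input bill. This is a minimal sketch, not a pricing calculator: it covers input tokens only (no output-token rate is given here), uses the exchange rate implied by the article’s own conversion of 2 yuan to $0.29, and the 500-million-token workload is a hypothetical example.

```python
from decimal import Decimal

# Reported starting input price on Alibaba Cloud Bailian: 2 yuan per million
# input tokens. Output-token pricing is not stated, so it is left out here.
INPUT_YUAN_PER_MILLION = Decimal("2")
# Exchange rate implied by the article's own conversion (2 yuan = $0.29).
USD_PER_YUAN = Decimal("0.29") / Decimal("2")


def input_cost(input_tokens: int) -> tuple[Decimal, Decimal]:
    """Return (yuan, usd) for input tokens billed at the starting rate."""
    yuan = INPUT_YUAN_PER_MILLION * Decimal(input_tokens) / Decimal(1_000_000)
    usd = (yuan * USD_PER_YUAN).quantize(Decimal("0.01"))  # round to cents
    return yuan, usd


# Hypothetical workload: 500 million input tokens per month.
yuan, usd = input_cost(500_000_000)
print(f"{yuan} yuan (about ${usd})")
```

At that rate, 500 million input tokens come to 1,000 yuan, or about $145. A real bill would also include output tokens and any pricing tiers above the starting rate.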

Western AI labs face a structural pressure distinct from benchmark competition. Alibaba is simultaneously covering multimodal perception, image generation, and agentic coding with separate optimized models released in rapid succession, rather than consolidating capabilities into a single model update cycle. Developers building on any of those three capability surfaces now have a price-competitive alternative with an aggressive release cadence.

The closed-source pivot also affects the open-source AI community. Prior Qwen releases, including the Qwen3 and Qwen3.5 families, were open-weight. Winbuzzer noted that keeping Qwen3.5-Omni closed broke Alibaba’s prior open-source streak, a signal that Alibaba is prioritizing revenue capture over ecosystem expansion at the frontier tier.

What’s Next

Qwen3.6-Plus exited its free preview period on OpenRouter on April 3, 2026. Permanent API pricing beyond the Alibaba Cloud Bailian rate has not been officially published as of this writing. Alibaba has not released a public technical report or full benchmark suite for Qwen3.6-Plus, and independent third-party verification of the benchmark figures cited in Alibaba’s launch materials remains limited.

Alibaba’s stated $100 billion AI revenue target over five years requires converting model releases into paid enterprise contracts at scale. The three-model sprint establishes a breadth of coverage, but enterprise adoption cycles for agentic coding tools typically run three to six months for procurement and security review. Whether the release velocity translates into contracted revenue — rather than benchmark headlines — is the concrete question that follows this week’s announcements.