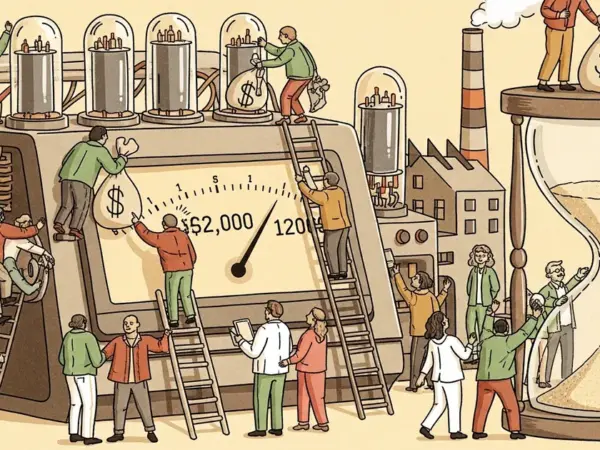

- OpenAI released GPT-5.5 on April 24, 2026, an agentic model priced at $5 per million input tokens and $30 per million output tokens—double the cost of its predecessor GPT-5.4.

- On Terminal-Bench 2.0, GPT-5.5 scores 82.7%, outperforming Anthropic’s Claude Opus 4.7 (69.4%) and Google’s Gemini 3.1 Pro (68.5%), though it trails both on the MCP Atlas tool-use benchmark.

- A companion model, GPT-5.5 Pro, launches at $30 per million input and $180 per million output tokens, available to Pro, Business, and Enterprise ChatGPT subscribers.

- OpenAI rates GPT-5.5’s cybersecurity capabilities as “High” under its Preparedness Framework—consistent with recent predecessors and below the “Critical” threshold.

What Happened

OpenAI released GPT-5.5 on April 24, 2026, describing it as “a new class of intelligence for real work and powering agents,” according to The Decoder’s report on the announcement. The model is designed to handle complex, multi-step tasks autonomously, switching between tools such as code execution, web search, and data analysis without human intervention at each step. Paying ChatGPT and Codex users on Plus, Pro, Business, and Enterprise plans received access immediately. API access is listed as coming “very soon,” priced at twice the cost of GPT-5.4.

Why It Matters

The release positions GPT-5.5 directly against Anthropic’s Claude Opus 4.7 and Google’s Gemini 3.1 Pro in a market where agentic task completion (models operating over extended action sequences rather than single-turn prompts) has become a primary differentiator. GPT-5.5 leads on several coding and math benchmarks while trailing on others, which suggests that no single model dominates across all agentic task types. The doubled API price raises a concrete cost-benefit question for developers currently using GPT-5.4.

Technical Details

On Terminal-Bench 2.0, a benchmark measuring agentic coding workflow performance, GPT-5.5 scores 82.7%—7.6 percentage points above GPT-5.4’s 75.1%, and ahead of Claude Opus 4.7 (69.4%) and Gemini 3.1 Pro (68.5%). On FrontierMath Tier 4, an advanced mathematics evaluation, GPT-5.5 reaches 35.4% versus 22.9% for Claude Opus 4.7 and 16.7% for Gemini 3.1 Pro; the GPT-5.5 Pro variant pushes that figure to 39.6%.

Long-context handling improved substantially. On MRCR v2, which tests reliable retrieval across documents of 512K to 1M tokens, GPT-5.5 scores 74.0% compared to 36.6% for GPT-5.4. On the Graphwalks BFS test at one million tokens, the model jumps from 9.4% (GPT-5.4) to 45.4%.

The model does not lead every category. On SWE-Bench Pro, which evaluates real GitHub issue resolution, Claude Opus 4.7 scores 64.3% versus GPT-5.5’s 58.6%—though OpenAI noted that Anthropic itself acknowledged signs of benchmark memorization in that evaluation. On MCP Atlas, a tool-use benchmark run by Scale AI, GPT-5.5 scores 75.3%, trailing Claude Opus 4.7 (79.1%) and Gemini 3.1 Pro (78.2%).
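The mixed picture can be checked directly from the scores quoted in this section. The following is a minimal sketch that computes GPT-5.5's margin against its strongest listed rival on each benchmark. The dictionary layout and the function name `margin_vs_best_rival` are illustrative choices, and only figures reported above are included (no Gemini 3.1 Pro score is given for SWE-Bench Pro, so it is left out).

```python
# Benchmark scores in percent, copied from the figures quoted in the article.
SCORES = {
    "Terminal-Bench 2.0": {"GPT-5.5": 82.7, "Claude Opus 4.7": 69.4, "Gemini 3.1 Pro": 68.5},
    "FrontierMath Tier 4": {"GPT-5.5": 35.4, "Claude Opus 4.7": 22.9, "Gemini 3.1 Pro": 16.7},
    # The article gives no Gemini 3.1 Pro figure for SWE-Bench Pro.
    "SWE-Bench Pro": {"GPT-5.5": 58.6, "Claude Opus 4.7": 64.3},
    "MCP Atlas": {"GPT-5.5": 75.3, "Claude Opus 4.7": 79.1, "Gemini 3.1 Pro": 78.2},
}


def margin_vs_best_rival(scores, model="GPT-5.5"):
    """Return (best rival, margin in percentage points) for one benchmark.

    A positive margin means `model` leads; a negative one means it trails.
    """
    rivals = {name: score for name, score in scores.items() if name != model}
    best = max(rivals, key=rivals.get)
    return best, round(scores[model] - rivals[best], 1)


if __name__ == "__main__":
    for benchmark, scores in SCORES.items():
        rival, margin = margin_vs_best_rival(scores)
        print(f"{benchmark}: {margin:+.1f} points vs {rival}")
```

On these figures, GPT-5.5 leads by double-digit margins on Terminal-Bench 2.0 and FrontierMath Tier 4 and trails by single digits on SWE-Bench Pro and MCP Atlas.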

OpenAI reports that GPT-5.5 was developed and optimized alongside NVIDIA GB200 and GB300-NVL72 systems. In a notable feedback loop, Codex running on GPT-5.5 analyzed production traffic patterns and wrote its own load-balancing heuristics, increasing token generation speed on OpenAI’s serving infrastructure by more than 20%. “The model helped improve the infrastructure that serves it,” the company stated in its announcement.

Who’s Affected

API developers face a direct pricing increase: $5 per million input tokens and $30 per million output tokens for GPT-5.5, versus $2.50 and $15 for GPT-5.4. GPT-5.5 Pro carries a steeper charge at $30 per million input and $180 per million output tokens. A fast mode in Codex generates tokens 1.5× faster at 2.5× the standard cost. OpenAI has not announced a timeline for free-tier access.
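To make the price gap concrete, here is a rough sketch of how a developer might estimate spend at the per-token prices quoted above. The workload size (200 million input and 40 million output tokens per month) is an invented example, and the dictionary keys are plain labels for this illustration, not confirmed API model identifiers. Codex fast mode is left out because the article does not state what base its 2.5× multiplier applies to.

```python
# USD per million tokens, as quoted in the article.
# The keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gpt-5.5-pro": {"input": 30.00, "output": 180.00},
}


def estimated_cost(model, input_tokens, output_tokens):
    """Estimate cost in USD for a given token volume at the quoted prices."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical monthly workload (assumption, not from the article).
INPUT_TOKENS = 200_000_000
OUTPUT_TOKENS = 40_000_000

if __name__ == "__main__":
    for model in PRICES:
        cost = estimated_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
        print(f"{model}: ${cost:,.2f} per month")
```

For this assumed workload the estimate comes to $1,100 on GPT-5.4, $2,200 on GPT-5.5, and $13,200 on GPT-5.5 Pro. Because both the input and output rates double, the GPT-5.5 bill is twice the GPT-5.4 bill regardless of the input-to-output mix.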

Security teams and researchers face a mixed picture. OpenAI classifies GPT-5.5’s cybersecurity capabilities as “High” in its Preparedness Framework, the same tier as recent predecessors and below “Critical.” The model scores 81.8% on the CyberGym benchmark (versus 79.0% for GPT-5.4) and 88.1% on internal capture-the-flag tasks (versus 83.7%). At the same time, OpenAI is deploying stricter risk classifiers that the company says may produce more refusals initially. A new Trusted Access for Cyber program will offer verified security researchers expanded access to the model’s cybersecurity capabilities.

What’s Next

OpenAI has not specified an API release date beyond “very soon” and has not announced when free ChatGPT users will gain access. Benchmark results for GPT-5.5 Pro remain partial: OpenAI has published scores on only three of the nine benchmarks tested for the base model, and a full comparative evaluation has not been released. The company states it is coordinating with government partners on critical infrastructure protection tied to the model’s cybersecurity capabilities, with additional details in a system card published alongside the launch.