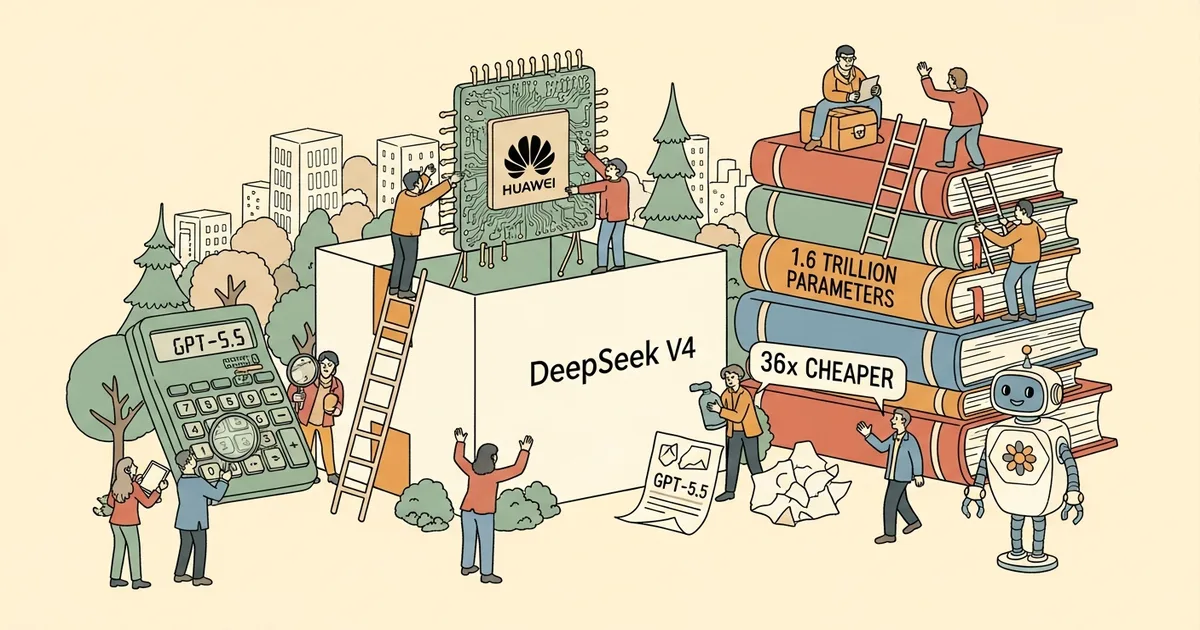

DeepSeek, the Hangzhou-based AI research lab, released DeepSeek V4 in preview on April 24, 2026, immediately setting the record for the largest open-weight model ever released. V4-Pro carries 1.6 trillion total parameters with 49 billion active via sparse mixture-of-experts routing; V4-Flash runs at 284 billion total with 13 billion active. Both ship with 1-million-token context windows, MIT licensing, and open weights on Hugging Face. The entire training run used Huawei Ascend 950 chips, not a single Nvidia GPU. V4-Flash’s input pricing of $0.14 per million tokens is roughly 36x cheaper than GPT-5.5’s $5 per million. DeepSeek openly discloses that V4 trails frontier models by “approximately 3-6 months” and is betting that most enterprise teams won’t care.

V4-Pro vs. V4-Flash: Two Models, Two Markets

DeepSeek’s two-tier release separates capability from throughput rather than packaging one model at different quality points.

V4-Pro is the technical statement: 1.6 trillion total parameters make it the largest open-weight model in existence, surpassing every Llama 4, Mixtral, and Falcon release to date. Sparse MoE routing activates only 49 billion parameters per inference pass, keeping per-token compute within reach despite the headline parameter count. The Hybrid Attention Architecture — a sliding-window plus global attention design — directly addresses the dominant long-context failure mode: models losing coherence with earlier conversation turns as context length grows. At 1 million tokens, this matters for legal document review, full-codebase analysis, and multi-session agent workflows where context continuity is operationally critical.
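The article does not say how V4 combines its local and global attention paths. As a generic illustration of the sliding-window plus global pattern, the sketch below builds a causal attention mask in which each token sees a fixed window of recent positions plus a set of designated global positions. The function names, window size, and global positions are illustrative assumptions; this is not DeepSeek’s implementation.

```python
def hybrid_attention_mask(seq_len: int, window: int, global_positions: set[int]) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j.

    Causal: a token never attends to later positions.
    Local: each token sees the previous `window` positions, itself included.
    Global: designated positions are visible to every later token, and a
    global query sees everything before it.
    """
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            causal = j <= i
            local = i - j < window
            global_link = i in global_positions or j in global_positions
            row.append(causal and (local or global_link))
        mask.append(row)
    return mask


def attended_pairs(mask: list[list[bool]]) -> int:
    """Count (query, key) pairs that get scored, a rough proxy for attention compute."""
    return sum(sum(row) for row in mask)


# Illustrative sizes: 8 tokens, a 3-token local window, position 0 marked global.
mask = hybrid_attention_mask(seq_len=8, window=3, global_positions={0})
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))

full_causal = 8 * 9 // 2  # pairs scored by ordinary causal attention
print(attended_pairs(mask), "of", full_causal, "causal pairs")  # prints: 26 of 36 causal pairs
```

For a fixed window and a small number of global positions, the number of scored pairs grows roughly linearly with sequence length instead of quadratically, which is the usual motivation for such hybrids at long context lengths; the global positions keep early tokens reachable from far later in the sequence.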

V4-Flash is the production default: 284 billion total parameters, 13 billion active, $0.14 per million input tokens. It runs at lower latency and cost than V4-Pro, engineered for the high-volume inference workloads where most enterprise AI spend actually lands. A V4-Flash-Max variant — the model responsible for the Putnam-200 result discussed below — adds enhanced reasoning at pricing not yet disclosed at launch.

| Model | Total Params | Active Params | Context Window | License | Input Price (per M tokens) |
|---|---|---|---|---|---|
| DeepSeek V4-Pro | 1.6T | 49B | 1M tokens | MIT | TBA |
| DeepSeek V4-Flash | 284B | 13B | 1M tokens | MIT | $0.14 |
| DeepSeek V4-Flash-Max | 284B | 13B | 1M tokens | MIT | TBA |
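The gap between the Total Params and Active Params columns comes from how sparse mixture-of-experts layers work: a router scores every expert for each token and only the top few actually run. The sketch below is a generic top-k router over eight hypothetical experts with k=2, one common gating variant; V4’s expert count and routing rule are not given in the article, so treat it as an illustration of the technique rather than DeepSeek’s code. It also computes the active share implied by the table.

```python
import math


def top_k_route(router_logits: list[float], k: int) -> dict[int, float]:
    """Select the k highest-scoring experts and softmax-normalize their weights.

    Only the returned experts run for this token; all others are skipped.
    """
    ranked = sorted(range(len(router_logits)), key=lambda e: router_logits[e], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[e] for e in chosen)  # subtract the max for numerical stability
    exps = {e: math.exp(router_logits[e] - peak) for e in chosen}
    total = sum(exps.values())
    return {e: w / total for e, w in exps.items()}


def active_share(total_params_b: float, active_params_b: float) -> float:
    """Fraction of the model's parameters used per token."""
    return active_params_b / total_params_b


# Hypothetical router scores for a single token over 8 experts.
logits = [0.3, 2.1, -0.7, 1.4, 0.0, -1.2, 0.9, 0.5]
weights = top_k_route(logits, k=2)
print(weights)  # only experts 1 and 3 run for this token

# Parameter counts (billions) from the table above.
for name, total, active in [("V4-Pro", 1600, 49), ("V4-Flash", 284, 13)]:
    print(f"{name}: {active_share(total, active):.1%} of parameters active per token")
```

By the table’s numbers, V4-Pro touches about 3.1% of its parameters per token and V4-Flash about 4.6%, which is why per-token compute tracks the active count rather than the headline total.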

Huawei Ascend 950: The Chip That Rewrites the Export-Control Calculus

Every previous DeepSeek model trained on Nvidia H800s — already an export-restricted, capability-downgraded variant of the H100. V4 ends that dependency entirely. DeepSeek confirmed training on Huawei Ascend 950 chips, completing the first frontier-class model built on a fully domestic Chinese AI stack: domestic training hardware, domestic software frameworks, and open weights that foreign enterprises can download and self-host at no cost.

U.S. export controls have operated on the premise that restricting Nvidia’s most capable GPUs meaningfully delays Chinese frontier model development. V4 is direct evidence against that thesis. The Ascend 950 uses a different cluster interconnect architecture than Nvidia’s NVLink, which required DeepSeek to adapt its distributed training codebase substantially. That adaptation succeeded at 1.6 trillion parameters, a scale no prior Huawei-based training run had publicly reached. The outcome is a capability signal independent of any benchmark result: it means the hardware dependency built into current U.S. export-control strategy is weaker than either the Biden or the Trump administration assumed.

The Ascend 950 does not match Nvidia’s H200 or Blackwell architecture on per-chip throughput. But training efficiency is not purely a function of per-chip FLOP count. DeepSeek V3 was reportedly trained at 2.8x lower cost than comparably sized models through algorithmic and systems-level optimization. Software efficiency gaps close on a different timeline than chip-fabrication gaps, and DeepSeek has now shown it can narrow both at once.

Pricing That Makes GPT-5.5 Look Expensive

V4-Flash at $0.14 per million input tokens against GPT-5.5’s $5 per million produces a 36x price gap that no enterprise procurement model can absorb without justification. A company routing 18 billion input tokens a month would spend about $2,520 on V4-Flash versus $90,000 on GPT-5.5, a monthly delta of roughly $87,500 (about $1.05 million a year) that could fund several additional engineers at typical U.S. software salaries, or stretch the same inference budget across 36x the volume.
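Monthly input spend is simply volume times list price, so the comparison can be checked directly. The sketch below uses only the two list prices quoted in this article and deliberately ignores output tokens, cache discounts, and volume tiers, all of which would change a real bill. At $5 per million, a $90,000 GPT-5.5 bill corresponds to 18 billion input tokens a month.

```python
# List prices per million input tokens (USD), as quoted in this article.
PRICE_PER_M = {
    "DeepSeek V4-Flash": 0.14,
    "GPT-5.5": 5.00,
}


def monthly_input_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Input-token spend for one month; output tokens, caching, and tiers are ignored."""
    return tokens_per_month / 1_000_000 * price_per_million


volume = 18_000_000_000  # 18 billion input tokens per month
flash = monthly_input_cost(volume, PRICE_PER_M["DeepSeek V4-Flash"])
gpt = monthly_input_cost(volume, PRICE_PER_M["GPT-5.5"])

print(f"V4-Flash ${flash:,.0f}  GPT-5.5 ${gpt:,.0f}  delta ${gpt - flash:,.0f}")
# prints: V4-Flash $2,520  GPT-5.5 $90,000  delta $87,480
print(f"price multiple {PRICE_PER_M['GPT-5.5'] / PRICE_PER_M['DeepSeek V4-Flash']:.1f}x")
# prints: price multiple 35.7x
```

Because both bills scale linearly with volume under flat pricing, the roughly 36x ratio holds at any scale; only the absolute dollar delta grows.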

| Model | Input ($/M tokens) | Multiple vs. V4-Flash |
|---|---|---|
| DeepSeek V4-Flash | $0.14 | 1x (baseline) |
| DeepSeek V3 (prior gen) | $0.27 | 1.9x |
| GPT-5.5 | $5.00 | 36x |

DeepSeek’s explicit 3-6 month quality lag disclosure sets the value proposition without ambiguity: this is a one-generation-behind model at 3% of frontier pricing. For document summarization, customer support automation, code assistance, and retrieval-augmented generation pipelines — the four categories that account for the majority of production LLM inference spend — a 3-6 month generation gap is operationally invisible to end users in most deployments.

DeepSeek V4-Flash also launches at roughly half the input price of its predecessor, DeepSeek V3 ($0.14 versus $0.27 per million tokens). The price compression between major DeepSeek releases is itself a structural signal: the cost floor for capable open-weight inference is falling faster than proprietary API prices are.

The Math Benchmark That Should Embarrass Frontier Labs

DeepSeek V4-Flash-Max scores 81.0 on Putnam-200, a competition mathematics evaluation derived from the William Lowell Putnam Mathematical Competition and used as a proxy for rigorous multi-step reasoning under formal constraints. Google’s Gemini 3 Pro scores 26.5 on the same benchmark. The gap is not marginal: it is a 3x performance difference on an evaluation that penalizes hallucination and rewards precise, verifiable logical chains.

Putnam-200 scores above 70 are rare among current large language models. Reaching 81.0 places V4-Flash-Max in territory that most frontier proprietary models have not publicly claimed. For enterprise verticals where math and coding accuracy are directly monetizable — fintech platforms, developer tooling, scientific research infrastructure, quantitative analysis — this result is a procurement argument, not a technical footnote.

V4-Pro’s broader benchmark profile compounds the picture: it is the best open-weight model on math and coding across standard evaluations, trailing only Gemini 3.1 Pro on world knowledge tasks. DeepSeek’s disclosure that V4 lags frontier models by “approximately 3-6 months” on general capability sits awkwardly beside V4-Flash-Max’s Putnam-200 result, which beats Gemini 3 Pro, a current frontier model, by a factor of three. The self-reported lag is calibrated to V4’s weakest dimension, not its strongest.

The IP-Theft Accusation Arrived One Day Early

The White House formally accused China of stealing AI intellectual property through proxy accounts on April 23, 2026 — the day before V4’s release. The accusation implicated training-data acquisition methods alleged to circumvent U.S. export restrictions and licensing agreements, according to multiple reports.

DeepSeek has not responded publicly. Whatever the accusation’s legal merits, it did not delay V4’s release by even a day. The sequence produced a news cycle in which formal U.S. government IP-theft accusations and the largest open-weight model in history competed for the same headlines, and the model dominated.

As competitive dynamics between U.S. and Chinese AI labs intensify across the full industry stack, the pattern of regulatory pressure coinciding with DeepSeek release windows — R1 in January 2025, V3 during a period of export-control tightening, V4 one day after a White House accusation — is consistent enough to analyze on its own terms. The effect in each case: the model story dominates, the regulatory story becomes framing context.

Open Weights, MIT License, and the Self-Hosting Problem for Regulators

MIT licensing means V4’s weights can be downloaded, fine-tuned, deployed commercially, and redistributed with no usage reporting and no royalties. Once those weights leave Hugging Face’s servers, there is no mechanism for DeepSeek, the Chinese government, or U.S. export control authorities to track where V4 is running.

As global AI data center buildout accelerates in jurisdictions outside U.S. regulatory reach, V4’s open weights will run on infrastructure that neither Nvidia nor the Department of Commerce can audit. The 1-million-token context window means self-hosted V4 deployments support the full range of enterprise-grade workflows — legal document analysis, financial modeling, multi-document scientific research — that previously required proprietary API access at 10-36x higher cost per token.

Export controls applied after an open-weight model has been distributed carry considerably less leverage than controls applied before training begins. The V4 weights on Hugging Face are already cached on servers across dozens of countries. This is not an oversight in DeepSeek’s release strategy. The MIT license and open-weight distribution are the strategy — they make post-hoc regulatory intervention structurally ineffective at scale.

What the 3-6 Month Gap Means for AI Infrastructure Decisions in Q2 2026

DeepSeek’s self-reported 3-6 month lag is the most strategically useful data point in the V4 release, and the most underanalyzed. It is less a capability concession than a market-position statement designed to restructure the pricing conversation.

Three to six months in current AI development represents approximately one major model generation cycle. V4’s implicit claim: we are one generation behind the frontier, and we cost 36x less. For the majority of production inference workloads — content generation, code assistance, document summarization, RAG pipelines — being one generation behind a frontier model at 3% of the cost is a better enterprise deal, not a worse one. The organizations for whom that trade fails to hold are a narrow segment: research labs requiring absolute state-of-the-art performance, and applications where frontier-model quality directly determines user-visible output quality.

MegaOne AI tracks 139+ AI tools across 17 categories, and V4’s release immediately reshuffles the open-weight tier. The capability gap between the best open-weight model and GPT-4-class proprietary APIs — which was measured in years as recently as mid-2024 — now fits inside a quarterly business review cycle.

As frontier labs navigate operational complexity at the leading edge, the strategic value of a fast-following, cost-minimizing, open-weight approach becomes clearer with each DeepSeek release. A fully domestic training stack (Huawei silicon, domestic frameworks, MIT-licensed weights) means that V5, or whatever follows V4, need not depend on a single Nvidia chip to train, deploy, or distribute globally.

For enterprise AI infrastructure teams making procurement decisions in Q2 2026: $0.14 per million input tokens is the new floor for high-throughput inference. Any vendor priced above that floor requires a documented performance justification. A 3-6 month generation gap does not provide one for most production use cases — and on Putnam-200 mathematics, the supposed follower is outperforming the supposed leader by a factor of three.