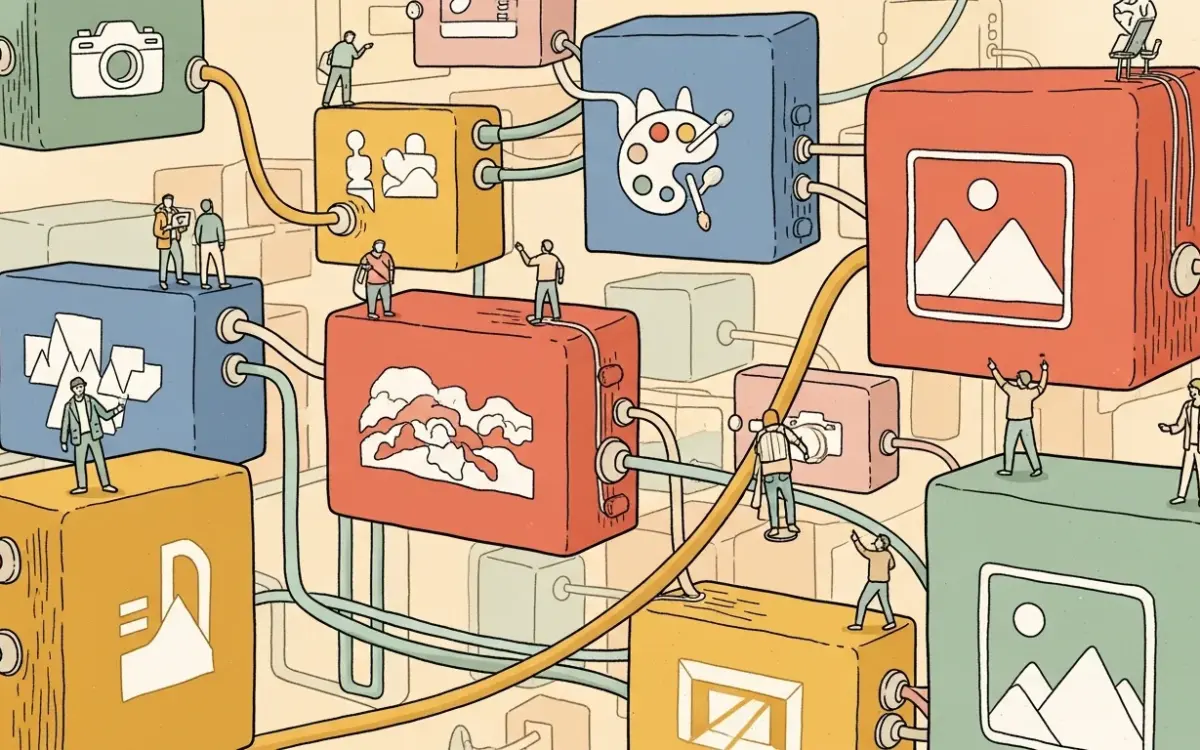

- ComfyUI is a free, open-source node-based interface for running diffusion models, with 108,000 GitHub stars and support for Stable Diffusion, Flux, video, audio, and 3D generation.

- Its graph-based workflow system only re-executes modified nodes, making complex multi-step pipelines significantly faster than re-running entire workflows from scratch.

- The tool runs on GPUs with as little as 1 GB VRAM and supports NVIDIA, AMD, Intel Arc, and Apple Silicon hardware.

- ComfyUI works fully offline, requires no mandatory downloads after setup, and saves workflows as portable JSON files.

What Happened

ComfyUI has established itself as the leading open-source interface for running diffusion models. The project, maintained by Comfy-Org on GitHub, has accumulated 108,000 stars and 12,400 forks as of early 2026. It provides a node-based visual workflow system for image, video, audio, and 3D generation without requiring users to write code.

The platform supports desktop applications for Windows and macOS, a portable standalone build for Windows, a command-line installer via comfy-cli, and manual installation through Git. Weekly stable releases ship on Mondays, with the development team maintaining three interconnected repositories: ComfyUI Core for the backend, ComfyUI Desktop for packaging, and ComfyUI Frontend for the user interface.

Why It Matters

ComfyUI addresses a core friction point in generative AI workflows. Most diffusion model interfaces require users to restart entire generation pipelines when adjusting a single parameter. ComfyUI’s graph-based architecture tracks dependencies between nodes and only re-executes the portions of a workflow that have actually changed between runs.

This partial re-execution model saves significant time when iterating on complex multi-step pipelines that chain together model loading, text conditioning, sampling, upscaling, and post-processing. For professional users running large batches or fine-tuning prompts across dozens of variations, the time savings compound substantially over a working session.
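The idea behind partial re-execution can be illustrated with a toy dependency graph. This is a minimal sketch, not ComfyUI's actual executor: the `CachingGraph` class, the node names, and the signature scheme (hashing a node's parameters together with the signatures of its upstream nodes) are all assumptions made for illustration.

```python
import hashlib
import json


class CachingGraph:
    """Toy dependency graph that re-runs a node only when its signature changes."""

    def __init__(self):
        self.nodes = {}     # name -> (function, params dict, upstream node names)
        self.cache = {}     # name -> (signature, cached output)
        self.executed = []  # nodes actually run during the latest run() call

    def add(self, name, fn, params=None, deps=()):
        self.nodes[name] = (fn, dict(params or {}), list(deps))

    def set_param(self, name, key, value):
        self.nodes[name][1][key] = value

    def _signature(self, name):
        # Covers the node's own parameters plus every upstream signature,
        # so a change anywhere above a node invalidates that node too.
        _, params, deps = self.nodes[name]
        payload = json.dumps(
            [name, params, [self._signature(d) for d in deps]], sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def _evaluate(self, name):
        fn, params, deps = self.nodes[name]
        signature = self._signature(name)
        cached = self.cache.get(name)
        if cached is not None and cached[0] == signature:
            return cached[1]  # unchanged: reuse the stored output
        inputs = [self._evaluate(d) for d in deps]
        result = fn(*inputs, **params)
        self.cache[name] = (signature, result)
        self.executed.append(name)
        return result

    def run(self, output):
        self.executed = []
        return self._evaluate(output)


# Hypothetical four-step pipeline; the lambdas stand in for real model work.
graph = CachingGraph()
graph.add("load_model", lambda name: f"model<{name}>", {"name": "sd15"})
graph.add("encode_prompt", lambda model, text: f"cond<{text}>",
          {"text": "a red fox"}, deps=["load_model"])
graph.add("sample", lambda model, cond, seed: f"latent<{cond},{seed}>",
          {"seed": 1}, deps=["load_model", "encode_prompt"])
graph.add("upscale", lambda latent, scale: f"image<{latent}x{scale}>",
          {"scale": 2}, deps=["sample"])

graph.run("upscale")
first_run = list(graph.executed)   # all four nodes run

graph.set_param("upscale", "scale", 4)
graph.run("upscale")
second_run = list(graph.executed)  # only "upscale" runs

graph.set_param("sample", "seed", 2)
graph.run("upscale")
third_run = list(graph.executed)   # "sample" and "upscale" run
```

In this sketch, changing the upscale factor leaves model loading, text conditioning, and sampling untouched, while changing the seed re-runs only the sampler and everything downstream of it.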

The tool also consolidates what previously required multiple separate applications into a single environment. A single ComfyUI workflow can handle text-to-image generation, inpainting, ControlNet conditioning, LoRA application, upscaling with ESRGAN, and video generation in one connected graph. Users save these workflows as JSON files that can be shared, versioned, and reproduced across different machines.

Technical Details

ComfyUI supports an extensive range of models across multiple media types. For image generation, it handles Stable Diffusion 1.x through 3.5, SDXL and SDXL Turbo, Stable Cascade, Flux and Flux 2, AuraFlow, HunyuanDiT, Lumina Image 2.0, HiDream, Pixart Alpha and Sigma, and several others. Image editing is supported through Omnigen 2, Flux Kontext, and HiDream E1.1. Video generation support includes Stable Video Diffusion, Mochi, LTX-Video, Hunyuan Video 1.5, and Wan 2.1/2.2. Audio generation runs through Stable Audio and ACE Step, while 3D model generation uses Hunyuan3D 2.0.

The system accepts CKPT and safetensors checkpoint formats and supports LoRAs in regular, locon, and loha variants, along with Hypernetworks, embeddings and textual inversion, ControlNet, T2I-Adapter, GLIGEN, and upscale models including ESRGAN, SwinIR, and Swin2SR. Model merging, area composition, and LCM model support are built in. ComfyUI also embeds the full workflow, including seeds, in generated PNG, WebP, and FLAC files, so a workflow can be reloaded directly from its output.
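For PNG output, embedded metadata of this kind can be inspected with nothing but the standard library. The following is a rough sketch under stated assumptions: it assumes the metadata is stored in uncompressed `tEXt` chunks and that the workflow sits under a keyword named `workflow`. Both the chunk type and the keyword are assumptions, not confirmed details of ComfyUI's output. The helper names are hypothetical.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return the tEXt chunks of a PNG as a {keyword: text} dict."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks = {}
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, body, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        body = data[offset + 8:offset + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt body: Latin-1 keyword, a null separator, then Latin-1 text.
            keyword, _, text = body.partition(b"\x00")
            chunks[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        offset += 8 + length + 4  # skip header, body, and CRC (CRC is not verified)
    return chunks


def load_embedded_workflow(path: str, key: str = "workflow"):
    """Parse the JSON stored under `key` (an assumed keyword), or return None."""
    with open(path, "rb") as handle:
        chunks = read_png_text_chunks(handle.read())
    return json.loads(chunks[key]) if key in chunks else None
```

The sketch skips CRC validation and ignores compressed (`zTXt`) and international (`iTXt`) text chunks, so a production reader would more likely rely on an imaging library.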

Memory management is a standout feature. Smart model offloading allows ComfyUI to run on GPUs with as little as 1 GB VRAM, and a CPU-only mode is available via the --cpu flag. Supported hardware includes NVIDIA GPUs with CUDA 13.0 (or CUDA 12.6 for legacy 10-series cards), AMD GPUs with ROCm 7.2, Intel Arc GPUs via native PyTorch XPU support, Apple Silicon M1 and M2 chips through PyTorch nightly builds, and specialized hardware including Ascend NPUs and Cambricon MLUs.

Who’s Affected

ComfyUI serves several distinct user groups. Digital artists and designers use it for iterative image generation workflows that require fine control over each processing step. Developers integrate it into production pipelines through its API backend, using the asynchronous queue system for batch processing. Researchers use the node system to prototype and test new model architectures, sampling methods, and conditioning techniques.
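As a rough illustration of the developer use case, the sketch below queues seed variations of a workflow over HTTP. It assumes a local ComfyUI server at `http://127.0.0.1:8188` that accepts a JSON body of the form `{"prompt": <workflow>}` on a `/prompt` endpoint, and a workflow exported in API format where each node ID maps to a `class_type` and its `inputs`. The address, the node ID `"3"`, and the helper names are assumptions for illustration, and the workflow shown is a fragment rather than a complete, valid graph.

```python
import copy
import json
import urllib.request

# Assumed default address of a locally running ComfyUI server.
DEFAULT_SERVER = "http://127.0.0.1:8188"


def build_queue_request(workflow: dict, server: str = DEFAULT_SERVER) -> urllib.request.Request:
    """Build (but do not send) a POST request that queues one workflow."""
    payload = json.dumps({"prompt": workflow}).encode("utf-8")
    return urllib.request.Request(
        f"{server}/prompt",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def seed_variants(workflow: dict, node_id: str, seeds):
    """Yield deep copies of the workflow, each with a different sampler seed."""
    for seed in seeds:
        variant = copy.deepcopy(workflow)
        variant[node_id]["inputs"]["seed"] = seed
        yield variant


def queue_batch(workflow: dict, node_id: str, seeds, server: str = DEFAULT_SERVER) -> list:
    """Send one request per seed; requires a running server."""
    responses = []
    for variant in seed_variants(workflow, node_id, seeds):
        with urllib.request.urlopen(build_queue_request(variant, server)) as response:
            responses.append(json.loads(response.read()))
    return responses


# Illustrative fragment only: a real export contains every node in the graph.
example_workflow = {
    "3": {"class_type": "KSampler", "inputs": {"seed": 1, "steps": 20}},
}
example_request = build_queue_request(example_workflow)
example_variants = list(seed_variants(example_workflow, "3", [7, 8, 9]))
```

Because the server queues submissions asynchronously, a client like this can submit a whole batch up front and collect results later instead of waiting on each generation in turn.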

The low VRAM requirements also open diffusion model workflows to users with older or entry-level GPUs who are excluded from tools with higher hardware demands. The fully offline operation mode, which requires no internet connection or mandatory downloads after initial setup, makes ComfyUI suitable for air-gapped environments or users in regions with limited bandwidth.

What’s Next

The project continues to add model support at a rapid pace, with recent additions including Flux Kontext for image editing, Wan 2.2 for video generation, ACE Step for audio, and Hunyuan3D 2.0 for 3D content. The ComfyUI-Manager extension provides a built-in system for discovering and installing community-built custom nodes that extend the platform’s capabilities.

The main limitation remains the learning curve. The node-based interface is powerful but less intuitive than simpler prompt-and-generate tools like Automatic1111 or Fooocus. New users typically need time to understand node connections, data types, and workflow construction before reaching productive speeds. Configuration through extra_model_paths.yaml adds flexibility for sharing model directories with other Stable Diffusion interfaces but requires manual file editing.
