- Stanford University researchers tested single AI agents against five multi-agent architectures and found that, given the same compute budget, solo agents performed as well or better in nearly all tested conditions.

- The apparent advantage of multi-agent systems is largely attributable to their use of more total compute, not collaborative synergy, the study argues.

- Teams did outperform solo agents under two specific conditions: when input corruption was high and when the teams were built on weaker base models.

- The preprint tested four models—including Gemini 2.5 Pro and DeepSeek-R1-Distill-Llama-70B—on two multi-step reasoning benchmarks and is explicitly limited to text-based tasks.

What Happened

Researchers at Stanford University published a preprint challenging the foundational assumption behind multi-agent AI systems: that agent collaboration produces better results than a single model working alone. According to reporting by The Decoder, the study’s central finding is that when a single agent and a team of agents are given the same compute budget, the solo agent performs at least as well in nearly all tested conditions. The work was reported in late March 2026.

Why It Matters

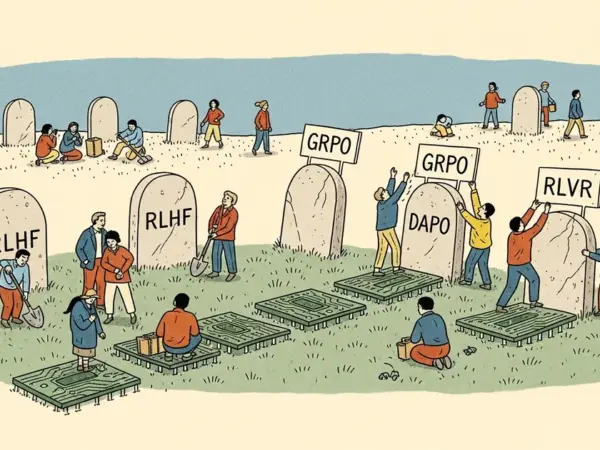

Multi-agent frameworks have become a dominant design pattern in both AI research and production deployment. Systems built on orchestration layers—including those using sequential chains, debate architectures, and ensemble methods—are premised on the idea that dividing complex tasks among multiple models improves accuracy and robustness. The Stanford findings complicate that premise by introducing compute budget as the controlling variable, a factor that prior multi-agent benchmarks have rarely held constant.

Earlier work from multiple institutions demonstrated accuracy improvements from multi-agent setups, but those comparisons typically allowed agent teams to consume substantially more compute than their single-agent baselines—a confound the Stanford study was designed to isolate.

Technical Details

The Stanford team evaluated four models: Qwen3-30B-A3B, DeepSeek-R1-Distill-Llama-70B, Gemini 2.5 Flash, and Gemini 2.5 Pro. Each was tested as a single agent and as part of five distinct team architectures, including sequential chains, debate setups, and ensemble approaches, across two multi-step reasoning benchmarks.
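To make the compute-matched comparison concrete, here is a minimal sketch of one way to hold a budget constant across setups. It assumes the budget is counted in tokens and split evenly across model calls. The `Architecture` class, the agent and round counts, and the 12,000-token figure are illustrative assumptions, not details from the preprint.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Architecture:
    """Hypothetical description of an agent setup (not from the preprint)."""
    name: str
    agents: int  # number of agents taking part
    rounds: int  # how many times each agent is called


def tokens_per_call(total_budget: int, arch: Architecture) -> int:
    """Split one fixed token budget evenly across every model call."""
    calls = arch.agents * arch.rounds
    if calls <= 0:
        raise ValueError("an architecture needs at least one model call")
    return total_budget // calls


# Illustrative setups; the agent and round counts are assumptions.
SETUPS = [
    Architecture("single agent", agents=1, rounds=1),
    Architecture("sequential chain", agents=4, rounds=1),
    Architecture("debate", agents=3, rounds=2),
]

BUDGET = 12_000  # total tokens allowed per task, identical for every setup

for arch in SETUPS:
    print(f"{arch.name}: {tokens_per_call(BUDGET, arch)} tokens per call")
```

Under these assumed numbers the single agent gets all 12,000 tokens for one continuous pass, while each call in the chain gets 3,000 and each call in the debate gets 2,000. An unmatched benchmark would instead give every call the full budget, which is the confound the study describes.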

The researchers’ explanation for single-agent parity centers on information loss at handoff points. Each time one agent passes intermediate results to another, relevant context risks being dropped or compressed. A solo agent, by contrast, maintains a continuous reasoning chain without inter-model transfers.
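The handoff argument can be illustrated with a toy model. This is a sketch under strong assumptions, not the study's method: each agent's work is reduced to a list of notes, and a handoff is modeled as passing on only the most recent `keep` notes. The function names and sample notes are hypothetical.

```python
def hand_off(notes: list[str], keep: int) -> list[str]:
    """Pass on only the most recent `keep` notes, as a lossy summary might."""
    return notes[-keep:] if keep > 0 else []


def run_chain(steps: list[list[str]], keep: int) -> list[str]:
    """Each agent receives a truncated handoff, then adds its own notes."""
    notes: list[str] = []
    for new_notes in steps:
        notes = hand_off(notes, keep) + new_notes
    return notes


def run_solo(steps: list[list[str]]) -> list[str]:
    """A single agent keeps every note in one continuous context."""
    notes: list[str] = []
    for new_notes in steps:
        notes = notes + new_notes
    return notes


# Toy notes produced at three reasoning stages (illustrative only).
STEPS = [["a1", "a2"], ["b1", "b2"], ["c1"]]

print("solo :", run_solo(STEPS))
print("chain:", run_chain(STEPS, keep=1))
```

In this toy run the solo agent ends with all five notes, while the chain with `keep=1` ends with only `b2` and `c1`: everything from the first stage is gone by the final step. Real handoffs compress rather than truncate, so the sketch only shows the direction of the effect.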

The study identified two specific failure modes that erode the solo agent’s theoretical advantage at long contexts: “context rot,” a general degradation in coherence over extended reasoning chains, and the “lost in the middle” effect, in which models systematically deprioritize information positioned in the center of long input sequences. Input quality mattered as well. In experiments using deliberately corrupted input text, structured multi-agent teams outperformed the single agent when distortion was high, because splitting the task helped filter noise more effectively. Among the team configurations, the debate architecture performed best overall.

Who’s Affected

The findings raise a direct cost-efficiency question for AI engineers building production pipelines on multi-agent orchestration frameworks. The study also found that multi-agent teams provided greater benefit when constructed from weaker base models, suggesting the optimal architecture depends significantly on the capability of the underlying model.

For teams running at scale—where compute costs compound across millions of inference calls—the implication is that defaulting to multi-agent setups without controlling for compute budget may introduce unnecessary expense without a corresponding accuracy benefit.

What’s Next

The preprint explicitly limits its scope to text-based reasoning tasks. The researchers note that they did not test whether multi-agent teams offer advantages for tool use, code execution, or image processing. Those domains, particularly agentic settings involving external API calls or multimodal inputs, are where practitioners most commonly deploy multi-agent architectures today, so whether the compute-parity finding generalizes to them remains an open question.