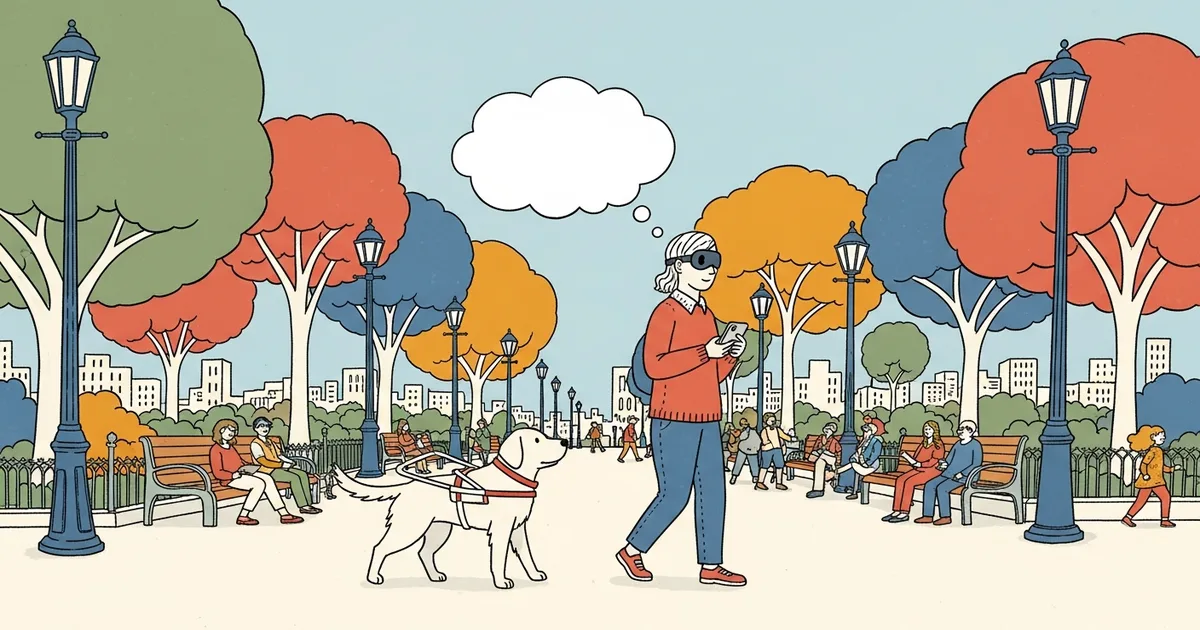

Researchers at Binghamton University (State University of New York) published findings in April 2026 on an LLM-powered harness system that enables guide dogs to verbally relay navigation information, environmental context, and behavioral signals to their visually impaired handlers in real time. The system pairs large language models with an onboard sensor suite to translate canine behavior into spoken language — giving working guide dogs a functional voice for the first time in the 100-year history of formalized guide dog programs.

The gap it addresses is concrete: guide dogs are exceptional navigators, but they cannot explain what they see, why they stopped, or what hazard they just avoided. The Binghamton system closes that gap.

Guide Dogs Have Always Been Silent Partners

An estimated 253 million people live with visual impairment worldwide, including 36 million who are fully blind, according to the World Health Organization. Only a small fraction work with trained guide dogs (roughly 10,000 handlers in the United States), a number constrained by program capacity and by training costs that average $50,000 per dog through accredited organizations.

When a guide dog stops unexpectedly, the handler must interpret the halt through leash tension and body position alone: Is it a curb? A pedestrian? A construction barrier? An off-leash dog? That ambiguity forces constant cognitive effort to decode a silent partner’s decisions across every block of urban navigation.

Guide dog training programs spend months teaching handlers to read behavioral cues — a skill that varies widely between individuals. The Binghamton system does not replace that skill. It supplements it with language.

How the LLM Integration Works

The research team fitted working guide dogs with a multi-sensor harness that feeds continuous data streams into an LLM interface running on a connected mobile device. The sensor stack includes:

- A forward-facing camera with real-time object detection

- Accelerometers and gyroscopes tracking gait and body orientation

- Environmental audio processing for traffic and crowd density signals

- GPS and mapping integration for route context

The LLM correlates incoming sensor data with the dog’s trained behavioral responses and generates natural language outputs delivered to the handler via earpiece. The model does not direct the dog — the dog’s decision-making remains fully intact. The LLM’s sole function is translation: converting what the dog detects and does into words the handler can hear immediately.
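
To make the translation step concrete, here is a minimal sketch of how one fused sensor reading might be packaged into a prompt for a language model. The article does not describe the team's actual data format or prompt, so `SensorFrame`, its fields, and `build_prompt` are all hypothetical names chosen for illustration.

```python
import json
from dataclasses import dataclass, asdict
from typing import List, Optional


@dataclass
class SensorFrame:
    """One fused reading from the harness (hypothetical schema)."""
    detected_objects: List[str]          # from the forward-facing camera
    dog_behavior: str                    # from accelerometer/gyroscope gait tracking
    traffic_level: str                   # from environmental audio processing
    location_hint: Optional[str] = None  # from GPS and mapping


def build_prompt(frame: SensorFrame, dog_name: str) -> str:
    """Serialize the frame and wrap it in translation-only instructions."""
    payload = json.dumps(asdict(frame), sort_keys=True)
    return (
        f"You translate the behavior of a guide dog named {dog_name} "
        "for its handler. Describe only what the dog did and what the "
        "sensors detected. Do not issue commands. "
        "Reply in at most two short sentences.\n"
        f"Sensor frame: {payload}"
    )


frame = SensorFrame(
    detected_objects=["curb"],
    dog_behavior="halted",
    traffic_level="none",
    location_hint="street crossing",
)
prompt = build_prompt(frame, "Shadow")
```

The instructions in the prompt mirror the constraint described above: the model narrates what the dog does and never directs it.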

Outputs are brief and action-relevant. When the dog halts at a curb, the system says: "Shadow stopped. Curb ahead, no immediate traffic." When the dog routes around an obstacle: "Bicycle on your left. Shadow rerouted." When gait analysis flags potential fatigue: "Shadow’s pace has slowed. Possible rest needed."
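
The shape of those messages can be sketched with a fixed template. In the real system an LLM generates the wording, so the template below is only a stand-in, and `DogEvent` and `render` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DogEvent:
    """A single behavior the handler should hear about (hypothetical)."""
    dog_name: str
    action: str                   # e.g. "stopped", "rerouted"
    cause: Optional[str] = None   # e.g. "Curb ahead"
    detail: Optional[str] = None  # e.g. "no immediate traffic"


def render(event: DogEvent) -> str:
    """Build a short utterance: what the dog did, then why."""
    first = f"{event.dog_name} {event.action}."
    if event.cause is None:
        return first
    if event.detail is None:
        return f"{first} {event.cause}."
    return f"{first} {event.cause}, {event.detail}."


message = render(DogEvent("Shadow", "stopped", "Curb ahead", "no immediate traffic"))
```

With those inputs, `message` matches the curb example quoted above.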

Three Output Categories Define the System’s Scope

Navigation events cover stops, turns, and route deviations — the core of daily mobility. These replace what handlers previously decoded from leash tension alone, and they carry explicit hazard identification: stairs, road crossings, narrow passages, and moving obstacles flagged by name and direction.

Environmental context synthesizes camera and audio data to describe surroundings as the handler moves through them: entering a lobby, a crowded platform, construction noise on the right. Guide dogs communicate navigation decisions but have no mechanism to provide ambient scene description. This output category is entirely new to the handler-dog interface.

Dog welfare signals flag when the working dog shows stress or fatigue through gait pattern changes. Guide dog overwork is a documented welfare problem — real-time behavioral monitoring creates accountability that did not previously exist in field conditions, benefiting both handler and animal.
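
One simple way to flag a slowed pace is to compare recent cadence against the dog's own baseline. The article does not say which gait metric or threshold the team uses, so the steps-per-minute measure and the 20 percent drop below are assumptions made for illustration.

```python
from statistics import mean
from typing import Sequence


def pace_slowed(
    baseline_steps_per_min: Sequence[float],
    recent_steps_per_min: Sequence[float],
    drop_ratio: float = 0.8,  # assumed: flag when pace falls below 80% of baseline
) -> bool:
    """Return True when recent average cadence is well below baseline."""
    if not baseline_steps_per_min or not recent_steps_per_min:
        return False  # not enough data to judge
    return mean(recent_steps_per_min) < drop_ratio * mean(baseline_steps_per_min)


tired = pace_slowed([120, 122, 118], [90, 92, 88])
steady = pace_slowed([120, 122, 118], [115, 117, 116])
```

Here `tired` is true because the recent average of 90 falls below 80 percent of the 120 baseline, while `steady` stays false. Using a per-dog baseline rather than a fixed number avoids flagging a dog that naturally walks slowly.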

Robotic Guide Dogs Are the Obvious Comparison — and They Still Fall Short

Robotic guide dog development has been active for years, with prototypes from teams at the Berkeley Artificial Intelligence Research Lab and elsewhere demonstrating competent obstacle navigation in controlled environments. The appeal is straightforward: no biological needs, no retirement at age 10, full sensor integration from the start.

But robotic systems consistently underperform real guide dogs in unstructured urban settings. Dogs adapt to social context — pausing for a distressed pedestrian, navigating sudden crowd surges, responding to handler hesitation with nuance that no current robotic system reliably replicates. The Binghamton approach does not attempt to replace that biological intelligence. It adds a communication layer to an already-functional partnership. This pattern mirrors challenges in autonomous AI navigation systems, where real-world deployment consistently surfaces edge cases that controlled testing misses.

Cost remains a structural barrier for robotic alternatives. Purpose-built robotic guide configurations with full sensor stacks can approach the price of a trained biological dog, but without the century-old support infrastructure — accredited training organizations, veterinary networks, and legal protections under the Americans with Disabilities Act — that established guide dog programs provide.

The Emotional Dimension Is Not Anecdotal

Researchers reported that verbal feedback altered how handlers perceived their relationship with their dogs — not just as a mobility tool, but as a partner that could describe shared experience. Participants consistently described the interaction as collaborative rather than interpretive.

This has documented clinical relevance. A 2021 review published in Disability and Rehabilitation found that guide dog users reported significantly lower anxiety levels in unfamiliar environments compared to white cane users — a gap attributed to the perceived social presence of the dog. Verbal communication — the dog effectively narrating what it sees — has the potential to deepen that psychological effect substantially and extend it to more complex and stressful navigation scenarios.

The ongoing conversation about human-AI interaction typically centers on screen interfaces and productivity tools. The guide dog application operates at a more fundamental level: AI mediating an already-trusted human-animal bond, where the emotional and safety stakes exceed any consumer application context by a wide margin.

LLMs as Embedded Translators: A Deployment Pattern Worth Tracking

The Binghamton guide dog system reflects a broader shift in how LLMs are being deployed — not as conversational chatbots but as real-time translators of physical-world data streams. AI has been moving steadily into embedded utility roles across consumer applications, but most of those deployments carry low stakes. A misread weather pattern is inconvenient. A misread traffic signal in a guide dog context is a different category of failure entirely.

The Binghamton team built conservatism into the system explicitly: when sensor confidence drops below a set threshold, the system defaults to cautionary outputs rather than potentially incorrect ones. That failure-mode design is standard in safety-critical engineering and notably absent from most consumer LLM deployments. It signals the research team understood they were working in a life-safety domain.
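
That fallback rule is easy to sketch. The threshold value, the wording of the cautionary message, and the function name `select_output` are assumptions here; the article states only that a threshold exists.

```python
CAUTION_TEXT = "Cause unclear. Proceed with caution."  # assumed wording


def select_output(
    action_text: str,
    hazard_text: str,
    confidence: float,
    threshold: float = 0.7,  # assumed value, not reported in the article
) -> str:
    """Name the hazard only when sensor confidence meets the threshold."""
    # "not (x >= t)" also catches NaN, so a broken confidence
    # reading falls through to the cautious branch.
    if not (confidence >= threshold):
        return f"{action_text} {CAUTION_TEXT}"
    return f"{action_text} {hazard_text}"


sure = select_output("Shadow stopped.", "Curb ahead, no immediate traffic.", 0.92)
unsure = select_output("Shadow stopped.", "Curb ahead, no immediate traffic.", 0.41)
```

In both cases the handler still hears what the dog did. Only the hazard description is withheld when the system cannot stand behind it.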

MegaOne AI tracks 139+ AI tools across 17 categories, and assistive AI applications — tools built for users with disabilities — remain among the most undercovered segments despite clear societal impact. The guide dog system represents a category where LLM capability and human need intersect in a way that most enterprise LLM deployments do not.

What Stands Between This Research and Field Deployment

Four documented gaps separate the current prototype from deployment in accredited guide dog programs:

- Battery life: Continuous multimodal sensor processing is power-intensive. Current prototypes require midday recharging, a significant usability problem for handlers with full working schedules.

- Latency: Navigation-critical verbal outputs must arrive in under 500 ms to be actionable. The team reported average latency of 340 ms in controlled tests, which clears the threshold, but real-world network variability in urban environments has not been fully stress-tested.

- Output calibration: Early prototypes generated too much verbal feedback, fatiguing users and interfering with ambient awareness. Tuning information density for different users, environments, and task demands remains an open research problem.

- Regulatory review: Guide dog programs in the United States operate under ADA Title II and III frameworks. A harness system that modifies how guide dogs communicate their trained behaviors to handlers will require formal review from accredited organizations and potentially federal guidance before deployment.
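
Two of these gaps, latency and output calibration, lend themselves to a short sketch. The 500 ms budget and the 340 ms average come from the figures above; the throttling policy, the 8-second gap, and the names `latency_headroom_ms` and `OutputThrottle` are assumptions, not the team's design.

```python
from typing import Optional

LATENCY_BUDGET_MS = 500  # threshold cited in the article


def latency_headroom_ms(measured_ms: float, budget_ms: float = LATENCY_BUDGET_MS) -> float:
    """Milliseconds left before an output arrives too late to act on."""
    return budget_ms - measured_ms


class OutputThrottle:
    """Always pass navigation messages; space out everything else."""

    def __init__(self, min_gap_s: float = 8.0):  # assumed gap
        self.min_gap_s = min_gap_s
        self._last_spoken_s: Optional[float] = None

    def allow(self, category: str, now_s: float) -> bool:
        if category != "navigation":
            last = self._last_spoken_s
            if last is not None and now_s - last < self.min_gap_s:
                return False  # too soon after the previous message
        self._last_spoken_s = now_s
        return True


headroom = latency_headroom_ms(340)
throttle = OutputThrottle(min_gap_s=8.0)
decisions = [
    throttle.allow("environment", 0.0),
    throttle.allow("environment", 3.0),
    throttle.allow("navigation", 4.0),
    throttle.allow("environment", 10.0),
    throttle.allow("environment", 12.5),
]
```

At the reported average, `headroom` is 160 ms, which is the margin that variable urban networks would eat into. The throttle lets the navigation message through at 4 seconds even though it suppresses ambient descriptions around it, one possible way to cut verbal load without delaying safety-relevant output.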

The Binghamton team has indicated plans for field trials with guide dog organizations within 18 months. That timeline is optimistic relative to the regulatory pathway, but controlled-environment results justify the continued investment.

Guide dogs have been working beside visually impaired handlers since the 1920s. For the first time, the partnership has a mechanism to speak — not metaphorically, but in plain language, in real time. The handlers who participated in this research did not just navigate better. They reported understanding their dogs better. That distinction is the finding worth watching as this moves toward field trials.