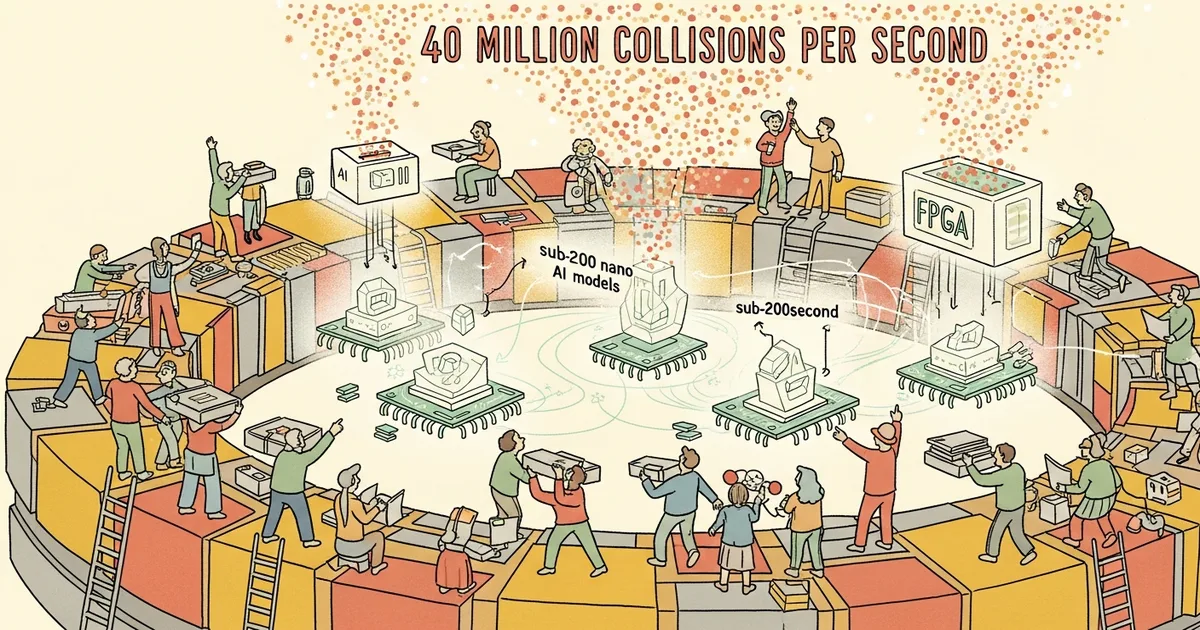

CERN has deployed ultra-compact artificial intelligence models directly onto FPGA chips inside the Large Hadron Collider’s trigger system, enabling real-time filtering of particle collision data at a rate of 40 million bunch crossings per second. The system must decide within nanoseconds which collision events to keep and which to discard permanently, retaining less than 0.02 percent of all data generated.

The scale of the data challenge is staggering. The LHC generates roughly 40,000 exabytes of raw sensor data per year, equivalent to approximately one quarter of all data on the entire internet. Proton bunches cross every 25 nanoseconds, producing about 60 collisions per crossing and generating several megabytes of data per event. The final daily output after filtering is approximately one petabyte of scientifically valuable data.
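
The rates quoted above follow from simple arithmetic, which can be checked with a short sketch. The function names here are illustrative, and applying the "less than 0.02 percent" retention figure to bunch crossings (rather than to bytes) is an assumption made only to get a rough upper bound.

```python
# Back-of-the-envelope check of the figures quoted in the article.
# Integer arithmetic is used so the results are exact.

NS_PER_SECOND = 1_000_000_000


def crossings_per_second(bunch_spacing_ns):
    """Bunch crossings per second for a given spacing in nanoseconds."""
    return NS_PER_SECOND // bunch_spacing_ns


def kept_per_second(crossings, kept_per_10k):
    """Crossings kept per second, given a retention rate per 10,000."""
    return crossings * kept_per_10k // 10_000


crossings = crossings_per_second(25)          # 25 ns spacing (from the text)
collisions = crossings * 60                   # about 60 collisions per crossing
kept_upper_bound = kept_per_second(crossings, 2)  # 0.02 percent = 2 per 10,000

print(f"{crossings:,} crossings/s")           # 40,000,000 crossings/s
print(f"{collisions:,} collisions/s")         # 2,400,000,000 collisions/s
print(f"{kept_upper_bound:,} kept/s at most") # 8,000 kept/s at most
```

A 25-nanosecond spacing gives exactly the 40 million crossings per second cited earlier, and the retention figure implies that at most a few thousand crossings per second survive the filter under this assumption.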

Two primary AI algorithms handle the filtering. AXOL1TL is an anomaly detection model combining a VICReg-trained feature extractor with a Variational Autoencoder, operating with a decision window under 50 nanoseconds. It uses precomputed lookup tables instead of full floating-point calculations to achieve near-instantaneous outputs. CICADA, the Calorimeter Image Convolutional Anomaly Detection Algorithm, uses a convolutional autoencoder to process calorimeter energy deposits with inference times under 200 nanoseconds on FPGAs. CICADA has been deployed on the CMS Level-1 Trigger since October 2024 and has already demonstrated the ability to trigger on top quark pair production events.
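
The lookup-table idea can be illustrated with a minimal sketch. The article does not say which functions AXOL1TL tabulates or at what resolution, so the sigmoid function, the 10-bit table size, and the input range of -8 to 8 below are all assumptions chosen for illustration, as are the helper names.

```python
import math


def build_lut(fn, n_bits, lo, hi):
    """Precompute fn at 2**n_bits evenly spaced points in [lo, hi)."""
    size = 1 << n_bits
    step = (hi - lo) / size
    return [fn(lo + i * step) for i in range(size)]


def lut_index(x, n_bits, lo, hi):
    """Map x to a table index, clamping inputs outside [lo, hi)."""
    size = 1 << n_bits
    idx = int((x - lo) * size / (hi - lo))
    return max(0, min(size - 1, idx))


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# Hypothetical table parameters: 1024 entries covering [-8, 8).
N_BITS, LO, HI = 10, -8.0, 8.0
SIGMOID_LUT = build_lut(sigmoid, N_BITS, LO, HI)


def sigmoid_lut(x):
    """Approximate sigmoid with one index computation and one table read."""
    return SIGMOID_LUT[lut_index(x, N_BITS, LO, HI)]


if __name__ == "__main__":
    for x in (-3.0, 0.0, 1.5):
        print(x, sigmoid_lut(x), sigmoid(x))
```

At inference time the expensive call to `math.exp` is replaced by a single memory read. In firmware the index would typically come straight from the bits of a fixed-point input rather than from a floating-point division, so the trade-off is table memory and a bounded approximation error in exchange for a fixed, very short latency.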

Both models rely on aggressive optimization to fit on FPGA hardware: heterogeneous quantization, which assigns each parameter its own bitwidth; pruning; parallelization; and knowledge distillation, which compresses a larger teacher model into a compact student model. The key enabling tool is hls4ml, an open-source framework developed by a cross-institutional collaboration that translates standard machine learning models into FPGA firmware.
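
To make heterogeneous quantization and pruning concrete, here is a minimal sketch in plain Python. It is not the hls4ml API and not the actual AXOL1TL or CICADA configuration: the weights, bitwidths, pruning threshold, and function names are hypothetical. The `(total_bits, int_bits)` pair is modeled on the `ap_fixed<W,I>` fixed-point type used by HLS tools, but this sketch rounds to nearest and saturates on overflow, which is a choice made here for clarity.

```python
def quantize_fixed(value, total_bits, int_bits):
    """Round value to signed fixed point and return it as a float.

    total_bits: total width in bits, sign bit included.
    int_bits:   bits before the binary point, sign bit included.
    Out-of-range values saturate. Python's round() resolves exact
    ties to the nearest even integer.
    """
    frac_bits = total_bits - int_bits
    scale = 1 << frac_bits
    q_min = -(1 << (total_bits - 1))
    q_max = (1 << (total_bits - 1)) - 1
    q = max(q_min, min(q_max, round(value * scale)))
    return q / scale


def compress(weights, bitwidths, prune_below):
    """Zero out small weights, then quantize each one with its own bitwidth."""
    out = []
    for w, (total_bits, int_bits) in zip(weights, bitwidths):
        if abs(w) < prune_below:
            out.append(0.0)  # pruned: no multiplier needed for this weight
        else:
            out.append(quantize_fixed(w, total_bits, int_bits))
    return out


# Hypothetical weights, each with its own (total_bits, int_bits).
weights = [0.7, -0.031, 1.26, 0.004]
bitwidths = [(8, 2), (6, 1), (4, 2), (3, 1)]

print(compress(weights, bitwidths, prune_below=0.01))
# [0.703125, -0.03125, 1.25, 0.0]
```

Giving each parameter only as many bits as it needs shrinks the multipliers and storage the firmware requires, and a pruned weight removes its multiplication entirely. Knowledge distillation is a training-time step and is not shown here.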

The approach represents a significant proof point for edge AI deployment in extreme environments. If AI models on embedded hardware can make reliable decisions in under 200 nanoseconds while filtering a raw data stream down to about a petabyte per day, the implications extend well beyond particle physics to autonomous systems, telecommunications, and real-time industrial monitoring, where latency and throughput constraints are similarly severe.