Alibaba has released Qwen 3.5, a major upgrade designed for agentic multimodal tasks, including analysis of videos up to two hours long. Some variants run on high-end consumer hardware without GPU clusters, continuing an open-weights strategy that mirrors Meta’s Llama playbook.

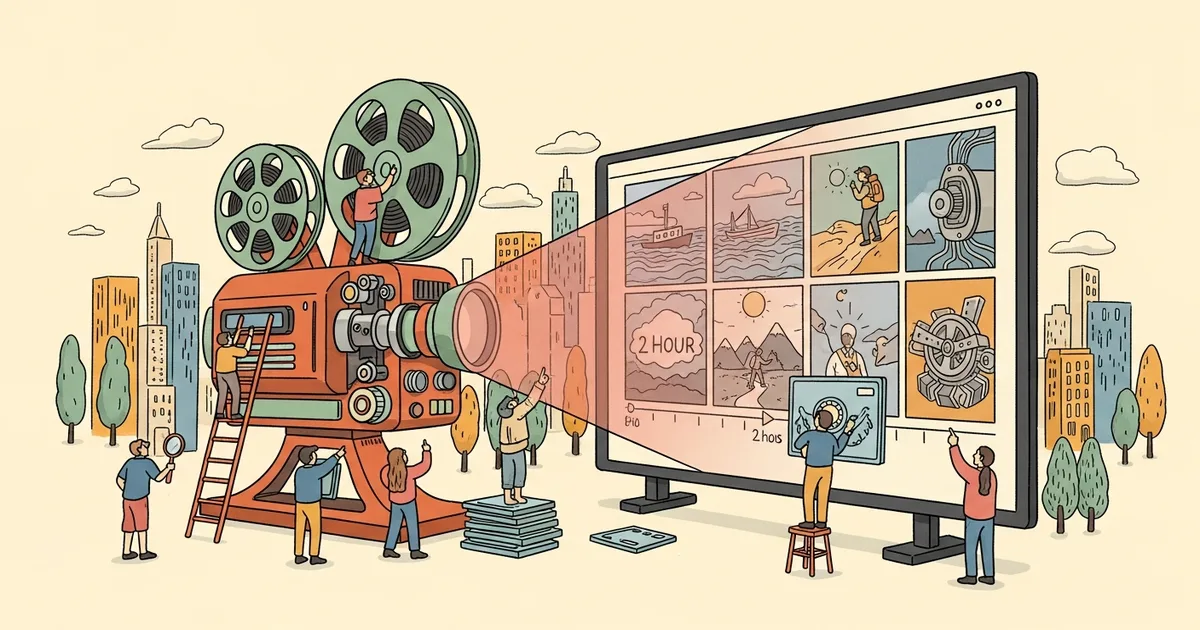

The 2-Hour Video Capability

Previous multimodal models handled video only in short clips: typically under 5 minutes for cloud models and under 30 seconds for local ones. Qwen 3.5 processes up to 120 minutes of video by combining temporal sampling (analyzing frames at variable intervals based on scene complexity) with a long-context architecture that maintains coherent understanding across the full duration.
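As a rough illustration of variable-interval sampling (not Alibaba’s actual implementation, which isn’t detailed here), the sketch below picks frame timestamps more densely where a scene is busy and more sparsely where it is static. The function name `sample_timestamps`, the per-second `complexity` scores in the range 0 to 1, and the 1-second and 10-second interval bounds are all assumptions made for the example.

```python
def sample_timestamps(complexity, min_interval=1.0, max_interval=10.0):
    """Return timestamps (in seconds) at which to sample frames.

    complexity: hypothetical list with one score in [0, 1] per second of
    video, e.g. derived from frame-to-frame pixel differences.
    """
    if min_interval <= 0 or max_interval < min_interval:
        raise ValueError("need 0 < min_interval <= max_interval")

    timestamps = []
    t = 0.0
    duration = len(complexity)
    while t < duration:
        timestamps.append(t)
        # Clamp the score so out-of-range inputs cannot produce a bad step.
        score = min(max(complexity[int(t)], 0.0), 1.0)
        # High complexity -> step close to min_interval (dense sampling);
        # low complexity -> step close to max_interval (sparse sampling).
        t += max_interval - score * (max_interval - min_interval)
    return timestamps


if __name__ == "__main__":
    # One static minute followed by one busy minute.
    scores = [0.0] * 60 + [1.0] * 60
    picked = sample_timestamps(scores)
    print(len(picked), "frames sampled from", len(scores), "seconds")
```

Sampling a two-hour video at a flat one frame per second would yield 7,200 frames. A scheme like this one spends fewer of them on static stretches, which is one plausible way to keep a long video within a model’s context budget.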

The practical difference: you can feed it an entire meeting recording, documentary, or surveillance feed and ask questions about any moment. “What was discussed at the 47-minute mark?” “Show me every instance of a specific person appearing.” “Summarize the key decisions from this 2-hour board meeting.”

Benchmarks vs Competitors

Alibaba published comparison benchmarks on video understanding tasks:

- Video-QA accuracy (long-form): Qwen 3.5 scored 78.3% vs GPT-5.4’s 74.1% and Gemini 3 Pro’s 76.8%

- Temporal reasoning: Qwen 3.5 led on tasks requiring understanding event sequences across hours of footage

- Scene description: Competitive with GPT-5.4 on short clips, superior on clips longer than 15 minutes

These benchmarks reflect Alibaba’s internal evaluation suite. Independent benchmarks from third parties are pending.

Hardware Requirements

Qwen 3.5 comes in multiple variants:

- Qwen 3.5-Max: Full capability, requires 4x A100 or equivalent (cloud deployment)

- Qwen 3.5-Plus: Strong video analysis up to 60 minutes, runs on 2x A100

- Qwen 3.5-Turbo: Text and image focused, runs on a single RTX 4090
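To make the trade-off concrete, here is a small sketch that picks the lightest variant able to handle a video of a given length. The `Variant` class and `pick_variant` helper are hypothetical, and treating Turbo as having no video budget is an assumption based on its “text and image focused” description. The 60-minute and 120-minute limits come from the figures above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    hardware: str
    max_video_minutes: int  # 0 = not aimed at video (assumption for Turbo)


# Ordered from lightest to heaviest hardware requirement.
VARIANTS = [
    Variant("Qwen 3.5-Turbo", "1x RTX 4090", 0),
    Variant("Qwen 3.5-Plus", "2x A100", 60),
    Variant("Qwen 3.5-Max", "4x A100 or equivalent", 120),
]


def pick_variant(video_minutes):
    """Return the lightest variant whose video limit covers the input."""
    if video_minutes < 0:
        raise ValueError("video_minutes must be non-negative")
    for variant in VARIANTS:
        if video_minutes <= variant.max_video_minutes:
            return variant
    raise ValueError("no listed variant handles videos this long")


if __name__ == "__main__":
    for minutes in (0, 45, 90):
        v = pick_variant(minutes)
        print(f"{minutes} min -> {v.name} ({v.hardware})")
```

A 45-minute meeting recording would land on Plus, while anything between 60 minutes and the two-hour limit needs Max.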

The open-weights release means organizations can deploy these models locally, avoiding per-call API costs and keeping sensitive footage in-house. As with Arcee’s Trinity, self-hosting can run at a fraction of proprietary API pricing.

Practical Use Cases

Two-hour video analysis unlocks applications that short-context models can’t address:

- Surveillance review: Analyzing full shifts of security footage for specific events

- Content moderation: Reviewing entire livestreams or uploaded videos for policy violations

- Sports analysis: Breaking down complete games with tactical insights

- Medical video: Analyzing lengthy surgical recordings or patient monitoring footage

- Education: Generating summaries and study guides from recorded lectures

The Open-Weights Playbook

Alibaba’s strategy mirrors Meta’s Llama approach: release powerful open models to build ecosystem adoption, drive developer mindshare, and commoditize the technology layer where competitors charge premium prices. With efficiency techniques like self-distillation improving rapidly, the gap between open and proprietary models continues to narrow. For video understanding specifically, Qwen 3.5 is positioned as the strongest openly available option, though independent benchmarks have yet to confirm that.