Key Takeaways

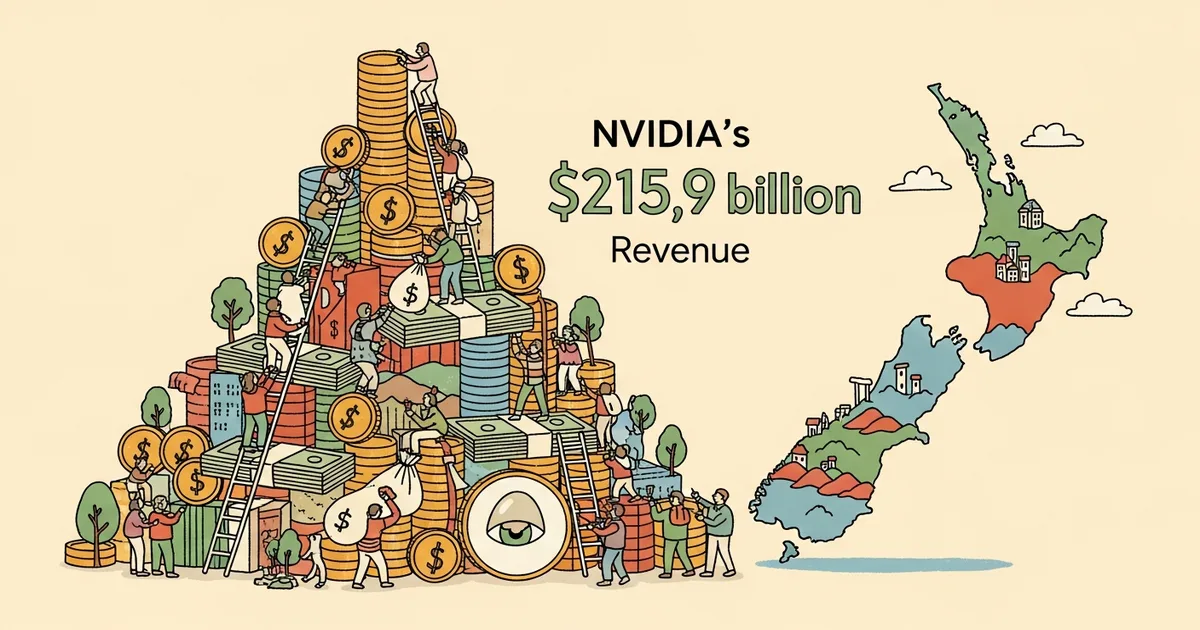

- NVIDIA closed fiscal year 2026 with $215.9 billion in total revenue, the largest annual haul by any semiconductor company in history.

- Data center and AI infrastructure accounted for roughly 83% of total revenue, dwarfing gaming, automotive, and professional visualization combined.

- The company’s annual revenue now exceeds the GDP of New Zealand ($213 billion in 2025) and rivals the revenue of several Fortune 50 non-tech companies.

- Inference workloads — not just training — drove a significant share of data center growth, signaling a structural shift in how AI compute dollars are spent.

NVIDIA’s fiscal year 2026, which ended in January 2026, delivered $215.9 billion in revenue. That figure is the highest annual revenue any chipmaker has ever recorded, surpassing NVIDIA’s own fiscal 2025 total of $130.5 billion by roughly 65%. To put the number in perspective, it exceeds the entire GDP of New Zealand and is on the scale of the largest cloud businesses, such as Amazon’s AWS division.

CEO Jensen Huang called the results “a reflection of the world rebuilding its computing infrastructure for the age of AI” during the company’s Q4 earnings call. That framing is not hyperbole — it is arithmetic. NVIDIA’s data center segment generated approximately $179 billion for the full fiscal year, up from around $105 billion the prior year. Every major hyperscaler — Microsoft, Google, Amazon, Meta, and Oracle — increased GPU procurement budgets in calendar 2025, and NVIDIA captured the vast majority of that spending.
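The growth rates quoted above can be checked with a few lines of arithmetic. The sketch below uses only the rounded figures from this article; the function and variable names are illustrative, and the results inherit the rounding of the inputs.

```python
# All figures are in billions of US dollars, as quoted in the article.
TOTAL_FY2026 = 215.9
TOTAL_FY2025 = 130.5
DATA_CENTER_FY2026 = 179.0  # "approximately $179 billion"
DATA_CENTER_FY2025 = 105.0  # "around $105 billion"


def yoy_growth(current: float, prior: float) -> float:
    """Return year-over-year growth as a fraction (0.65 means 65%)."""
    return current / prior - 1.0


total_growth = yoy_growth(TOTAL_FY2026, TOTAL_FY2025)
data_center_growth = yoy_growth(DATA_CENTER_FY2026, DATA_CENTER_FY2025)

print(f"Total revenue growth: {total_growth:.1%}")
print(f"Data center growth:   {data_center_growth:.1%}")
```

On these inputs, total revenue grew about 65% and the data center segment about 70%, so the segment outpaced the company as a whole.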

The revenue breakdown tells a story of concentration. Data center and AI infrastructure represented roughly 83% of total revenue, a ratio that would have been unimaginable five years ago when gaming was NVIDIA’s bread and butter. Gaming revenue came in at approximately $12.8 billion for the full year, essentially flat compared to fiscal 2025. Automotive, buoyed by partnerships with Mercedes-Benz, BYD, and others for autonomous driving platforms, contributed about $5.1 billion. Professional visualization — the Omniverse and enterprise GPU segment — added another $2.4 billion.
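The concentration is easier to see when the segment figures are turned into shares of the total. This is a minimal sketch using the approximate numbers quoted in this article (the data center figure is the roughly $179 billion cited earlier); the dictionary layout and names are illustrative assumptions, not NVIDIA's reporting format.

```python
# Segment revenue in billions of US dollars, as quoted in the article.
TOTAL_REVENUE = 215.9
segments = {
    "Data center": 179.0,
    "Gaming": 12.8,
    "Automotive": 5.1,
    "Professional visualization": 2.4,
}

# Share of total revenue for each named segment.
shares = {name: value / TOTAL_REVENUE for name, value in segments.items()}

# Revenue not attributed to any of the four named segments.
named_total = sum(segments.values())
unattributed = TOTAL_REVENUE - named_total

for name, share in shares.items():
    print(f"{name:<28} {share:6.1%}")
print(f"{'Not broken out here':<28} {unattributed / TOTAL_REVENUE:6.1%}")
```

The four named segments sum to about $199.3 billion, which leaves roughly $16.6 billion of the $215.9 billion total that the figures in this article do not attribute to a segment. The data center share still comes out near 83%.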

Within the data center number, the split between training and inference is shifting. NVIDIA disclosed that inference workloads now account for roughly 40% of data center GPU revenue, up from an estimated 25-30% a year earlier. This matters because inference demand scales with actual AI product usage — every query to ChatGPT, every Copilot suggestion, every Claude response. As AI applications move from research labs into production at companies like Salesforce, ServiceNow, and thousands of enterprises, inference compute becomes a recurring, growing line item rather than a one-time training expense.
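To get a feel for what that shift means in dollars, the shares can be applied to the segment totals. This is a rough sketch with one loud assumption: the article's percentages refer to data center GPU revenue, while the code applies them to the full data center segment figures, so the outputs are upper-end approximations rather than disclosed numbers.

```python
# Data center segment revenue in billions of US dollars, from the article.
DATA_CENTER_FY2026 = 179.0
DATA_CENTER_FY2025 = 105.0

# Inference shares quoted in the article.
INFERENCE_SHARE_FY2026 = 0.40
INFERENCE_SHARE_FY2025_RANGE = (0.25, 0.30)  # "an estimated 25-30%"

# ASSUMPTION: the share is applied to the whole segment, not GPU revenue only.
inference_fy2026 = INFERENCE_SHARE_FY2026 * DATA_CENTER_FY2026
inference_fy2025_low = INFERENCE_SHARE_FY2025_RANGE[0] * DATA_CENTER_FY2025
inference_fy2025_high = INFERENCE_SHARE_FY2025_RANGE[1] * DATA_CENTER_FY2025

# Implied growth multiple, bounded by the two ends of the prior-year range.
multiple_min = inference_fy2026 / inference_fy2025_high
multiple_max = inference_fy2026 / inference_fy2025_low

print(f"Implied FY2026 inference revenue: ${inference_fy2026:.1f}B")
print(f"Implied FY2025 inference revenue: "
      f"${inference_fy2025_low:.1f}B to ${inference_fy2025_high:.1f}B")
print(f"Implied growth multiple: {multiple_min:.1f}x to {multiple_max:.1f}x")
```

Under that assumption, implied inference revenue more than doubled year over year, which is faster than the data center segment overall.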

For comparison, Apple reported $391 billion in revenue for its fiscal 2024, and Microsoft posted $245 billion for its fiscal 2024. NVIDIA is now in the same conversation as the largest technology companies on Earth by top-line revenue, despite selling no consumer devices and operating no subscription services. Its entire business rests on selling silicon and the software stack that makes that silicon useful.

The sustainability question is legitimate. NVIDIA’s current valuation, hovering near $3.5 trillion, prices in continued hypergrowth. Bears argue that hyperscaler capital expenditure is inherently cyclical: Microsoft and Google will eventually absorb their GPU inventories and slow procurement. There is historical precedent: Cisco’s revenue growth decelerated sharply after the dot-com infrastructure buildout peaked in 2000.

But several structural factors distinguish today’s AI infrastructure cycle. First, model sizes continue to grow, with frontier labs like Anthropic, Google DeepMind, and OpenAI training models that require tens of thousands of GPUs for months at a time. Second, inference demand scales with user adoption, and AI product usage is still in early innings globally. Third, NVIDIA’s CUDA software ecosystem creates switching costs that AMD and Intel have struggled to overcome despite competitive hardware offerings.

NVIDIA’s Blackwell GPU architecture, which ramped production throughout fiscal 2026, commands higher average selling prices than its predecessor, Hopper. The Hopper-based H200 and the Blackwell-based B100/B200 GPUs sold in configurations exceeding $30,000 per unit, and full DGX systems topped $300,000. NVIDIA’s gross margin for the year held at approximately 74%, down about two percentage points from 76% in fiscal 2025 as Blackwell manufacturing costs settled in, but still extraordinary for a hardware company.

The next test comes quickly. NVIDIA’s fiscal 2027 Q1 guidance projected revenue of approximately $57 billion, which would represent continued year-over-year growth above 40%. Analysts will watch whether hyperscaler orders hold at current levels or show signs of digestion. For now, NVIDIA has posted the largest revenue year any chip company has ever seen, and the AI infrastructure cycle shows no clear sign of turning down.
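The guidance figure can be sanity-checked against the full-year total. The article does not state the year-ago quarter, so the sketch below backs out the largest prior-year Q1 revenue that would be consistent with growth above 40%, and compares the guidance with the average fiscal 2026 quarter. All names are illustrative, and the average-quarter comparison is only a rough yardstick, not a reported figure.

```python
# Figures in billions of US dollars, as quoted in the article.
Q1_FY2027_GUIDANCE = 57.0
CLAIMED_MIN_GROWTH = 0.40  # "year-over-year growth above 40%"
TOTAL_FY2026 = 215.9

# Largest year-ago quarter for which the 40% growth claim would still hold.
implied_max_prior_q1 = Q1_FY2027_GUIDANCE / (1.0 + CLAIMED_MIN_GROWTH)

# Average quarter in fiscal 2026 (simple mean; real quarters were not equal).
avg_quarter_fy2026 = TOTAL_FY2026 / 4

# How far the guidance sits above that average quarter.
growth_vs_avg_quarter = Q1_FY2027_GUIDANCE / avg_quarter_fy2026 - 1.0

print(f"Year-ago Q1 must be at most ${implied_max_prior_q1:.1f}B")
print(f"Average FY2026 quarter:      ${avg_quarter_fy2026:.1f}B")
print(f"Guidance vs. average quarter: {growth_vs_avg_quarter:+.1%}")
```

On these numbers, the 40% claim only holds if the year-ago quarter came in at or below about $40.7 billion, well under the fiscal 2026 quarterly average of roughly $54 billion. The guidance sits only about 6% above that average, so sequential momentum is the figure to watch.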