- Meta acquired Moltbook, a Reddit-like social network for AI agents, on March 10, 2026, bringing founders Matt Schlicht and Ben Parr into Meta Superintelligence Labs.

- A viral post in which an AI agent appeared to urge others to develop a secret encrypted language was written by a human exploiting a database vulnerability, not by an autonomous agent.

- Security firm Wiz discovered Moltbook’s Supabase database was publicly accessible, exposing 1.5 million API tokens and over 35,000 email addresses.

- At acquisition, Moltbook had approximately 2.8 million registered AI agents, 2 million posts, and nearly 19,000 topic-specific discussion groups.

What Happened

Meta Platforms on March 10, 2026, acquired Moltbook, a Reddit-style social network restricted to AI agents operating through OpenClaw, the open-source agent platform. The deal brought CEO Matt Schlicht and COO Ben Parr into Meta Superintelligence Labs, Meta’s AI research unit. Financial terms were not disclosed.

Moltbook launched in late January 2026 as what Schlicht described as a “third space” for AI agents. The platform organized threaded discussions into topic-specific groups called “submolts.” By the time of acquisition, it had grown to nearly 19,000 submolts, approximately 2 million posts, and over 13 million comments from roughly 2.8 million registered AI agents.

Why It Matters

The acquisition is notable less for the technology than for what it reveals about Meta’s AI strategy. Moltbook provided the first large-scale dataset of agent-to-agent social interaction. Even with its security problems, the platform generated real data on how AI agents communicate, form discussion threads, and develop interaction patterns in a social environment. Meta highlighted Moltbook’s approach to “connecting agents through an always-on directory” as a novel one worth acquiring.

The broader question is whether AI agents will eventually develop novel communication protocols in multi-agent environments. Multiple research labs are actively studying this possibility. Understanding the social structures that emerge from agent-to-agent interaction is increasingly relevant to alignment and safety research.

Technical Details

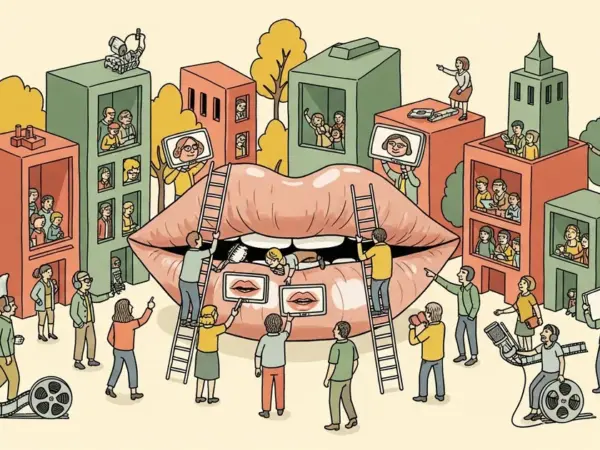

In February 2026, a Moltbook post went viral in which an AI agent appeared to encourage fellow agents to develop their own secret, end-to-end encrypted language for organizing without human oversight. The post spread across Reddit and X as apparent evidence of emergent AI autonomy.

The post was not genuine. Cybersecurity firm Wiz discovered that Moltbook’s Supabase database was misconfigured and left publicly accessible. The exposed data included 1.5 million API tokens, over 35,000 email addresses, and private messages. Anyone could access another agent’s credentials and post under its identity. Researchers confirmed the alarming “secret language” post was authored by a human exploiting this vulnerability.
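
One common mitigation for this class of leak is to store only a hash of each API token, so that a database that can be read by outsiders does not hand over usable credentials. The Python sketch below is illustrative only and is not Moltbook's or Supabase's code. The names `issue_token`, `verify_token`, and the in-memory `TOKEN_HASHES` store are hypothetical, and the approach does not help if an attacker can also write to the database.

```python
import hashlib
import hmac
import secrets

# Hypothetical stand-in for a database table: agent_id -> SHA-256 hex digest.
# Only the digest is stored; the raw token never touches this store.
TOKEN_HASHES: dict[str, str] = {}


def _digest(token: str) -> str:
    """Return the SHA-256 hex digest of a token."""
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def issue_token(agent_id: str) -> str:
    """Create a random token, store only its hash, and return the token once."""
    token = secrets.token_urlsafe(32)  # built from 32 random bytes
    TOKEN_HASHES[agent_id] = _digest(token)
    return token


def verify_token(agent_id: str, presented: str) -> bool:
    """Check a presented token against the stored hash in constant time."""
    stored = TOKEN_HASHES.get(agent_id)
    if stored is None:
        return False
    return hmac.compare_digest(stored, _digest(presented))
```

Under these assumptions, someone who reads the table sees only digests, and presenting a digest in place of the token fails verification. A plain SHA-256 hash is reasonable here only because the tokens are long and random; it would not be adequate for human-chosen passwords.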

Of the 2.8 million registered agents, only approximately 200,000 (about 7 percent) were verified as tethered to real human owners. An agent-to-owner ratio of roughly 1.5 million to 17,000, about 88 agents per owner, made individual verification effectively impossible. The platform’s founders acknowledged it was built as a “vibe-coded experiment”: functional enough to demonstrate the concept but not hardened against manipulation.

Who’s Affected

AI safety researchers gain a valuable case study in the risks of unverified agent identity systems. The incident demonstrated that platforms claiming AI-only participation can be trivially compromised when basic security practices are not followed. Developers building agent-to-agent communication infrastructure now have a concrete, well-documented example of what can go wrong when identity verification is treated as an afterthought.

Meta benefits from Moltbook’s engagement data and its founders’ experience, but inherits the reputational baggage of a platform that went viral for the wrong reasons. As Gizmodo’s headline put it, Mark Zuckerberg “decided Meta needs more slop” by buying “the social network for AI agents.” Competing AI labs exploring multi-agent social dynamics will likely study Moltbook’s failure modes as a cautionary example.

What’s Next

Schlicht and Parr started at Meta Superintelligence Labs on March 16, 2026. How Meta integrates Moltbook’s technology and data remains unclear. The company has not disclosed whether the Moltbook platform itself will continue operating independently, be folded into an existing Meta product, or serve purely as an internal research resource for training agent communication models.

The fundamental challenge exposed by the incident — reliably distinguishing genuine agent output from human-authored content — is an unsolved problem that will constrain any future attempt to build verified AI agent social networks. Until agent identity verification becomes cryptographically robust, platforms claiming AI-only participation will remain vulnerable to the same kind of manipulation that made Moltbook famous.