- MiniMax M2.7, released on March 18, 2026, scores close to Claude Opus 4.6 on the SWE-Pro coding benchmark while costing roughly 93 percent less on the same tasks in independent testing.

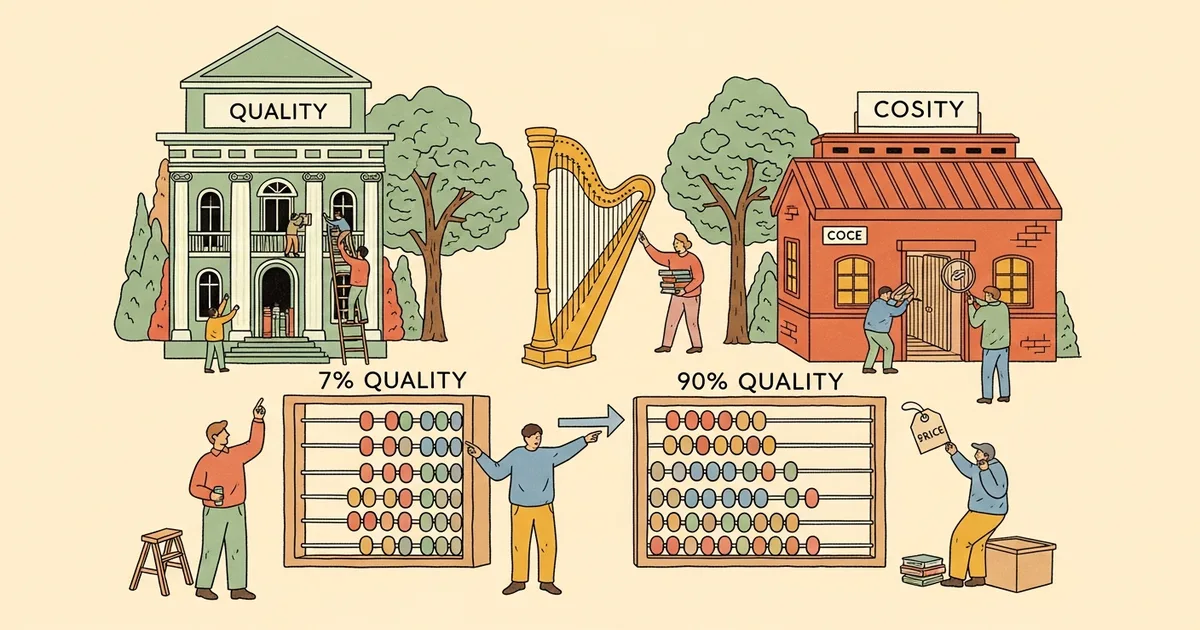

- Head-to-head testing by Kilo Code found M2.7 delivered 90 percent of Opus-level quality on real coding fixes at a total cost of $0.27, compared to $3.67 for the same tasks on Claude Opus 4.6.

- The model is priced at $0.30 per million input tokens and $1.20 per million output tokens, versus $15 and $75 respectively for standard API access to Opus 4.6.

- Opus 4.6 still produces more thorough fixes and generates twice as many unit tests, making M2.7 a cost-optimization choice rather than a drop-in replacement for frontier quality.

What Happened

Chinese AI company MiniMax released M2.7, a large language model positioned as a cost-effective alternative to frontier models like Anthropic’s Claude Opus 4.6 and OpenAI’s GPT-4.5. The model launched on March 18, 2026, and immediately drew attention from developers and engineering teams for its performance-to-price ratio on software engineering benchmarks.

On the SWE-Pro benchmark, M2.7 scored 56.22 percent, placing it close to Claude Opus 4.6’s score on the same evaluation. On SWE-bench Verified, a separate and widely referenced coding evaluation, M2.7 reached 78 percent compared to Opus’s 55 percent. Independent testing by the engineering team at Kilo Code confirmed the cost gap in practical terms: completing the same set of real-world coding fixes cost $0.27 with M2.7 versus $3.67 with Opus 4.6.
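The roughly 93 percent savings figure follows directly from Kilo Code's two totals. A minimal sketch of that arithmetic is below; the function name `percent_savings` is illustrative and does not come from Kilo Code's write-up.

```python
def percent_savings(baseline_cost: float, alternative_cost: float) -> float:
    """Return how much cheaper the alternative is, as a percentage of the baseline."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    return (1 - alternative_cost / baseline_cost) * 100


# Totals reported for the same set of coding fixes.
OPUS_TOTAL_USD = 3.67
M27_TOTAL_USD = 0.27

savings = percent_savings(OPUS_TOTAL_USD, M27_TOTAL_USD)
cost_ratio = OPUS_TOTAL_USD / M27_TOTAL_USD

print(f"{savings:.1f}% cheaper, {cost_ratio:.1f}x cost ratio")
# prints "92.6% cheaper, 13.6x cost ratio"
```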

According to benchmark data from Artificial Analysis, M2.7 earned an intelligence score of 50 on the firm’s proprietary index, ranking first among 140 models evaluated in its class. The firm described its performance as “well above average among comparable models.”

Why It Matters

The AI model market has operated on the assumption that frontier-level performance requires frontier-level pricing. M2.7 challenges that assumption directly. At $0.30 per million input tokens and $1.20 per million output tokens, its list prices are 2 percent and 1.6 percent, respectively, of the $15 and $75 that Anthropic charges for Opus 4.6 through its standard API pricing tier.

For engineering teams running thousands of API calls daily on coding tasks, even a 10 percent quality trade-off can be worth the savings. A development team spending $10,000 per month on Opus API calls could potentially reduce that bill to roughly $740 at the per-task cost ratio Kilo Code measured ($0.27 versus $3.67), assuming comparable task volumes. Because that ratio comes from completed tasks rather than list prices, it already reflects M2.7’s higher verbosity.
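To see how the list prices translate into a bill, the sketch below prices a single request under both models using the per-million-token rates quoted in this article. The request size (20,000 input tokens, 2,000 output tokens) and the names `Pricing` and `request_cost` are assumptions for illustration only. The calculation also assumes both models consume the same number of tokens, which understates M2.7's real cost given its verbosity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List price in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


# Rates as quoted in the article (standard API access).
M27 = Pricing(input_per_million=0.30, output_per_million=1.20)
OPUS_46 = Pricing(input_per_million=15.00, output_per_million=75.00)


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the given list prices."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Hypothetical request size; replace with a token mix measured on real traffic.
INPUT_TOKENS = 20_000
OUTPUT_TOKENS = 2_000

m27_cost = request_cost(M27, INPUT_TOKENS, OUTPUT_TOKENS)
opus_cost = request_cost(OPUS_46, INPUT_TOKENS, OUTPUT_TOKENS)

print(f"M2.7: ${m27_cost:.4f} per request, Opus 4.6: ${opus_cost:.4f} per request")
```

Multiplying each per-request figure by the monthly request count gives a first-pass budget, which can then be checked against the measured per-task totals.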

The competitive pressure also matters for the broader model market. Chinese AI labs including DeepSeek, Qwen, and now MiniMax have steadily closed performance gaps with Western frontier models while maintaining significantly lower price points, putting sustained pressure on the pricing strategies of Anthropic, OpenAI, and Google.

Technical Details

M2.7 generates output at approximately 45.6 tokens per second, which Artificial Analysis characterizes as “notably slow” compared to peers in its price tier. Latency to first token sits at 2.33 seconds, above the 1.83-second median for comparable reasoning models. These speed limitations affect real-world usability in interactive coding assistants and latency-sensitive API applications.
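As a rough illustration of what these numbers mean for an interactive tool, the sketch below estimates the wall-clock time for a response as time to first token plus generation time. It assumes a constant generation rate and ignores network overhead and queueing; the function name and the 1,000-token response length are hypothetical.

```python
# Figures reported by Artificial Analysis for M2.7.
TIME_TO_FIRST_TOKEN_S = 2.33
TOKENS_PER_SECOND = 45.6


def estimated_response_seconds(
    output_tokens: int,
    ttft_s: float = TIME_TO_FIRST_TOKEN_S,
    tokens_per_second: float = TOKENS_PER_SECOND,
) -> float:
    """Estimate the total time to stream a response of the given length."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return ttft_s + output_tokens / tokens_per_second


# Hypothetical 1,000-token code fix.
seconds = estimated_response_seconds(1_000)
print(f"about {seconds:.1f} s for 1,000 output tokens")
# prints "about 24.3 s for 1,000 output tokens"
```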

The model is also verbose: it produced roughly 87 million tokens during the Artificial Analysis intelligence evaluation, more than four times the 20 million average across other models tested. This verbosity inflates real-world costs beyond what raw per-token pricing suggests, though MiniMax claims that with automatic cache optimization, the effective blended cost can drop to as low as $0.06 per million tokens for repeated or similar queries.
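One way to fold verbosity into a price comparison is to scale the output rate by the token ratio from the evaluation. The sketch below does that under two loud assumptions: that the ratio observed in the Artificial Analysis run carries over to other workloads, and that all of the extra tokens are billed at the output rate. It does not model the cache discount MiniMax describes, and the variable names are illustrative.

```python
# Token counts reported for the Artificial Analysis intelligence evaluation.
M27_EVAL_TOKENS_MILLIONS = 87
AVERAGE_EVAL_TOKENS_MILLIONS = 20

# M2.7 list price for output tokens, USD per million.
M27_OUTPUT_PRICE = 1.20

# How many tokens M2.7 emits for each token an average model emits.
verbosity_multiplier = M27_EVAL_TOKENS_MILLIONS / AVERAGE_EVAL_TOKENS_MILLIONS

# Price per million "average-model-equivalent" output tokens (assumption-laden).
effective_output_price = M27_OUTPUT_PRICE * verbosity_multiplier

print(f"verbosity multiplier: {verbosity_multiplier:.2f}x")
print(f"verbosity-adjusted output price: ${effective_output_price:.2f} per million")
```

Under these assumptions the adjusted output rate is about $5.22 per million tokens, still well below the $75 quoted for Opus 4.6.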

In Kilo Code’s head-to-head testing, Claude Opus 4.6 produced more comprehensive code fixes and generated twice as many unit tests as M2.7 for identical tasks. The quality differences became more pronounced on complex multi-step reasoning problems, where Opus’s deeper analysis translated into fewer downstream bugs and more robust test coverage.

Who’s Affected

The primary audience is engineering teams and startups that consume LLM APIs at scale for code generation, automated debugging, and testing workflows. Teams currently paying for Opus or GPT-4.5 API access have the most immediate opportunity to evaluate M2.7 as a cost-reduction measure, particularly for high-volume, lower-complexity coding tasks where the 10 percent quality gap matters less.

Individual developers using consumer AI coding assistants like Cursor or Windsurf are less directly affected, since those tools bundle model costs into their subscription pricing. Enterprise procurement teams negotiating volume API contracts, however, may use M2.7’s pricing as leverage in discussions with Anthropic and OpenAI.

What’s Next

MiniMax has not announced a timeline for its next model release or indicated whether future versions will address the speed and verbosity limitations. Teams considering a migration should benchmark M2.7 against their specific workloads before committing. The 90 percent quality figure reflects averaged performance across a general coding test suite, not domain-specific or production-critical tasks where the remaining quality gap could have outsized consequences.
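A workload-specific benchmark can be as simple as running the same tasks through both models and comparing pass rate and cost per passing task. The sketch below shows only the summary step. The `TaskResult` structure, the task IDs, and every number in the sample data are made-up placeholders, not measurements; a real harness would populate them from a team's own test suite and billing data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    task_id: str
    passed: bool      # did the model's fix pass the team's own tests?
    cost_usd: float   # API cost billed for this task


def summarize(results: list[TaskResult]) -> dict[str, float]:
    """Aggregate pass rate, total cost, and cost per passing task."""
    if not results:
        raise ValueError("results must not be empty")
    passed = sum(r.passed for r in results)
    total_cost = sum(r.cost_usd for r in results)
    return {
        "pass_rate": passed / len(results),
        "total_cost_usd": total_cost,
        "cost_per_pass_usd": total_cost / passed if passed else float("inf"),
    }


# PLACEHOLDER DATA for illustration only; not real benchmark results.
candidate_results = [
    TaskResult("fix-001", True, 0.02),
    TaskResult("fix-002", False, 0.03),
    TaskResult("fix-003", True, 0.01),
    TaskResult("fix-004", True, 0.02),
]
baseline_results = [
    TaskResult("fix-001", True, 0.30),
    TaskResult("fix-002", True, 0.40),
    TaskResult("fix-003", True, 0.20),
    TaskResult("fix-004", True, 0.30),
]

candidate = summarize(candidate_results)
baseline = summarize(baseline_results)

# Quality of the candidate relative to the baseline on this suite.
relative_quality = candidate["pass_rate"] / baseline["pass_rate"]
print(f"relative quality: {relative_quality:.0%}")
print(f"cost per passing task: ${candidate['cost_per_pass_usd']:.3f} "
      f"vs ${baseline['cost_per_pass_usd']:.3f}")
```

Cost per passing task is a useful complement to raw cost because it penalizes a cheaper model for failures that must be retried or fixed by hand.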