NBC News reports Baltimore Mayor Brandon Scott is demanding action after deepfakes depicting minors proliferated in the city. Separately, the UK’s ICO and Ofcom demanded that xAI explain Grok’s image generation safeguards against non-consensual content. The deepfake crisis that experts warned about is now a municipal emergency.

The Baltimore Situation

Mayor Scott described the situation as having “traumatic, lifelong consequences for victims,” the students whose likenesses were used to generate explicit content without their consent. The incidents involved:

- AI-generated explicit images using yearbook and social media photos as source material

- Distribution through encrypted messaging apps and social media platforms

- Victims as young as middle school age

Baltimore joins a growing list of cities dealing with the same crisis. In 2025, cases were reported in schools across New Jersey, California, Washington, and Texas. The technology has become accessible enough that students with smartphones can generate deepfakes using freely available apps.

The UK Investigation Into Grok

The UK’s Information Commissioner’s Office (ICO) and communications regulator Ofcom formally demanded that Elon Musk’s xAI explain what safeguards Grok’s image generation model has against creating non-consensual intimate images. Grok has been criticized for having weaker content filters than competitors such as DALL-E and Midjourney.

The investigation could result in enforcement action under UK data protection law if Grok is found to process personal data (facial likenesses) without adequate safeguards.

The Scale of the Problem

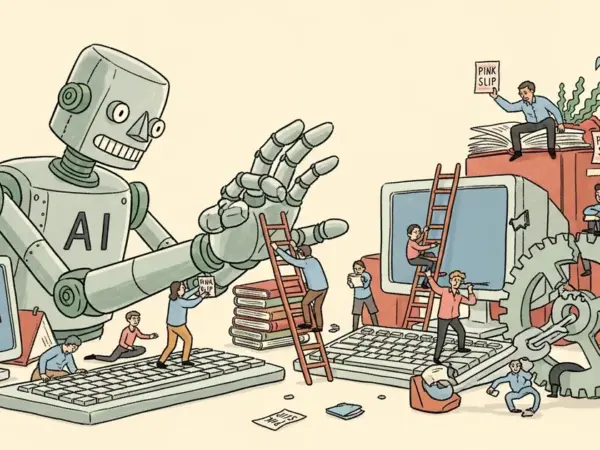

The deepfake crisis in 2026 is distinguished by accessibility, not capability:

- 2023: Creating convincing deepfakes required technical skill and powerful hardware

- 2024: Consumer apps made basic deepfakes accessible to anyone with a smartphone

- 2026: Multiple free, open-source models let users generate photorealistic images with no technical knowledge

The National Center for Missing & Exploited Children reported a 400% increase in deepfake-related reports from 2024 to 2025. The 2026 trajectory suggests further acceleration.

Legislation Status

Legislative responses are fragmented:

- Federal: The DEEPFAKES Accountability Act was introduced but hasn’t passed

- State level: 23 states have passed deepfake-related laws, but definitions and penalties vary widely

- EU: From August 2026, the AI Act will require deepfake disclosure, but it doesn’t specifically address non-consensual intimate imagery

The legislative gap means enforcement currently relies on existing harassment, child exploitation, and defamation laws — tools designed before AI-generated content existed.

What Can Be Done

Technical solutions exist but aren’t widely deployed: content provenance labeling and digital watermarking (such as the C2PA standard), AI detection tools, and platform-level filtering. The fundamental challenge is that detection is always playing catch-up with generation: as models improve, synthetic content becomes harder to detect. Mayor Scott’s demand for action reflects the reality that technology alone isn’t solving the problem. A solution also requires legal frameworks, platform accountability, and educational intervention.