Researcher Azam Nouri submitted a paper on 24 March 2026 introducing StepCache, a backend-agnostic caching layer for large language model serving that reuses cached outputs at the granularity of individual steps rather than whole responses. The paper, available at arXiv:2603.28795, targets a specific class of serving workload where repeated requests share the same solution structure but vary in localized details such as output schema, variable names, or numeric constants.

- StepCache reduced median LLM serving latency from 2.42 seconds to 0.01 seconds in benchmark testing

- End-to-end correctness improved from 72.5% to 100% under task-specific verification checks

- 79.7% of benchmark requests took a full reuse path with no model regeneration required

- Token usage dropped from 36,100 to 27,300 across the benchmark request set

What Happened

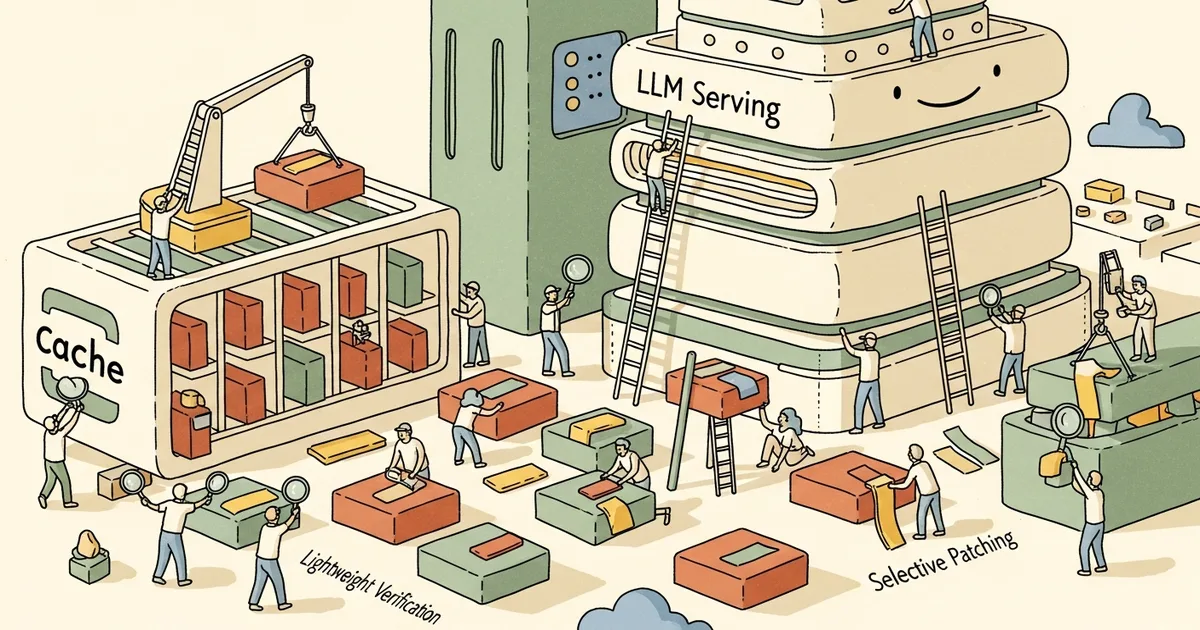

Azam Nouri’s paper, submitted to arXiv on 24 March 2026, presents StepCache as a response to a structural gap in current LLM serving infrastructure. Existing caching methods either reuse full responses, an approach that fails when requests differ even slightly, or reuse model-internal KV and prefix states, which are tightly coupled to specific serving backends. StepCache instead segments the model’s output into ordered steps, retrieves the closest-matching cached request, verifies each step independently using lightweight task-aware checks, and regenerates only the steps that fail verification.
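The segment, retrieve, verify, and patch sequence described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the class `StepLevelCache`, the line-per-step segmentation, the `difflib` similarity measure, and the toy `verify` and `regenerate` functions are all hypothetical stand-ins, and the fallback for requests with no useful match is omitted.

```python
from difflib import SequenceMatcher
from typing import Callable, List, Tuple

# Hypothetical names throughout; this is not the paper's code or API.
Verifier = Callable[[str, str], bool]               # (prompt, step) -> step still valid?
Regenerator = Callable[[str, int, List[str]], str]  # (prompt, step index, steps) -> new step


def segment(output: str) -> List[str]:
    """Split an output into ordered steps. Assumption: one non-empty line per step."""
    return [line.strip() for line in output.splitlines() if line.strip()]


class StepLevelCache:
    def __init__(self) -> None:
        self._entries: List[Tuple[str, List[str]]] = []

    def store(self, prompt: str, output: str) -> None:
        self._entries.append((prompt, segment(output)))

    def nearest(self, prompt: str) -> Tuple[float, List[str]]:
        """Return (similarity, steps) of the closest cached prompt (difflib as a stand-in)."""
        best: Tuple[float, List[str]] = (0.0, [])
        for cached_prompt, steps in self._entries:
            score = SequenceMatcher(None, prompt, cached_prompt).ratio()
            if score > best[0]:
                best = (score, steps)
        return best

    def serve(self, prompt: str, verify: Verifier,
              regenerate: Regenerator) -> Tuple[List[str], List[int]]:
        """Reuse cached steps, regenerating only those that fail verification."""
        _, cached_steps = self.nearest(prompt)
        steps = list(cached_steps)
        patched: List[int] = []
        for i, step in enumerate(cached_steps):
            if not verify(prompt, step):
                steps[i] = regenerate(prompt, i, steps)
                patched.append(i)
        return steps, patched


def verify(prompt: str, step: str) -> bool:
    # Toy check: a step assigning a literal number must use a number from the prompt.
    _, _, value = step.partition(" = ")
    return (not value.isdigit()) or (value in prompt.split())


def regenerate(prompt: str, index: int, steps: List[str]) -> str:
    # Stand-in for a backend model call that rewrites only the failing step.
    name = steps[index].partition(" = ")[0]
    words = prompt.split()
    return f"{name} = {words[words.index(name) + 1]}"


cache = StepLevelCache()
cache.store("rectangle width 3 height 4", "width = 3\nheight = 4\narea = width * height")
steps, patched = cache.serve("rectangle width 5 height 4", verify, regenerate)
print(steps)    # ['width = 5', 'height = 4', 'area = width * height']
print(patched)  # [0]
```

Only the step carrying the changed constant is regenerated; the other two are reused verbatim, which is the behavior the paper attributes to selective patching.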

Why It Matters

The two dominant caching strategies in LLM serving each carry significant trade-offs. Semantic caching reuses complete responses and is described in the paper as brittle under partial changes. KV and prefix caching is more granular but tightly coupled to specific backends, limiting portability across serving frameworks.

StepCache’s backend-agnostic architecture means the layer can sit above any LLM serving stack without modifying the underlying inference engine. This is relevant for production environments that mix backends or run multiple model versions in parallel, where deep integration with a single inference framework creates operational risk.

Step-level reuse also opens a path to combining caching with active error correction — a capability neither semantic caching nor prefix caching provides — as demonstrated by the system’s behavior on linear equation tasks.

Technical Details

The evaluation used a CPU-only, perturbation-heavy micro-benchmark covering math and JSON task variants, with results averaged over three random seeds. Under these conditions, StepCache reduced mean latency from 2.13 seconds to 0.67 seconds and median latency from 2.42 seconds to 0.01 seconds. The p95 latency improvement was far more modest, from 3.38 seconds to 3.30 seconds, indicating that the costliest requests still run at close to baseline latency even with caching active.
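To put the three latency figures on a common scale, the short calculation below converts each reported pair into a speedup ratio. The numbers are the ones quoted above; the `speedup` helper is just illustrative arithmetic.

```python
# Latency in seconds as reported: (baseline, with StepCache).
latency = {
    "mean": (2.13, 0.67),
    "median": (2.42, 0.01),
    "p95": (3.38, 3.30),
}


def speedup(baseline: float, cached: float) -> float:
    """Ratio of baseline latency to cached latency."""
    return baseline / cached


for name, (baseline, cached) in latency.items():
    print(f"{name}: {speedup(baseline, cached):.2f}x")
# mean: 3.18x
# median: 242.00x
# p95: 1.02x
```

The gap between a 242x median speedup and a 1.02x p95 speedup reflects the split between requests served almost entirely from cache and requests that still pay for model generation.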

Total token usage fell from 36,100 to 27,300 across the benchmark, a reduction of about 24%, and end-to-end correctness rose from 72.5% to 100% under task-specific checks combined with a stitched-output integrity check. Request routing broke down as follows: 79.7% took the reuse-only fast path requiring no regeneration, 5.4% required selective patching of individual failing steps, and 14.9% triggered a skip-reuse fallback for requests too semantically divergent to benefit from caching.
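One way to picture the three routing outcomes is as a decision over two signals: how close the best cached match is, and which cached steps pass verification. The sketch below is an assumption about how such a dispatcher could look, not the paper's logic; the `Route` enum, the `choose_route` function, the 0.5 similarity threshold, and the sample inputs are all made up for illustration.

```python
from collections import Counter
from enum import Enum
from typing import List


class Route(Enum):
    REUSE_ONLY = "reuse-only"
    SELECTIVE_PATCH = "selective patch"
    SKIP_REUSE = "skip-reuse"


def choose_route(similarity: float, step_ok: List[bool],
                 min_similarity: float = 0.5) -> Route:
    """Pick a path from match similarity and per-step verification results.

    min_similarity is a hypothetical threshold, not a value from the paper.
    """
    if similarity < min_similarity or not step_ok:
        return Route.SKIP_REUSE       # too divergent, or nothing cached to reuse
    if all(step_ok):
        return Route.REUSE_ONLY       # every cached step verifies; no model call
    return Route.SELECTIVE_PATCH      # regenerate only the failing steps


# Made-up (similarity, per-step verification) pairs, for illustration only.
requests = [
    (0.95, [True, True, True]),
    (0.90, [True, False, True]),
    (0.20, [False, False]),
    (0.97, [True, True]),
]
tally = Counter(choose_route(sim, ok) for sim, ok in requests)
for route in Route:
    print(f"{route.value}: {100 * tally[route] / len(requests):.1f}%")
# reuse-only: 50.0%
# selective patch: 25.0%
# skip-reuse: 25.0%
```

Tallying routes this way is how a breakdown like the reported 79.7% / 5.4% / 14.9% split could be produced from a request log.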

Nouri describes the system’s core operation as one that “segments outputs into ordered steps, retrieves the best-matching cached request, verifies steps using lightweight task-aware checks, and regenerates only failing regions via selective patching.” For JSON-structured outputs, StepCache adds enforcement covering single-step extraction, required-key constraints, and a one-shot repair pass. For linear equations specifically, verification is promoted into active correction: a bounded repair loop that falls back to a deterministic solver when the backend model fails, guaranteeing correctness for that task class regardless of model output quality.
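The linear-equation behavior, where verification turns into correction, can be illustrated with a small sketch. It assumes equations of the form a*x + b = c with integer coefficients and uses exact rational arithmetic; the function names, the `max_repairs` default, and the `stale_model` stub standing in for a backend call are hypothetical and not taken from the paper.

```python
from fractions import Fraction
from typing import Callable, Optional, Tuple

# Hypothetical signature for a backend call that proposes a value for x.
ModelCall = Callable[[int, int, int], Optional[Fraction]]


def satisfies(a: int, b: int, c: int, x: Fraction) -> bool:
    """Verify a candidate by substituting it into a*x + b = c."""
    return a * x + b == c


def solve_exact(a: int, b: int, c: int) -> Fraction:
    """Deterministic fallback: x = (c - b) / a, computed exactly."""
    if a == 0:
        raise ValueError("a must be non-zero for a unique solution")
    return Fraction(c - b, a)


def answer_with_repair(a: int, b: int, c: int, cached_x: Optional[Fraction],
                       ask_model: ModelCall, max_repairs: int = 2) -> Tuple[Fraction, str]:
    """Try the cached answer, then a bounded number of model repairs, then the solver."""
    if cached_x is not None and satisfies(a, b, c, cached_x):
        return cached_x, "cache"
    for _ in range(max_repairs):
        candidate = ask_model(a, b, c)
        if candidate is not None and satisfies(a, b, c, candidate):
            return candidate, "model"
    return solve_exact(a, b, c), "solver"


def stale_model(a: int, b: int, c: int) -> Fraction:
    # Stub backend that keeps returning an outdated answer.
    return Fraction(2)


print(answer_with_repair(3, 1, 7, Fraction(2), stale_model))   # (Fraction(2, 1), 'cache')
print(answer_with_repair(3, 1, 10, Fraction(2), stale_model))  # (Fraction(3, 1), 'solver')
```

Because the final branch never depends on the model, the returned value always satisfies the equation when a is non-zero, which is the sense in which correctness is guaranteed for this task class.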

Who’s Affected

The work is most directly relevant to teams running high-throughput LLM inference pipelines where prompt templates are reused across large request batches with varying parameters. Practical use cases include code generation with differing variable names or constraints, document extraction using fixed schemas, and form-processing workflows where structure is shared but field values change per request.

Because the system is described as backend-agnostic, it should in principle be able to sit in front of serving frameworks such as vLLM and Hugging Face Text Generation Inference without modification to the underlying model or inference layer. Operators of multi-tenant or multi-model deployments stand to benefit most, given the system’s ability to route requests across reuse, patching, and fallback paths automatically.

What’s Next

The reported results come from a CPU-only micro-benchmark, not a GPU production deployment or real-world traffic study. The modest p95 improvement (3.38 to 3.30 seconds, against a roughly 240-fold improvement at the median) suggests that requests requiring full regeneration or extensive patching still carry near-baseline costs. Tail-latency behavior under production load remains untested.

No public code repository was linked in the arXiv submission as of 1 April 2026. Independent replication under GPU inference conditions and across diverse production workloads would be needed to validate whether the latency and correctness gains hold beyond the controlled benchmark environment described in the paper.