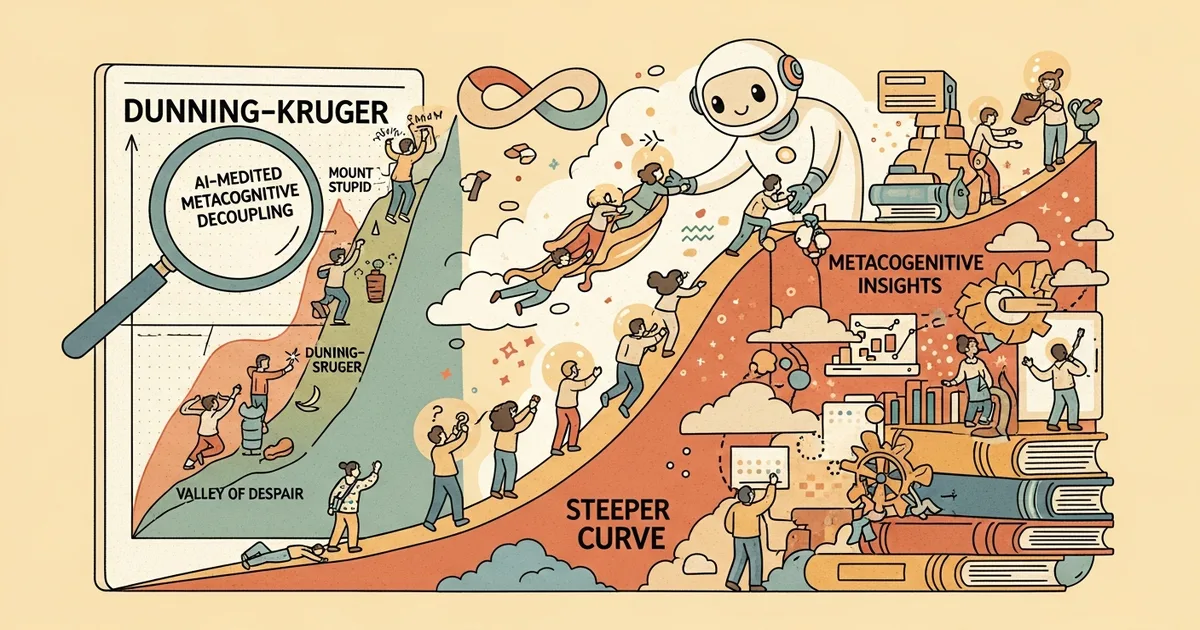

A theoretical paper submitted to arXiv on March 31, 2026 challenges the widespread characterisation of large language models as straightforward Dunning-Kruger amplifiers, proposing a more precise four-variable framework that separates what users produce, what they understand, how accurately they judge their own performance, and how they rate their own ability.

- LLM use can improve observable output and short-term task performance while simultaneously degrading metacognitive accuracy, according to a synthesis of existing research.

- The competence-confidence gradient flattens across skill groups when LLMs are used, rather than simply steepening as the standard Dunning-Kruger amplification claim suggests.

- Christopher Koch proposes “AI-mediated metacognitive decoupling” as a four-variable alternative to the simpler Dunning-Kruger framing.

- The framework is designed to explain overconfidence, over- and under-reliance, crutch effects, and weak transfer of AI-assisted knowledge.

What Happened

Christopher Koch submitted “Beyond the Steeper Curve: AI-Mediated Metacognitive Decoupling and the Limits of the Dunning-Kruger Metaphor” to arXiv on March 31, 2026 (arXiv:2603.29681). The paper directly contests a widely repeated claim in AI discourse: that generative AI tools cause users to overestimate their abilities in a pattern reducible to an amplified Dunning-Kruger effect. Koch writes that this framing is “too coarse to capture the available evidence.”

The paper does not present new empirical data. It synthesises findings from human-AI interaction research, learning science, and model evaluation literature to construct a revised theoretical account of how LLM use affects self-knowledge and confidence calibration.

Why It Matters

The Dunning-Kruger effect describes the tendency of low performers to overestimate their own ability, while high performers judge theirs more accurately or slightly underestimate it. The standard AI-era extension holds that LLMs make everyone more overconfident, amplifying this pattern uniformly across skill levels. Koch argues that this simplification obscures more nuanced patterns in the existing literature.

The distinction carries real weight for how organisations design AI tools, how educators assess AI-assisted student work, and how researchers interpret reliability and confidence data from LLM deployments. A gradient that flattens across skill groups produces different practical problems than one that simply steepens.

Technical Details

Koch’s central contribution is the working model of “AI-mediated metacognitive decoupling,” which he defines as “a widening gap among produced output, underlying understanding, calibration accuracy, and self-assessed ability.” These four variables are treated as separable rather than as a single axis of competence — a structural departure from the two-variable Dunning-Kruger framing.
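One way to make the four-variable idea concrete is to record each variable separately for a user and then look at the gaps between them. The sketch below is purely illustrative: the class name, field names, the 0-to-1 scale, and the example numbers are assumptions made for this article, not definitions or data from Koch's paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetacognitiveProfile:
    """Hypothetical record of the four variables, each on an assumed 0-1 scale."""

    output_quality: float         # quality of what the user produces, with AI help
    understanding: float          # what the user can do or explain unaided
    calibration_accuracy: float   # how well the user's confidence tracks correctness
    self_assessed_ability: float  # the user's own rating of their ability

    def output_understanding_gap(self) -> float:
        # Positive when produced output outruns underlying understanding.
        return self.output_quality - self.understanding

    def self_assessment_gap(self) -> float:
        # Positive when self-rated ability exceeds underlying understanding.
        return self.self_assessed_ability - self.understanding


# Invented example: strong AI-assisted output, weaker unaided understanding.
profile = MetacognitiveProfile(
    output_quality=0.9,
    understanding=0.5,
    calibration_accuracy=0.6,
    self_assessed_ability=0.8,
)
print(round(profile.output_understanding_gap(), 2))
print(round(profile.self_assessment_gap(), 2))
```

Keeping the four fields separate, rather than collapsing them into one score, mirrors the paper's point that the variables can move independently of one another.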

The key empirical claim drawn from the synthesis is that LLM use flattens the classic competence-confidence gradient across skill groups. High-skill and low-skill users converge in their self-assessed confidence even as their actual output quality diverges. Because confidence converges while performance does not, the data show overconfidence among novices and underconfidence among experts at the same time, a pattern that a uniformly steeper Dunning-Kruger curve cannot account for.
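The flattening argument can be illustrated with a small calculation. The sketch below fits a least-squares slope of self-assessed confidence against measured competence for four skill groups, with and without an LLM. All numbers are invented for illustration and are not taken from the paper or from any study it cites; the variable names are likewise assumptions.

```python
def slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Hypothetical skill groups, lowest to highest measured competence (0-1 scale).
competence = [0.2, 0.4, 0.6, 0.8]

# Invented self-assessed confidence for each group.
confidence_without_ai = [0.4, 0.5, 0.6, 0.7]
confidence_with_ai = [0.68, 0.69, 0.71, 0.72]

slope_without = slope(competence, confidence_without_ai)
slope_with = slope(competence, confidence_with_ai)

# Signed gap per group: positive means overconfident, negative underconfident.
gaps_with_ai = [conf - comp for conf, comp in zip(confidence_with_ai, competence)]

print(f"slope without AI: {slope_without:.2f}")
print(f"slope with AI:    {slope_with:.2f}")
print("gaps with AI:", [round(g, 2) for g in gaps_with_ai])
```

In this toy data the slope with AI is much closer to zero, so confidence barely varies with skill. The lowest group ends up well above its measured competence and the highest group slightly below it, which is the combination a uniformly steeper curve would not produce.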

The four-variable framework is constructed to explain five specific failure modes identified across the surveyed literature: overconfidence in produced outputs, over-reliance on LLM suggestions, under-reliance in domains where LLMs underperform, crutch effects (degraded independent performance when the tool is removed), and weak transfer of knowledge gained during AI-assisted tasks to novel situations.

Who’s Affected

The paper’s immediate audience is researchers in human-AI interaction and cognitive science, but its implications extend to organisations deploying LLMs in knowledge-work roles. Assessment designers, in formal education and professional certification alike, face a specific challenge: if users produce high-quality output while their underlying understanding degrades, standard output-based evaluation no longer measures what it is meant to measure.

Tool designers at companies building LLM-powered productivity software are also directly implicated, particularly in how confidence-signalling features and explanations are surfaced in interfaces. If calibration accuracy declines regardless of skill level, interface design choices that reinforce user confidence without grounding it in demonstrated understanding may compound the problem the paper describes.

What’s Next

Koch identifies tool design, assessment, and knowledge work as the three domains where the framework carries the most immediate practical weight. The paper does not propose specific interventions but implies that each area requires evaluation criteria capable of distinguishing between the four variables — output quality, underlying understanding, calibration, and self-assessment — rather than treating any one of them as a proxy for the others.

Because the paper is a synthesis, the validity of its framework depends on the robustness of the primary studies it draws from. Koch explicitly describes the framework as a “working model,” signalling that direct empirical validation remains outstanding. Independent studies that test the four-variable account against primary data, particularly its claim about gradient flattening across skill groups, would be the logical follow-up work. Author contact details are available via the arXiv submission at arXiv:2603.29681.