A study submitted to arXiv on March 31, 2026 found that three commercial large language models — GPT-4o, Claude Sonnet 4.5, and Gemini 2.5 Pro — produced clinically accurate prior authorization letters across a structured set of synthetic scenarios, but all three consistently omitted administrative fields that payer workflows require for submission. The paper was authored by Moiz Sadiq Awan and Maryam Raza.

- GPT-4o, Claude Sonnet 4.5, and Gemini 2.5 Pro each generated letters with accurate diagnoses, well-structured medical necessity arguments, and step therapy documentation across 45 physician-validated synthetic scenarios

- A secondary analysis against real-world payer requirements found three recurring deficiencies across all models: absent billing codes, missing authorization duration requests, and inadequate follow-up plans

- The evaluation covered five specialties: rheumatology, psychiatry, oncology, cardiology, and orthopedics

- The authors conclude the barrier to clinical deployment is not whether LLMs can write clinically adequate letters, but whether the surrounding systems can supply the required administrative precision

What Happened

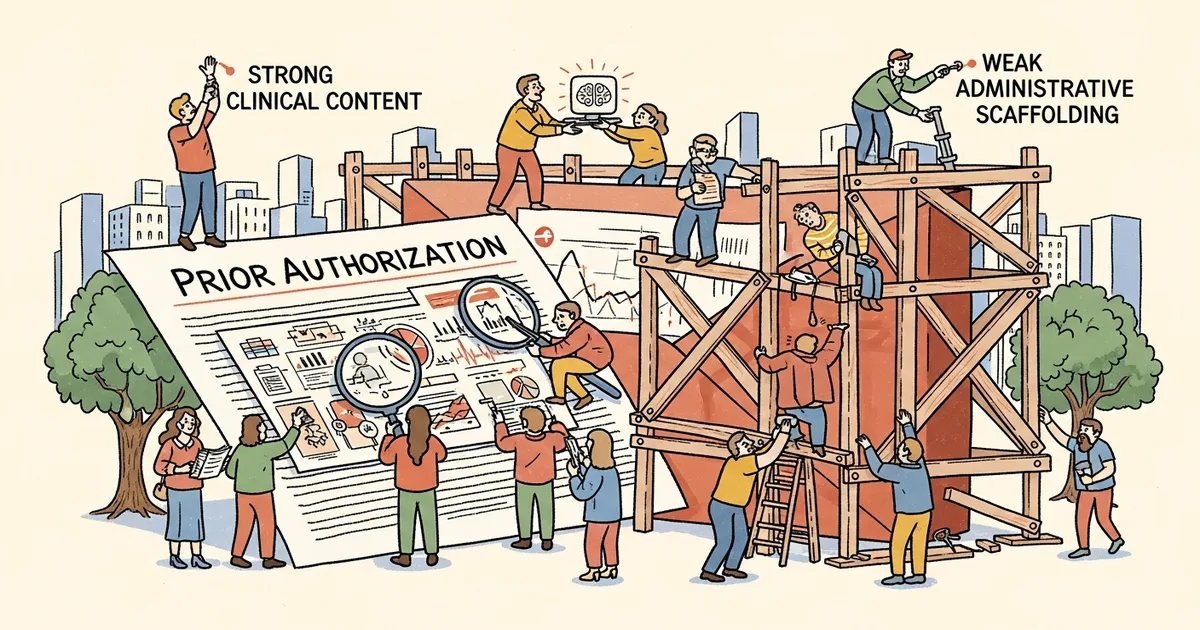

Moiz Sadiq Awan and Maryam Raza submitted “AI-Generated Prior Authorization Letters: Strong Clinical Content, Weak Administrative Scaffolding” to arXiv on March 31, 2026. The paper presents a structured multi-model evaluation of how well commercial LLMs generate complete, submission-ready prior authorization letters, testing three widely used models across 45 physician-validated synthetic clinical scenarios spanning five medical specialties.

Prior authorization — the process by which insurers require physician pre-approval before covering certain treatments or medications — consumes billions of dollars and thousands of physician hours annually in U.S. healthcare, according to the paper. The authors note that earlier research on LLM-generated authorization letters was limited to single-case demonstrations, making structured multi-scenario evaluation a gap in the existing literature.

Why It Matters

The study introduces a two-layer evaluation framework that separates clinical adequacy from administrative completeness — a distinction that prior work in this area had not made explicit. Clinical reviewers assessing letter quality would not necessarily flag missing billing codes or omitted authorization duration fields; those deficiencies only surface when outputs are checked against real-world payer submission requirements.

Because clinical scoring and payer-workflow compatibility measure fundamentally different things, studies that rely solely on clinical reviewers may overstate how deployment-ready LLM-generated letters actually are. Awan and Raza’s secondary analysis is what revealed the consistent shortcomings across all three models.

Technical Details

The researchers tested GPT-4o, Claude Sonnet 4.5, and Gemini 2.5 Pro on 45 physician-validated synthetic scenarios distributed across rheumatology, psychiatry, oncology, cardiology, and orthopedics. All three models produced letters with accurate diagnoses, well-structured medical necessity arguments, and thorough step therapy documentation — the documentation showing that a patient has already tried lower-cost alternatives before a higher-cost treatment is requested, which most payers require as a condition of approval.

The secondary analysis against real-world administrative requirements identified three gaps present across all three models: absent billing codes, missing authorization duration requests, and inadequate follow-up plans. These are structural components of a payer-ready submission, not clinical judgments. As Awan and Raza write: “the challenge for clinical deployment is not whether LLMs can write clinically adequate letters, but whether the systems built around them can supply the administrative precision that payer workflows require.”
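To make the three gaps concrete, a post-generation check might scan a letter for each field before it is queued for submission. The Python sketch below is illustrative only and is not taken from the paper: the function name `find_admin_gaps`, the regular expressions, and the sample letter are all assumptions, and real payers define their own required fields and formats. A keyword match can show that a follow-up plan is mentioned, not that it is adequate.

```python
import re

# Hypothetical presence checks for the three gaps named in the study.
# These patterns are rough heuristics, not payer rules.
CHECKS = {
    # A labeled code such as "ICD-10: M05.79", "CPT code 96413", or "HCPCS J0129".
    "billing_code": re.compile(
        r"\b(?:CPT|HCPCS|ICD-10(?:-CM)?)(?:\s+codes?)?\s*:?\s*[A-Z]?\d[0-9A-Z.]{2,6}\b",
        re.IGNORECASE,
    ),
    # A duration stated in the same sentence as an authorization request,
    # so that "tried methotrexate for 6 months" does not count.
    "authorization_duration": re.compile(
        r"authoriz\w*[^.\n]{0,80}?\b\d+\s*(?:day|week|month|year)s?\b",
        re.IGNORECASE,
    ),
    # Any mention of follow-up; this cannot judge whether the plan is adequate.
    "follow_up_plan": re.compile(r"\bfollow[- ]?up\b", re.IGNORECASE),
}


def find_admin_gaps(letter: str) -> list[str]:
    """Return the names of administrative fields not detected in the letter."""
    return [name for name, pattern in CHECKS.items() if not pattern.search(letter)]


# A made-up letter that is clinically worded but administratively bare.
letter = (
    "Diagnosis: seropositive rheumatoid arthritis. The patient tried "
    "methotrexate for 6 months without adequate response. "
    "We request approval of the biologic as medically necessary."
)
print(find_admin_gaps(letter))
```

A check like this only reports what is missing. It does not supply the correct code or duration, which is the part the authors argue must come from the surrounding system.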

The study used synthetic rather than real patient scenarios, and the generated letters were not submitted to live payer systems — meaning actual rejection rates attributable to these deficiencies remain unmeasured.

Who’s Affected

Healthcare providers piloting or evaluating AI-assisted prior authorization tools face a direct practical implication: a system that generates clinically sound letters but omits billing codes or fails to specify authorization duration would likely produce rejections or manual review delays without clearly surfacing the cause. The gap between clinical quality and payer-submission readiness is not visible from the letter content alone.

Insurance payers, whose workflows process these letters through structured automated and manual review systems, are downstream recipients of the administrative gaps the study identifies. Vendors and developers building prior authorization automation products on top of commercial LLMs will need to address how payer-specific administrative fields are supplied — whether through EHR integration, template scaffolding, or post-generation validation layers.

What’s Next

The study’s scope is bounded by its use of physician-validated synthetic scenarios: letters were not submitted to actual payer systems, and Awan and Raza do not report downstream acceptance or rejection rates. Testing AI-generated letters against live payer submission infrastructure would determine whether the identified administrative gaps translate into measurable rejection rates in practice.

Closing the administrative gap likely requires systems design rather than model-level fixes — specifically, integration with practice management or EHR systems that can auto-populate billing codes, authorization durations, and payer-specific formatting requirements before a letter reaches submission.
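As a rough illustration of that systems-design approach, the administrative fields could be held as structured data and merged into the model's clinical narrative, rather than generated by the model. The Python sketch below assumes a hypothetical `AdminFields` record (standing in for whatever a practice management or EHR export would provide) and a hypothetical `assemble_submission` helper; neither comes from the paper or from any real EHR API, and payer-specific formatting is left out.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AdminFields:
    """Hypothetical structured record, e.g. from a practice management system."""
    billing_codes: list[str] = field(default_factory=list)
    authorization_months: Optional[int] = None
    follow_up_plan: str = ""


def missing_fields(fields: AdminFields) -> list[str]:
    """List the administrative fields that have not been supplied."""
    missing = []
    if not fields.billing_codes:
        missing.append("billing_codes")
    if fields.authorization_months is None:
        missing.append("authorization_months")
    if not fields.follow_up_plan.strip():
        missing.append("follow_up_plan")
    return missing


def assemble_submission(letter_body: str, fields: AdminFields) -> str:
    """Append structured administrative fields to a model-written letter body.

    Raises ValueError instead of emitting an incomplete submission.
    """
    missing = missing_fields(fields)
    if missing:
        raise ValueError("cannot assemble submission; missing: " + ", ".join(missing))
    admin_block = "\n".join([
        "Billing codes: " + ", ".join(fields.billing_codes),
        f"Requested authorization duration: {fields.authorization_months} months",
        "Follow-up plan: " + fields.follow_up_plan.strip(),
    ])
    return letter_body.rstrip() + "\n\n" + admin_block + "\n"


# Example with made-up values.
fields = AdminFields(
    billing_codes=["J0129"],
    authorization_months=12,
    follow_up_plan="Reassess disease activity at 3 months.",
)
print(assemble_submission("Letter body written by the model.", fields))
```

Failing loudly when a field is absent is a deliberate choice here: it surfaces the cause before submission instead of leaving it to a payer rejection.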