- Replicate provides cloud API access to thousands of open-source ML models with pay-per-second billing starting at $0.0001/second for CPU inference.

- Models scale to zero when idle, meaning users pay nothing between requests, but cold starts add latency to the first call after a period of inactivity.

- The platform recently joined Cloudflare, signaling a shift toward edge-based ML inference at scale.

- High-volume production workloads may find per-second billing more expensive than reserved GPU instances from traditional cloud providers.

What Happened

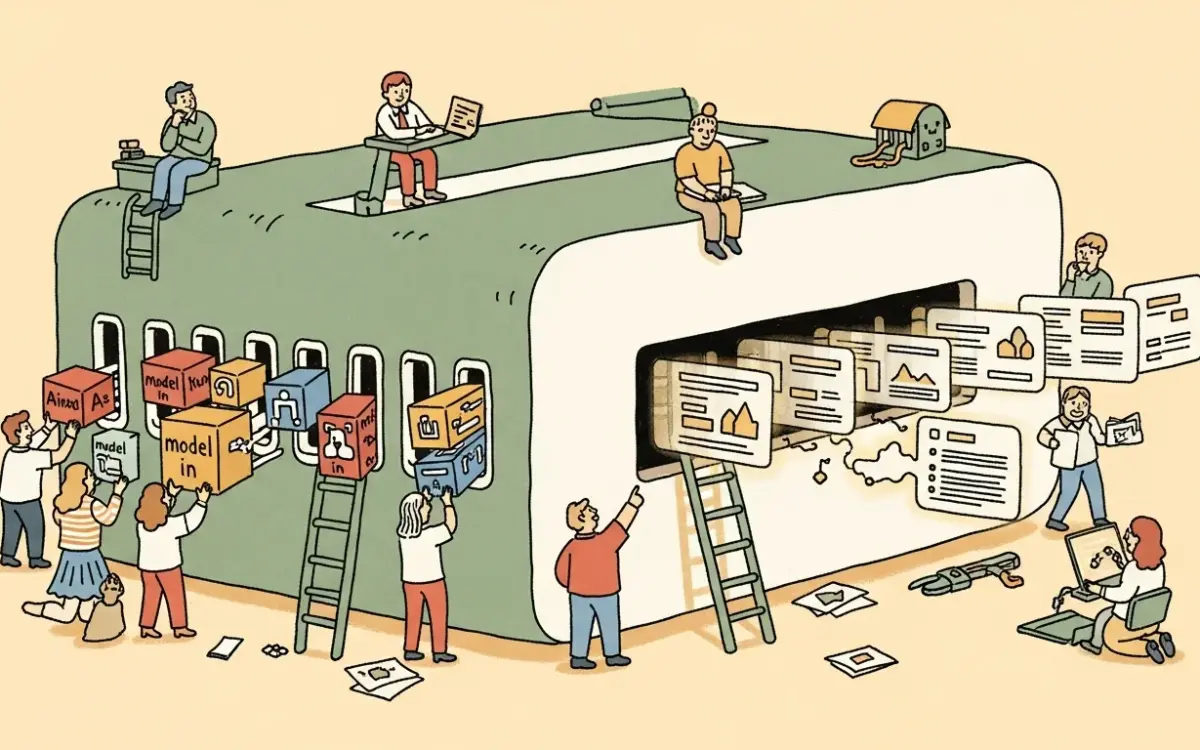

Replicate has positioned itself as the simplest path from machine learning model to production API endpoint. Founded with the goal of making ML accessible to any developer regardless of infrastructure expertise, the platform now hosts thousands of community-contributed models spanning image generation, large language models, audio processing, video generation, and computer vision. Users can run any model with a single API call, with no GPU provisioning, container orchestration, or infrastructure management required.

The company recently announced it has joined Cloudflare, a move that could bring ML inference closer to the network edge and reduce latency for global applications. Separately, Replicate supports custom model deployment through Cog, its open-source packaging tool that converts ML models into production-ready Docker containers with built-in API endpoints.

Why It Matters

Running ML models in production traditionally requires deep infrastructure expertise: teams need to provision GPUs, manage Docker containers, handle scaling, and monitor performance. Replicate takes over most of this operational stack. A developer with no ML infrastructure experience can deploy a model and start serving predictions within minutes, which has made the platform a common choice for startups that need AI capabilities without hiring dedicated ML operations engineers.

The pay-per-second billing model aligns costs with actual usage. CPU inference costs $0.0001 per second. GPU pricing varies by hardware tier: NVIDIA T4 at $0.000225/second, L40S at $0.000975/second, and A100 (80GB) at $0.0014/second. There is no minimum spend, and models that receive no traffic incur zero cost.
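To make the per-second rates easier to compare, the sketch below converts them into a per-run and a per-hour cost. The rates are the ones quoted above; the dictionary keys and function names are illustrative labels chosen for this example, not Replicate hardware identifiers.

```python
# Per-second rates as quoted in this article (USD).
# The keys are labels invented for this sketch, not official SKU names.
PRICE_PER_SECOND = {
    "cpu": 0.0001,
    "t4": 0.000225,
    "l40s": 0.000975,
    "a100-80gb": 0.0014,
}


def run_cost(hardware: str, seconds: float) -> float:
    """Cost in USD of one run that occupies `hardware` for `seconds`."""
    return PRICE_PER_SECOND[hardware] * seconds


def hourly_cost(hardware: str) -> float:
    """Cost in USD of keeping `hardware` busy for a full hour."""
    return run_cost(hardware, 3600)


if __name__ == "__main__":
    for name in PRICE_PER_SECOND:
        print(f"{name:10s} 10 s run: ${run_cost(name, 10):.4f}   "
              f"1 hour busy: ${hourly_cost(name):.2f}")
```

At these rates, a 10-second run on an A100 (80GB) costs about $0.014, and an hour of continuous use costs about $5.04.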

Technical Details

Replicate’s model catalog includes current-generation tools such as FLUX for image generation, Runway Gen 4.5 for video, ElevenLabs for text-to-speech, and Google Gemini 3 Flash for text generation. New models from the open-source community are added regularly, and popular models like Stable Diffusion and Llama variants often appear on the platform within days of their public release.

The platform provides SDKs for Python and Node.js, plus raw HTTP endpoints for any programming language. Authentication uses API tokens, and built-in dashboards provide logging, cost tracking, and usage metrics.
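As a rough illustration of the raw HTTP path, the sketch below builds (but does not send) a prediction request using only the Python standard library. The endpoint, bearer-token header, and `version`/`input` body reflect Replicate's public HTTP API as we understand it; the version ID and prompt are placeholders, and `build_prediction_request` is a hypothetical helper name, so check the current API reference before relying on any of it.

```python
import json
import urllib.request

# Assumed endpoint for creating a prediction; verify against the current docs.
API_URL = "https://api.replicate.com/v1/predictions"


def build_prediction_request(version: str, model_input: dict,
                             token: str) -> urllib.request.Request:
    """Build a POST request that asks Replicate to run one model version.

    `version` is the model version ID, `model_input` is the model-specific
    input payload, and `token` is an API token.
    """
    body = json.dumps({"version": version, "input": model_input}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
request = build_prediction_request(
    version="<model-version-id>",
    model_input={"prompt": "an astronaut riding a horse"},
    token="<your-api-token>",
)
# Passing `request` to urllib.request.urlopen would submit the prediction;
# that call is left out here so the sketch runs without network access.
```

The official Python and Node.js SDKs wrap this same request-and-poll flow, so most applications would use them rather than hand-built requests.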

Custom model deployment works through Cog, which packages a model’s weights, dependencies, and inference code into a standardized container. Once pushed, Replicate handles scaling, hardware allocation, and request routing automatically. The auto-scaling system spins instances down to zero during idle periods and scales up on demand.

The cold-start tradeoff is the platform’s primary technical limitation. Models that have scaled to zero must reload weights and initialize the inference pipeline before serving the first request. For large models on GPU hardware, this can add 10-30 seconds of latency to the initial call. Subsequent requests within the keep-alive window are served at normal speed.
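The effect of cold starts on request latency can be approximated with a small model. This is a simplified sketch and not a description of Replicate's scheduler: the class name, the 20-second cold-start penalty, the 2-second inference time, and the 60-second keep-alive window are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScaleToZeroModel:
    """Toy latency model for a service that scales to zero when idle."""
    cold_start_s: float   # assumed time to reload weights after idling
    inference_s: float    # assumed time for one warm prediction
    keep_alive_s: float   # assumed idle time before the instance is released
    last_finished_at: Optional[float] = None

    def request_latency(self, now: float) -> float:
        """Return the latency of a request arriving at time `now` (seconds)."""
        is_cold = (
            self.last_finished_at is None
            or now - self.last_finished_at > self.keep_alive_s
        )
        latency = self.inference_s + (self.cold_start_s if is_cold else 0.0)
        # Remember when this request finished so the next one can tell
        # whether the instance is still warm.
        self.last_finished_at = now + latency
        return latency


if __name__ == "__main__":
    model = ScaleToZeroModel(cold_start_s=20.0, inference_s=2.0, keep_alive_s=60.0)
    for arrival in (0.0, 30.0, 200.0):
        print(f"t={arrival:>5.0f}s  latency={model.request_latency(arrival):.0f}s")
```

With these assumed numbers, the first request and the request after a long gap each take 22 seconds, while the request that arrives inside the keep-alive window takes 2 seconds.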

Who’s Affected

Startups and indie developers building AI-powered features benefit most. Companies like Character.ai, Photo.ai, and Magnific use Replicate to serve ML predictions without maintaining GPU infrastructure. The platform is particularly well-suited to variable workloads where traffic is unpredictable and scaling to zero between bursts saves significant cost.

Teams with consistent, high-volume inference workloads may find Replicate more expensive than alternatives. At sustained throughput, the per-second billing model can exceed the cost of reserved GPU instances from AWS, GCP, or dedicated providers. The Cog packaging format also adds a learning curve for teams deploying custom models.

What’s Next

Joining Cloudflare could reduce cold-start latency by distributing model serving across edge locations, but few implementation details have been published so far. For latency-sensitive applications, Replicate does offer dedicated deployments that keep models warm, though this removes the scale-to-zero cost advantage.

Developers evaluating Replicate should estimate their expected request volume and per-request runtime, then compare the resulting per-second bill with the cost of self-managed infrastructure to decide whether the convenience premium is justified. For workloads under a few thousand requests per day with variable traffic patterns, Replicate’s pricing is typically the cheaper option. Beyond that threshold, a cost comparison with reserved GPU instances is worth running before committing.
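A back-of-the-envelope version of that comparison is sketched below. The A100 (80GB) rate of $0.0014/second comes from the pricing quoted earlier; the $2.00/hour reserved-instance price and the 5 seconds of GPU time per request are hypothetical figures, so substitute real quotes and measured runtimes before drawing conclusions.

```python
def on_demand_daily_cost(requests_per_day: float, seconds_per_request: float,
                         price_per_second: float) -> float:
    """Daily cost in USD when paying only for the seconds each request uses."""
    return requests_per_day * seconds_per_request * price_per_second


def break_even_requests_per_day(reserved_hourly_usd: float,
                                seconds_per_request: float,
                                price_per_second: float) -> float:
    """Daily request volume at which per-second billing equals the cost of
    one reserved instance that is paid for around the clock."""
    reserved_daily = reserved_hourly_usd * 24
    return reserved_daily / (seconds_per_request * price_per_second)


if __name__ == "__main__":
    A100_PER_SECOND = 0.0014   # rate quoted in this article
    RESERVED_HOURLY = 2.00     # hypothetical reserved GPU price (USD/hour)
    SECONDS_PER_REQUEST = 5.0  # hypothetical average runtime per request

    threshold = break_even_requests_per_day(
        RESERVED_HOURLY, SECONDS_PER_REQUEST, A100_PER_SECOND)
    print(f"Break-even: about {threshold:,.0f} requests/day")
    for volume in (1_000, 5_000, 20_000):
        cost = on_demand_daily_cost(volume, SECONDS_PER_REQUEST, A100_PER_SECOND)
        print(f"{volume:>6} requests/day -> ${cost:.2f}/day on demand "
              f"vs ${RESERVED_HOURLY * 24:.2f}/day reserved")
```

Under these assumptions the break-even point is a little under 7,000 requests per day. The sketch ignores cold starts, the engineering time needed to run reserved hardware, and whether a single reserved instance could absorb peak traffic.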
