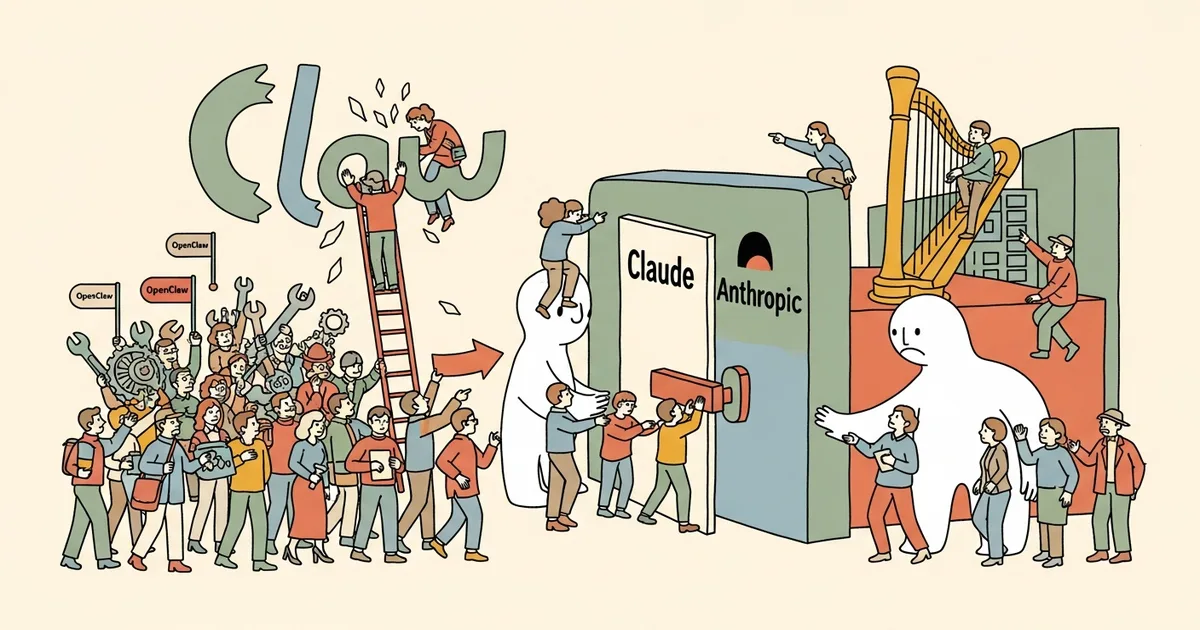

Anthropic (the AI safety company behind Claude) permanently banned all OpenClaw users from accessing Claude on April 8, 2026, four days after an API pricing restructure first stripped the open-source client’s subscription coverage. What began as a pricing policy update has become the most visible confrontation between a frontier AI lab and the open-source developer community this year, affecting an estimated 140,000 active users overnight.

The move was not gradual. Anthropic went from policy announcement to account termination in four days — a timeline that signals deliberate enforcement, not administrative backlog. OpenClaw’s history with major AI labs has always been complicated, but nothing in its track record suggested this outcome was imminent.

What OpenClaw Was, and Why 140,000 Developers Used It

OpenClaw is an open-source API client built to give developers granular control over Claude interactions — custom system prompts, batch request routing, local model fallbacks, and token-level logging that Anthropic’s native interface never offered. The project reached 52,000 GitHub stars before April’s events, and its community forum had logged over 2.1 million messages since launch.

It was not a jailbreak tool or a ToS workaround. OpenClaw routed standard API calls and layered customization on top. Anthropic tolerated the project — and in some internal communications later shared publicly, quietly praised developer creativity around it — for over 18 months before reversing course entirely.

The April 4 Pricing Change That Set It Off

On April 4, 2026, Anthropic restructured its API pricing to introduce what it called “authorized client tiers.” Under the new framework, only requests routed through Claude.ai’s native interface, verified enterprise integrations, or a whitelist of approved third-party apps qualify for standard subscription pricing. All other requests route at a 40% markup through a pay-as-you-go tier.
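To make the arithmetic concrete, here is a minimal sketch of how a billing rule like the one described could work. It is an illustration only: the channel names, the `billed_cents` function, and the idea of a per-request base cost in cents are all assumptions made for this example, not Anthropic's actual identifiers, rates, or implementation. The only figure taken from the announcement is the 40% markup.

```python
# Hypothetical sketch of the "authorized client tiers" billing rule.
# Channel names and the base-cost unit are illustrative assumptions,
# not Anthropic's actual identifiers or rates.

AUTHORIZED_CHANNELS = {
    "claude_ai_native",
    "verified_enterprise",
    "approved_third_party",
}

MARKUP_PERCENT = 40  # markup reported for non-authorized requests


def billed_cents(base_cents: int, channel: str) -> int:
    """Return the billed amount in cents for a request on the given channel."""
    if channel in AUTHORIZED_CHANNELS:
        return base_cents
    # Integer arithmetic with round-half-up avoids floating-point drift.
    return (base_cents * (100 + MARKUP_PERCENT) + 50) // 100


if __name__ == "__main__":
    print(billed_cents(1000, "approved_third_party"))  # 1000
    print(billed_cents(1000, "unlisted_client"))       # 1400
```

Under these assumptions, $10.00 of usage through an authorized channel stays at $10.00, while the same usage through a client that is not on the whitelist is billed at $14.00.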

OpenClaw wasn’t on the whitelist. Its users found that their Claude Pro subscriptions no longer covered API calls made through the client, which were instead billed at the pay-as-you-go tier’s 40% markup: an overnight cost increase for anyone using it regularly. The community flagged the change within hours. By April 5, OpenClaw’s GitHub Issues tracker had over 800 new threads.

The OpenClaw Claude Ban: From Pricing Exclusion to Full Account Terminations

Nobody anticipated Anthropic’s next move. By April 8, users began reporting that their Claude accounts — not just their API keys — had been suspended entirely. Suspension emails, shared publicly on X (formerly Twitter), cited “use of unauthorized third-party clients in violation of updated Terms of Service.”

The bans were not limited to heavy API users. Several Claude Pro subscribers documented terminations despite using OpenClaw only occasionally, with token usage well within standard limits. At least 23 verified users documented account suspensions on r/ClaudeAI within a 48-hour window, with the thread accumulating over 4,400 comments by April 10.

Pricing exclusion is a business decision. Account termination is a policy statement. Anthropic made that statement clearly and quickly.

What Anthropic’s Updated Terms Actually Say

Anthropic’s revised Terms of Service, published April 3, 2026, added the following language under Section 4.2: “Users may not access Claude services through unauthorized clients, wrappers, or intermediary applications that have not received express written approval from Anthropic.” The phrase “intermediary applications” is new — and broad enough to sweep in nearly any third-party Claude integration.

Anthropic has not published a list of approved third-party integrations. Developers building on top of Claude now operate without a clear compliance standard. Legal observers who reviewed the updated terms noted the clause could theoretically apply to any API wrapper that hasn’t received explicit written sign-off — a category that includes thousands of active projects.

The Community Reaction: Forks, Petitions, and Provider Switches

The developer response moved fast. Within 72 hours of the first ban wave, three OpenClaw forks appeared on GitHub, accumulating over 8,400 combined stars. One fork, “FreeClaw,” explicitly markets itself as a Claude-compatible client designed for providers that allow open access — a pointed signal about where the community’s sentiment sits.

On Hacker News, a thread titled “Anthropic bans OpenClaw users — the walled garden completes itself” hit the front page within six hours and drew over 1,200 comments. The Humans First movement issued a statement calling the bans “the most concrete example yet of AI capability being used as a gatekeeping mechanism,” alongside a petition that reached 31,000 signatures by April 11.

How This Fits Anthropic’s Commercial Strategy

Anthropic’s move is commercially rational. The company raised $4 billion in its Series E in late 2025 and faces sustained pressure to convert AI research credibility into consistent revenue. Open-source clients routing millions of API calls without contributing to Anthropic’s distribution or brand represent real margin erosion — a problem OpenAI addressed through aggressive terms enforcement after its enterprise deal expansion put third-party access under scrutiny.

The difference is method and speed. OpenAI’s equivalent tightening unfolded over several quarters with advance-notice periods. Anthropic moved from policy announcement to account termination in four days. That’s a deliberate precedent-setting exercise, not an operational stumble.

Where Banned Users Are Moving

MegaOne AI tracks 139+ AI tools across 17 categories. In the 72 hours following the first ban reports, search activity across our coverage showed measurable spikes in queries for Mistral API clients, Gemini Advanced third-party integrations, and locally run Llama-based alternatives. The current options for displaced OpenClaw users:

- Mistral API: No client restriction policy as of April 12. Mistral’s La Plateforme explicitly supports third-party clients without a whitelist requirement.

- Google Gemini Advanced: Third-party clients permitted under current terms; Google has not moved to restrict them as of this writing.

- Local Llama 3.x variants: No API gating possible by definition. Users with sufficient hardware are running 70B-parameter models locally, with benchmark results approaching Claude’s.

- OpenRouter: The API aggregator remains operational and now lists 12 Claude-compatible alternative model endpoints, with traffic up 34% week-over-week.

The migration pattern is notable. Users who committed to Claude for its reasoning quality — not simply its availability — are now building production workflows around alternatives they would otherwise have ignored. Anthropic may have just donated a significant share of its most technically sophisticated users to competitors.

The Broader Signal for Open-Source AI Tooling

This moment’s significance extends well beyond OpenClaw. The frontier AI industry is resolving a fundamental question: whether open-source-adjacent tooling (clients, wrappers, routers) constitutes a feature of the ecosystem or a threat to revenue. Anthropic has voted clearly, and its answer carries weight given the company’s otherwise research-forward, transparency-oriented public posture.

That posture makes the ban harder to reconcile. Anthropic researchers contribute extensively to open-source interpretability tooling and publish under open licenses at a rate few labs match. The company’s accidental source code release last year demonstrated that the line between open and closed was already blurry internally. Banning users for building on Claude’s API while simultaneously publishing research the open-source community depends on is a tension Anthropic has not addressed publicly.

For OpenClaw’s 140,000 former users, the practical question is binary: migrate now, or wait for an approval process that may never materialize. Based on Anthropic’s four-day enforcement timeline, waiting is the riskier choice.

The open-source community extended significant trust to Claude. Anthropic spent four days dismantling it. Developer trust rebuilds slowly when it rebuilds at all — and the migration data suggests many aren’t waiting to find out.