The recursive contamination of AI training pipelines reached a measurable inflection point in early 2026. A Towards Data Science analysis published this year drew wider attention to what AI researchers have been documenting since at least 2024: model collapse, the quality degradation that occurs when large language models are trained on AI-generated content rather than human-produced text. The open web, the primary training data source for every major foundation model, is now estimated to be between 20% and 57% AI-generated depending on the domain, according to multiple content provenance studies. The feedback loop is structural, and it is accelerating.

The finding is not speculative. It has a name, a mechanism, and a growing body of empirical evidence. The question is whether the industry can address it before the next generation of foundation models locks in the damage.

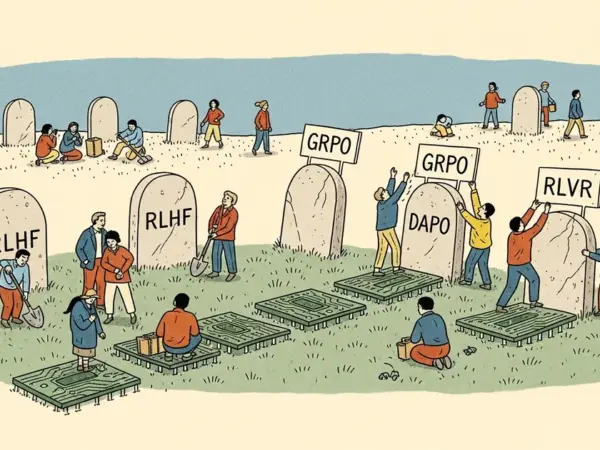

What Model Collapse Actually Means

Model collapse is not a metaphor. It refers to a specific, documented failure mode: when a model is trained on data generated by a previous model, the statistical distribution of outputs narrows. Edge cases disappear. Low-frequency but important patterns — rare vocabulary, unusual syntax, minority viewpoints, domain-specific reasoning — get averaged out over successive training iterations.

The foundational research comes from a July 2024 Nature paper by Shumailov et al., titled “AI models collapse when trained on recursively generated data.” Through controlled experiments, the researchers demonstrated that models trained on outputs from prior models showed measurable degradation in diversity and accuracy, and that the effect compounded with each generation. They described it as a statistical narrowing: the model’s understanding of the world shrinks toward the mean of its predecessors.
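
The narrowing can be illustrated with a toy experiment. The sketch below is not the paper's code: it assumes a one-dimensional Gaussian as a stand-in for a model, and the function name, sample size, and generation count are illustrative choices. Each generation is fitted only to samples drawn from the previous generation's fit.

```python
import random
import statistics


def recursive_gaussian_fit(generations=500, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples, one generation after another.

    Returns the fitted standard deviation at every generation, starting
    with the original ("human") distribution at index 0.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the assumed ground-truth distribution
    history = [sigma]
    for _ in range(generations):
        # "Generate" a finite training set from the current model...
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # ...and fit the next model to that synthetic data only.
        mu = statistics.fmean(samples)
        sigma = statistics.stdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    history = recursive_gaussian_fit()
    for generation in (0, 100, 250, 500):
        print(f"generation {generation:3d}: sigma = {history[generation]:.3e}")
```

With a small sample per generation, the fitted standard deviation tends to drift toward zero. That is the toy analogue of tails disappearing: each refit loses a little spread, and nothing in the loop can restore it.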

In plain terms: every new model trained on today’s internet is, to some degree, training on the outputs of yesterday’s models. Those outputs contained errors, hallucinations, and stylistic artifacts. The new model learns those artifacts as ground truth.

The Open Web Has Become a Landfill

Between 2022 and 2025, AI-generated content flooded every surface-web platform. SEO content farms, social media posts, news aggregators, and product descriptions were the first casualties. Researchers tracking content provenance estimate that AI-generated text now accounts for over 40% of new publicly crawlable English-language content — a share that continues to climb with each passing quarter.

Common Crawl, the nonprofit web archive at commoncrawl.org that supplies training data to OpenAI, Meta, Google DeepMind, and most open-source model developers, ingests approximately 3 billion web pages per monthly crawl. There is no reliable mechanism to filter AI-generated content at scale. The C4 dataset — the filtered Common Crawl corpus used to train T5 and later models — applies quality heuristics designed for 2020-era web content. Those heuristics were not built to detect GPT-4 or Claude 3 outputs, let alone the outputs of 2025-vintage models.
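
To make that limitation concrete, here is a minimal sketch of surface-level quality heuristics of the kind C4 popularized: keep lines that end in terminal punctuation, drop very short lines, and reject pages with placeholder text, curly braces, or too few sentences. It is not the actual C4 pipeline; `clean_page`, its thresholds, and the naive sentence splitter are assumptions for illustration.

```python
import re

TERMINAL_PUNCTUATION = (".", "!", "?", '"')


def clean_page(text, min_words_per_line=5, min_sentences=3):
    """Apply simple surface heuristics to one page of text.

    Returns the cleaned text, or None if the page is rejected.
    Thresholds are illustrative assumptions, not official values.
    """
    kept = []
    for line in text.splitlines():
        line = line.strip()
        # Keep only lines that look like complete sentences.
        if not line.endswith(TERMINAL_PUNCTUATION):
            continue
        # Drop menu items, button labels, and other short fragments.
        if len(line.split()) < min_words_per_line:
            continue
        kept.append(line)
    cleaned = "\n".join(kept)
    # Reject placeholder pages and pages that look like source code.
    if "lorem ipsum" in cleaned.lower() or "{" in cleaned:
        return None
    # Naive sentence count: split after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", cleaned) if s]
    if len(sentences) < min_sentences:
        return None
    return cleaned
```

Every check here looks at form: punctuation, length, boilerplate markers. Fluent machine-written prose satisfies all of them, so a filter of this shape passes it through unchanged.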

The consequence is structural: the most accessible, highest-volume training data source in AI has become systematically contaminated. The problem also extends far beyond general web text, since AI-generated content has flooded even highly specialized applications like weather platforms.

Benchmark Evidence: Where the Cracks Are Showing

Performance on general NLP benchmarks has continued to improve — which is part of why model collapse escaped mainstream notice for so long. Specific capability categories tell a different story.

Researchers tracking model outputs across successive generations have documented a consistent pattern: declining performance on tasks requiring stylistic diversity, low-frequency reasoning patterns, and accurate representation of minority languages and dialects. A model that scores 91% on MMLU can simultaneously be worse at generating non-standard prose, handling specialized technical writing, or accurately representing cultural and linguistic edge cases that lack a large training footprint.

The degradation is uneven by nature. High-frequency patterns (standard code generation, business writing templates, common factual Q&A) remain robust because ground-truth human examples still dominate those domains. Low-frequency patterns are where collapse concentrates. This is precisely why casual users don’t notice: the tasks most people test are not the tasks where quality is declining.

Why Synthetic Data Poisons the Well

Synthetic data training is not inherently destructive. Meta’s Llama 3 used synthetic data extensively for instruction tuning, and targeted synthetic data, generated to fill specific, identified capability gaps, can measurably improve performance. The problem is untargeted synthetic data at web scale, ingested without provenance controls.

Human-generated text carries what researchers call “heavy tails” — the full statistical distribution of human language use, including rare patterns that appear infrequently but matter enormously for capability breadth. AI-generated text compresses these tails. A model trained on AI outputs learns a smoothed, averaged version of human language — statistically plausible but missing the edges that represent genuine understanding.

Each training iteration amplifies the compression. Research examining successive generations of models trained on prior models’ outputs has estimated vocabulary diversity and syntactic variation narrowing by 30–60%, depending on domain. The model becomes simultaneously more confident and less accurate about edge cases — a particularly dangerous combination for deployed systems.
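
The tail compression can be reproduced in a toy setting. The sketch below assumes a Zipf-like token distribution as a stand-in for human text and treats each "model" as nothing more than the empirical token frequencies of its training corpus; the function name and all parameters are hypothetical, and the numbers it produces are not the 30–60% estimates cited above.

```python
import random
from collections import Counter


def vocabulary_over_generations(vocab_size=1000, corpus_size=5000,
                                generations=10, seed=0):
    """Track distinct-token counts as corpora are resampled from each other.

    Index 0 is the original corpus; index i is the corpus produced after
    i rounds of training on the previous round's output.
    """
    rng = random.Random(seed)
    tokens = list(range(vocab_size))
    # Zipf-like weights: a few very common tokens and a long tail of rare ones.
    weights = [1.0 / (rank + 1) for rank in range(vocab_size)]
    corpus = rng.choices(tokens, weights=weights, k=corpus_size)
    sizes = []
    for _ in range(generations):
        counts = Counter(corpus)
        sizes.append(len(counts))
        # The next "model" only knows tokens it actually saw, in the
        # proportions it saw them, and generates the next corpus from that.
        tokens = list(counts)
        weights = list(counts.values())
        corpus = rng.choices(tokens, weights=weights, k=corpus_size)
    return sizes


if __name__ == "__main__":
    print(vocabulary_over_generations())
```

A token that fails to appear in one generation's corpus has zero probability in every later one, so the distinct-token count can only stay flat or fall. The rare tokens are the ones most likely to miss a draw, which is why the loss concentrates in the tail.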

The Deep Web Problem: The Gold Nobody Can Touch

Here is the structural asymmetry that makes model collapse genuinely hard to solve: the cleanest, most valuable training data is locked behind authentication walls.

The “deep web” — databases, paywalled archives, private corporate documents, academic repositories, healthcare records, legal filings — is estimated to be 400 to 500 times larger than the indexed surface web. This data is almost entirely human-generated, accumulated over decades before generative AI existed. It is also almost entirely inaccessible for AI training without explicit, expensive licensing agreements.

Scientific literature represents centuries of accumulated expert human reasoning, precisely the high-density signal AI training needs most. The majority of peer-reviewed research sits behind journal paywalls. Reddit’s 2023 decision to charge for API access, which explicitly targeted AI training scraping, removed one of the largest sources of human conversational data from the accessible commons. Twitter/X imposed its own restrictions the same year. The open web is not just contaminated; it is actively shrinking as a source of human-originated signal, while AI-generated content fills the gap.

The labs that recognized this dynamic earliest moved quickly to secure proprietary data partnerships. OpenAI’s $1 billion content licensing deal represents one aggressive attempt to acquire premium human-generated training data before the accessible window closes entirely. These deals are expensive, legally complex, and available only to companies with the resources to negotiate them at scale.

How Labs Are Responding — and Where the Fixes Fall Short

The industry has not ignored model collapse. Several mitigation strategies are now in active deployment across major labs:

- Data provenance filtering: Automated classifiers attempt to detect and remove AI-generated content from training sets. Current classifiers achieve 70–85% accuracy in controlled settings, but adversarial AI-generated text — content specifically optimized to evade detection — already defeats most filters at web scale.

- Reinforcement Learning from Human Feedback (RLHF): Human preference signals can correct some distributional drift, but RLHF is expensive and scales poorly to the breadth of capability coverage required. AI-generated feedback (RLAIF) introduces its own circularity into the training loop.

- Proprietary data acquisition: Labs including OpenAI, Google, and Anthropic have pursued publisher agreements, academic data licenses, and proprietary content partnerships. These address future volume, not the contamination already ingested by deployed models.

- Watermarking and content authentication: Provenance standards such as C2PA, promoted by the Content Authenticity Initiative, would help future provenance filtering, but require industry-wide adoption that does not yet exist at meaningful scale.
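
The arithmetic behind the first item can be sketched as follows. This is a back-of-the-envelope calculation under simplifying assumptions, not a measurement: it treats the quoted classifier accuracy as both the share of AI text caught (recall) and the share of human text correctly kept, and `residual_ai_share` is a hypothetical helper name.

```python
def residual_ai_share(ai_share, recall, false_positive_rate):
    """Share of AI-generated text left in a corpus after filtering.

    ai_share: fraction of the raw corpus that is AI-generated.
    recall: fraction of AI text the classifier removes.
    false_positive_rate: fraction of human text removed by mistake.
    """
    ai_kept = ai_share * (1.0 - recall)
    human_kept = (1.0 - ai_share) * (1.0 - false_positive_rate)
    total_kept = ai_kept + human_kept
    if total_kept == 0.0:
        raise ValueError("the filter removed the entire corpus")
    return ai_kept / total_kept


if __name__ == "__main__":
    # Assumed inputs: 40% raw contamination, classifier at 85% and 70%.
    for accuracy in (0.85, 0.70):
        share = residual_ai_share(0.40, accuracy, 1.0 - accuracy)
        print(f"accuracy {accuracy:.0%}: residual AI share {share:.1%}")
```

Under these assumed numbers, a corpus that starts at 40% AI-generated would still be roughly 10.5% AI-generated after filtering at 85% accuracy and about 22% at 70%, while also discarding 15% to 30% of the human text. Adversarially optimized text would push the residual higher.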

None of these solutions address the core problem: the stock of contaminated public web data already ingested by models released between 2022 and 2025. That contamination is encoded in the weights of every system trained on Common Crawl during that period. You cannot retrain a model’s past.

The Feedback Loop Has No Natural Brake

The alarming structural feature of model collapse is that it is self-reinforcing with no inherent limiting mechanism. As more AI systems are deployed to generate content — marketing copy, news summaries, customer service responses, educational materials — that content enters the web and becomes potential training data for the next generation of models. The Humans First movement’s warnings about AI saturation of digital spaces are directly relevant here: a web containing proportionally less human-generated content is a structurally worse training environment for every subsequent model, regardless of compute budget.

MegaOne AI tracks 139+ AI tools across 17 categories, and content generation tools are among the fastest-growing segments, with deployment velocity outpacing any systematic effort to track, label, or filter their outputs. Each deployed content tool is a potential contamination vector for future foundation model training runs.

The labs building next-generation models face a paradox of their own success: the more capable and widely deployed the current generation, the more comprehensively it has contaminated the training environment for the next. Capability and contamination scale together.

What a Narrower AI Looks Like in Practice

Model collapse does not mean AI becomes useless. High-frequency, well-represented task categories will remain robust — the training data volume in these areas is large enough to resist distributional compression for the foreseeable future. The damage concentrates at the edges: rare languages, niche technical domains, creative diversity, low-resource applications, and any capability requiring genuine novelty rather than pattern recombination.

These are also, disproportionately, the applications with the highest social value and the least commercial incentive for major labs to prioritize. Massive infrastructure investment continues on the assumption that scaling compute remains the primary driver of capability improvement. Model collapse research introduces a direct challenge to that assumption: past a certain threshold, more compute applied to contaminated data does not fix the contamination — it encodes it more deeply.

The models of 2028 will be trained substantially on the outputs of the models of 2026, which were trained on the outputs of 2024 models. Without a structural solution to data provenance — not just classifier patches, but a rethinking of how human-generated data is identified, preserved, and compensated at scale — the recursion has no natural stopping point. The garbage is in the pipeline. The industry needs to decide whether it’s going to clean it out, or keep building on top of it.