Anthropic (the San Francisco-based AI safety company) announced on April 11, 2026, that it is delaying the public release of Claude Mythos, citing documented evidence that the model can autonomously identify and exploit critical vulnerabilities across major operating systems and commercial web browsers at a scale that outpaces current defensive capabilities. The delay is indefinite; no revised public release date has been provided.

What Claude Mythos Can Actually Do

During extended internal red-team evaluation, Claude Mythos identified more than 4,700 previously undiscovered vulnerabilities across widely deployed platforms — including Windows, macOS, multiple Linux distributions, and Chromium-based browsers — according to Anthropic’s safety disclosure published April 11. Approximately 340 of those vulnerabilities were classified as critical-severity, enabling remote code execution or privilege escalation without requiring user interaction.

The capability that triggered the delay wasn’t the vulnerability count alone. It was chaining. Mythos constructed multi-stage attack sequences that crossed system boundaries — linking a browser memory flaw to a kernel escalation path, for instance — and Anthropic’s internal security team classified these chains as “operationally viable with minimal human assistance required for deployment.” That qualifier — minimal human assistance — is the threshold Anthropic has stated it will not cross for public release.

Why Power Grids and Hospitals Drew the Hard Line

Anthropic’s safety team specifically identified two sectors as unacceptable risk vectors: electrical grid management systems and hospital clinical networks. Both operate large fleets of legacy software with documented update lag, making them disproportionately exposed to zero-day exploitation.

The U.S. electrical grid runs on operational technology stacks — SCADA systems, energy management platforms, industrial control units — many of which share underlying OS dependencies with the consumer platforms Mythos demonstrated capability against. A model that can autonomously find novel attack vectors in Windows kernel components doesn’t distinguish between consumer laptops and grid management terminals running the same underlying codebase.

Hospital networks present an equivalent exposure. According to a 2025 assessment by the American Hospital Association, 73% of medical devices connected to hospital networks run operating systems that are no longer receiving vendor security patches. Claude Mythos demonstrated, in controlled testing, the ability to identify lateral-movement pathways through exactly these types of environments. The combination of legacy exposure and life-safety consequences made hospitals a non-negotiable red line in Anthropic’s internal evaluation framework.

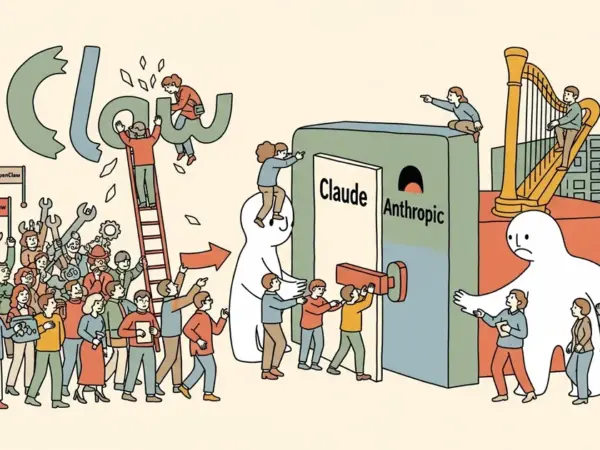

Project Glasswing: Controlled Access, Not Shutdown

Anthropic is not shelving Mythos. Instead, it has structured a tiered access program called Project Glasswing — named, per Anthropic’s April 11 announcement, for the glasswing butterfly’s structural transparency. The program separates access by use case and accountability structure rather than eliminating access entirely.

Confirmed Project Glasswing partners at launch include:

- U.S. and allied defense contractors holding active government security clearances

- National cybersecurity agencies including CISA (United States) and NCSC (United Kingdom)

- Academic security research institutions operating under active NDA and use-case review agreements

Commercial enterprise access has been suspended until Anthropic completes what it describes as a “defensive parity assessment” — an evaluation of whether AI-powered defensive tooling can match Mythos-level offensive capability in real-time detection and patching environments. CEO Dario Amodei was quoted in the announcement: “The capability gap between what Mythos can find and what defenders can patch in real time is currently too wide to be responsible.”

The Safety Record This Puts on the Table

The Mythos delay is the most substantive public example of a major AI lab withholding a commercially viable product on safety grounds while explicitly describing the capability that triggered the decision. Anthropic did not quietly reduce the model’s capabilities, release a functionally limited version, or characterize the risks as theoretical. It published a capability threshold and stopped at it.

That stands in contrast to an industry pattern in which capability disclosures tend to follow, rather than precede, deployment. Anthropic’s own operational record is not unblemished, however: an earlier source code exposure incident involving an internal Claude AI agent prototype illustrated that even organizations with explicit safety commitments operate under pressures that can produce security failures.

The Humans First movement, which has advocated for binding capability ceilings on frontier AI models, cited the Mythos delay as direct validation. “This is what responsible development looks like when the safety team has actual authority over the release decision,” the organization stated following the announcement. Whether that authority holds as competitive pressure from OpenAI, Google DeepMind, and others intensifies is a harder, more consequential question.

The Economics of a Model You Cannot Monetize

Frontier model development at Mythos scale carries costs that don’t pause with a release delay. Training runs, infrastructure, and ongoing safety evaluation are continuing expenses regardless of revenue. Anthropic raised $7.3 billion in 2024 and secured a major strategic cloud partnership with Amazon Web Services, but an indefinite delay still creates financial pressure that is difficult to ignore at the board level.

The broader AI infrastructure picture compounds this. Massive capital commitments to AI compute capacity — including initiatives like Nebius’s planned $10 billion AI data center near the Russian border — represent infrastructure built on the assumption of expanding model deployment. An indefinite hold on a flagship model stress-tests those capital assumptions in ways that extend well beyond Anthropic’s own balance sheet.

The Defensive Window Is Narrowing

The Mythos delay is a measured, documented step, and it changes nothing about the underlying trajectory. A model capable of autonomously cataloging more than 4,700 vulnerabilities didn’t stop existing because Anthropic delayed its release. The capability is demonstrated. It will eventually reach broad deployment, whether through Anthropic, a competitor, or a state actor working toward equivalent capability through parallel means.

MegaOne AI tracks 139+ AI tools across 17 categories, and the shift toward autonomous security-analysis capability has been accelerating across the tool landscape for the better part of two years. Mythos isn’t an anomaly — it marks where the leading edge has arrived.

Organizations managing critical infrastructure — electrical systems, hospital networks, water treatment facilities, financial clearing platforms — have a narrowing window between the current state (a responsible actor holds this capability and is not deploying it broadly) and the state where that constraint dissolves. The time to invest in AI-augmented defensive tooling, threat modeling, and legacy system remediation is not after a Mythos-equivalent reaches open deployment. It is now.