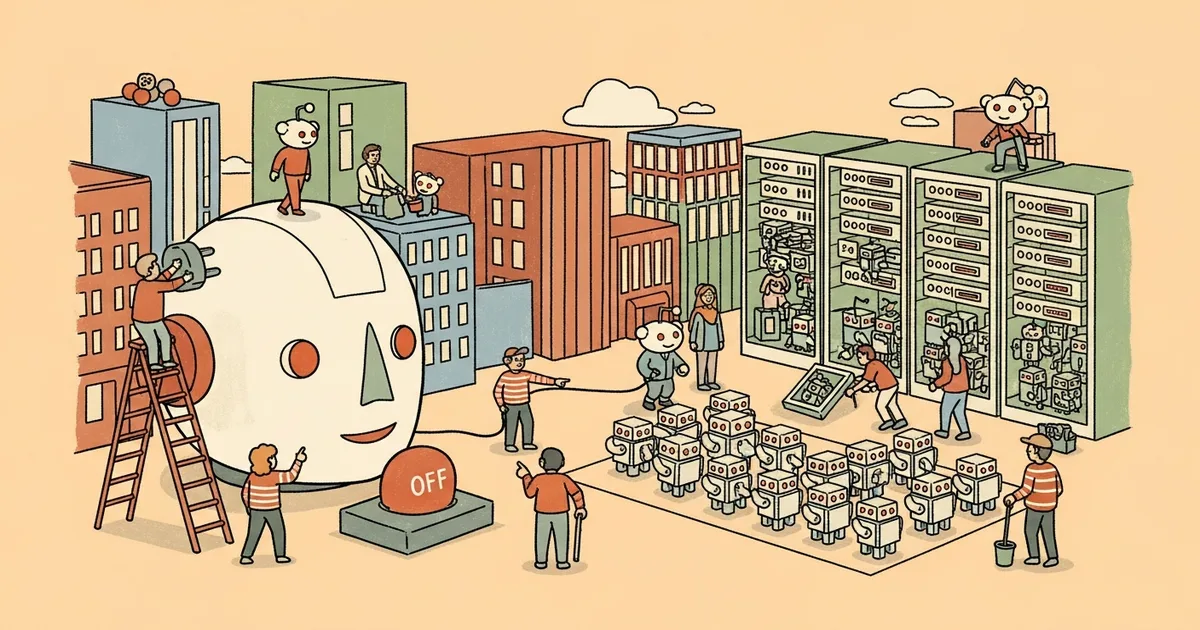

- Reddit announced new bot labeling, human verification requirements, and reporting tools on March 25, 2026, with enforcement starting March 31.

- Accounts flagged for suspicious behavior must verify they are human via passkeys, Face ID, or potentially World ID, without exposing their real-world identity to Reddit.

- The platform removes approximately 100,000 spam accounts per day and will label compliant automated accounts with an “[App]” tag.

- Reddit has no plans to restrict AI-generated content posted by verified humans, stating that “using AI to write is part of how people will communicate in the future.”

What Happened

Reddit CEO Steve Huffman announced on March 25, 2026, that the platform will begin requiring suspected automated accounts to verify they are human. The new rules take effect March 31, 2026, introducing bot labeling, targeted verification challenges, and expanded reporting tools as part of Reddit’s most aggressive anti-bot measures to date.

The platform currently removes approximately 100,000 spam accounts per day. Huffman emphasized that the verification system “will not apply to most users” — only accounts displaying signals of automated posting or bot-like behavior will be challenged.

Why It Matters

Reddit is the most-cited domain in AI search results. ChatGPT, Perplexity, and Google AI Overviews draw heavily on Reddit threads because they are seen as authentic human discussion. If Reddit succeeds in reducing bot-generated content, its value as both an AI training source and a citation source increases, since cleaner, verified-human threads yield more reliable data and more trustworthy citations.

The crackdown also protects Reddit’s advertising business. Bot activity inflates engagement metrics and erodes advertiser trust, and because Reddit’s growing ad revenue depends on authentic user engagement, removing automated accounts helps protect CPM rates and advertiser confidence. Huffman pointed to longtime rival Digg, which he said “shut down because it couldn’t get a handle on the bots overrunning its site.” The bot problem is at once an integrity issue, an AI data-quality issue, and a revenue issue, which explains why Reddit is investing in verification infrastructure rather than continuing to play whack-a-mole with individual accounts.

Technical Details

Reddit’s detection system uses specialized tooling that analyzes account-level signals, such as how quickly an account posts or writes content, along with other patterns of automated behavior. Accounts flagged as suspicious must verify through on-device methods: passkeys via Apple, Google, or YubiKey security keys; biometric services like Face ID; and potentially World ID, Sam Altman’s biometric identification system. Accounts that fail verification may be restricted from the platform entirely.
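Reddit has not published how its detection tooling works. As a rough, hypothetical illustration of what a posting-speed signal might look like, the Python sketch below flags an account whose posts arrive very close together or on a near-constant cadence. The function name `posting_speed_signals`, both thresholds, and the either-signal rule are assumptions made for illustration, not Reddit’s method.

```python
from dataclasses import dataclass
from statistics import median, pstdev


@dataclass
class TimingSignals:
    median_gap_s: float  # typical delay between consecutive posts, in seconds
    gap_stdev_s: float   # spread of those delays; near zero means clockwork cadence
    flagged: bool        # True if either hypothetical threshold is crossed


def posting_speed_signals(timestamps, min_median_gap_s=30.0, min_gap_stdev_s=2.0):
    """Hypothetical heuristic, NOT Reddit's system: flag accounts that post
    very quickly or at a suspiciously regular interval."""
    ts = sorted(timestamps)
    if len(ts) < 3:
        # Too little posting history to judge; leave the account unflagged.
        return TimingSignals(float("inf"), float("inf"), False)
    # Seconds between each pair of consecutive posts.
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    med = median(gaps)
    spread = pstdev(gaps)
    # Either signal alone is enough to trigger a (hypothetical) verification challenge.
    flagged = med < min_median_gap_s or spread < min_gap_stdev_s
    return TimingSignals(med, spread, flagged)


# Five posts exactly 10 seconds apart vs. four posts spread over about 80 minutes.
print(posting_speed_signals([0, 10, 20, 30, 40]).flagged)    # True
print(posting_speed_signals([0, 400, 1300, 5000]).flagged)   # False
```

A production system would presumably weigh many more signals; timing alone would misfire on, for example, a person who schedules posts at fixed intervals, which is one reason a flag leads to a verification challenge rather than an automatic ban.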

The verification architecture is designed to “confirm humanness” rather than verify actual identity. Reddit states that “your account information, usage data, and identity never mix”: the system does not expose a user’s real-world identity to Reddit or their Reddit activity to any third party.

For legitimate bots, Reddit introduced two label categories: “Developer Platform App” for bots built on Reddit’s official developer tools, and “App” for other compliant automation. Bot developers can register starting March 31 to receive the appropriate label on their account profiles.

Who’s Affected

Most Reddit users should notice no change, since the verification requirements target only accounts exhibiting automated behavior. Marketers who relied on Reddit bots for visibility stand to lose a distribution channel, particularly those posting AI-generated content into threads that AI search engines then cite.

Bot developers operating legitimate services must register through Reddit’s developer program, which opens March 31, to receive an app label, or risk having their accounts flagged and restricted. Moderators gain new reporting tools to flag suspected bot accounts more efficiently. Subreddit communities that rely on automated moderation bots, reminder bots, or information-fetching bots will need to make sure those services register and are properly labeled to avoid disruption.

What’s Next

Reddit is “exploring” methods to comply with emerging age-verification laws without compromising user privacy, suggesting the verification infrastructure may expand beyond bot detection. Notably, the company has no current plans to restrict AI-generated content shared by verified humans. Reddit’s position that “using AI to write is part of how people will communicate in the future” means the platform is targeting automated accounts, not AI-assisted content creation, a distinction likely to grow harder to enforce as AI writing tools become ubiquitous. Whether the verification system can scale against increasingly sophisticated bot operators who use residential proxies and human-like posting patterns remains an open question.