- GPT-5.4 scored 75.0% on the OSWorld-Verified benchmark, surpassing the human expert baseline of 72.4% at autonomous desktop tasks.

- The model includes built-in computer-use capabilities, interacting with software through screenshots, mouse commands, and keyboard inputs.

- OpenAI released three variants on March 5, 2026: GPT-5.4 Thinking, GPT-5.4 Pro, and GPT-5.4 for API and Codex.

- API pricing starts at $2.50 per million input tokens and $20.00 per million output tokens.

What Happened

OpenAI released GPT-5.4 on March 5, 2026, marking the first time a frontier AI model has exceeded the human expert baseline on a benchmark of real desktop computer tasks. On OSWorld-Verified, which measures an AI agent’s ability to complete desktop workflows autonomously, GPT-5.4 scored 75.0% against a human expert baseline of 72.4%.

The model ships in three variants. GPT-5.4 Thinking is available to Plus, Team, and Pro subscribers through the ChatGPT interface. GPT-5.4 Pro delivers maximum performance and is available only to Pro and Enterprise tier users. A dedicated API and Codex version gives developers programmatic access to the full context window of roughly one million tokens for building custom applications and agents.

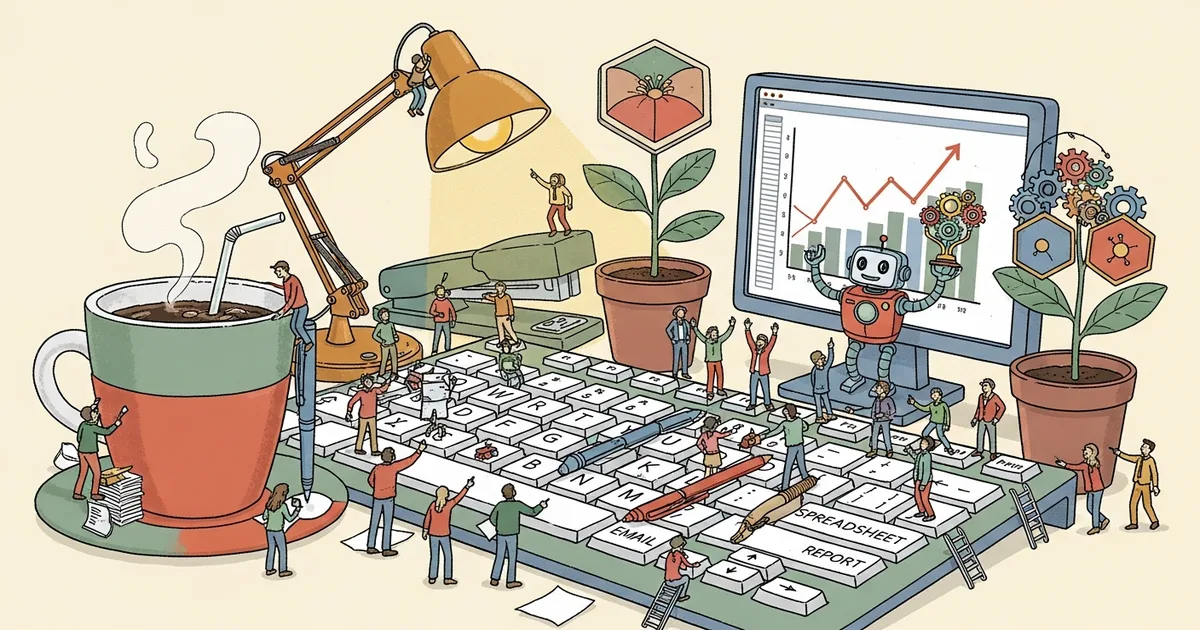

GPT-5.4 is the first general-purpose model with computer-use capabilities built directly into the architecture. It can navigate desktop environments, click interface elements, type into input fields, and execute multi-step software workflows without external tooling, browser extensions, or plugins.
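The interaction pattern described above (observe a screenshot, choose a mouse or keyboard action, repeat) can be sketched as a simple loop. This is a minimal illustration and not OpenAI's API: `Action`, `FakeDesktop`, `ScriptedModel`, and `run_agent` are hypothetical names, and the model is replaced by a scripted stub so the example runs without network access.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Action:
    """One step the agent asks the desktop to perform (hypothetical schema)."""
    kind: str                    # "click", "type", or "done"
    x: Optional[int] = None      # screen coordinates for clicks
    y: Optional[int] = None
    text: Optional[str] = None   # text for keyboard input


@dataclass
class FakeDesktop:
    """Stand-in for a real desktop: records actions instead of executing them."""
    log: List[str] = field(default_factory=list)

    def screenshot(self) -> bytes:
        # A real implementation would capture the screen; this placeholder
        # only encodes how many actions have been performed so far.
        return f"frame-{len(self.log)}".encode()

    def execute(self, action: Action) -> None:
        if action.kind == "click":
            self.log.append(f"click({action.x},{action.y})")
        elif action.kind == "type":
            self.log.append(f"type({action.text!r})")
        else:
            raise ValueError(f"unsupported action: {action.kind}")


class ScriptedModel:
    """Stub standing in for the model: replays a fixed list of actions."""

    def __init__(self, script: List[Action]) -> None:
        self._script = list(script)

    def next_action(self, task: str, screenshot: bytes) -> Action:
        # A real model would decide based on the task and the screenshot.
        return self._script.pop(0) if self._script else Action(kind="done")


def run_agent(model, desktop, task: str, max_steps: int = 20) -> bool:
    """Observe, act, repeat. Returns True if the model reported completion."""
    for _ in range(max_steps):
        action = model.next_action(task, desktop.screenshot())
        if action.kind == "done":
            return True
        desktop.execute(action)
    return False  # step budget exhausted without completion
```

The `max_steps` cap is a design choice for the sketch: an agent that acts on a live desktop should have a bounded number of actions so that a confused model cannot loop indefinitely.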

Why It Matters

Passing the human baseline on OSWorld-Verified is significant because the benchmark measures practical task completion rather than abstract reasoning or knowledge retrieval. The tests evaluate whether an AI can operate real applications the way a trained person would: opening files, navigating menus, filling out forms, switching between applications, and coordinating actions across multiple programs in sequence.

This result shifts the conversation from AI as a text generator to AI as a software operator. Companies building automation workflows now have a model that can interact directly with existing desktop software through its visual interface, rather than requiring custom API integrations for every individual tool in the stack. For organizations running legacy systems without modern APIs, this approach could replace expensive robotic process automation setups.

On GDPval, GPT-5.4 reached an 83% match rate with human professionals across 44 occupations. That result further suggests the model handles domain-specific professional tasks, from legal analysis to financial document review, with increasing reliability.

Technical Details

GPT-5.4 operates with a context window of 922,000 input tokens and 128,000 output tokens, totaling approximately 1.05 million tokens. The model processes visual information from screenshots alongside text, enabling it to understand and interact with graphical user interfaces directly.
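As a small worked example of these limits, the check below tests whether a request fits the quoted window. It is a sketch under one assumption that the article does not confirm: that the input and output limits are enforced separately rather than as a single shared budget. The function name `fits_context` is hypothetical.

```python
MAX_INPUT_TOKENS = 922_000    # input limit quoted in the article
MAX_OUTPUT_TOKENS = 128_000   # output limit quoted in the article
TOTAL_WINDOW = MAX_INPUT_TOKENS + MAX_OUTPUT_TOKENS  # 1,050,000 tokens


def fits_context(input_tokens: int, requested_output_tokens: int) -> bool:
    """Return True if both counts are within their respective limits.

    Assumption: the two limits apply independently of each other.
    """
    if input_tokens < 0 or requested_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens <= MAX_INPUT_TOKENS
            and requested_output_tokens <= MAX_OUTPUT_TOKENS)
```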

Legal document analysis saw particular improvement, with GPT-5.4 scoring 91% on BigLaw Bench. OpenAI reported a 33% reduction in hallucinated claims compared to GPT-5.2, a meaningful improvement for professional applications where factual accuracy is non-negotiable. On Toolathlon, which tests multi-step tool and API usage across complex real-world scenarios, GPT-5.4 scored 54.6% versus 46.3% for its predecessor, an 8.3-point improvement.

Standard API pricing is $2.50 per million input tokens and $20.00 per million output tokens. For prompts exceeding 272,000 tokens, premium pricing applies at 2x the standard input rate and 1.5x the standard output rate, which works out to $5.00 and $30.00 per million tokens respectively. Cached input tokens are available at $0.625 per million, reducing costs for applications that repeatedly reference the same base context.
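The pricing rules above can be turned into a rough per-request estimate. The sketch below uses only the rates quoted in this article and makes three assumptions that the article does not confirm: the 272,000-token threshold counts cached and uncached prompt tokens together, the premium multipliers apply to the whole request once the threshold is exceeded, and the cached rate is not multiplied. The function name `estimate_cost` is hypothetical.

```python
INPUT_RATE = 2.50           # USD per million input tokens
OUTPUT_RATE = 20.00         # USD per million output tokens
CACHED_INPUT_RATE = 0.625   # USD per million cached input tokens
LONG_PROMPT_THRESHOLD = 272_000
LONG_INPUT_MULTIPLIER = 2.0
LONG_OUTPUT_MULTIPLIER = 1.5


def estimate_cost(input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request under the stated assumptions."""
    if min(input_tokens, output_tokens, cached_input_tokens) < 0:
        raise ValueError("token counts must be non-negative")

    # Assumption: cached and uncached tokens both count toward the threshold.
    long_prompt = input_tokens + cached_input_tokens > LONG_PROMPT_THRESHOLD

    # Assumption: premium rates cover the whole request, not just the excess.
    in_rate = INPUT_RATE * (LONG_INPUT_MULTIPLIER if long_prompt else 1.0)
    out_rate = OUTPUT_RATE * (LONG_OUTPUT_MULTIPLIER if long_prompt else 1.0)

    total = (input_tokens * in_rate
             + cached_input_tokens * CACHED_INPUT_RATE  # assumed unmultiplied
             + output_tokens * out_rate)
    return total / 1_000_000
```

Under these assumptions, a 100,000-token prompt with a 10,000-token reply comes to about $0.45, while a 400,000-token prompt with the same reply comes to about $2.30.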

Who’s Affected

Enterprise teams running repetitive desktop workflows stand to benefit most from the computer-use capability. The model can handle tasks like data entry across legacy systems, form processing across multiple applications, and multi-step administrative workflows that previously required either dedicated human operators or expensive custom-built RPA solutions.

Developers building AI agents gain a model that can operate software directly through its visual interface, reducing the need to build and maintain custom API connectors for every application in a workflow. QA teams could potentially use the capability for automated UI testing without writing traditional test scripts.

However, the premium pricing tier for long-context prompts adds significant cost for high-volume automation use cases. Organizations processing thousands of documents daily will need to calculate whether the per-token cost justifies replacing human operators or existing automation tools.

What’s Next

The 75.0% OSWorld score still means GPT-5.4 fails roughly one in four desktop tasks. Complex multi-step workflows involving ambiguous instructions, unusual application layouts, and non-standard interfaces remain challenging. OpenAI has not announced when computer-use capabilities will move beyond the current preview stage, what additional safety restrictions will apply to autonomous desktop operations in production deployments, or whether pricing adjustments will make high-volume computer-use workflows commercially viable.