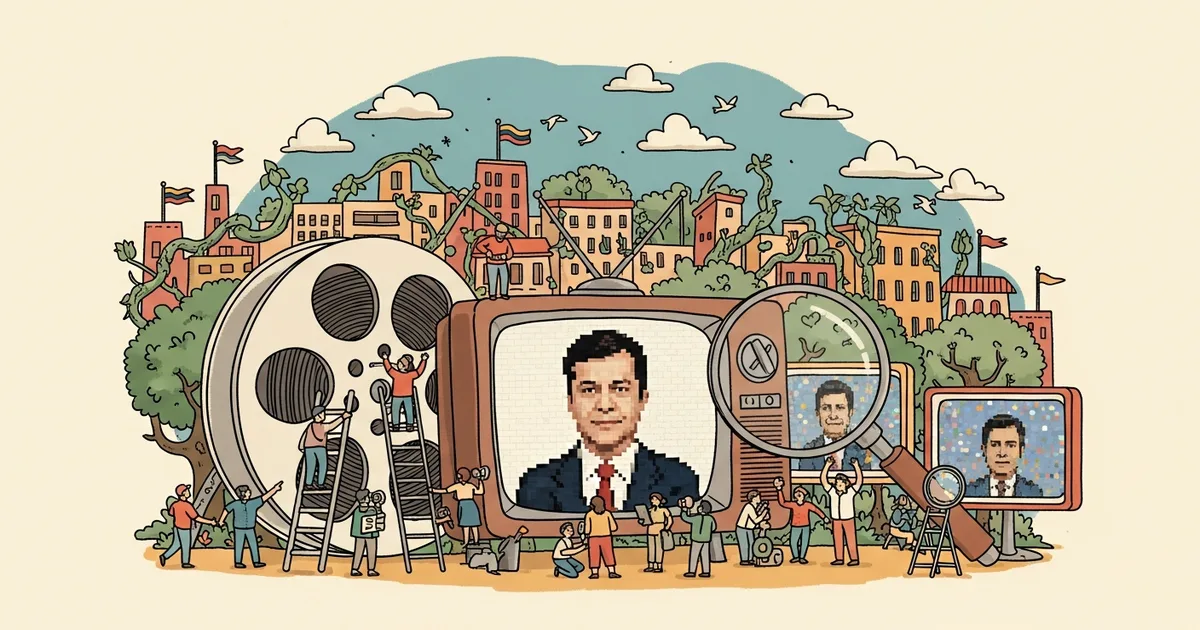

- AI-generated deepfake images and videos depicting Venezuelan President Nicolás Maduro’s capture by U.S. forces on January 3, 2026, accumulated over 14 million views on X in under two days.

- High-profile figures including Elon Musk, Donald Trump, and Flávio Bolsonaro shared the fabricated content before some later deleted their posts.

- Google’s SynthID watermark detection tool and German startup Detesia identified AI generation markers in multiple circulating images.

- Experts described the incident as one of the first cases where AI-generated imagery of a political figure was produced and spread in real time during a breaking news event.

What Happened

Following the U.S. military operation that resulted in the capture of Venezuelan President Nicolás Maduro on January 3, 2026, a flood of AI-generated images and videos spread across social media platforms. Disinformation watchdog NewsGuard identified seven fabricated or misrepresented images and videos related to the operation, which collectively garnered more than 14 million views on Elon Musk’s platform X in under 48 hours.

One AI-generated video clip alone was viewed over 5.6 million times and reshared by at least 38,000 accounts. Fact-checkers at the BBC and AFP traced the earliest known version of that clip to the TikTok account @curiousmindusa, which regularly posts AI-generated content. Other fabricated images showed Maduro disembarking an aircraft (2.6 million views on a single Spanish-language X post) and appearing in white pajamas aboard a military cargo plane (4.6 million views).

A separate video, mislabeled as “Caracas today,” purported to show crowds supporting Maduro but actually depicted a November 2025 march at Miraflores Palace. That clip accumulated over 1 million views before being flagged.
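As a rough consistency check on the figures above, the individually reported view counts can be added up and compared with NewsGuard’s overall tally. The sketch below assumes that the four items with stated counts are among the seven NewsGuard identified, and it treats “over” figures as lower bounds; the labels and variable names are illustrative only.

```python
# View counts as stated in the article. Assumption: these four items are
# among the seven that NewsGuard counted; "over N" is taken as N.
named_items = {
    "AI-generated video clip": 5_600_000,
    "aircraft disembarkation image": 2_600_000,
    "white pajamas image": 4_600_000,
    "mislabeled 'Caracas today' video": 1_000_000,
}

# NewsGuard's reported floor across all seven items.
reported_total = 14_000_000

named_total = sum(named_items.values())
gap = reported_total - named_total

print(f"Items with stated counts: {named_total:,} views")
print(f"Gap to the reported 14 million floor: {gap:,} views")
```

Under those assumptions, the four named items account for roughly 13.8 million views, so the remaining items would only need a few hundred thousand views between them to reach the reported total.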

Why It Matters

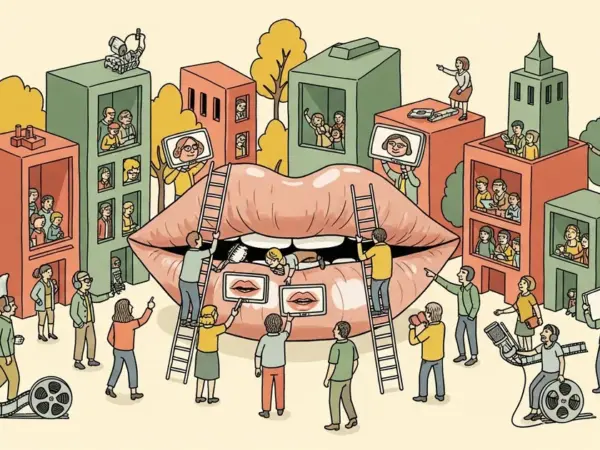

The Venezuela deepfake incident marked what experts described as a turning point in AI-generated misinformation. Unlike previous cases where deepfakes targeted celebrities or were created days after events, these fabricated images and videos were produced and distributed in real time as breaking news unfolded. That speed allowed the fabricated content to fill an information vacuum before verified reporting could reach audiences.

Information warfare analyst Tal Hagin said the episode demonstrated a dangerous new reality: “We are no longer at the stage where it’s six months away, we are already there: unable to identify what’s AI and what’s not.” He added that “when you have this vacuum of information, it needs to be filled somehow,” explaining how fabricated content rushed in to meet public demand for visual confirmation of the military operation.

The incident also demonstrated a compounding trust problem. Hagin warned that the sheer volume of AI-generated content now causes people to distrust even authentic footage, because they have been exposed to so many fakes that skepticism becomes the default response to any visual evidence.

Technical Details

Several detection methods exposed the deepfakes. Google’s SynthID verification tool identified invisible digital watermarks indicating AI generation or editing in the aircraft disembarkation image. Detesia, a German deepfake detection startup, conducted independent analysis and found “substantial evidence” of AI generation in multiple circulating images.

Visual artifacts also betrayed the fabricated content. Some images contained soldiers rendered with three hands — a common generative AI error. The video shared by Musk displayed unnatural human movements, abnormal skin tones, and fake license plates on vehicles in the background. A French-language video showed fireworks that appeared to originate within crowds without appropriate smoke effects, accompanied by mismatched background imagery that did not correspond to any known Venezuelan location.

Later versions of the Maduro images showed increasingly obvious errors, including depictions of the president covered in blood, a detail absent from earlier, more convincing iterations. The deepfakes spread simultaneously across X, TikTok, and Instagram, complicating platform-level moderation efforts as content was cross-posted faster than review teams could respond.

Who’s Affected

The fabricated content was amplified by high-profile figures with massive audiences. Donald Trump, Elon Musk, and Flávio Bolsonaro (son of former Brazilian President Jair Bolsonaro) all shared deepfake material. Portuguese far-right Chega party officials, Polish MEP Mariusz Kamiński, and Colombian President Gustavo Petro also distributed false claims or misleading imagery. Musk eventually deleted his repost of the 5.6-million-view video, but not before it had been widely reshared.

Millions of ordinary social media users encountered the content without context, potentially shaping their understanding of a major geopolitical event based on fabricated visuals.

What’s Next

The incident intensified calls for improved AI content detection at the platform level and broader adoption of watermarking standards like Google’s SynthID. However, detection technology remains a step behind generation capabilities, and not all AI tools embed watermarks. No major platform announced policy changes in direct response to the Venezuela deepfakes, leaving the same vulnerabilities in place for the next breaking news event.
