Highly realistic AI-generated images depicting the capture of Venezuelan President Nicolas Maduro went viral on January 3-4, 2026, after U.S. forces conducted airstrikes and a ground operation in Venezuela. CNBC reported that the fabricated images circulated widely before official confirmation or authentic imagery was available, filling the resulting information vacuum.

How the Fakes Spread

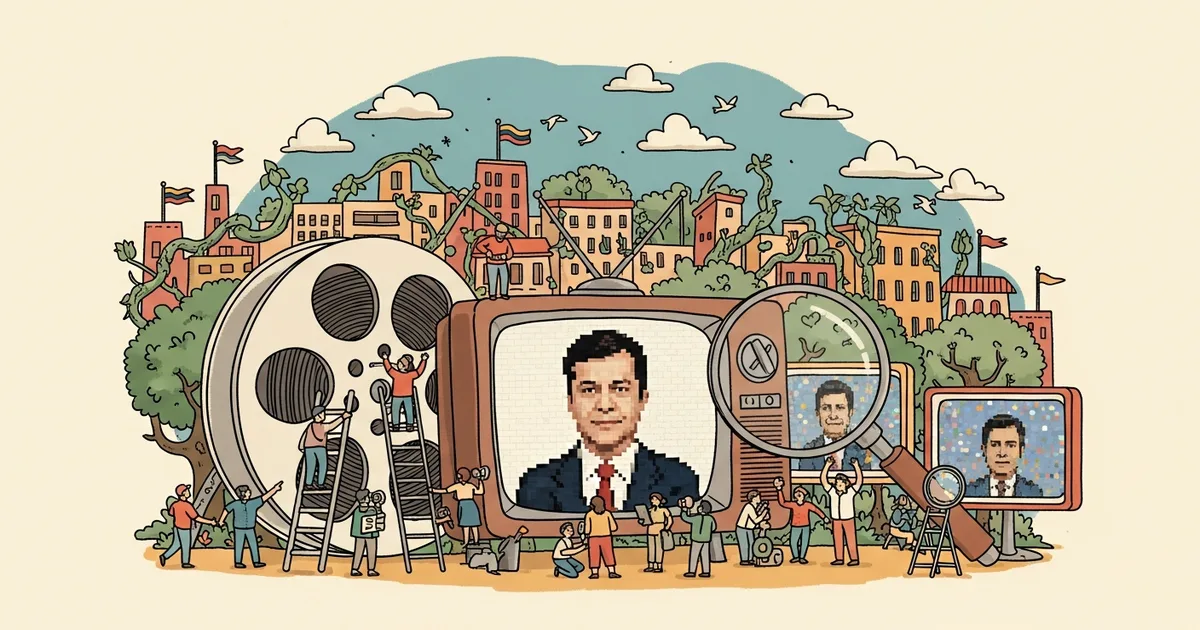

Multiple AI-generated images showed Maduro being escorted by DEA officers. They were created with Google’s Nano Banana Pro image generator, which embeds watermarks that identify its output as AI-generated. An image of “Maduro in prison” posted on January 4 came from the same tool. Viral celebration videos showing Venezuelan citizens crying with joy were posted by the “Wall Street Apes” account on X and amassed 5.6 million views before being flagged as fake.

NewsGuard identified seven fabricated or misrepresented images and videos that collectively garnered over 14 million views in under two days on X alone. Snopes, PolitiFact, France 24, and EBU Spotlight all published debunks, but the corrections arrived hours after the fakes had already shaped public perception.

The Election Cycle Implication

The Venezuela deepfakes demonstrated that AI-generated political imagery can fill information vacuums faster than official sources or fact-checkers can respond. The images were not sophisticated: several contained visible errors, such as outdated military camouflage patterns. They spread anyway because they confirmed what audiences wanted to believe at a moment of genuine uncertainty.

With the 2026 U.S. midterm elections approaching, the Venezuela incident provides a concrete preview of how AI-generated political content operates in practice: not through perfect deception but through speed. By the time fact-checkers publish corrections, the fabricated images have already been seen by millions and have shaped the narrative. The EU AI Act’s transparency requirements for AI-generated content address labeling but not speed, and speed is the actual attack vector.